Analyzing Mutual Information Data from Google Adsense (2007-2010): Clicks and Income Insights

This analysis applies mutual information to Google Adsense data from January 2007 through December 2010, covering 1,459 days. It examines the relationship between page clicks and daily income, both quantized into bins. The joint and marginal distributions of clicks and income are estimated from a normalized histogram, and the dependence between the two variables is measured with joint entropy, marginal entropy, conditional entropy, and mutual information.

Presentation Transcript

Data: Google Adsense, January 2007 through December 2010 (1459 days)

Blue: X = Page Clicks; Green: Y = Income

Quantize Data: Page Clicks 100 and Daily Income, $1/3.

p(X,Y) = joint pdf

     66  25   7   6   2   1   2   0   0   1
    122  51  24   9   2   5   2   0   1   1
     88  50  31  19  15   4   3   1   3   2
     72  59  51  13  12   7   6   9   1   5
     49  38  43  23  14  18   6   3   4   8
     26  24  30  22  11  20   7   9   3  12
     18  24  18  17   9  14   8   7   3   6
      8   9  17  17   7   8   4   5   5   5
      2   6   4   5   3   5   6   4   0   6
      3   3   7   3   6   7   9   7   3  13

Marginal pdf’s of X and Y; joint pdf estimated from normalized histogram.

Joint Entropy

Marginal Entropy
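The formulas for this slide are not in the transcript. As a reference sketch, the standard definitions for discrete (quantized) variables are given below. The base-2 logarithm, which gives entropy in bits, is an assumption; the slides may use a different base.

```latex
\begin{align}
p(x) &= \sum_{y} p(x,y), \qquad p(y) = \sum_{x} p(x,y) \\
H(X,Y) &= -\sum_{x}\sum_{y} p(x,y)\,\log_2 p(x,y) \\
H(X) &= -\sum_{x} p(x)\,\log_2 p(x), \qquad
H(Y) = -\sum_{y} p(y)\,\log_2 p(y)
\end{align}
```

Cells with p(x,y) = 0 contribute nothing to the sums, by the usual convention 0 log 0 = 0.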

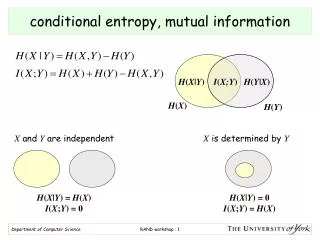

Conditional Entropy

Mutual Information
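A minimal sketch of how these quantities could be computed from the slide's data. It assumes the 100 values are histogram day counts laid out as a 10x10 grid read row by row, which is consistent with their sum of 1459 days. The transcript does not say which axis is X and which is Y, so the code uses the neutral names `p_rows` and `p_cols`. It uses the standard identities H(B|A) = H(A,B) - H(A) and I(A;B) = H(A) + H(B) - H(A,B) with base-2 logarithms, which are also assumptions. All variable names are illustrative.

```python
from math import log2

# Day counts from the transcript's table, read row by row (assumed 10x10).
COUNTS = [
    [66, 25, 7, 6, 2, 1, 2, 0, 0, 1],
    [122, 51, 24, 9, 2, 5, 2, 0, 1, 1],
    [88, 50, 31, 19, 15, 4, 3, 1, 3, 2],
    [72, 59, 51, 13, 12, 7, 6, 9, 1, 5],
    [49, 38, 43, 23, 14, 18, 6, 3, 4, 8],
    [26, 24, 30, 22, 11, 20, 7, 9, 3, 12],
    [18, 24, 18, 17, 9, 14, 8, 7, 3, 6],
    [8, 9, 17, 17, 7, 8, 4, 5, 5, 5],
    [2, 6, 4, 5, 3, 5, 6, 4, 0, 6],
    [3, 3, 7, 3, 6, 7, 9, 7, 3, 13],
]


def entropy(probs):
    """Shannon entropy in bits; zero-probability cells contribute nothing."""
    return -sum(p * log2(p) for p in probs if p > 0)


# Normalize the histogram so the cells sum to 1 (estimated joint pdf).
total = sum(sum(row) for row in COUNTS)
joint = [[c / total for c in row] for row in COUNTS]

# Marginals: sum the joint pdf across each row and down each column.
p_rows = [sum(row) for row in joint]
p_cols = [sum(col) for col in zip(*joint)]

h_joint = entropy(p for row in joint for p in row)
h_rows = entropy(p_rows)
h_cols = entropy(p_cols)

# Conditional entropy of the column variable given the row variable.
h_cols_given_rows = h_joint - h_rows
# Mutual information is symmetric in the two variables.
mutual_info = h_rows + h_cols - h_joint

print(f"days counted        : {total}")
print(f"joint entropy       : {h_joint:.3f} bits")
print(f"marginal entropies  : {h_rows:.3f}, {h_cols:.3f} bits")
print(f"conditional entropy : {h_cols_given_rows:.3f} bits")
print(f"mutual information  : {mutual_info:.3f} bits")
```

Because mutual information is symmetric, the result does not depend on which axis is taken to be page clicks and which income. Only the conditional entropy changes direction if the axes are swapped.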