COS 320 Compilers

COS 320 Compilers. David Walker. Outline. Last Week Introduction to ML Today: Lexical Analysis Reading: Chapter 2 of Appel. The Front End. Lexical Analysis : Create sequence of tokens from characters Syntax Analysis : Create abstract syntax tree from sequence of tokens

COS 320 Compilers

E N D

Presentation Transcript

COS 320Compilers David Walker

Outline • Last Week • Introduction to ML • Today: • Lexical Analysis • Reading: Chapter 2 of Appel

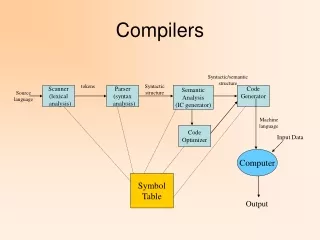

The Front End • Lexical Analysis: Create sequence of tokens from characters • Syntax Analysis: Create abstract syntax tree from sequence of tokens • Type Checking: Check program for well-formedness constraints stream of characters stream of tokens abstract syntax Lexer Parser Type Checker

Lexical Analysis • Lexical Analysis: Breaks stream of ASCII characters (source) into tokens • Token: An atomic unit of program syntax • i.e., a word as opposed to a sentence • Tokens and their types: Characters Recognized: foo, x, listcount 10.45, 3.14, -2.1 ; ( 50, 100 if Type: ID REAL SEMI LPAREN NUM IF Token: ID(foo), ID(x), ... REAL(10.45), REAL(3.14), ... SEMI LPAREN NUM(50), NUM(100) IF

Lexical Analysis Example x = ( y + 4.0 ) ;

Lexical Analysis Example x = ( y + 4.0 ) ; ID(x) Lexical Analysis

Lexical Analysis Example x = ( y + 4.0 ) ; ID(x) ASSIGN Lexical Analysis

Lexical Analysis Example x = ( y + 4.0 ) ; ID(x) ASSIGN LPAREN ID(y) PLUS REAL(4.0) RPAREN SEMI Lexical Analysis

Lexer Implementation • Implementation Options: • Write a Lexer from scratch • Boring, error-prone and too much work

Lexer Implementation • Implementation Options: • Write a Lexer from scratch • Boring, error-prone and too much work • Use a Lexer Generator • Quick and easy. Good for lazy compiler writers. Lexer Specification

Lexer Implementation • Implementation Options: • Write a Lexer from scratch • Boring, error-prone and too much work • Use a Lexer Generator • Quick and easy. Good for lazy compiler writers. Lexer Specification Lexer lexer generator

Lexer Implementation • Implementation Options: • Write a Lexer from scratch • Boring, error-prone and too much work • Use a Lexer Generator • Quick and easy. Good for lazy compiler writers. stream of characters Lexer Specification Lexer lexer generator stream of tokens

How do we specify the lexer? • Develop another language • We’ll use a language involving regular expressions to specify tokens • What is a lexer generator? • Another compiler ....

Some Definitions • We will want to define the language of legal tokens our lexer can recognize • Alphabet – a collection of symbols (ASCII is an alphabet) • String – a finite sequence of symbols taken from our alphabet • Language of legal tokens – a set of strings • Language of ML keywords – set of all strings which are ML keywords (FINITE) • Language of ML tokens – set of all strings which map to ML tokens (INFINITE) • A language can also be a more general set of strings: • eg: ML Language – set of all strings representing correct ML programs (INFINITE).

Regular Expressions: Construction • Base Cases: • For each symbola in alphabet, a is a RE denoting the set {a} • Epsilon (e) denotes { } • Inductive Cases (M and N are REs) • Alternation (M | N) denotes strings in M or N • (a | b) == {a, b} • Concatenation (M N) denotes strings in M concatenated with strings in N • (a | b) (a | c) == { aa, ac, ba, bc } • Kleeneclosure (M*) denotes strings formed by any number of repetitions of strings in M • (a | b )* == {e, a, b, aa, ab, ba, bb, ...}

Regular Expressions • Integers begin with an optional minus sign, continue with a sequence of digits • Regular Expression: (- | e) (0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9)*

Regular Expressions • Integers begin with an optional minus sign, continue with a sequence of digits • Regular Expression: (- | e) (0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9)* • So writing (0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9) and even worse (a | b | c | ...) gets tedious...

Regular Expressions (REs) • common abbreviations: • [a-c] == (a | b | c) • . == any character except \n • \n == new line character • a+ == one or more • a? == zero or one • all abbreviations can be defined in terms of the “standard” REs

Ambiguous Token Rule Sets • A single RE is a completely unambiguous specification of a token. • call the association of an RE with a token a “rule” • To lex an entire programming language, we need many rules • but ambiguities arise: • multiple REs or sequences of REs match the same string • hence many token sequences possible

Ambiguous Token Rule Sets • Example: • Identifier tokens: [a-z] [a-z0-9]* • Sample keyword tokens: if, then, ... • How do we tokenize: • foobar ==> ID(foobar) or ID(foo) ID(bar) • if ==> ID(if) or IF

Ambiguous Token Rule Sets • We resolve ambiguities using two conventions: • Longestmatch: The regular expression that matches the longest string takes precedence. • RulePriority: The regular expressions identifying tokens are written down in sequence. If two regular expressions match the same (longest) string, the first regular expression in the sequence takes precedence.

Ambiguous Token Rule Sets • Example: • Identifier tokens: [a-z] [a-z0-9]* • Sample keyword tokens: if, then, ... • How do we tokenize: • foobar ==> ID(foobar) or ID(foo) ID(bar) • use longest match to disambiguate • if ==> ID(if) or IF • keyword rules have higher priority than identifier rule

Lexer Implementation Implementation Options: • Write Lexer from scratch • Boring and error-prone • Use Lexical Analyzer Generator • Quick and easy ml-lex is a lexical analyzer generator for ML. lex and flex are lexical analyzer generators for C.

ML-Lex Specification • Lexical specification consists of 3 parts: User Declarations (plain ML types, values, functions) %% ML-LEX Definitions (RE abbreviations, special stuff) %% Rules (association of REs with tokens) (each token will be represented in plain ML)

User Declarations • User Declarations: • User can define various values that are available to the action fragments. • Two values must be defined in this section: • type lexresult • type of the value returned by each rule action. • fun eof () • called by lexer when end of input stream is reached.

ML-LEX Definitions • ML-LEX Definitions: • User can define regular expression abbreviations: • Define multiple lexers to work together. Each is given a unique name. DIGITS = [0-9] +; LETTER = [a-zA-Z]; %s LEX1 LEX2 LEX3;

Rules • Rules: • A rule consists of a pattern and an action: • Pattern in a regular expression. • Action is a fragment of ordinary ML code. • Longest match & rule priority used for disambiguation • Rules may be prefixed with the list of lexers that are allowed to use this rule. <lexer_list> regular_expression => (action.code) ;

Rules • Rule actions can use any value defined in the User Declarations section, including • type lexresult • type of value returned by each rule action • val eof : unit -> lexresult • called by lexer when end of input stream reached • special variables: • yytext: input substring matched by regular expression • yypos: file position of the beginning of matched string • continue (): doesn’t return token; recursively calls lexer

A Simple Lexer datatype token = Num of int | Id of string | IF | THEN | ELSE | EOF type lexresult = token (* mandatory *) fun eof () = EOF (* mandatory *) fun itos s = case Int.fromString s of SOME x => x | NONE => raise fail %% NUM = [1-9][0-9]* ID = [a-zA-Z] ([a-zA-Z] | NUM)* %% if => (IF); then => (THEN); else => (ELSE); {NUM} => (Num (itos yytext)); {ID} => (Id yytext);

Using Multiple Lexers • Rules prefixed with a lexer name are matched only when that lexer is executing • Initial lexer is called INITIAL • Enter new lexer using: • YYBEGIN LEXERNAME; • Aside: Sometimes useful to process characters, but not return any token from the lexer. Use: • continue ();

Using Multiple Lexers type lexresult = unit (* mandatory *) fun eof () = () (* mandatory *) %% %s COMMENT %% <INITIAL> if => (); <INITIAL> [a-z]+ => (); <INITIAL> “(*” => (YYBEGIN COMMENT; continue ()); <COMMENT> “*)” => (YYBEGIN INITIAL; continue ()); <COMMENT> “\n” | . => (continue ());

A (Marginally) More Exciting Lexer type lexresult = string (* mandatory *) fun eof () = (print “End of file\n”; “EOF”) (* mandatory *) %% %s COMMENT INT = [1-9] [0-9]*; %% <INITIAL> if => (“IF”); <INITIAL> then => (“THEN”); <INITIAL> {INT} => ( “INT(“ ^ yytext ^ “)” ); <INITIAL> “(*” => (YYBEGIN COMMENT; continue ()); <COMMENT> “*)” => (YYBEGIN INITIAL; continue ()); <COMMENT> “\n” | . => (continue ());

Implementing ML-Lex • By compiling, of course: • convert REs into non-deterministic finite automata • convert non-deterministic finite automata into deterministic finite automata • convert deterministic finite automata into a blazingly fast table-driven algorithm • you did mostly everything but possibly the last step in your favorite algorithms class • need to deal with disambiguation & rule priority • need to deal with multiple lexers

Refreshing your memory: RE ==> NDFA ==> DFA Lex rules: if => (Tok.IF) [a-z][a-z0-9]* => (Tok.Id;)

Refreshing your memory: RE ==> NDFA ==> DFA Lex rules: if => (Tok.IF) [a-z][a-z0-9]* => (Tok.Id;) NDFA: a-z0-9 a-z 1 4 Tok.Id i 2 3 f Tok.IF

Refreshing your memory: RE ==> NDFA ==> DFA Lex rules: if => (Tok.IF) [a-z][a-z0-9]* => (Tok.Id;) NDFA: DFA: a-z0-9 a-z0-9 a-z 1 4 a-hj-z 1 Tok.Id 4 Tok.Id a-eg-z0-9 i i a-z0-9 2 3 a-z0-9 2,4 3,4 f Tok.IF Tok.Id f Tok.IF (could be Tok.Id; decision made by rule priority)

Table-driven algorithm • NDFA: a-z0-9 a-hj-z 1 4 Tok.Id a-eg-z0-9 i a-z0-9 2,4 3,4 Tok.Id f Tok.IF

Table-driven algorithm • NDFA (states conveniently renamed): a-z0-9 a-hj-z S1 S4 Tok.Id a-eg-z0-9 i a-z0-9 S2 S3 Tok.Id f Tok.IF

Table-driven algorithm • DFA: Transition Table: S1 S2 S3 S4 a-z0-9 a a-hj-z S1 S4 b Tok.Id a-eg-z0-9 ... i a-z0-9 S2 S3 i Tok.Id f Tok.IF ...

Table-driven algorithm • DFA: Transition Table: S1 S2 S3 S4 a-z0-9 a a-hj-z S1 S4 b Tok.Id a-eg-z0-9 ... i a-z0-9 S2 S3 i Tok.Id f Tok.IF ... Final State Table: S1 S2 S3 S4

Table-driven algorithm • DFA: Transition Table: S1 S2 S3 S4 a-z0-9 a a-hj-z S1 S4 b Tok.Id a-eg-z0-9 ... i a-z0-9 S2 S3 i Tok.Id f Tok.IF ... • Algorithm: • Start in start state • Transition from one state to next • using transition table • Every time you reach a potential final • state, remember it + position in stream • When no more transitions apply, revert • to last final state seen + position • Execute associated rule code Final State Table: S1 S2 S3 S4

Dealing with Multiple Lexers Lex rules: <INITIAL> if => (Tok.IF); <INITIAL> [a-z][a-z0-9]* => (Tok.Id); <INITIAL> “(*” => (YYBEGIN COMMENT; continue ()); <COMMENT> “*)” => (YYBEGIN INITIAL; continue ()); <COMMENT> . => (continue ());

Dealing with Multiple Lexers Lex rules: <INITIAL> if => (Tok.IF); <INITIAL> [a-z][a-z0-9]* => (Tok.Id); <INITIAL> “(*” => (YYBEGIN COMMENT; continue ()); <COMMENT> “*)” => (YYBEGIN INITIAL; continue ()); <COMMENT> . => (continue ()); INITIAL (* COMMENT *) [a-z][a-z0-9] .

Summary • A Lexer: • input: stream of characters • output: stream of tokens • Writing lexers by hand is boring, so we use a lexer generator: ml-lex • lexer generators work by converting REs through automata theory to efficient table-driven algorithms. • Moral: don’t underestimate your theory classes! • great application of cool theory developed in the 70s. • we’ll see more cool apps as the course progresses