Benchmarking CPMD Performance on Ranger, Pople, and Abe: Insights and Future Directions

This report examines the comparative performance of the Car-Parrinello Molecular Dynamics (CPMD) code on various platforms—Ranger, Pople, and Abe—focusing on benchmarking practices and system memory requirements. It discusses the challenges of benchmarking due to the diversity in chemical systems and the choice of pseudopotentials. Key parameters such as energy cutoff and basis sets are analyzed in relation to computational memory. Future work aims to enhance benchmarking methods and system performance through profiling tools and adapting the CPMD for different metallic systems.



Presentation Transcript

Preliminary CPMD Benchmarks on Ranger, Pople, and Abe • TG AUS Materials Science Project • Matt McKenzie, LONI

What is CPMD? • Car-Parrinello Molecular Dynamics • www.cpmd.org • Parallelized plane wave / pseudopotential implementation of Density Functional Theory • Common chemical systems: liquids, solids, interfaces, gas clusters, reactions • Large systems: ~500 atoms • Scales with the number of electrons, NOT atoms

Key Points in Optimizing CPMD • Developers have done a lot of work here • The Intel compiler is used in this study • BLAS/LAPACK • BLAS levels 1 (vector ops) and 3 (matrix-matrix ops) • Some level 2 (vector-matrix) • Integrated optimized FFT Library • Compiler flag: -DFFT_DEFAULT

Benchmarking CPMD is difficult because… • Nature of the modeled chemical system • Solids, liquids, interfaces • Require different parameters stressing the memory along the way • Volume and # electrons • Choice of the pseudopotential (psp) • Norm-conserving, ‘soft’, non-linear core correction (++memory) • Type of simulation conducted • CPMD, BOMD, Path Integral, Simulated Annealing, etc… • CPMD is a robust code • Very chemical system specific • Any one CPMD sim. cannot be easily compared to another • However, THERE ARE TRENDS • FOCUS: simple wave function optimization timing • This is a common ab initio calculation

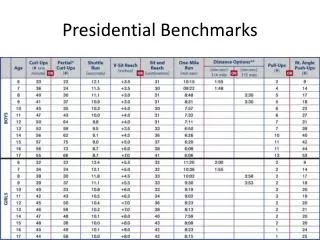

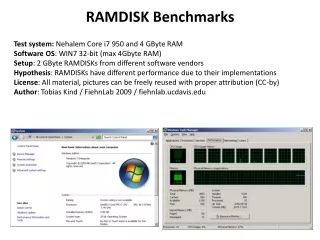

Probing Memory Limitations • For any ab initio calculation: • Accuracy increases with the number of basis functions used • These are stored in matrices, requiring increased RAM • Energy cutoff determines the size of the plane wave basis set: $N_{PW} = \frac{1}{2\pi^2}\,\Omega\,E_{cut}^{3/2}$
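The cutoff formula above can be sketched in a few lines of Python. This is only an illustration: the function names are hypothetical, and the units are an assumption (the formula is applied exactly as written on the slide, with the volume Ω and the cutoff in whatever consistent atomic units the slide intends). The useful part is the second function, which shows that at fixed volume the basis grows as the cutoff ratio to the power 3/2.

```python
import math


def plane_wave_count(volume, ecut):
    """Estimate N_PW = (1 / (2 * pi**2)) * volume * ecut**1.5.

    Assumption: volume and ecut are given in the consistent units the
    slide's formula expects; no unit conversion is attempted here.
    """
    return volume * ecut ** 1.5 / (2.0 * math.pi ** 2)


def cutoff_growth(ecut_old, ecut_new):
    """Factor by which N_PW grows at fixed volume when the cutoff changes."""
    return (ecut_new / ecut_old) ** 1.5


if __name__ == "__main__":
    # Raising the cutoff from 50 to 70 (same units) at fixed volume.
    print(f"basis grows by a factor of {cutoff_growth(50.0, 70.0):.2f}")
```

Because memory follows the basis size, even a modest increase in the cutoff raises the RAM requirement noticeably.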

Model Accuracy & Memory Overview • Image obtained from the CPMD user’s manual: pseudopotential convergence behavior w.r.t. basis set size (cutoff) • NOTE: Choice of psp is important, i.e. ‘softer’ psp = lower cutoff = loss of transferability • VASP specializes in soft psps; CPMD works with any psp

Memory Comparison: Ψ optimization, 63 Si atoms, SGS psp • Ecut = 50 Ryd: NPW ≈ 134,000, Memory = 1.0 GB • Ecut = 70 Ryd: NPW ≈ 222,000, Memory = 1.8 GB • Well-known CPMD benchmarking model: www.cpmd.org • Results can be shown either by: • Wall time = (n steps × iteration time/step) + network overhead • Typical results / interpretations, nothing new here • Iteration time = fundamental unit, used throughout any given CPMD calculation • It neglects the network, yet results are comparable • Note: CPMD runs well on a few nodes connected with gigabit Ethernet • Two important factors affect CPMD performance: MEMORY BANDWIDTH and FLOATING-POINT performance
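As a quick sanity check, the plane wave counts and memory figures reported on this slide can be compared against the Ecut^(3/2) scaling of the basis set size. The sketch below uses only the numbers from the slide; the dictionary layout and function names are illustrative choices, not part of CPMD.

```python
# Values reported on the slide for the 63-atom Si benchmark (Ecut in Ryd).
reported = {
    50.0: {"n_pw": 134_000, "memory_gb": 1.0},
    70.0: {"n_pw": 222_000, "memory_gb": 1.8},
}


def predicted_growth(ecut_low, ecut_high):
    """Growth of N_PW expected from the Ecut**1.5 scaling at fixed volume."""
    return (ecut_high / ecut_low) ** 1.5


def observed_growth(key):
    """Ratio of the reported 70 Ryd value to the reported 50 Ryd value."""
    return reported[70.0][key] / reported[50.0][key]


if __name__ == "__main__":
    print(f"predicted N_PW growth: {predicted_growth(50.0, 70.0):.3f}")
    print(f"reported  N_PW growth: {observed_growth('n_pw'):.3f}")
    print(f"reported memory growth: {observed_growth('memory_gb'):.3f}")
```

The reported plane wave counts follow the 3/2-power scaling closely, while the reported memory grows somewhat faster than the basis itself.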

Results I • All calculations ran no longer than 2 hours • Ranger is not the preferred machine for CPMD • Scales well between 8 and 96 cores • This is a common CPMD trend • CPMD is known to scale super-linearly above ~1000 processors • Will look into this • Chemical system would have to change, as this smaller simulation is not likely to scale in this manner
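To make "scales well" and "super-linear" concrete, speedup and parallel efficiency can be computed relative to the smallest core count. The sketch below is a minimal illustration; the timings in it are hypothetical placeholders, not measurements from Ranger, Pople, or Abe, and the function names are assumptions.

```python
def speedup(t_ref, t_n):
    """Speedup of a run taking t_n relative to a reference run taking t_ref."""
    return t_ref / t_n


def parallel_efficiency(cores_ref, t_ref, cores_n, t_n):
    """Efficiency relative to the reference core count.

    1.0 means ideal (linear) scaling; values above 1.0 indicate
    super-linear scaling.
    """
    return speedup(t_ref, t_n) * cores_ref / cores_n


if __name__ == "__main__":
    # HYPOTHETICAL iteration times in seconds, keyed by core count.
    timings = {8: 120.0, 16: 61.0, 96: 10.5}
    ref_cores = 8
    for cores, t in timings.items():
        eff = parallel_efficiency(ref_cores, timings[ref_cores], cores, t)
        print(f"{cores:4d} cores: efficiency {eff:.2f}")
```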

Results II • Pople and Abe gave the best performance • If a system requires more than 96 procs, Abe would be a slightly better choice • Given the difficulties in benchmarking CPMD (psp, volume, system phase, sim. protocol), this benchmark is not a good representation of all the possible uses of CPMD • Only explored one part of the code • How each system performs when taxed with additional memory requirements is a better indicator of CPMD’s performance • To increase system accuracy, increase Ecut

Percent Difference between 70 and 50 Ryd • $\%\mathrm{Diff} = \frac{t_{70} - t_{50}}{t_{50}} \times 100$
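The percent difference above is straightforward to compute per machine and core count. A minimal sketch follows; the function name is hypothetical, and the sample timings are invented for illustration only.

```python
def percent_difference(t50, t70):
    """Percent slowdown going from the 50 Ryd run to the 70 Ryd run.

    Implements %Diff = [(t70 - t50) / t50] * 100.
    """
    if t50 <= 0:
        raise ValueError("t50 must be positive")
    return (t70 - t50) / t50 * 100.0


if __name__ == "__main__":
    # HYPOTHETICAL iteration times in seconds, not values from the study.
    t50, t70 = 10.0, 15.0
    print(f"%Diff = {percent_difference(t50, t70):.1f}")
```

A smaller percent difference means the machine absorbs the larger memory requirement with less of a slowdown.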

Conclusions • RANGER • Re-ran Ranger calculations • Lower performance may be linked to the Intel compiler on AMD chips • PGI compiler could show an improvement • Nothing over 5% is expected: Ranger would still be the slowest • Wanted to use the same compiler/math libraries • ABE • Possible super-linear scaling: t(Abe, 256 procs) < t(others, 256 procs) • Memory size effects hinder performance below 96 procs • POPLE • Is the best system for wave function optimization • Shows a (relatively) stable, modest speed decrease as the memory requirement is increased; it is the recommended system

Future Work • Half-node benchmarking • Profiling tools • Test the MD part of CPMD • Force calculations involving the non-local parts of the psp will increase memory • Extensive level 3 BLAS & some level 2 • Many FFT all-to-all calls; now the network plays a role • Memory > 2 GB • A new variable: monitor the fictitious electron mass • Changing the model • Metallic system (lots of electrons, change of psp and Ecut) • Check super-linear scaling