Automatic learning of morphology

Automatic learning of morphology. John Goldsmith July 2003 University of Chicago. Language learning: unsupervised learning. Not “theoretical” – but based on a theory with solid foundations. Practical, real data.

Automatic learning of morphology

E N D

Presentation Transcript

Automatic learning of morphology John Goldsmith July 2003 University of Chicago

Language learning: unsupervised learning • Not “theoretical” – but based on a theory with solid foundations. • Practical, real data. • Don’t wait till your grammars are written to start worrying about language learning. You don’t know what language learning is till you’ve tried it. (Like waiting till your French pronunciation is perfect before you start writing a phonology of the language.)

What you need (to write a language learning device) does not look like the stuff you codified in your grammar. Segmentation and classification.

Maximize the probability of the data. • This leads to Minimum Description Length theory, which says: • Minimize the sum of: • Positive log probability of the data + • Length of the grammar • It thus leads to a non-cognitive foundation for a science of linguistics – if you happen to be interested in that. You do not need to be. I am.

!! • Discovery of structure in the data is always equivalent to an increase in the probability that the model assigns to that data. • The devil is in the details.

Classes to come: • Tuesday: the basics of probability theory, and the treatment and learning of phonotactics, and their role in nativization and alternations • Thursday: MDL and the discovery of “chunks” in an unbroken string of data.

Looking ahead • Probability involves a set of numbers (not negative) that sum to 1. • Logarithm of numbers between 0 and 1 are negative. So we shift our attention to -1 times the log (the positiv log: plog).

23 = 8, so log2 (8) is 3. • 24 = 16, so log2 (16) is 4. • 210 = 1024, so log2 (1024) is 10 • 2-1 = ½ , so log2 (1/2) is -1. • 2-2 = 1/4 , so log2 (1/4) is -2. • 2-10 = 1/1024 , so log2 (1/1024) is -10

Plog (positive logs) • These numbers get bigger when the fraction gets smaller (closer to zero). • They get smaller when the fraction gets bigger (close to 1). Since we want big fractions (high probability), we want small plogs. • The plog is also the length of the compressed form a word. When you use WinZip, the length of the file is the sum of a lot of plogs for all the words (not exactly words, really, but close).

Learning • The relationship between data and grammar. • The goal is to create a device that learns aspects of language, given data: a little linguist in a tin box. • Today: morphological structure.

Linguistica • A C++ program that runs under Windows that is available at my homepage http://humanities.uchicago.edu/ faculty/goldsmith/ There are explanations and other downloads available there.

Technical description in Computational Linguistics (June 2001) “Unsupervised Learning of the Morphology of a Natural Language”

Overview • Look at Linguistica in action: English, French • Why do this? • What is the theory behind it? • What are the heuristics that make it work? • Where do we go from here?

Linguistica • A program that takes in a text in an “unknown” language… • and produces a morphological analysis: • a list of stems, prefixes, suffixes; • more deeply embedded morphological structure; • regular allomorphy

raw data Linguistica Analyzed data

Here: lists of stems, affixes, signatures, etc. Here: some messages from the analyst to the user. Actions and outlines of information

Read a corpus • Brown corpus: 1,200,000 words of typical English • French Encarta • or anything else you like, in a text file. • First set the number of words you want read, then select the file.

List of stems A stem’s signature is the list of suffixes it appears with in the corpus, in alphabetical order. abilit ies.y abilities, ability aboli tion abolition absen ce-t absence, absent absolute NULL-ly absolute, absolutely

Signature: NULL ed ing s for example, account accounted accounting accounts add added adding adds

More sophisticated signature… Signature <e>ion . NULL composite concentrate corporate détente discriminate evacuate inflate opposite participate probate prosecute tense What is this? composite and composition composite composit composit + ion It infers that iondeletes a stem-final ‘e’ before attaching.

Top signatures in French In French, we find that the outermost layer of morphology is not so interesting: it’s mostly é, e, and s. But we can get inside the morphology of the resulting stems, and get the roots:

Why do this? • (It is a lot of fun.) • It can be of practical use: stemming for information retrieval, analysis for statistically-based machine translation. • This clarifies what the task of language-acquisition is.

Language acquisition • It’s been suggested that (since language acquisition seems to be dauntingly, impossibly hard) it must require prior (innate) knowledge. • Let’s choose a task where innate knowledge cannot plausibly be appealed to, and see (i) if the task is still extremely difficult, and (ii) what kind of language acquisition device could be capable of dealing with the problem.

Learning of morphology • The nature of morphology-acquisition does not become clearer by reducing the number of possible analyses of the data, but rather by • Better understanding the formal character of knowledge and learning.

Over-arching theory • The selection of a grammar, given the data, is an optimization problem. (this has nothing to do with Optimality theory, which does not optimize any function! Optimization means finding a maximum or minimum – remember calculus?) Minimum Description Length provides us with a means for understanding grammar selection as minimizing a function. (We’ll get to MDL in a moment)

What’s being minimized by writing a good morphology? • Number of letters, for one: • compare:

Naive Minimum Description Length Corpus: jump, jumps, jumping laugh, laughed, laughing sing, sang, singing the, dog, dogs total: 62 letters Analysis: Stems: jump laugh sing sang dog (20 letters) Suffixes: s ing ed (6 letters) Unanalyzed: the (3 letters) total: 29 letters. Notice that the description length goes UP if we analyze sing into s+ing

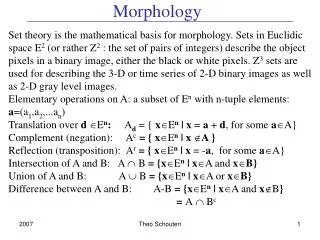

Minimum Description Length (MDL) • Jorma Rissanen 1989 • The best “theory” of a set of data is the one which is simultaneously: • 1 most compact or concise, and • 2 provides the best modeling of the data • “Most compact” can be measured in bits, using information theory • “Best modeling” can also be measured in bits…

Description Length = • Conciseness: Length of the morphology. It’s almost as if you count up the number of symbols in the morphology (in the stems, the affixes, and the rules). • Length of the modeling of the data. We want a measure which gets bigger as the morphology is a worse description of the data. • Add these two lengths together = Description Length

Conciseness of the morphology Sum all the letters, plus all the structure inherent in the description, using information theory. The essence of what you need to know from information theory is this: that mentioning an object can be modeled by a pointer to that object, whose length (complexity) is equal to -1 times the log of its frequency. But why you should care about -log (freq(x)) = is much less obvious.

Conciseness of stem list and suffix list Number of letters in suffix l = number of bits/letter < 5 cost of setting up this entity: length of pointer in bits Number of letters in stem

Signature list length list of pointers to signatures <X> indicates the number of distinct elements in X

Length of the modeling of the data Probabilistic morphology: the measure: • -1 * log probability ( data ) where the morphology assigns a probability to any data set. This is known in information theory as the optimal compressed length of the data (given the model).

Probability of a data set? A grammar can be used not (just) to specify what is grammatical and what is not, but to assign a probability to each string (or structure). If we have two grammars that assign different probabilities, then the one that assigns a higher probability to the observed data is the better one.

This follows from the basic principle of rationality in the Universe: Maximize the probability of the observed data.

From all this, it follows: There is an objective answer to the question: which of two analyses of a given set of data is better? (modulo the differences between different universal Turing machines) However, there is no general, practical guarantee of being able to find the best analysis of a given set of data. Hence, we need to think of (this sort of) linguistics as being divided into two parts:

An evaluator (which computes the Description Length); and • A set of heuristics, which create grammars from data, and which propose modifications of grammars, in the hopes of improving the grammar. (Remember, these “things” are mathematical things: algorithms.)

Let’s get back down to Earth • Why is this problem so hard at first? • Because figuring out the best analysis of any given word generally requires having figured out the rough outlines of the whole overall morphology. (Same is true for other parts of the grammar!). How do we start?

We’ll modify a suggestion made by Zellig Harris (1955, 1967, 1979[1968]). Harris always believed this would work. • It doesn’t, but it’s clever and it’s a good start – but only that.