Automatically Tuned Linear Algebra Software (ATLAS)

Automatically Tuned Linear Algebra Software (ATLAS) adapts to differing architectures through Automated Empirical Optimization of Software (AEOS) techniques. The package initially supplies the Basic Linear Algebra Subprograms (BLAS): the machine itself searches the optimization space and finds the architecture as the application sees it. AEOS requires a method of code variation (code generation, multiple implementations, parameterization), sophisticated timers, and a robust search heuristic. The result is portably optimal code for platforms that would otherwise never receive a hand-tuned BLAS. Planned additions include pthread support, open source kernels, SSE and 3DNow! support, better performance for banded and packed routines, and more LAPACK.

Presentation Transcript

Automatically Tuned Linear Algebra Software (ATLAS)
R. Clint Whaley
University of Tennessee
www.netlib.org/atlas

What is ATLAS
• A package that adapts to differing architectures via AEOS techniques
  • Initially, supplies BLAS
• Automated Empirical Optimization of Software (AEOS)
  • Machine searches the optimization space
  • Finds the application-apparent architecture
• AEOS requires:
  • Method of code variation
    • Code generation
    • Multiple implementation
    • Parameterization
  • Sophisticated timers
  • Robust search heuristic
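
The AEOS loop on this slide (vary the code, time each variant, keep the winner) can be illustrated with a minimal Python sketch. It is not ATLAS's actual search or timer code: the function names, the candidate block sizes, and the choice of a blocked matrix multiply as the parameterized code are all assumptions made for illustration, and the search is a plain exhaustive scan rather than a robust heuristic.

```python
import time


def blocked_matmul(A, B, nb):
    """Return A * B for square list-of-lists matrices, using nb x nb blocking.

    nb is the tunable parameter (the "parameterization" form of code variation).
    """
    n = len(A)
    C = [[0.0] * n for _ in range(n)]
    for ii in range(0, n, nb):
        for kk in range(0, n, nb):
            for jj in range(0, n, nb):
                for i in range(ii, min(ii + nb, n)):
                    Ai, Ci = A[i], C[i]
                    for k in range(kk, min(kk + nb, n)):
                        a, Bk = Ai[k], B[k]
                        for j in range(jj, min(jj + nb, n)):
                            Ci[j] += a * Bk[j]
    return C


def best_time(fn, *args, repeats=3):
    """Hypothetical timer: minimum wall time over several runs, to damp noise."""
    times = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        times.append(time.perf_counter() - start)
    return min(times)


def search_block_size(n=48, candidates=(4, 8, 16, 24, 48)):
    """Exhaustive empirical search: time every candidate and keep the fastest."""
    A = [[float(i + j) for j in range(n)] for i in range(n)]
    B = [[float(i - j) for j in range(n)] for i in range(n)]
    timings = {nb: best_time(blocked_matmul, A, B, nb) for nb in candidates}
    best = min(timings, key=timings.get)
    return best, timings


if __name__ == "__main__":
    best, timings = search_block_size()
    print("fastest block size on this machine:", best)
```

The winning block size depends on the machine and on timing noise, which is the point of the empirical approach: the answer is measured on the target platform, not predicted.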

Why ATLAS is needed
• BLAS require many man-hours per platform
• Only done if financial incentive is there
• Many platforms will never have an optimal version
• Lags behind hardware
• May not be affordable by everyone
• Improves vendor code
• Allows for portably optimal codes
• Obsolescence insurance
• Operations may be important, but not general enough for a standard

ATLAS Software
• Coming soon
  • pthread support
  • Open source kernels
  • SSE & 3DNow!
  • GOTO ev5/6 BLAS
  • Performance for banded and packed
  • More LAPACK
• Coming not-so-soon
  • Sparse support
  • User customization
• Currently provided
  • Full BLAS (C & F77)
  • Level 3 BLAS
    • Generated GEMM (1-2 hours install time per precision)
    • Recursive GEMM-based L3 BLAS (Antoine Petitet)
  • Level 2 BLAS
    • GEMV & GER kernels
  • Level 1 BLAS
  • Some LAPACK
    • LU, LLt (Cholesky)

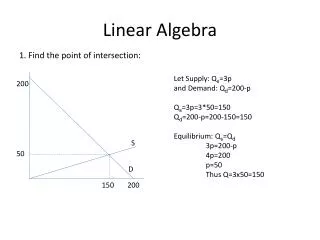

Algorithmic Approach for Matrix Multiply
[Figure: C (M × N) computed from A (M × K) * B (K × N), with blocks of size NB]
• Only generated code is on-chip multiply
• All BLAS operations written in terms of generated on-chip multiply
• All transpose cases coerced through data copy to 1 case of on-chip multiply
• Only 1 case generated per platform
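
A rough sketch of the approach on this slide, in Python for brevity: one NB-sized block multiply serves as the only kernel, and every transpose case is reduced to it by copying the operands first. The names `copy_op`, `onchip_multiply`, and `gemm`, the row-major list-of-lists layout, and the default `nb=4` are hypothetical and are not ATLAS's API. In ATLAS the kernel is generated code; the hand-written loop here only stands in for it.

```python
def copy_op(X, trans):
    """Copy X, or its transpose when trans is true, into a fresh matrix."""
    if trans:
        return [list(col) for col in zip(*X)]
    return [row[:] for row in X]


def onchip_multiply(A, B, C, i0, j0, k0, nb):
    """The single kernel case: accumulate one block product into C.

    Rows i0..i0+nb of A times columns j0..j0+nb of B, over k0..k0+nb,
    clipped at the matrix edges. No transposes are handled here.
    """
    m, kdim, n = len(A), len(B), len(B[0])
    for i in range(i0, min(i0 + nb, m)):
        for k in range(k0, min(k0 + nb, kdim)):
            a = A[i][k]
            for j in range(j0, min(j0 + nb, n)):
                C[i][j] += a * B[k][j]


def gemm(A, B, C, trans_a=False, trans_b=False, nb=4):
    """C += op(A) * op(B), with all four transpose cases routed to one kernel."""
    Ac = copy_op(A, trans_a)  # M x K after the copy
    Bc = copy_op(B, trans_b)  # K x N after the copy
    m, kdim, n = len(Ac), len(Bc), len(Bc[0])
    for i0 in range(0, m, nb):
        for j0 in range(0, n, nb):
            for k0 in range(0, kdim, nb):
                onchip_multiply(Ac, Bc, C, i0, j0, k0, nb)
    return C
```

For example, `gemm(At, B, C, trans_a=True)` with `At` holding the transpose of A gives the same C as `gemm(A, B, C)`. The copy costs extra memory traffic, but it means only one kernel has to be generated and tuned per platform.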

Algorithmic approach for Level 3 BLAS
Recursive TRMM
• Recurse down to L1 cache block size
• Need kernel at bottom of recursion
• Use gemm-based kernel for portability
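
The recursion on this slide can be sketched as follows for one TRMM case, B ← L·B with L lower triangular. This is an illustration under stated assumptions, not ATLAS's implementation: the names `gemm_kernel` and `trmm_lower`, the halving split, and the cutoff `nb` (standing in for the L1 cache block size) are hypothetical. At the bottom of the recursion the triangle is copied into a zero-padded dense block so that the same dense multiply can be reused.

```python
def gemm_kernel(A, B):
    """Dense multiply; stands in for the tuned GEMM kernel."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]


def trmm_lower(L, B, nb=2):
    """Return L * B, where L is lower triangular.

    Entries of L above the diagonal are never used.
    """
    n = len(L)
    if n <= max(nb, 1):
        # Bottom of the recursion: zero-pad the triangle and call the GEMM kernel.
        dense = [[L[i][j] if j <= i else 0.0 for j in range(n)]
                 for i in range(n)]
        return gemm_kernel(dense, B)

    h = n // 2
    L11 = [row[:h] for row in L[:h]]  # triangular
    L21 = [row[:h] for row in L[h:]]  # dense
    L22 = [row[h:] for row in L[h:]]  # triangular
    B1, B2 = B[:h], B[h:]

    top = trmm_lower(L11, B1, nb)     # L11 * B1
    tri = trmm_lower(L22, B2, nb)     # L22 * B2
    upd = gemm_kernel(L21, B1)        # L21 * B1, a plain GEMM
    bottom = [[x + y for x, y in zip(r1, r2)] for r1, r2 in zip(tri, upd)]
    return top + bottom
```

Each level of the recursion does one dense GEMM on the off-diagonal block, so most of the work runs through the tuned GEMM and only the small diagonal blocks reach the bottom-level kernel.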