Game Playing

Game Playing. Kevin Chernishenko Nick Quach Mervyn Yong Terry Yan. All information and figures provided by Artificial Intelligence: A Modern Approach (Stuart Russell and Peter Norvig, 1995) unless stated otherwise. Introduction.

Game Playing

E N D

Presentation Transcript

GamePlaying Kevin Chernishenko Nick Quach Mervyn Yong Terry Yan All information and figures provided by Artificial Intelligence: A Modern Approach (Stuart Russell and Peter Norvig, 1995) unless stated otherwise.

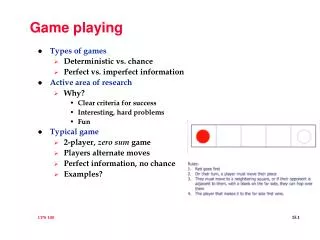

Introduction • The state of a game is easy to represent and the agents are usually restricted to a small number of well-defined moves. • Using games as search problems is one of the oldest endeavors in Artificial Intelligence. • As computers became programmable in the 1950s, both Claude Shannon and Alan Turing had written the first chess programs.

Chess as a First Choice • It provides proof that a machine can actually do something that was thought to require intelligence. • It has simple rules. • The world state is fully accessible to the program. • The computer representation can be correct in every relevant detail.

Complexity of Searching • The presence of an opponent makes the decision problem more complicated. • Games are usually much too hard to solve. • Games penalize inefficiency very severally.

Things to Come… • Perfect Decisions in Two-Person Games • Imperfect Decisions • Alpha-Beta Pruning • Games That Include an Element of Chance

Games a Search Problem • Some games can normally be defined in the form of a tree. • Branching factor is usually an average of the possible number of moves at each node. • This is a simple search problem: a player must search this search tree and reach a leaf node with a favorable outcome.

Components of a Game • Initial state • Set of operators • Terminal Test • Terminal State is the state the player can be in at the end of a game • Utility Function

Two Player Game • Two players: Max and Min • Objective of both Max and Min to optimize winnings • Max must reach a terminal state with the highest utility • Min must reach a terminal state with the lowest utility • Game ends when either Max and Min have reached a terminal state • upon reaching a terminal state points maybe awarded or sometimes deducted

Search Problem Revisited • Simple problem is to reach a favorable terminal state • Problem Not so simple... • Max must reach a terminal state with as high a utility as possible regardless of Min’s moves • Max must develop a strategy that determines best possible move for each move Min makes.

Example: Two-Ply Game 3 12 8 2 4 6 14 5 2

Minimax Algorithm • Minimax Algorithm determines optimum strategy for Max: • Generate entire search tree • Apply utility function to terminal states • use utility value of current layer to determine utility of each node for the upper layer • continue when root node is reached • Minimax Decision - maximizes the utility for Max based on the assumption that Min will attempt to Minimize this utility.

Two-Ply Game: Revisited 3 3 2 2 3 12 8 2 4 6 14 5 2

An Analysis • This algorithm is only good for games with a low branching factor, Why? • In general, the complexity is: O(bd) where: b = average branching factor d = number of plies

Is There Another Way? • Take Chess on average has: • 35 branches and • usually at least 100 moves • so game space is: • 35100 • Is this a realistic game space to search? • Since time is important factor in gaming searching this game space is highly undesirable

Why is it Imperfect? • Many game produce very large search trees. • Without knowledge of the terminal states the program is taking a guess as to which path to take. • Cutoffs must be implemented due to time restrictions, either buy computer or game situations.

Evaluation Functions • A function that returns an estimate of the expected utility of the game from a given position. • Given the present situation give an estimate as to the value of the next move. • The performance of a game-playing program is dependant on the quality of the evaluation functions.

How to Judge Quality • Evaluation functions must agree with the utility functions on the terminal states. • It must not take too long ( trade off between accuracy and time cost). • Should reflect actual chance of winning.

Design • Different evaluation functions must depend on the nature of the game. • Encode the quality of a position in a number that is representable within the framework of the given language. • Design a heuristic for value to the given position of any object in the game.

Different Types • Material Advantage Evaluation Functions • Values of the pieces are judge independent of other pieces on the board. A value is returned base on the material value of the computer minus the material value of the player. • Weighted Linear Functions • W1f1+w2f2+……wnfn W’s are weight of the pieces F’s are features of the particular positions

Example • Chess : Material Value – each piece on the board is worth some value ( Pawn = , Knights = 3 …etc) www.imsa.edu/~stendahl/comp/txt/gnuchess.txt • Othello : Value given to # of certain color on the board and # of colors that will be converted lglwww.epfl.ch/~wolf/java/html/Othello-desc.html

Different Types • Use probability of winning as the value to return. • If A has a 100% chance of winning then its value to return is 1.00

Cutoff Search • Cutting of searches at a fixed depth dependant on time • The deeper the search the more information is available to the program the more accurate the evaluation functions • Iterative deepening – when time runs out return the program returns the deepest completed search. • Is searching a node deeper better than searching more nodes?

Consequences • Evaluation function might return an incorrect value. • If the search in cutoff and the next move results involves a capture then the value that is return maybe incorrect. • Horizon problem • Moves that are pushed deeper into the search trees may result in an oversight by the evaluation function.

Improvements to Cutoff • Evaluation functions should only be applied to quiescent position. • Quiescent Position : Position that are unlikely to exhibit wild swings in value in the near future. • Non quiescent position should be expanded until on is reached. This extra search is called a Quiescence search. • Will provide more information about that one node in the search tree but may result in the lose of information about the other nodes.

Pruning • What is pruning? • The process of eliminating a branch of the search tree from consideration without examining it. • Why prune? • To eliminate searching nodes that are potentially unreachable. • To speedup the search process.

Alpha-Beta Pruning • A particular technique to find the optimal solution according to a limited depth search using evaluation functions. • Returns the same choice as minimax cutoff decisions, but examines fewer nodes. • Gets its name from the two variables that are passed along during the search which restrict the set of possible solutions.

Definitions • Alpha – the value of the best choice so far along the path for MAX. • Beta – the value of the best choice (lowest value) so far along the path for MIN.

Implementation • Set root node alpha to negative infinity and beta to positive infinity. • Search depth first, propagating alpha and beta values down to all nodes visited until reaching desired depth. • Apply evaluation function to get the utility of this node. • If parent of this node is a MAX node, and the utility calculated is greater than parents current alpha value, replace this alpha value with this utility.

Implementation (Cont’d) • If parent of this node is a MIN node, and the utility calculated is less than parents current beta value, replace this beta value with this utility. • Based on these updated values, it compares the alpha and beta values of this parent node to determine whether to look at any more children or to backtrack up the tree. • Continue the depth first search in this way until all potentially better paths have been evaluated.

a = -∞ • b = + ∞ • a = -∞ • b = + ∞ • a = -∞ • b = + ∞ a = -∞ • b = +∞ • a = -∞ • b = +∞ • a = -∞ • b = +∞ • a = 8 • b = +∞ a = -∞ • b = +∞ • a = -∞ • b = +∞ • a = -∞ • b = 8 • a = 8 a = -∞ • b = +∞b = 8 a = -∞ • b = +∞ • a = -∞ • b = +∞ • a = -∞ • b = 8 • a = 8 a = 3 • b = +∞b = 8 a = -∞ • b = +∞ • a = -∞ • b = +∞ • a = -∞ • b = 3 • a = 8 a = 3 • b = +∞ b = 8 a = -∞ b = + ∞ a = -∞ • b = +∞ • a = -∞ • b = +∞ • a = -∞ • b = +∞ • a = -∞ • b = +∞ a = -∞ • b = + ∞ • a = 3 • b = + ∞ • a = -∞ a = 3 • b = 3 b = + ∞ • a = 8 a = 3 a = 2 • b = + ∞ b = 8 b = + ∞ a = -∞ • b = + ∞ • a = 3 • b = + ∞ • a = -∞ a = 3 • b = 3 b = 2 • a = 8 a = 3 a = 2 • b = + ∞ b = 8 b = + ∞ a = -∞ • b = + ∞ • a = 3 • b = + ∞ • a = -∞ a = 3 • b = 3 b = 2 • a = 8 a = 3 a = 2 • b = + ∞ b = 8 b = + ∞ a = -∞ • b = +∞ • a = -∞ • b = +∞ • a = -∞ • b = 8 • a = 8 • b = +∞ a = -∞ • b = + ∞ • a = 3 • b = + ∞ • a = -∞ a = 3 • b = 3 b = + ∞ • a = 8 a = 3 a = 3 • b = + ∞ b = 8 b = + ∞ a = -∞ • b = + ∞ • a = 3 • b = + ∞ • a = -∞a = 3 • b = 3 b = + ∞ • a = 8 a = 3 • b = + ∞ b = 8 a = -∞ • b = + ∞ • a = 3 • b = + ∞ • a = -∞ • b = 3 • a = 8 a = 3 • b = + ∞ b = 8 a = -∞ • b = 3 • a = 3 a = -∞ • b = + ∞ b = 3 • a = -∞ a = 3 a = -∞ • b = 3 b = 2 b = 3 • a = 8 a = 3 a = 2 a = -∞ • b = + ∞ b = 8 b = + ∞ b = 3 a = -∞ • b = 3 • a = 3 a = -∞ • b = + ∞ b = 3 • a = -∞ a = 3 a = -∞ • b = 3 b = 2 b = 3 • a = 8 a = 3 a = 2 a = 14 • b = + ∞ b = 8 b = + ∞ b = 3 a = -∞ • b = 3 • a = 3 a = 3 • b = + ∞ b = 3 • a = -∞ a = 3 a = -∞ • b = 3 b = 2 b = 3 • a = 8 a = 3 a = 2 a = 14 • b = + ∞ b = 8 b = + ∞ b = 3 a = -∞ • b = 3 • a = 3 a = 3 • b = + ∞ b = 3 • a = -∞ a = 3 a = -∞ • b = 3 b = 2 b = 3 • a = 8 a = 3 a = 2 a = 14 • b = + ∞ b = 8 b = + ∞ b = 3 a = -∞ • b = 3 • a = 3 a = -∞ • b = + ∞b = 3 • a = -∞ a = 3 a = -∞ • b = 3 b = 2 b = 3 • a = 8 a = 3 a = 2 a = 14 • b = + ∞b = 8 b = + ∞ b = 3 a = -∞ • b = + ∞ • a = 3 • b = + ∞ • a = -∞a = 3 • b = 3 b = 2 • a = 8 a = 3 a = 2 • b = + ∞b = 8 b = + ∞ a = -∞ • b = 3 • a = 3 a = -∞ • b = + ∞ b = 3 • a = - J a = 3 • b = 3 b = 2 • a = 8 a = 3 a = 2 • b = + ∞ b = 8 b = + ∞ a = -∞ • b = 3 • a = 3 a = -∞ • b = + ∞ b = 3 • a = -∞ a = 3 a = -∞ • b = 3 b = 2 b = 3 • a = 8 a = 3 a = 2 • b = + ∞ b = 8 b = + ∞ a = -∞ • b = + ∞ • a = -∞ • b = + ∞ Example: Depth = 4 MIN MAX

Effectiveness • The effectiveness depends on the order in which the search progresses. • If b is the branching factor and d is the depth of the search, the best case for alpha-beta is O(bd/2), compared to the best case of minimax which is O(bd).

Problems • If there is only one legal move, this algorithm will still generate an entire search tree. • Designed to identify a “best” move, not to differentiate between other moves. • Overlooks moves that forfeit something early for a better position later. • Evaluation of utility usually not exact. • Assumes opponent will always choose the best possible move.

Chance Nodes • Many games that unpredictable outcomes caused by such actions as throwing a dice or randomizing a condition. • Such games must include chance nodes in addition to MIN and MAX nodes. • For each node, instead of a definite utility or evaluation, we can only calculate an expected value.

Calculating Expected Value • For the terminal nodes, we apply the utility function. • We can calculate the expected value of a MAX move by applying an expectimax value to each chance node at the same ply. • After calculating the expected value of a chance node, we can apply the normal minimax-value formula.

Expectimax Function • Provided we are at a chance node preceding MAX’s turn, we can calculate the expected utility for MAX as follows: • Let di be a possible dice roll or random event, where P(di) represents the probability of that event occurring. • If we let S denote the set of legal positions generated by each dice roll, we have the expectimax function defined as follows: expectimax(C) = ΣiP(di) maxs єS(utility(s)) • Where the function maxs єS will return the move MAX will pick out of all the choices available. • Alternately, you can generate an expextimin function for chance nodes preceding MIN’s move. • Together they are called the expectiminimax function.

Application to an Example MAX Chance 3.56 .6 .4 MIN 3.0 4.4 Chance 3.0 5.8 4.4 3.6 .6 .4 .6 .4 .6 .4 .6 .4 MAX 4 3 7 3 5 6 2 3 2 1 2 3 5 2 4 3 2 3 2 1 7 5 6 1

Chance Nodes: Differences • For minimax, any order-preserving transformation of leaves do not affect the decision. • However, when chance nodes are introduced, only positive linear transformations will keep the same decision.

Complexity of Expectiminimax • Where minimax does O(bm), expectiminimax will take O(bmnm), where n is the number of distinct rolls. • The extra cost makes it unrealistic to look too far ahead. • How much this effects our ability to look ahead depends on how many random events that can occur (or possible dice rolls).

Things to Consider • Calculating optimal decisions are intractable in most cases, thus all algorithms must make some assumptions and approximations. • The standard approach based on minimax, evaluation functions, and alpha-beta pruning is just one way of doing things. • These search techniques do not reflect how humans actually play games.

Demonstrating A Problem • Given this two-ply tree, the minimax algorithm will select the right-most branch, since it forces a minimum value of no less than 100. • This relies on the assumption that 100, 101, and 102 are in fact actually better than 99.

Summary • We defined the game in terms of a search. • Discussion of two-player games given perfect information (minimax). • Using cut-off to meet time constraints. • Optimizations using alpha-beta pruning to arrive at the same conclusion as minimax would have. • Complexity of adding chance to the decision tree.