Dimensionality reduction

Presentation Transcript

Dimensionality Reduction
Kevin Labille & Susan Gauch, University of Arkansas

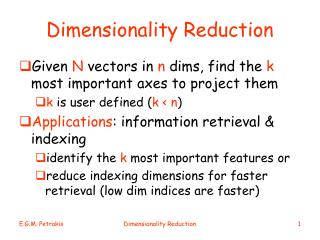

Dimensionality Reduction
• A high-dimensional space makes computation expensive for any NLP or machine learning task
• Often, the features represented in the space are correlated and redundant
• Dimensionality reduction techniques aim to find a compact low-dimensional subspace of the high-dimensional feature space
• Algebraic techniques based on Singular Value Decomposition (SVD):
  • Principal Component Analysis (PCA)
  • Latent Semantic Analysis (LSA)
  • Latent Semantic Indexing (LSI)
• Probabilistic techniques:
  • Probabilistic Latent Semantic Analysis (pLSA)
  • Latent Dirichlet Allocation (LDA)
Kevin Labille & Susan Gauch - 2018

Singular Value Decomposition
• Singular Value Decomposition (SVD) is an algebraic technique for factorizing a matrix; truncating the factorization reduces its dimension
• The SVD of a matrix X is its factorization into the product of 3 matrices, $X = S \Sigma U^T$, where:
  • X is an m x n matrix
  • S is an m x n matrix with orthonormal columns that contains the left singular vectors
  • Σ is an n x n diagonal matrix that contains the singular values (square roots of the eigenvalues of $X^T X$)
  • U is an n x n orthogonal matrix that contains the right singular vectors
• Keeping only the k largest singular values gives $X' = S_k \Sigma_k U_k^T$, an approximation of X whose rank k is much lower than that of X
[Figure: X (m x n) = S (m x n) · Σ (n x n) · U^T (n x n); selecting k dimensions gives X' (m x n) = S_k (m x k) · Σ_k (k x k) · U_k^T (k x n)]
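
The truncation step can be sketched in a few lines of NumPy. This is only an illustration under assumptions: the 4 x 3 matrix and the helper name `truncated_svd` are made up for the example, and the variable names follow the slide's notation (S, Σ, U^T) rather than NumPy's usual (U, s, Vh).

```python
import numpy as np

def truncated_svd(X, k):
    """Return the rank-k factors (S_k, Sigma_k, U_k^T) of X, in the slide's notation."""
    # NumPy returns the singular values as a 1-D array in descending order.
    S, sigma, Ut = np.linalg.svd(X, full_matrices=False)
    return S[:, :k], np.diag(sigma[:k]), Ut[:k, :]

# Hypothetical 4 x 3 example matrix (values chosen only for illustration).
X = np.array([[2.0, 0.0, 1.0],
              [0.0, 1.0, 0.0],
              [1.0, 0.0, 2.0],
              [0.0, 3.0, 0.0]])

S_k, Sigma_k, Ut_k = truncated_svd(X, k=2)
X_k = S_k @ Sigma_k @ Ut_k            # rank-2 approximation, same shape as X

full_sigma = np.linalg.svd(X, compute_uv=False)
error = np.linalg.norm(X - X_k)       # Frobenius norm of what was discarded
print(full_sigma, error)
```

With k = 2 only the smallest singular value is dropped, so the Frobenius error of the approximation equals that singular value.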

Principal Component Analysis
• Principal Component Analysis (PCA) is an application of SVD to a matrix X of size n x p, where n is the number of samples and p the number of variables
• In PCA, X is the mean-centered data matrix, so $X^T X / (n - 1)$ is the covariance matrix of the data points
• Perform SVD on X: $X = S \Sigma U^T$
• To reduce dimensionality from p to k with k << p, take the first k columns of S and the k x k upper-left part of Σ; the product $S_k \Sigma_k$ is the n x k matrix containing the k principal components
• If we multiply the principal components by $U_k^T$ we get $X_k = S_k \Sigma_k U_k^T$, a rank-k approximation of the original matrix X. $X_k$ is a reconstruction of the original data using the k principal components, and it has the lowest possible reconstruction error among all matrices of rank k.
[Figure: the n x p data matrix X]
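
A minimal sketch of PCA through SVD, assuming NumPy and a synthetic data set generated only for this example (200 samples, 5 variables, with most of the variance in 2 directions). The data are mean-centered before the SVD, which is the usual convention.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical data: n = 200 samples, p = 5 variables driven by 2 latent factors.
latent = rng.normal(size=(200, 2))
mixing = rng.normal(size=(2, 5))
data = latent @ mixing + 0.01 * rng.normal(size=(200, 5))

X = data - data.mean(axis=0)              # center each variable
S, sigma, Ut = np.linalg.svd(X, full_matrices=False)

k = 2
scores = S[:, :k] * sigma[:k]             # S_k Sigma_k: the n x k principal components
X_k = scores @ Ut[:k, :]                  # rank-k reconstruction S_k Sigma_k U_k^T

# sigma_i^2 / (n - 1) are the eigenvalues of the covariance matrix of X,
# so the squared singular values give the share of variance per component.
explained = sigma**2 / np.sum(sigma**2)
print(explained.round(4))
```

The same scores can be obtained by projecting the centered data onto the first k right singular vectors, `X @ Ut[:k].T`.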

Latent Semantic Analysis
• Latent Semantic Analysis (LSA) is an application of SVD to a term-document matrix X of size p x n, where the p rows are the words (or terms) and the n columns are the documents
• $X_{i,j}$ is the raw count of term i in document j, or sometimes its TF-IDF weight
• Applying SVD gives $X = S \Sigma U^T$, where S is the SVD term matrix and $U^T$ is the SVD document matrix
• To reduce dimensionality from p to k with k << p, take the first k columns of S, the k x k upper-left part of Σ, and the first k rows of $U^T$. We get $X_k = S_k \Sigma_k U_k^T$, which is the rank-k approximation of the high-dimensional matrix X.
• Terms and documents now have a new representation that captures latent relationships between words and documents:
  • Words in the new space = row vectors of $S_k \Sigma_k$
  • Documents in the new space = column vectors of $\Sigma_k U_k^T$
[Figure: term-document matrix X with rows t1 … tp (terms) and columns d1 … dn (documents)]
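
The following sketch applies LSA to a tiny term-document matrix. The vocabulary, the counts, and the `cosine` helper are all hypothetical and chosen only to show one effect: "ship" and "boat" never appear in the same document, yet they end up with near-identical vectors because both co-occur with "ocean".

```python
import numpy as np

terms = ["ship", "boat", "ocean", "tree", "wood"]
# Hypothetical raw counts; rows are terms, columns are documents d1..d4.
X = np.array([[1, 0, 0, 0],    # ship
              [0, 1, 0, 0],    # boat
              [1, 1, 0, 0],    # ocean
              [0, 0, 1, 0],    # tree
              [0, 0, 1, 1]],   # wood
             dtype=float)

S, sigma, Ut = np.linalg.svd(X, full_matrices=False)
k = 2
term_vecs = S[:, :k] * sigma[:k]              # rows of S_k Sigma_k, one per term
doc_vecs = (sigma[:k, None] * Ut[:k, :]).T    # columns of Sigma_k U_k^T, one row per document

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

sim_ship_boat = cosine(term_vecs[0], term_vecs[1])   # related through "ocean"
sim_ship_tree = cosine(term_vecs[0], term_vecs[3])   # unrelated topics
print(sim_ship_boat, sim_ship_tree)
```

In the original space the rows for "ship" and "boat" are orthogonal, so the similarity between them only appears after the projection to k = 2 dimensions.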

Latent Semantic Indexing
• Latent Semantic Indexing (LSI) is an application of LSA (and therefore SVD) for Information Retrieval purposes
• LSI uses the matrices resulting from LSA to project search queries into the low-dimensional space, which allows us to compute query-document similarity scores in the low-dimensional representation
  • Terms: row vectors of $S_k \Sigma_k$
  • Documents: column vectors of $\Sigma_k U_k^T$
  • Queries: centroid of the low-rank vectors $t_i$ of the terms i in the query Q: $q = \frac{1}{|Q|} \sum_{i \in Q} t_i$
[Figure: search result for the LSA query "die, dagger" in the low-dimensional vector space, k = 2]
Source: http://webhome.cs.uvic.ca/~thomo/svd.pdf
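
A query can be ranked against documents as sketched below. This assumes NumPy, a made-up five-term corpus, cosine similarity as the scoring function (the slide does not name one), and a hypothetical helper `rank_documents`.

```python
import numpy as np

terms = ["ship", "boat", "ocean", "tree", "wood"]
# Hypothetical counts; rows are terms, columns are documents d1..d4.
X = np.array([[1, 0, 0, 0],
              [0, 1, 0, 0],
              [1, 1, 0, 0],
              [0, 0, 1, 0],
              [0, 0, 1, 1]], dtype=float)

S, sigma, Ut = np.linalg.svd(X, full_matrices=False)
k = 2
term_vecs = S[:, :k] * sigma[:k]              # terms: rows of S_k Sigma_k
doc_vecs = (sigma[:k, None] * Ut[:k, :]).T    # documents: columns of Sigma_k U_k^T

def rank_documents(query_terms):
    """Rank documents by cosine similarity to the centroid of the query's term vectors."""
    idx = [terms.index(t) for t in query_terms]
    q = term_vecs[idx].mean(axis=0)           # query = centroid of its term vectors
    sims = doc_vecs @ q / (np.linalg.norm(doc_vecs, axis=1) * np.linalg.norm(q))
    order = np.argsort(-sims)                 # best match first
    return [(int(d), float(sims[d])) for d in order]

ranking = rank_documents(["ship"])
print(ranking)
```

Document index 1 contains only "boat" and "ocean", but it scores as high as the document that contains "ship", which is the kind of match a plain keyword index would miss.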

Probabilistic Latent Semantic Analysis
• Probabilistic Latent Semantic Analysis (pLSA) is a probabilistic approach to dimensionality reduction (as opposed to an algebraic approach)
• The goal is to model co-occurrence information under a probabilistic model in order to discover the latent structure of the data and reduce the dimensionality of the space
• Idea: each document can be represented as a mixture of latent concepts, and each word expresses a topic (see figure)
[Figure: graphical model of pLSA. N: number of documents in the collection. Nw: number of words in document d. Each word w is associated with a latent concept z from which it is generated. The shaded circles indicate observed variables, while the unshaded one represents the latent variable.]
Source: http://homepages.inf.ed.ac.uk/rbf/CVonline/LOCAL_COPIES/AV1011/oneata.pdf

Probabilistic Latent Semantic Analysis
• Probability of a document d with W words:
  • $P(d) = P(w_1|d) P(w_2|d) \dots P(w_W|d) = \prod_{i=1}^{W} P(w_i|d)$
• Now if we have K hidden concepts or topics to consider:
  • $P(w|d) = \sum_{k=1}^{K} P(w|k) P(k|d)$
• The parameters of the model are P(w|k) and P(k|d)
• They can be estimated through Maximum Likelihood Estimation (MLE)
  • (by finding the values that maximize the predictive probability of the observed word co-occurrences)
• The objective function is then
  • $L = \prod_{d} \prod_{w} P(w|d)^{c}$ with c = n(d,w)
  • (c is the number of times word w appears in document d)
• The optimization problem can be solved by using the Expectation-Maximization (EM) algorithm.
• Once the model has converged, each word w can be represented by the K values P(w|k), i.e., as a vector of dimension K.
Source: http://homepages.inf.ed.ac.uk/rbf/CVonline/LOCAL_COPIES/AV1011/oneata.pdf
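
The EM updates are not spelled out on the slide, so the sketch below uses the standard pLSA updates: the E-step computes P(k|d,w) proportional to P(w|k) P(k|d), and the M-step re-estimates both parameter tables from the counts n(d,w) weighted by those responsibilities. The count matrix, the function name `plsa`, and the small constant `eps` (added only to avoid division by zero) are assumptions made for this example.

```python
import numpy as np

def plsa(counts, K, iters=100, seed=0, eps=1e-12):
    """Fit pLSA by EM. counts[d, w] = n(d, w). Returns P(w|k), P(k|d), log-likelihoods."""
    rng = np.random.default_rng(seed)
    D, W = counts.shape
    p_w_k = rng.random((K, W))
    p_w_k /= p_w_k.sum(axis=1, keepdims=True)      # each topic is a distribution over words
    p_k_d = rng.random((D, K))
    p_k_d /= p_k_d.sum(axis=1, keepdims=True)      # each document is a mixture of topics
    log_liks = []
    for _ in range(iters):
        # E-step: joint[d, k, w] = P(k|d) P(w|k); normalizing over k gives P(k|d,w).
        joint = p_k_d[:, :, None] * p_w_k[None, :, :]
        p_w_d = joint.sum(axis=1) + eps            # P(w|d) = sum_k P(w|k) P(k|d)
        log_liks.append(float(np.sum(counts * np.log(p_w_d))))
        resp = joint / p_w_d[:, None, :]
        # M-step: re-estimate parameters from expected counts n(d,w) P(k|d,w).
        weighted = counts[:, None, :] * resp
        p_w_k = weighted.sum(axis=0)
        p_w_k /= p_w_k.sum(axis=1, keepdims=True)
        p_k_d = weighted.sum(axis=2)
        p_k_d /= p_k_d.sum(axis=1, keepdims=True)
    return p_w_k, p_k_d, log_liks

# Hypothetical counts: 4 documents over a 6-word vocabulary.
counts = np.array([[4, 3, 2, 0, 0, 1],
                   [3, 4, 3, 1, 0, 0],
                   [0, 1, 0, 4, 3, 3],
                   [1, 0, 0, 3, 4, 2]], dtype=float)

p_w_k, p_k_d, log_liks = plsa(counts, K=2)
print(p_k_d.round(2))
```

The recorded log-likelihood is the logarithm of the objective L above, and it should not decrease from one EM iteration to the next. Each column of `p_w_k` is the K-dimensional representation of one word.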

Topic Modeling
• There are many ways to obtain “topics” from text
• LDA (Latent Dirichlet Allocation) is the most popular
• Really, just a dimensionality reduction technique
• V unique words map onto K dimensions (K << V)
• These K dimensions are assumed to be “topics” since many words v map onto each dimension k

Latent Dirichlet Allocation
• Latent Dirichlet Allocation (LDA) is another probabilistic approach to dimensionality reduction based on the following assumption: each document is a mixture of multiple topics, and each document can have different topic weights. It is similar to pLSA, with the only difference that the topics or concepts have a Dirichlet prior distribution
[Figure: graphical model of LDA. White nodes = hidden variables, grey node = observed variable, black nodes = hyperparameters]
Source: https://www.utdallas.edu/~nrr150130/cs6375/2015fa/lects/Lecture_20_LDA.pdf
• α and η are the parameters of the Dirichlet priors over the θ and β distributions
• $\theta_d$ is the distribution of topics/concepts for document d (vector of length K)
• $\beta_k$ is the distribution of words for topic k (vector of length V)
• $Z_{d,n}$ is the topic of the n-th word of document d (integer in {1, …, K})
• $W_{d,n}$ is the n-th word of document d (integer in {1, …, V})
• There are K topics and D documents

Latent Dirichlet Allocation: Generative process
• Draw K sample distributions (each of size V) from a Dirichlet distribution: $\beta_k \sim \mathrm{Dir}(\eta)$
  • They are the topic or concept distributions
  • These distributions are called $\beta_k$
• For each document:
  • Draw another sample distribution (of size K) from a Dirichlet distribution: $\theta_d \sim \mathrm{Dir}(\alpha)$
  • This distribution is called $\theta_d$
  • For each word in the document:
    • Draw topic $Z_{d,n} \sim \mathrm{Multi}(\theta_d)$
    • Draw word $W_{d,n} \sim \mathrm{Multi}(\beta_{Z_{d,n}})$ from the topic
• Find the parameters α and β which maximize the likelihood of the observed data
  • Use an Expectation-Maximization based approach called variational EM
  • Not very successful
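
The generative process can be simulated directly. The sketch below assumes NumPy, symmetric Dirichlet priors, a fixed document length, and arbitrary example sizes (D = 5, K = 3, V = 10); none of these values come from the slides, and `generate_corpus` is a hypothetical helper name.

```python
import numpy as np

def generate_corpus(D, K, V, doc_len, alpha, eta, seed=0):
    """Sample a synthetic corpus from the LDA generative process."""
    rng = np.random.default_rng(seed)
    # beta_k ~ Dir(eta): one distribution over the V words per topic (K x V).
    beta = rng.dirichlet(np.full(V, eta), size=K)
    corpus, thetas = [], []
    for _ in range(D):
        # theta_d ~ Dir(alpha): the topic mixture of this document (length K).
        theta = rng.dirichlet(np.full(K, alpha))
        # Z_{d,n} ~ Multi(theta_d): one topic per word position.
        z = rng.choice(K, size=doc_len, p=theta)
        # W_{d,n} ~ Multi(beta_{Z_{d,n}}): draw each word from its topic.
        words = [int(rng.choice(V, p=beta[t])) for t in z]
        corpus.append(words)
        thetas.append(theta)
    return corpus, np.array(thetas), beta

# Example sizes are assumptions for illustration only.
corpus, thetas, beta = generate_corpus(D=5, K=3, V=10, doc_len=20, alpha=0.5, eta=0.5)
print(corpus[0])
```

Words are represented here as integer ids in {0, …, V-1}, so the output is a list of id lists rather than text.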

Latent Dirichlet Allocation: Gibbs sampling
• Gibbs sampling is an algorithm for obtaining a sequence of observations that are approximately drawn from a specified multivariate probability distribution when direct sampling is difficult
• Randomly initialize the word-topic assignment list Z: go through each document and randomly assign each word in the document to one of the K topics
• Initialize the word-topic matrix $C^{WT}$ from Z: count of each word being assigned to each topic
• Initialize the document-topic matrix $C^{DT}$ from Z: number of words assigned to each topic for each document
• This random assignment already gives you both the topic representations of all the documents and the word distributions of all the topics, albeit not very good ones => improve them iteration by iteration using the Gibbs sampling method
• For each word w of each document d, reassign a new topic to w:
  • choose topic t with probability proportional to the probability of word w given topic t
  • multiplied by
  • the probability of topic t given document d
• Resample the word-topic assignment Z, the document-topic distribution, and the word-topic distribution after each iteration
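
A compact sketch of this sampler is shown below. It is the commonly used collapsed Gibbs sampler, which is an assumption about what the slide intends: the two probabilities are estimated from the count matrices, smoothed by the Dirichlet hyperparameters α and η. The toy corpus, the default parameter values, and the function name `lda_gibbs` are made up for illustration.

```python
import numpy as np

def lda_gibbs(docs, K, V, alpha=0.1, eta=0.01, iters=200, seed=0):
    """Collapsed Gibbs sampling for LDA. docs is a list of lists of word ids in [0, V)."""
    rng = np.random.default_rng(seed)
    C_WT = np.zeros((V, K))             # word-topic counts
    C_DT = np.zeros((len(docs), K))     # document-topic counts
    Z = []
    for d, doc in enumerate(docs):      # random initial topic for every word
        z_d = rng.integers(K, size=len(doc))
        Z.append(z_d)
        for w, t in zip(doc, z_d):
            C_WT[w, t] += 1
            C_DT[d, t] += 1
    for _ in range(iters):
        for d, doc in enumerate(docs):
            for n, w in enumerate(doc):
                t = Z[d][n]
                C_WT[w, t] -= 1         # remove the current assignment from the counts
                C_DT[d, t] -= 1
                p_w_t = (C_WT[w] + eta) / (C_WT.sum(axis=0) + V * eta)  # P(w | t)
                p_t_d = C_DT[d] + alpha                                 # proportional to P(t | d)
                p = p_w_t * p_t_d
                t = rng.choice(K, p=p / p.sum())
                Z[d][n] = t
                C_WT[w, t] += 1         # add the new assignment back
                C_DT[d, t] += 1
    phi = (C_WT + eta) / (C_WT.sum(axis=0) + V * eta)                   # V x K, P(w | t)
    theta = (C_DT + alpha) / (C_DT.sum(axis=1, keepdims=True) + K * alpha)
    return Z, phi, theta

# Hypothetical corpus: word ids 0-2 and 3-5 tend to appear in different documents.
docs = [[0, 1, 2, 0, 1, 2], [0, 0, 1, 2, 1, 0], [3, 4, 5, 3, 4, 5], [5, 4, 3, 3, 5, 4]]
Z, phi, theta = lda_gibbs(docs, K=2, V=6)
print(theta.round(2))
```

The returned `theta` holds one K-dimensional topic mixture per document and each column of `phi` is one topic's distribution over the vocabulary, which are the reduced representations discussed on the earlier slides.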

Conceptual Search Based on Ontologies
• “Semantic” approach to dimensionality reduction
• Rather than using math to “learn” a lower number of dimensions, use an existing ontology/concept hierarchy to represent the documents
• Gauch:
  • Select an appropriate ontology source (Magellan, Yahoo!, Open Directory Project (ODP), ACM CCS, Wikipedia, …)
  • Use a reasonable subset (top 3 levels -> 1,000 categories, top 4 levels -> 10,000 categories)
  • Train a categorizer using linked documents
  • Categorize your documents
  • Creates a vector of category weights (dimensionality 1,000 vs 1,000,000)
  • Actually, a tree of category weights

Why Reduce Dimensionality?
• Machine learning
  • Cannot easily train on millions of dimensions
  • Classification
  • Recommendation
• Augment search (reduces ambiguity)
  • Increase recall
  • Decrease precision

Resources
• LDA in Python and R
  • https://wiseodd.github.io/techblog/2017/09/07/lda-gibbs/
  • https://ethen8181.github.io/machine-learning/clustering_old/topic_model/LDA.html