Morphological Evolution: Insights from Computational Modeling and Linguistic Phenomena

This work, presented at the 3rd Workshop on Quantitative Investigations in Theoretical Linguistics (QITL-3), explores the evolution of morphological paradigms through computational models. It highlights key phenomena such as the emergence of paradigms, stem alternations, and rules of referral in languages, particularly using examples from Sanskrit. Two experiments investigate syncretism and the dynamics of multiple paradigms in language evolution. The integration of evolutionary modeling provides insights into how languages simplify and adapt over time, addressing both theoretical and practical implications in linguistic study.


Presentation Transcript

Richard Sproat, University of Illinois at Urbana-Champaign, rws@uiuc.edu • 3rd Workshop on "Quantitative Investigations in Theoretical Linguistics" (QITL-3), Helsinki, 2-4 June 2008 • (Preliminary) Experiments in Morphological Evolution

Overview • The explananda • Previous work on evolutionary modeling • Computational models and preliminary experiments

Phenomena • How do paradigms arise? • Why do words fall into different inflectional “equivalence classes”? • Why do stem alternations arise? • Why is there syncretism? • Why are there “rules of referral”?

Stem alternations in Sanskrit • Slide labels: zero, guna • Examples from: Stump, Gregory (2001) Inflectional Morphology: A Theory of Paradigm Structure. Cambridge University Press.

Stem alternations in Sanskrit • Slide labels: morphomic (Aronoff, M. 1994. Morphology by Itself. MIT Press.), vrddhi, lexeme-class particular

Evolutionary Modeling (A tiny sample) • Hare, M. and Elman, J. L. (1995) Learning and morphological change. Cognition, 56(1):61--98. • Kirby, S. (1999) Function, Selection, and Innateness: The Emergence of Language Universals. Oxford • Nettle, D. (1999) Using Social Impact Theory to simulate language change. Lingua, 108(2-3):95--117. • de Boer, B. (2001) The Origins of Vowel Systems. Oxford • Niyogi, P. (2006) The Computational Nature of Language Learning and Evolution. Cambridge, MA: MIT Press.

Rules of referral • Stump, Gregory (1993) “On rules of referral”. Language. 69(3), 449-479 • (After Zwicky, Arnold (1985) “How to describe inflection.” Berkeley Linguistics Society. 11, 372-386.)

Are rules of referral interesting? • Are they useful for the learner? • Wouldn’t the learner have heard instances of every paradigm? • Are they historically interesting? • Does morphological theory need mechanisms to explain why they occur?

Another example: Böğüstani nominal declension • [Table: three paradigms, each with columns Sg, Du, Pl and rows Nom, Acc, Gen, Dat, Loc, Inst, Abl, Illat; cell contents not preserved] • Böğüstani • A language of Uzbekistan • ISO 639-3: bgs • Population: 15,500 (1998 Durieux) • Comments: Capsicum chinense and Coffea arabica farmers

Monte Carlo simulation (generating Böğüstani) • Select a re-use bias B • For each language: • Generate a set of vowels, consonants and affix templates • a, i, u, e • n f r w B s x j D • V, C, CV, VC • Decide on p paradigms (minimum 3), r rows (minimum 2), c columns (minimum 2)

Monte Carlo simulation • For each paradigm in the language: • Iterate over (r, c): • Let α be previous affix stored for r: with probability B retain α in L • Let β be previous affix stored for c: with probability B retain β in L • If L is non-empty, set (r, c) to random choice from L • Otherwise generate a new affix for (r, c) • Store (r, c)’s affix for r and c • Note that $P(\text{new-affix}) = (1-B)^2$
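The cell-filling procedure above can be sketched in Python as follows. This is a minimal illustration, not the code behind the reported runs: the function names are hypothetical, the segment inventory is copied from one of the sample languages, and sharing the stored row and column affixes across all paradigms of a language is an assumption (the slide does not say whether the store is reset per paradigm).

```python
import random

# Inventory taken from one sample language on the slides; any inventory works.
VOWELS = list("aiue")
CONSONANTS = list("nfrwBsxjD")
TEMPLATES = ["V", "C", "CV", "VC"]


def new_affix(rng):
    """Fill a randomly chosen template with random segments."""
    template = rng.choice(TEMPLATES)
    return "".join(
        rng.choice(VOWELS if slot == "V" else CONSONANTS) for slot in template
    )


def generate_language(n_paradigms, rows, cols, bias, rng):
    """Generate paradigms, reusing stored row/column affixes with probability `bias`."""
    last_for_row = {}  # most recent affix stored for each row (assumed shared)
    last_for_col = {}  # most recent affix stored for each column (assumed shared)
    language = []
    for _ in range(n_paradigms):
        paradigm = {}
        for r in range(rows):
            for c in range(cols):
                candidates = []  # the list L from the slide
                if r in last_for_row and rng.random() < bias:
                    candidates.append(last_for_row[r])
                if c in last_for_col and rng.random() < bias:
                    candidates.append(last_for_col[c])
                # Reuse a retained affix if there is one, otherwise invent one.
                affix = rng.choice(candidates) if candidates else new_affix(rng)
                paradigm[(r, c)] = affix
                last_for_row[r] = affix
                last_for_col[c] = affix
        language.append(paradigm)
    return language
```

With a stored affix available for both the row and the column, a cell receives a fresh affix only when both retention draws fail, which is where the $(1-B)^2$ figure comes from.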

Sample language: bias = 0.04 Consonants x n p w j B t r s S m Vowels a i u e Templates V, C, CV, VC

Sample language: bias = 0.04 Consonants n f r w B s x j D Vowels a i u e Templates V, C, CV, VC

Sample language: bias = 0.04 Consonants r p j d G D Vowels a i u e o y O Templates V, C, CV, VC,CVC, VCV, CVCV, VCVC

Sample language: bias = 0.04 Consonants D k S n b s l t w j B g G d Vowels a i u e Templates V, C, CV, VC

Results of Monte Carlo simulations (8000 runs, 5000 languages per run)

Interim conclusion • Syncretism, including rules of referral, may arise as a chance byproduct of tendencies to reuse inflectional exponents, and hence reduce the number of exponents needed in the system. • Side question: is the amount of ambiguity among inflectional exponents statistically different from that among lexemes? (cf. Beard’s Lexeme-Morpheme-Base Morphology) • Probably not, since inflectional exponents tend to be shorter, so the chances of collisions are much higher

Experiment 2: Stabilizing Multiple Paradigms in a Multiagent Network

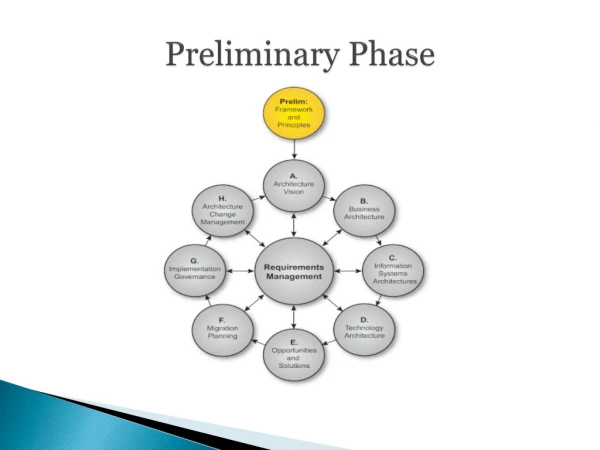

Paradigm Reduction in Multi-agent Models with Scale-Free Networks • Agents connected in scale-free network • Only connected agents communicate • Agents more likely to update forms from interlocutors they “trust” • Each individual agent has pressure to simplify its morphology by collapsing exponents: • Exponent collapse is picked to minimize an increase in paradigm entropy • Paradigms may be simplified – removing distinctions and thus reducing paradigm entropy • As the number of exponents decreases so does the pressure to reduce • Agents analogize paradigms to other words

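Scale-free networks • Connection degrees follow the Yule-Simon distribution: $p(k;\rho) = \rho\,\mathrm{B}(k, \rho+1)$, where $\mathrm{B}$ is the Beta function and, for sufficiently large $k$: $p(k;\rho) \approx \rho\,\Gamma(\rho+1)/k^{\rho+1} \propto 1/k^{\rho+1}$ • i.e. reduces to Zipf’s law (cf. Baayen, Harald (2000) Word Frequency Distributions. Springer.)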
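As a numerical check on the large-k behavior, the sketch below assumes the standard form of the Yule-Simon distribution, P(k; ρ) = ρ·B(k, ρ+1) with B the Beta function, and compares it with the power-law tail ρ·Γ(ρ+1)/k^(ρ+1). The function names are illustrative and not taken from the presentation.

```python
import math


def yule_simon_pmf(k, rho):
    """P(k; rho) = rho * B(k, rho + 1), via log-gamma for numerical stability."""
    log_beta = math.lgamma(k) + math.lgamma(rho + 1) - math.lgamma(k + rho + 1)
    return rho * math.exp(log_beta)


def power_law_tail(k, rho):
    """Large-k approximation: rho * Gamma(rho + 1) / k ** (rho + 1)."""
    return rho * math.gamma(rho + 1) / k ** (rho + 1)


if __name__ == "__main__":
    # The ratio approaches 1 as k grows, i.e. the tail is a power law.
    for k in (1, 10, 100, 1000):
        print(k, yule_simon_pmf(k, 1.0) / power_law_tail(k, 1.0))
```

For ρ = 1 the exact value is 1/(k(k+1)), so the ratio to the 1/k² tail is k/(k+1).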

Relevance of scale-free networks • Social networks are scale-free • Nodes with multiple connections seem to be relevant for language change. • cf: James Milroy and Lesley Milroy (1985) “Linguistic change, social network and speaker innovation.” Journal of Linguistics, 21:339–384.

Scale-free networks in the model • Agents communicate individual forms to other agents • When two agents differ on a form, one agent will update its form with a probability p proportional to how well connected the other agent is: • p = MaxP × ConnectionDegree(agent) / MaxConnectionDegree • (Similar to Page Rank)
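The update rule can be written out directly. The sketch below is illustrative only: the function and parameter names are hypothetical, and the single-form interaction is a simplification of whatever the actual simulation does.

```python
import random


def update_probability(max_p, degree, max_degree):
    """p = MaxP * ConnectionDegree(agent) / MaxConnectionDegree."""
    return max_p * degree / max_degree


def maybe_adopt(own_form, other_form, other_degree, max_degree, max_p, rng):
    """Return the form the listener holds after hearing `other_form`."""
    if own_form == other_form:
        return own_form
    p = update_probability(max_p, other_degree, max_degree)
    # Adopt the interlocutor's form with probability p, otherwise keep one's own.
    return other_form if rng.random() < p else own_form
```

Under this rule the best-connected agent in the network is followed with probability MaxP (0.2 or 0.5 in the runs described later), and less connected agents proportionally less often.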

Paradigm entropy • For exponents φ and morphological functions μ, define the Paradigm Entropy as: $H(\mu \mid \varphi) = -\sum_{\varphi}\sum_{\mu} p(\varphi, \mu)\,\log p(\mu \mid \varphi)$ (NB: this is really just the conditional entropy) • If each exponent is unambiguous, the paradigm entropy is 0
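A small sketch of the computation, reading the paradigm entropy as the conditional entropy of the morphological function given the exponent. The use of base-2 logarithms and the input format (a list of exponent, function, probability triples) are assumptions made for illustration.

```python
import math
from collections import defaultdict


def paradigm_entropy(slots):
    """Conditional entropy H(function | exponent) in bits.

    `slots` is an iterable of (exponent, function, probability) triples,
    where the probabilities of all slots sum to 1.
    """
    joint = defaultdict(float)     # p(exponent, function)
    marginal = defaultdict(float)  # p(exponent)
    for exponent, function, prob in slots:
        joint[(exponent, function)] += prob
        marginal[exponent] += prob
    entropy = 0.0
    for (exponent, _function), p in joint.items():
        if p > 0:
            entropy -= p * math.log2(p / marginal[exponent])
    return entropy
```

A paradigm in which every exponent marks exactly one function scores 0, while a single exponent shared by two equiprobable functions scores 1 bit, so collapsing exponents can only raise the value.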

Syncretism tends to be most common in “rarer” parts of paradigm

Simulation • 100 agents in scale-free or random network • Roughly 250 connections in either case • 20 bases • 5 “cases”, 2 “numbers”: each slot associated with a probability • Max probability of updating one’s form for a given slot given what another agent has is 0.2 or 0.5 • Probability of analogizing within one’s own vocabulary is 0.01, 0.02 or 0.05 • Also a mode where we force analogy every 50 iterations • Analogize to words within same “analogy group” (4 such groups in current simulation) • Winner-takes-all strategy • (Numbers in the titles of the ensuing plots are given as UpdateProb/AnalogyProb (e.g. 0.2/0.01)) • Run for 1000 iterations

Features of simulation • At nth iteration, compute: • The paradigm distribution over agents for each word. • Paradigm purity is the proportion of the “winning paradigm” • The number of distinct winning paradigms
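The two summary statistics can be computed along these lines. This is a sketch under assumed data structures (each agent's paradigm for a word is any hashable value, such as a tuple of exponents); the names are hypothetical.

```python
from collections import Counter


def paradigm_purity(paradigms_by_agent):
    """Return (winning paradigm, its proportion among agents) for one word."""
    counts = Counter(paradigms_by_agent)
    winner, freq = counts.most_common(1)[0]
    return winner, freq / len(paradigms_by_agent)


def distinct_winning_paradigms(words):
    """Count distinct winners; `words` maps word -> list of paradigms, one per agent."""
    return len({paradigm_purity(p)[0] for p in words.values()})
```

For example, if three of four agents share one paradigm for a word, that paradigm wins with purity 0.75.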

Sample final state 0.24 0.21 0.095 0.095 0.06 0.12 0.095 0.048 0.024 0.012

Adoption of acc/acc/acc/acc/acc/ACC/ACC/ACC/ACC/ACC in a 0.5/0.05 run

Interim conclusions • Scale-free networks don’t seem to matter: convergence behavior seems to be no different from a random network • Is that a big surprise? • Analogy matters • Paradigm entropy (conditional entropy) might be a model for paradigm simplification

Experiment 3: Large-scale multi-agent evolutionary modeling with learning (work in progress…)

Synopsis • System is seeded with a grammar and small number of agents • Initial grammars all show an agglutinative pattern • Each agent randomly selects a set of phonetic rules to apply to forms • Agents are assigned to one of a small number of social groups • Two parents “beget” child agents. • Children are exposed to a predetermined number of training forms combined from both parents • Forms are presented proportional to their underlying “frequency” • Children must learn to generalize to unseen slots for words • Learning algorithm similar to: • David Yarowsky and Richard Wicentowski (2000) "Minimally supervised morphological analysis by multimodal alignment." Proceedings of ACL-2000, Hong Kong, pages 207-216. • Features include last n characters of input form, plus semantic class • Learners select the optimal surface form to derive other forms from (optimal = requiring the simplest resulting ruleset, a Minimum Description Length criterion) • Forms are periodically pooled among all agents and the n best forms are kept for each word and each slot • Population grows, but is kept in check by “natural disasters” and a quasi-Malthusian model of resource limitations • Agents age and die according to reasonably realistic mortality statistics

Phonological rules • c_assimilation • c_lenition • degemination • final_cdel • n_assimilation • r_syllabification • umlaut • v_nasalization • voicing_assimilation • vowel_apocope • vowel_coalescence • vowel_syncope

    K = [ptkbdgmnNfvTDszSZxGCJlrhX]
    L = [wy]
    V = [aeiouAEIOU&@0âêîôûÂÊÎÔÛãõÕ]
    ## Regressive voicing assimilation
    b -> p / - _ #?[ptkfTsSxC]
    d -> t / - _ #?[ptkfTsSxC]
    g -> k / - _ #?[ptkfTsSxC]
    D -> T / - _ #?[ptkfTsSxC]
    z -> s / - _ #?[ptkfTsSxC]
    Z -> S / - _ #?[ptkfTsSxC]
    G -> x / - _ #?[ptkfTsSxC]
    J -> C / - _ #?[ptkfTsSxC]

    K = [ptkbdgmnNfvTDszSZxGCJlrhX]
    L = [wy]
    V = [aeiouAEIOU&@0âêîôûÂÊÎÔÛãõÕ]
    [td] -> D / [aeiou&âêîôûã]#? _ #?[aeiou&âêîôûã]
    [pb] -> v / [aeiou&âêîôûã]#? _ #?[aeiou&âêîôûã]
    [gk] -> G / [aeiou&âêîôûã]#? _ #?[aeiou&âêîôûã]
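To make the rule notation concrete, the two rule sets shown can be approximated with Python regular expressions. This is a rough sketch, not the system's rule compiler: `#?` is read as an optional boundary symbol, the `-` in the left context of the voicing rules is ignored because its meaning is not given on the slide, and all rules in a set are applied in one simultaneous pass. The function names are hypothetical.

```python
import re

# Intervocalic lenition: [td] -> D, [pb] -> v, [gk] -> G between listed vowels.
LENITION_VOWELS = "aeiou&âêîôûã"
LENITION = {"t": "D", "d": "D", "p": "v", "b": "v", "g": "G", "k": "G"}
_LENITION_RE = re.compile(
    rf"([{LENITION_VOWELS}]#?)([tdpbgk])(?=#?[{LENITION_VOWELS}])"
)

# Regressive voicing assimilation: voiced obstruents devoice before voiceless ones.
DEVOICE = {"b": "p", "d": "t", "g": "k", "D": "T",
           "z": "s", "Z": "S", "G": "x", "J": "C"}
_DEVOICE_RE = re.compile(r"([bdgDzZGJ])(?=#?[ptkfTsSxC])")


def lenite(form):
    """Apply the three lenition rules wherever their context matches."""
    return _LENITION_RE.sub(lambda m: m.group(1) + LENITION[m.group(2)], form)


def devoice(form):
    """Apply the eight devoicing rules (left-context `-` not modeled)."""
    return _DEVOICE_RE.sub(lambda m: DEVOICE[m.group(1)], form)


if __name__ == "__main__":
    print(lenite("lIdrabai"))    # b sits between a and a
    print(lenite("lArpuxmeko"))  # k sits between e and o
    print(devoice("abta"))       # b precedes voiceless t
```

Applied to agglutinated forms such as lIdrab + a + i, the lenition sketch yields forms of the kind shown in the evolved paradigms (for instance lIdravai).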

Example run • Initial paradigm: • Abog pl+acc Abogmeon • Abog pl+dat Abogmeke • Abog pl+gen Abogmei • Abog pl+nom Abogmeko • Abog sg+acc Abogaon • Abog sg+dat Abogake • Abog sg+gen Abogai • Abog sg+nom Abogako • NUMBER 'a' sg 0.7 'me' pl 0.3 • CASE 'ko' nom 0.4 'on' acc 0.3 'i' gen 0.2 'ke' dat 0.1 • PHONRULE_WEIGHTING=0.60 • NUM_TEACHING_FORMS=1500
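The initial paradigm above is purely agglutinative (base + number affix + case affix), so it can be regenerated from the NUMBER and CASE tables. In the sketch below, treating a slot's frequency as the product of its number and case probabilities is an assumption, as are the function names; the sampling step illustrates presenting forms in proportion to frequency.

```python
import random

# Affixes and probabilities as listed on the slide.
NUMBER = {"sg": ("a", 0.7), "pl": ("me", 0.3)}
CASE = {"nom": ("ko", 0.4), "acc": ("on", 0.3), "gen": ("i", 0.2), "dat": ("ke", 0.1)}


def initial_paradigm(base):
    """Return {slot: (form, probability)} for an agglutinative paradigm."""
    paradigm = {}
    for num, (num_affix, num_p) in NUMBER.items():
        for case, (case_affix, case_p) in CASE.items():
            # Assumed: number and case frequencies combine independently.
            paradigm[f"{num}+{case}"] = (base + num_affix + case_affix, num_p * case_p)
    return paradigm


def sample_teaching_forms(paradigm, n, rng):
    """Draw n (slot, form) pairs with probability proportional to slot frequency."""
    slots = list(paradigm)
    weights = [paradigm[s][1] for s in slots]
    return [(s, paradigm[s][0]) for s in rng.choices(slots, weights=weights, k=n)]


if __name__ == "__main__":
    paradigm = initial_paradigm("Abog")
    for slot, (form, prob) in sorted(paradigm.items()):
        print("Abog", slot, form, round(prob, 2))
    forms = sample_teaching_forms(paradigm, 1500, random.Random(0))
    print(len(forms), "teaching forms sampled")
```

On these figures the rarest slot, pl+dat, has probability 0.03, so with 1500 teaching forms spread over many lexemes a learner will often have to generalize to slots it has not heard for a given word.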

Behavior of agent 4517 at 300 “years”

    Abog pl+acc Abogmeon
    Abog pl+dat Abogmeke
    Abog pl+gen Abogmei
    Abog pl+nom Abogmeko
    Abog sg+acc Abogaon
    Abog sg+dat Abogake
    Abog sg+gen Abogai
    Abog sg+nom Abogako

    Abog pl+acc Abogmeô
    Abog pl+dat Abogmeke
    Abog pl+gen Abogmei
    Abog pl+nom Abogmeko
    Abog sg+acc Abogaô
    Abog sg+dat Abogake
    Abog sg+gen Abogai
    Abog sg+nom Abogako

    lArpux pl+acc lArpuxmeô
    lArpux pl+dat lArpuxmeGe
    lArpux pl+gen lArpuxmei
    lArpux pl+nom lArpuxmeGo
    lArpux sg+acc lArpuxaô
    lArpux sg+dat lArpuxaGe
    lArpux sg+gen lArpuxai
    lArpux sg+nom lArpuxaGo

    lIdrab pl+acc lIdravmeô
    lIdrab pl+dat lIdrabmeke
    lIdrab pl+gen lIdravmei
    lIdrab pl+nom lIdrabmeGo
    lIdrab sg+acc lIdravaô
    lIdrab sg+dat lIdravaGe
    lIdrab sg+gen lIdravai
    lIdrab sg+nom lIdravaGo

59 paradigms covering 454 lexemes

Another run • Initial paradigm: • Adgar pl+acc Adgarmeon • Adgar pl+dat Adgarmeke • Adgar pl+gen Adgarmei • Adgar pl+nom Adgarmeko • Adgar sg+acc Adgaraon • Adgar sg+dat Adgarake • Adgar sg+gen Adgarai • Adgar sg+nom Adgarako • PHONRULE_WEIGHTING=0.80 • NUM_TEACHING_FORMS=1500