Sampling: Key Concepts and Methods for Valid Data

This guide explains the importance of sampling in research, covering terms, sample size, errors, bias, and various sampling methods such as probability, non-probability, convenience, quota, and purposive sampling. Learn how to select representative samples and ensure validity and reliability in your research.


Presentation Transcript

Sampling • Entire scientific texts have been written about this subject, but it is also a general phenomenon – we all come to conclusions based on samples of experience that we have had. • Why sample – • More economical and efficient • May be more accurate • More able to control for biases due to over- or under-representation of some population segment

Sampling Terms to Know • 1. Sampling – the process of selecting a part of the population to represent the entire population • 2. Population – an entire aggregation of cases which meet a designated set of criteria – all nurses, all BSN nurses, all nurses in Hamilton County • Accessible population – all cases which conform to the criteria and which are accessible for the study • Target population – the entire field of cases which conform to the criteria

Sampling Terms to Know • 3. Sampling Unit – elements or a set of elements used for sampling – if you want an element of BSN students, send questionnaires to BSN schools – the school is the sampling unit and each student is an element (the most basic unit about which information is collected).

Sample Size and Sample Error • Sample size – Always use the largest sample possible. In general, a sample size should be at least ten for every subdivision of the data. 20-30 is preferable. The absolute size is more important than the relative size. • Sampling error – the difference between values obtained from the sample and the values of the whole population. • Sampling bias – this occurs when samples are not carefully selected, so that some parts of the population are left out (for example, internet samples or volunteer samples)
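The definition of sampling error above can be illustrated with a minimal sketch. The population of 1,000 scores is hypothetical and generated only for illustration, and `sampling_error` is an illustrative helper name, not something from the slides.

```python
import random
import statistics

def sampling_error(population, n, seed=None):
    """Return (sample mean - population mean) for one random sample of size n."""
    rng = random.Random(seed)
    sample = rng.sample(population, n)
    return statistics.mean(sample) - statistics.mean(population)

# Hypothetical population of 1,000 scores (an assumption for illustration only).
_rng = random.Random(42)
population = [_rng.gauss(50, 10) for _ in range(1000)]

# Compare the error of single draws at a few sample sizes.
for n in (10, 30, 300):
    print(n, round(sampling_error(population, n, seed=n), 2))
```

Larger samples tend to give smaller errors, although any single draw can still deviate by chance.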

Steps in Sampling • Identify the target population • Identify the part of the population that is accessible to you • Ask the sample subjects for cooperation • Select subjects randomly if possible • Collect data • Interpret the results based on the sample – be realistic and conservative

Types of Samples • Representative sample – a sample in which the key characteristics of the elements closely approximate those of the population • Probability sample – a sample that uses some form of random selection in choosing the elements – the researcher can specify the probability that each element of the population would be included • Non-probability sample – a sample in which the elements are not chosen by random selection

Types of Samples • Non-probability sample – the elements are selected by non-random methods. This type of sampling is more convenient and economical. • Convenience sample – this is where the researcher uses the most readily available persons – also called accidental samples – such as the first persons who come into a supermarket or a clinic. This is the weakest method of sampling • Snowball sample – persons known to the researcher are asked to participate then the elements are asked to give names of others they know with the same characteristic.

Types of Samples • Quota sampling – the researcher identifies different strata of the population and determines the proportions of elements needed from those various segments of the population (establishes a quota and fills the quota as the elements present themselves) • Purposive sampling (judgmental sampling) – the researcher’s knowledge about the population is used to handpick the elements to be included so that the sample meets “the widest type variety” or the “typical” element. It is good for testing instruments or validating tests, but it does risk bias. • Sequential sampling – sample one person at a time until you prove or disprove a statement (“Seven out of 10 times ASA works better.”)

Quota Sampling • Tally of subjects recorded as the quotas fill (columns: Smokers, Non-Smokers) • Males: III IIIII IIIII • Females: IIIII III IIIII III
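Filling quotas "as the elements present themselves" might be sketched as below. The quota sizes and the stream of arrivals are hypothetical, and `fill_quotas` is an illustrative helper, not part of the slides.

```python
def fill_quotas(arrivals, quotas):
    """Accept people in arrival order until every stratum's quota is full.

    arrivals: iterable of (person_id, stratum) pairs, in the order they show up.
    quotas:   dict mapping stratum -> number of people wanted from it.
    """
    remaining = dict(quotas)
    sample = []
    for person, stratum in arrivals:
        if remaining.get(stratum, 0) > 0:
            sample.append((person, stratum))
            remaining[stratum] -= 1
        if not any(remaining.values()):
            break  # all quotas are filled
    return sample

# Hypothetical quotas: 2 people per sex-by-smoking stratum.
quotas = {("male", "smoker"): 2, ("male", "non-smoker"): 2,
          ("female", "smoker"): 2, ("female", "non-smoker"): 2}

# Hypothetical arrivals, cycling through the four strata.
strata = list(quotas)
arrivals = [(f"p{i}", strata[i % 4]) for i in range(20)]

quota_sample = fill_quotas(arrivals, quotas)
print(quota_sample)
```

Note that nothing here is random: the person is classified on arrival and accepted only while that stratum still has room, which is why quota sampling remains a non-probability method.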

Types of Sampling • Probability Sampling • Simple random sampling – establish a list from which the sample will be chosen (a sampling frame) and number all elements consecutively. Use a table of random numbers or a computer to draw numbers. This guarantees that the differences in attributes of the sample and of the population are purely a function of chance and that the probability of selecting a deviant sample is low. As the size of the sample increases, the probability of its deviance from the attributes of the population decreases.
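Drawing numbers by computer from a consecutively numbered frame could look like the following minimal sketch. The frame of 200 elements and the sample size of 20 are assumptions chosen only for illustration.

```python
import random

def simple_random_sample(frame, n, seed=None):
    """Draw n distinct elements from the sampling frame, each equally likely."""
    rng = random.Random(seed)
    return rng.sample(frame, n)

# Hypothetical sampling frame: elements numbered consecutively from 1 to 200.
frame = list(range(1, 201))

srs = simple_random_sample(frame, 20, seed=1)
print(sorted(srs))
```

Passing a seed makes the draw reproducible, which can help when the selection has to be documented.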

Types of Sampling • Stratified random sample – mutually exclusive segments of the population are established by one or more specifications (male/female; below 30yrs/30-45yrs/46yrs and over; diploma/ADN/BSN) and elements are picked randomly from each stratum of the population. (Decisions about which strata the elements belong to are made before the selection, as opposed to quota sampling, where the person is questioned and then put into a stratum.) This method increases representativeness. • Proportional – elements in proportion to population • Disproportional – to compare greatly unequal proportions

Stratified Random Sampling • Numbered list of male smokers – 45 • Numbered list of female smokers – 33 • Numbered list of male non-smokers – 47 • Numbered list of female non-smokers – 37 • Random draws of 10 from each list (columns: Smokers, Non-smokers) • Male: 10/45 random, 10/47 random • Female: 10/33 random, 10/37 random
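The draw of 10 from each numbered list could be sketched as follows. The list sizes come from the slide; the assumption that each list is numbered from 1 up to its size, and the helper name `stratified_sample`, are illustrative.

```python
import random

# Stratum sizes taken from the four numbered lists on the slide.
STRATA_SIZES = {
    ("male", "smoker"): 45,
    ("female", "smoker"): 33,
    ("male", "non-smoker"): 47,
    ("female", "non-smoker"): 37,
}

def stratified_sample(strata_sizes, per_stratum, seed=None):
    """Randomly draw per_stratum list numbers from each stratum's numbered list."""
    rng = random.Random(seed)
    return {
        stratum: sorted(rng.sample(range(1, size + 1), per_stratum))
        for stratum, size in strata_sizes.items()
    }

strat = stratified_sample(STRATA_SIZES, per_stratum=10, seed=7)
for stratum, numbers in strat.items():
    print(stratum, numbers)
```

Because stratum membership is fixed before the draw, every element within a stratum has the same chance of selection.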

Types of Sampling • Cluster sampling – this is a process in which a successive random sampling of units is drawn (states, then cities, then districts, then blocks, then households), moving from the largest unit down to the basic element. It is also called multistage sampling. The sampling error may be larger with it. • Systematic sampling – the researcher selects every kth person from a list or a group. Selecting every 10th person walking by is not random, and a systematic sample from a list is random only if the starting point is drawn at random.
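Systematic sampling with a randomly drawn starting point might be sketched like this. The frame of 100 numbered elements and the interval k = 10 are assumptions for illustration.

```python
import random

def systematic_sample(frame, k, seed=None):
    """Select every kth element of the frame, starting at a random position below k."""
    rng = random.Random(seed)
    start = rng.randrange(k)  # random start among the first k positions
    return frame[start::k]

# Hypothetical list of 100 numbered elements; take every 10th.
numbered_list = list(range(1, 101))
sys_sample = systematic_sample(numbered_list, k=10, seed=3)
print(sys_sample)
```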

Random Assignment • Random assignment of subjects to groups • This eliminates as much systematic bias as possible. Each subject has an equal chance to be in any of the study groups and differences are explained on the basis of the experimental conditions rather than on differences in subjects. • Random assignment of treatments to groups • Exemplified in double-blind studies carried out in clinical trials. This is particularly useful when the researcher has to deal with intact groups such as in classrooms or on hospital units
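Random assignment of subjects to groups can be sketched as a shuffle followed by dealing subjects out in turn. The 20 subject IDs and the two group names are hypothetical.

```python
import random

def randomly_assign(subjects, groups=("experimental", "control"), seed=None):
    """Shuffle the subjects, then deal them round-robin into the groups."""
    rng = random.Random(seed)
    shuffled = list(subjects)
    rng.shuffle(shuffled)
    assignment = {group: [] for group in groups}
    for i, subject in enumerate(shuffled):
        assignment[groups[i % len(groups)]].append(subject)
    return assignment

# Hypothetical subject IDs.
subjects = [f"S{i:02d}" for i in range(1, 21)]
assigned = randomly_assign(subjects, seed=11)
print(assigned)
```

Shuffling first gives each subject the same chance of landing in either group, and dealing in turn keeps the group sizes equal.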

Measurement • Measurement is assigning numbers to objects to represent quantities of attributes or concepts. • Measurement procedures are operational definitions of concepts or attributes – the concept or attribute should really exist although it may be an abstraction • Measurement always deals with abstraction – you don’t measure a person, but a characteristic of that person

Measurement • Numbers are assigned to quantify an attribute – “whatever exists, exists in some amount and can be measured” The variability of an attribute is capable of numerical expression which signifies how much of the attribute is present in the element. • Rules for measuring may have to be invented. The researcher must specify under what conditions and according to what criteria, and in what increments, numerical values are to be assigned. • Measurement should have a rational correspondence to reality

Advantages of Measurement • What would you work with if you did not have measurement of height, weight, temperature – intuition, guesses, personal judgment • Objectivity – scoring minimizes subjectivity. Analytical procedures are not subjective • Communication – numbers constitute a non-ambiguous language

Levels of Measurement • Nominal scale – measurement at its weakest – numbers or other symbols are used to classify an element – such as a psychiatric diagnostic number – 295. You can partition a given class of elements into a set of mutually exclusive subclasses – 295.30, 295.20. The only relationship involved is equivalence (=). The kinds of statistics that can be used with this type of measurement are modes and frequency counts. You can test hypotheses regarding distribution of cases among categories (χ²).

Nominal Level • Marital status by gender (columns: Male, Female, Total) • Married: 60, 100, 160 • Single: 140, 200, 340 • Total: 200, 300, 500 • Calculate the percentages.
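One way to work the exercise is sketched below, using the counts from the slide. It computes column percentages (the share of each gender that is married or single) and, as an optional extra, a Pearson chi-square statistic for the table; the choice of column rather than row percentages and the helper names are assumptions.

```python
# Counts from the slide: marital status by gender.
counts = {
    "Married": {"Male": 60, "Female": 100},
    "Single": {"Male": 140, "Female": 200},
}

def column_percentages(table):
    """Percentage of each column total that falls in each row."""
    col_totals = {}
    for row in table.values():
        for col, n in row.items():
            col_totals[col] = col_totals.get(col, 0) + n
    return {r: {c: 100 * n / col_totals[c] for c, n in row.items()}
            for r, row in table.items()}

def chi_square(table):
    """Pearson chi-square statistic: sum of (observed - expected)^2 / expected."""
    row_totals = {r: sum(row.values()) for r, row in table.items()}
    col_totals = {}
    for row in table.values():
        for col, n in row.items():
            col_totals[col] = col_totals.get(col, 0) + n
    grand = sum(row_totals.values())
    stat = 0.0
    for r, row in table.items():
        for c, observed in row.items():
            expected = row_totals[r] * col_totals[c] / grand
            stat += (observed - expected) ** 2 / expected
    return stat

pct = column_percentages(counts)
chi2 = chi_square(counts)
print(pct)
print(round(chi2, 3))
```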

Levels of Measurement • Ordinal scale – this measurement shows relationships among classes such as higher than, more difficult than, etc. It involves two relations: equivalence (=) and greater than (>). The researcher can test hypotheses using non-parametric statistics of order and ranking such as the Spearman Rank Order Correlation or the Mann-Whitney U.

Ordinal Level • Level of social support (columns: Considerable, Moderate, Little) • Bulimics: 13, 30, 57 • Non-Bulimics: 35, 55, 10

Levels of Measurement • Interval scale – this is similar to the ordinal scale, but the distance between any two numbers is of a known size. Parametric statistics and tests can be used – mean, standard deviation, Pearson correlation, t-test, F-test, etc. It involves three relations: equivalence (=), greater than (>), and a known ratio of any two intervals.

Interval Level • Abused women (columns: Subject, Score Before, Score After, Difference) • 1: 36, 42, 6 • 2: 25, 40, 15 • 3: 39, 45, 6 • 4: 40, 40, 0 • 5: 41, 44, 3 • 6: 35, 40, 5
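Because interval data support means and standard deviations, the before and after scores on this slide can be summarized as in the sketch below. The paired t statistic is added as one plausible analysis, not something the slide itself prescribes.

```python
import math
import statistics

# Scores from the slide, one pair per subject.
before = [36, 25, 39, 40, 41, 35]
after = [42, 40, 45, 40, 44, 40]

# Difference for each subject (after minus before).
differences = [a - b for a, b in zip(after, before)]

mean_diff = statistics.mean(differences)
sd_diff = statistics.stdev(differences)  # sample standard deviation (n - 1)

# Paired t statistic: mean difference divided by its standard error.
t_stat = mean_diff / (sd_diff / math.sqrt(len(differences)))

print(differences)
print(round(mean_diff, 2), round(sd_diff, 2), round(t_stat, 2))
```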

Levels of Measurement • Ratio scale – it is like the interval scale, but it has a true zero point as its origin. You can use arithmetic with it and all parametric tests as well as those involving geometric means. It involves four relationships: equivalence (=), greater than (>), the known ratio of any two intervals, and the known ratio of any two scale values.

Ratio Level • Weight loss by group (columns: Experimental Group, Control Group) • Sample Size: 35, 35 • Mean Weight Loss: 26 lbs, 19 lbs • Standard Deviation: 2 lbs, 4.5 lbs

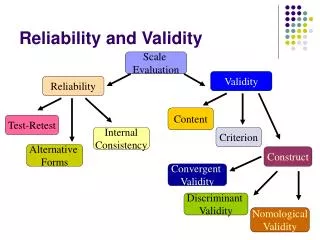

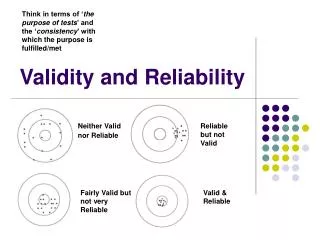

Reliability and Validity - Criteria for Assessing Measuring Tools • Every score is part true and part error • Sources of errors in scores • Situational contaminants • Response set bias • Transitory personal factors • Administration variations • Instrument clarity • Response sampling (a person scores 95% and 90% on two tests which claim to test the same thing) • Instrument format

Reliability • This is the major criterion for assessing a measuring instrument’s quality and adequacy. It is the consistency with which the instrument measures the attribute it is supposed to be measuring. • The reliability of an instrument is not a property of the instrument, but rather of the instrument when administered under certain conditions to a certain sample. (A death anxiety instrument would not measure the same when given to teenagers as it measures for geriatric patients.)

Ways to Check Reliability • Stability (test-retest reliability) – the same test is given to a sample of individuals on two occasions, then the scores are compared by computing a reliability coefficient. (A reliability coefficient is a correlation coefficient between the two sets of scores.) • Internal consistency (homogeneity) – all of the subparts of the instrument must measure the same characteristic. Use the split-half technique – split the test items in half, score each half, then compare the scores using a correlation coefficient; or compare each item (by correlation) with the total score (α)
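A test-retest reliability coefficient is simply a correlation between the two sets of scores, which could be computed as in this minimal sketch. The eight pairs of scores are hypothetical, and the hand-written `pearson` helper is used only to keep the example self-contained.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient between two equal-length score lists."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical scores for eight people tested on two occasions.
first_testing = [12, 15, 9, 20, 17, 11, 14, 18]
second_testing = [13, 14, 10, 19, 18, 10, 15, 17]

reliability = pearson(first_testing, second_testing)
print(round(reliability, 2))
```

The same function could be applied to split-half scores by passing each person's total on one half of the items and their total on the other half.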

Ways to Check Reliability • Equivalence – can be tested in two ways • 1. Using two or more forms of a test to see if they are equal • 2. Inter-rater reliability • Carefully train observers, develop clearly defined, non-overlapping categories, and use behaviors that are molecular rather than molar • Two or more observers watch the same event simultaneously and independently record variables according to a plan or code • Reliability is computed: • Reliability = number of agreements / (number of agreements + number of disagreements)
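The inter-rater computation, agreements divided by agreements plus disagreements, might be sketched as follows. The two observers' codes for ten events are hypothetical.

```python
def inter_rater_reliability(rater_a, rater_b):
    """Agreements / (agreements + disagreements) over paired observations."""
    if len(rater_a) != len(rater_b):
        raise ValueError("Both raters must code the same events")
    agreements = sum(a == b for a, b in zip(rater_a, rater_b))
    disagreements = len(rater_a) - agreements
    return agreements / (agreements + disagreements)

# Hypothetical category codes recorded independently by two observers.
observer_1 = ["A", "B", "A", "C", "B", "A", "C", "C", "B", "A"]
observer_2 = ["A", "B", "A", "C", "A", "A", "C", "B", "B", "A"]

agreement = inter_rater_reliability(observer_1, observer_2)
print(agreement)
```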

Ways to Check Reliability • Interpretation of reliability coefficients • If you are interested only in group-level comparisons, a reliability coefficient of .70 or even .60 is sufficient (male/female, Dr./nurse, smoker/non-smoker) • If you are interested in decisions about individuals, such as who gets into school, then a coefficient of .90 or higher is needed • If the coefficient were .80, then 80% of the scores’ variability would be true variability and 20% would be extraneous

Ways to Improve Reliability • Add more items • Have a more varied group of subjects – the more homogeneous the group the lower the reliability coefficient

Validity • The degree to which an instrument measures what it is supposed to be measuring. Validity is difficult to establish. An instrument that is not reliable cannot be valid, but an instrument can be reliable and still not be valid. (Example – a patient satisfaction scale does not measure “quality of nursing care.”)

Aspects of Validity • Face Validity • Content Validity • Criterion-related validity • Construct validity

Validity • Face validity – refers to whether the instrument looks as though it is measuring the appropriate attribute. It is based on judgment. There are no objective criteria used for assessment of its appropriateness.

Types of Instrument Validity • Content validity – looks at the sampling adequacy of the content area – used especially for tests that measure knowledge of a specific content area. It is evaluated by examining the extent to which the content of the test represents the total domain of behaviors encompassing the ability being measured. It is usually measured by expert opinion. It is based on judgment. The more experts who agree on the content to be included, the better – a blueprint could be developed or a content validity index (CVI) could be developed – see method in Polit, p. 459

Types of Instrument Validity • Criterion-related validity – this establishes a relationship between the instrument and some other criterion that is accepted as measuring the same attribute. The scores on both should correlate highly indicating directly how valid the instrument is. • Concurrent validity – the criterion measure is obtained at the same time the test is given • Predictive validity – the criterion measure is obtained some time after the test is given and the test is used to predict future performance on the criterion measure

Types of Instrument Validity • Construct validity – asks the question: Is the abstract concept/construct under investigation being adequately measured with this instrument – is there a fit between the conceptual definition and the operational definition of a variable? One way to test it is through the known groups technique – groups expected to differ on the critical attribute are tested and scores should be different. If the test is a sample of behaviors characteristic of the construct, its items must be representative of the content of the construct. A good way to support this assumption is to use factor analysis.

Types of Instrument Validity • Statistical Conclusion Validity – determines whether the conclusions drawn about the relationships are an accurate reflection of the real world and/or whether the differences drawn from statistical analyses are an accurate reflection of the real world.

Benefits and Limitations of Statistical Conclusion Methods • Benefits • Enhances interpretability of relationships • Easy and economical • Can be used with a large number of extraneous variables • Limitations • Requires knowledge of which variables to control to enhance the independent variable • Requires statistical sophistication regarding statistical power and statistical precision

Interpretation of Validity • Validity cannot be proved but it can be supported. The researcher does not validate the instrument itself, but actually some application of the instrument

Other Criteria for an Instrument • Efficiency – the number of items, the time it takes to complete • Sensitivity – how small a variation in the attribute can be detected and measured – use item analysis of tests • Objectivity – two researchers should agree about its measurement • Comprehensibility – subjects can understand what to do with it • Balance – to minimize response sets • Time allowance – adequate time is available for completion • Simplicity