Understanding Algorithm Efficiency and Big O Notation

This article covers core concepts in algorithm analysis: efficiency, algorithm cost, and Big O notation. We define an algorithm as a computable set of steps to achieve a desired result, and explain efficiency in terms of the resources and time an algorithm uses. Asymptotic analysis is introduced as a way to evaluate how an algorithm's cost grows with input size, keeping only the largest order of magnitude. We also look at practical analysis, where running times depend on the machine and the implementation, and list typical Big O values for common algorithms so their efficiencies can be compared.


Presentation Transcript

Some definitions
• Algorithm: A computable set of steps to achieve a desired result.
• Efficiency: The amount of resources used to find an answer.
• Algorithm Cost: How much power, resources and time the algorithm takes to run.

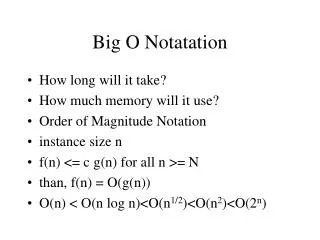

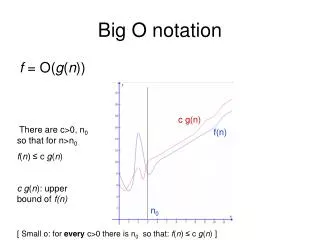

Big O Notation
• A theoretical measure of the cost of executing an algorithm (usually the time or memory needed) as a function of the problem size n, which is usually the number of items in the input

Asymptotic Analysis
• An asymptote is a line that a curve approaches, but never quite touches
• As the input grows, we only care about the largest orders of magnitude

Asymptotic analysis
If we reduce an algorithm's cost equation to its biggest term, dropping lower-order terms and constant factors, we end up with a function representing the "asymptotic bound" of the equation. This kind of analysis is known as asymptotic analysis.
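To make the reduction to the biggest term concrete, here is a minimal sketch. The cost function `f(n) = 3n^2 + 5n + 20` is a made-up example, not one taken from the slides, and `dominant_share` is a hypothetical helper name. It shows that the largest term accounts for nearly all of the cost once n is large, which is why the smaller terms can be dropped.

```python
def f(n):
    # Hypothetical cost function (an assumption for illustration):
    # 3n^2 + 5n + 20 steps for an input of size n.
    return 3 * n**2 + 5 * n + 20


def dominant_share(n):
    # Fraction of the total cost contributed by the largest term, 3n^2.
    return (3 * n**2) / f(n)


for n in (10, 100, 1000, 10000):
    # The share climbs toward 1 as n grows.
    print(n, round(dominant_share(n), 4))
```

Dropping the constant factor 3 as well leaves n^2, so under these assumptions the cost function would be described as O(n^2).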

Practical analysis
• Algorithm 1 runs in 10 seconds on computer 1
• Algorithm 2 runs in 20 seconds on computer 2
• Which is the more efficient algorithm?
• What if they are running on the same machine?
Raw running times alone cannot answer this: they depend on the input size, the implementation and the platform.
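One way around platform dependency is to count basic operations instead of measuring seconds. The sketch below is an illustration, not something from the slides: `linear_search_steps` is a hypothetical helper that counts the comparisons made by a simple left-to-right scan. The count is the same on any machine, and only the input size changes it.

```python
def linear_search_steps(items, target):
    """Return the number of comparisons made by a left-to-right scan."""
    steps = 0
    for item in items:
        steps += 1
        if item == target:
            break
    return steps


for n in (1000, 2000, 4000):
    data = list(range(n))
    # Worst case: the target is the last element, so every item is compared.
    print(n, linear_search_steps(data, n - 1))
```

In the worst case, doubling the input size doubles the step count, whatever computer runs the code.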

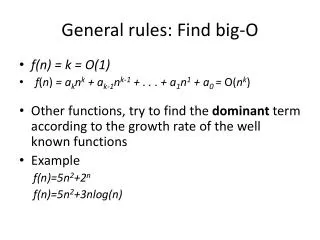

Typical Big O values
• O(1): smart sum
• O(log n): binary search
• O(n): naïve sum
• O(n log n): quick sort (average case)
• O(n^c): insertion sort (c = 2)
• O(c^n): Towers of Hanoi (c = 2)
• O(n!): Travelling salesman (brute force)
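The first three entries can be sketched in a few lines. This is an illustrative sketch under one assumption: "smart sum" is taken to mean the closed-form formula n(n+1)/2 for the sum 1 + 2 + ... + n, and "naïve sum" the loop that adds the numbers one at a time. The function names are hypothetical.

```python
def naive_sum(n):
    # O(n): one addition per integer from 1 to n.
    total = 0
    for i in range(1, n + 1):
        total += i
    return total


def smart_sum(n):
    # O(1): closed form n(n+1)/2 (assumed meaning of "smart sum").
    return n * (n + 1) // 2


def binary_search(sorted_items, target):
    # O(log n): the search range is halved on every iteration.
    # Returns the index of target, or -1 if it is not present.
    lo, hi = 0, len(sorted_items) - 1
    while lo <= hi:
        mid = (lo + hi) // 2
        if sorted_items[mid] == target:
            return mid
        if sorted_items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return -1
```

Both sum functions return the same value for any n >= 0, but only `naive_sum` does more work as n grows. `binary_search` requires its input to be sorted already.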

Bubble Sort
• The outer loop works repeatedly down the list
• When an unsorted pair of adjacent items is found, it is swapped
• Passes repeat until a pass makes no more swaps
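The description above can be sketched as follows. This is a minimal version that assumes ascending order and returns a sorted copy rather than sorting in place; the `swapped` flag is one common way to detect that a pass made no swaps.

```python
def bubble_sort(items):
    """Return a new list with the items in ascending order."""
    data = list(items)  # work on a copy so the caller's list is unchanged
    swapped = True
    while swapped:  # outer loop: repeat passes until one makes no swaps
        swapped = False
        for i in range(len(data) - 1):  # inner loop: walk down the list
            if data[i] > data[i + 1]:
                # Adjacent pair is out of order, so swap it.
                data[i], data[i + 1] = data[i + 1], data[i]
                swapped = True
    return data
```

In the worst case, a list in reverse order, each of roughly n passes makes roughly n comparisons, which puts bubble sort in the O(n^c) group with c = 2.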