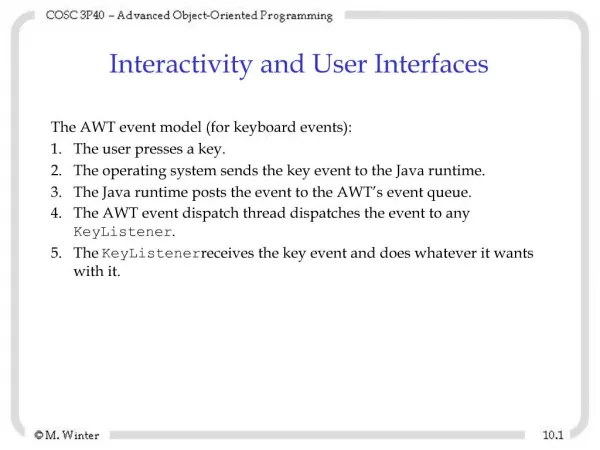

User Interfaces and Algorithms for Fighting Phishing

630 likes | 642 Vues

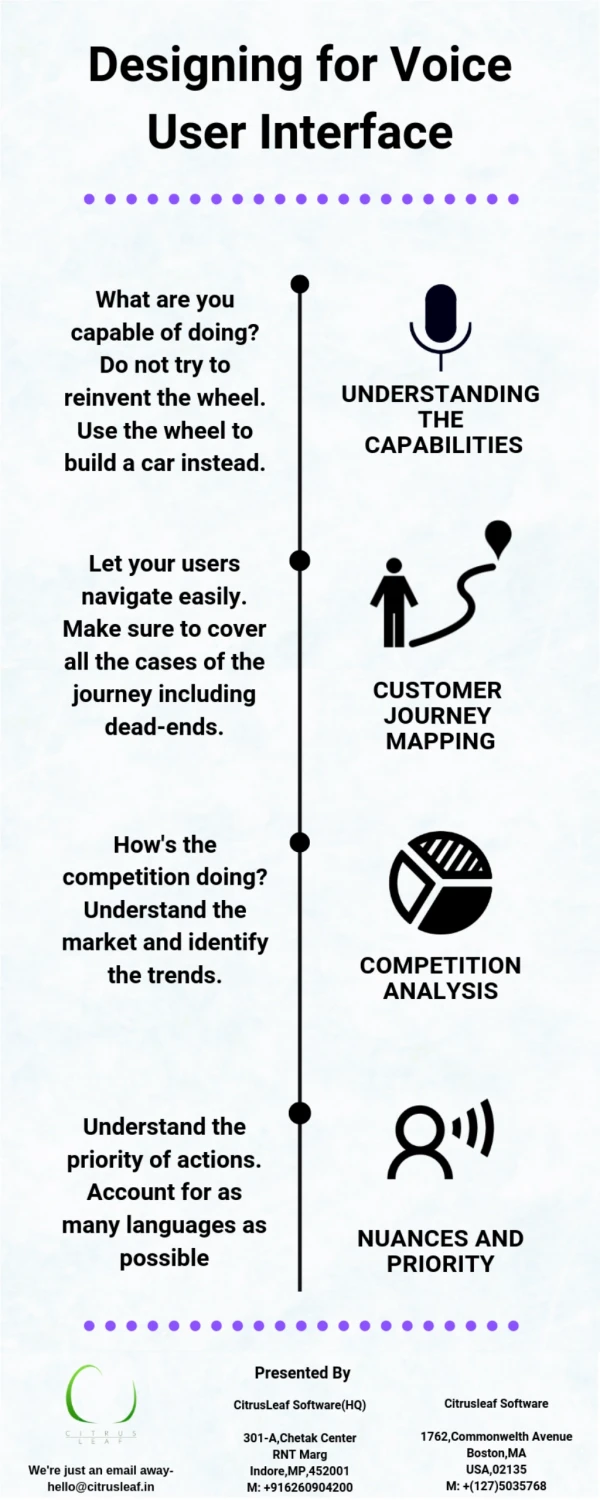

This study focuses on anti-phishing efforts, using a multi-disciplinary approach to help people make better trust decisions and protect themselves against phishing attacks. It includes embedded training and an anti-phishing toolbar.

Presentation Transcript

User Interfaces and Algorithms for Fighting Phishing • Jason I. Hong, Carnegie Mellon University

This entire process is known as phishing

Fast Facts on Phishing • Estimated 3.5 million people have fallen for phishing • Estimated to cost $1-2 billion a year (and growing) • 9255 unique phishing sites reported in June 2006 • Easier (and safer) to phish than rob a bank

Supporting Trust Decisions • Goal: help people make better trust decisions • Focus on anti-phishing • Large multi-disciplinary team project at CMU • Supported by NSF, ARO, CMU CyLab • Six faculty, five PhD students, undergrads, staff • Computer science, human-computer interaction, public policy, social and decision sciences, CERT

Our Multi-Pronged Approach • Human side • Interviews to understand decision-making • Embedded training • Anti-phishing game • Computer side • Email anti-phishing filter • Automated testbed for anti-phishing toolbars • Our anti-phishing toolbar Automate where possible, support where necessary

Interview Study • Interviewed 40 Internet users, including 35 non-experts • “Mental models” interviews included email role play and open-ended questions • Interviews recorded and coded J. Downs, M. Holbrook, and L. Cranor. Decision Strategies and Susceptibility to Phishing. In Proceedings of the 2006 Symposium On Usable Privacy and Security, 12-14 July 2006, Pittsburgh, PA.

Little Knowledge of Phishing • Only about half knew meaning of the term “phishing” “Something to do with the band Phish, I take it.”

Minimal Knowledge of Lock Icon “I think that it means secured, it symbolizes some kind of security, somehow.” • 85% of participants were aware of lock icon • Only 40% of those knew that it was supposed to be in the browser chrome • Only 35% had noticed https, and many of those did not know what it meant

Little Attention Paid to URLs • Only 55% of participants said they had ever noticed an unexpected or strange-looking URL • Most did not consider them to be suspicious

Some Knowledge of Scams • 55% of participants reported being cautious when email asks for sensitive financial info • But very few reported being suspicious of email asking for passwords • Knowledge of financial phish reduced likelihood of falling for these scams • But did not transfer to other scams, such as amazon.com password phish

Naive Evaluation Strategies • The most frequent strategies don’t help much in identifying phish • This email appears to be for me • It’s normal to hear from companies you do business with • Reputable companies will send emails “I will probably give them the information that they asked for. And I would assume that I had already given them that information at some point so I will feel comfortable giving it to them again.”

Other Findings • Web security pop-ups are confusing “Yeah, like the certificate has expired. I don’t actually know what that means.” • Don’t know what encryption means • Summary • People generally not good at identifying scams they haven’t specifically seen before • People don’t use good strategies to protect themselves

Web Site Training Study • Laboratory study of 28 non-expert computer users • Two conditions, both asked to evaluate 20 web sites • Control group evaluated 10 web sites, took 15 minute break to read email or play solitaire, evaluated 10 more web sites • Experimental group same as above, but spent 15 minute break reading web-based training materials • Experimental group performed significantly better identifying phish after training • Less reliance on “professional-looking” designs • Looking at and understanding URLs • Web site asks for too much information People can learn from web-based training materials, if only we could get them to read them!

How Do We Get People Trained? • Most people don’t proactively look for training materials on the web • Many companies send “security notice” emails to their employees and/or customers • But these tend to be ignored • Too much to read • People don’t consider them relevant • People think they already know how to protect themselves

Embedded Training • Can we “train” people during their normal use of email to avoid phishing attacks? • Periodically, people get sent a training email • Training email looks like a phishing attack • If person falls for it, intervention warns and highlights what cues to look for in succinct and engaging format P. Kumaraguru, Y. Rhee, A. Acquisti, L. Cranor, J. Hong, and E. Nunge. Protecting People from Phishing: The Design and Evaluation of an Embedded Training Email System. CyLab Technical Report. CMU-CyLab-06-017, 2006. http://www.cylab.cmu.edu/default.aspx?id=2253 [to be presented at CHI 2007]

Diagram Intervention Explains why they are seeing this message

Diagram Intervention Explains how to identify a phishing scam

Diagram Intervention Explains what a phishing scam is

Diagram Intervention Explains simple things you can do to protect self

Embedded Training Evaluation • Lab study comparing our prototypes to standard security notices • EBay, PayPal notices • Diagram that explains phishing • Comic strip that tells a story • 10 participants in each condition (30 total) • Roughly, go through 19 emails, 4 phishing attacks scattered throughout, 2 training emails too • Emails are in context of working in an office

Embedded Training Results • Existing practice of security notices is ineffective • Diagram intervention somewhat better • Comic strip intervention worked best • Statistically significant • Pilot study showed interventions most effective when based on real brands

Next Steps • Iterate on intervention design • Have already created newer designs, ready for testing • Understand why comic strip worked better • Story? Comic format? • Preparing for larger scale deployment • Include more people • Evaluate retention over time • Deploy outside lab conditions if possible • Real world deployment and evaluation • Need corporate partners to let us spoof their brand

Anti-Phishing Phil • A game to teach people not to fall for phish • Embedded training focuses on email • Game focuses on web browser, URLs • Goals • How to parse URLs • Where to look for URLs • Use search engines instead • Available on our website soon

Outline • Human side • Interviews to understand decision-making • Embedded training • Anti-phishing game • Computer side • Email anti-phishing filter • Automated testbed for anti-phishing toolbars • Our anti-phishing toolbar

How accurate are today’s anti-phishing toolbars?

Some Users Rely on Toolbars • Dozens of anti-phishing toolbars offered • Built into security software suites • Offered by ISPs • Free downloads • Built into latest version of popular web browsers • Previous studies demonstrated usability problems that need further work • But how well do they detect phish?

Testing the Toolbars • April 2006: Manual evaluation of 5 toolbars • Required lots of undergraduate labor over 2-week period • Summer 2006: Created a semi-automated test bed • September 2006: Automated evaluation of 5 toolbars • Used APWG feed as source of phishing URLs • November 2006: Automated evaluation of 10 toolbars • Used phishtank.com as source of phishing URLs • Evaluated 100 phish and 510 legit sites in just 2 days L. Cranor, S. Egelman, J. Hong and Y. Zhang. Phinding Phish: An Evaluation of Anti-Phishing Toolbars. CyLab Technical Report. CMU-CyLab-06-018, 2006. http://www.cylab.cmu.edu/default.aspx?id=2255 [to be presented at NDSS]

Testbed for Anti-Phishing Toolbars • Manual evaluation was tedious, slow, error-prone • Created a testbed that could semi-automatically evaluate these toolbars • Just give it a set of URLs to check (labeled as phish or not) • Checks all the toolbars, aggregates statistics • How to automate this for different toolbars? • Different APIs (if any), different browsers • Image-based approach, take screenshots of web browser and compare relevant portions to known states
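The image-based approach described above can be illustrated with a minimal sketch. It assumes a screenshot is already available as a grid of grayscale pixel values and that each toolbar has a fixed screen region and a few reference images for its known states; the function names, the region box, and the tolerance are all hypothetical and are not taken from the actual testbed.

```python
def crop(image, box):
    """Cut the (left, top, right, bottom) region out of a 2D pixel grid."""
    left, top, right, bottom = box
    return [row[left:right] for row in image[top:bottom]]


def mean_abs_diff(a, b):
    """Average absolute pixel difference between two equally sized grids."""
    total = 0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count if count else float("inf")


def classify_state(screenshot, box, known_states, tolerance=10.0):
    """Return the label of the closest known toolbar state, or "unknown".

    known_states maps a label (for example "warning") to a reference grid
    captured earlier for that state. The tolerance value is an assumption.
    """
    region = crop(screenshot, box)
    best_label, best_diff = "unknown", tolerance
    for label, reference in known_states.items():
        diff = mean_abs_diff(region, reference)
        if diff <= best_diff:
            best_label, best_diff = label, diff
    return best_label
```

Comparing only the toolbar's own region keeps the check independent of the page content, which is what lets one harness cover toolbars with different APIs and browsers.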

Finding Fresh Phish for Test • Need a source with lots of fresh phishing URLs • Can’t use toolbar black lists if we are testing their tools • Sites get taken down within a few days, need phish less than one day old • To observe how fast black lists get updated, the fresher the better • Experimented with several sources • APWG - high volume, but many duplicates and legitimate URLs included • Phishtank.com - lower volume but easier to extract phish • Other phish archives - often low volume or not fresh enough • Choice of feed impacts results

November 2006 Evaluation • Tested 10 toolbars • Microsoft Internet Explorer v7.0.5700.6 • Netscape Navigator v8.1.2 • EarthLink v3.3.44.0 • eBay v2.3.2.0 • McAfee SiteAdvisor v1.7.0.53 • NetCraft v1.7.0 • TrustWatch v3.0.4.0.1.2 • SpoofGuard • Cloudmark v1.0. • Google Toolbar v2.1 (Firefox) • Most use blacklists and simple heuristics • SpoofGuard only one to rely solely on heuristics

November 2006 Evaluation • Test URLs • 100 manually confirmed fresh phish from phishtank.com (reported within 6 hours) • Did not use the fully confirmed ones • 60 legitimate sites linked to by phishing messages • 510 legitimate sites tested by 3Sharp in Sept 2006 report

Results [Chart of toolbar results; annotations: 38% false positives, 1% false positives]

Results • The only toolbar with >90% accuracy has a high false positive rate • Several catch 70-85% of phish with few false positives • After 15 minutes of training, users seem to do as well • Few improvements in catch rates seen over 24 hours • Suggests most toolbars are not taking advantage of available phish feeds to quickly update black lists • Combination of heuristics and frequently updated black list (and white list?) seems to be most promising approach • Plan to repeat study every quarter • Should only consider this a rough ordering • Different sources of phishing URLs lead to different results
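For readers who want the two headline numbers made concrete, here is a small sketch of how a catch rate and a false positive rate could be computed from labeled test URLs. The input format and the function name are assumptions for illustration, and the data in the usage note is made up rather than taken from the study.

```python
def toolbar_rates(results):
    """Compute (catch_rate, false_positive_rate) for one toolbar.

    results is a list of (is_phish, flagged) boolean pairs, one per test URL:
    is_phish is the ground-truth label, flagged is the toolbar's verdict.
    """
    phish_verdicts = [flagged for is_phish, flagged in results if is_phish]
    legit_verdicts = [flagged for is_phish, flagged in results if not is_phish]
    # Catch rate: share of real phish that the toolbar flagged.
    catch_rate = sum(phish_verdicts) / len(phish_verdicts) if phish_verdicts else 0.0
    # False positive rate: share of legitimate sites wrongly flagged.
    false_positive_rate = (
        sum(legit_verdicts) / len(legit_verdicts) if legit_verdicts else 0.0
    )
    return catch_rate, false_positive_rate
```

With a toy input of four phish (three flagged) and four legitimate sites (one flagged), the function returns a catch rate of 0.75 and a false positive rate of 0.25.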

Robust Hyperlinks • Developed by Phelps and Wilensky to solve “404 not found” problem • Key idea was to add a lexical signature to URLs that could be fed to a search engine if URL failed • Ex. http://abc.com/page.html?sig=“word1+word2+...+word5” • How to generate signature? • Found that TF-IDF was fairly effective • Informal evaluation found five words was sufficient for most web pages
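A rough sketch of the signature idea follows. It assumes a small reference corpus is available for the document-frequency counts and uses a smoothed IDF formula; the tokenizer, the smoothing, and the function names are illustrative choices, not the method Phelps and Wilensky specified.

```python
import math
import re
from collections import Counter
from urllib.parse import urlencode


def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def lexical_signature(page_text, corpus, k=5):
    """Return the k terms of page_text with the highest TF-IDF weight."""
    tf = Counter(tokenize(page_text))
    doc_sets = [set(tokenize(doc)) for doc in corpus]
    n_docs = len(doc_sets)

    def idf(term):
        df = sum(1 for words in doc_sets if term in words)
        # Smoothed IDF (an assumption) so unseen terms do not divide by zero.
        return math.log((1 + n_docs) / (1 + df)) + 1.0

    scores = {term: count * idf(term) for term, count in tf.items()}
    # Sort by descending score, breaking ties alphabetically for stability.
    ranked = sorted(scores, key=lambda term: (-scores[term], term))
    return ranked[:k]


def robust_url(url, signature):
    """Append the signature as a sig query parameter, words joined by '+'."""
    return url + "?" + urlencode({"sig": " ".join(signature)})
```

Words that are frequent on the page but rare elsewhere rise to the top, which is why a handful of them is usually enough for a search engine to find the page again.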

Adapting TF-IDF for Anti-Phishing • Can same basic approach be used for anti-phishing? • Scammers often directly copy web pages • With Google search engine, fake should have low page rank [Figure: panels labeled Fake and Real]

Adapting TF-IDF for Anti-Phishing • Rough algorithm • Given a web page, calculate TF-IDF for each word on page • Take five terms with highest TF-IDF weights • Feed these terms into a search engine (Google) • If domain name of current web page is in top N search results, consider it legitimate (N=30 worked well)
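The last two steps of the rough algorithm can be sketched as below. The `search` argument is a stand-in for whatever search engine client is used (no real search API is shown), and matching on the exact host name with a leading "www." stripped is a simplification of "domain name"; both are assumptions made for this example.

```python
from urllib.parse import urlparse


def host_of(url):
    """Return the host name of a URL, without a leading 'www.'."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def looks_legitimate(page_url, signature_terms, search, top_n=30):
    """Check whether the page's own host appears in the top search results.

    search is a hypothetical callable: it takes a query string and returns a
    ranked list of result URLs. top_n=30 follows the N reported on the slide.
    """
    results = search(" ".join(signature_terms))[:top_n]
    page_host = host_of(page_url)
    return any(host_of(result) == page_host for result in results)
```

A copied page served from a scammer's host shares its signature with the original, so the search returns the real site and the fake host is absent from the results.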

Evaluation #1 • 100 phishing URLs from PhishTank.com • 100 legitimate URLs from 3Sharp’s study

Discussion of Evaluation #1 • Very good results (97%), but false positives (10%) • Added several heuristics to reduce false positives • Many of these heuristics used by other toolbars • Age of domain • Known images • Suspicious URLs (has @ or -) • Suspicious links (see above) • IP Address in URL • Dots in URL (>= 5 dots) • Page contains text entry field • TF-IDF • Used simple forward linear model to weight these
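Three of the URL-based heuristics listed above are easy to show in code. The sketch below combines them with a plain weighted sum; the weights are invented for illustration, since the slide does not give the fitted values or the details of the linear model, and the feature names are hypothetical.

```python
import ipaddress
from urllib.parse import urlparse


def url_features(url):
    """Evaluate three URL heuristics from the list; each is True when it fires."""
    host = urlparse(url).hostname or ""
    try:
        ipaddress.ip_address(host)
        host_is_ip = True
    except ValueError:
        host_is_ip = False
    return {
        "suspicious_chars": "@" in url or "-" in url,  # URL has @ or -
        "ip_in_url": host_is_ip,                       # IP address as host
        "many_dots": url.count(".") >= 5,              # five or more dots
    }


# Hypothetical weights, chosen only to make the example concrete.
EXAMPLE_WEIGHTS = {"suspicious_chars": 0.25, "ip_in_url": 0.5, "many_dots": 0.25}


def phish_score(url, weights=EXAMPLE_WEIGHTS):
    """Sum the weights of the heuristics that fire for this URL."""
    features = url_features(url)
    return sum(weights[name] for name, fired in features.items() if fired)
```

A URL such as `http://www.paypal.com@10.0.0.1/login` triggers all three checks, because everything before the `@` is treated as user information and the real host is the IP address.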

Evaluation #2 • Compared to SpoofGuard and NetCraft • SpoofGuard uses all heuristics • NetCraft 1.7.0 uses heuristics (?) and extensive blacklist • 100 phishing URLs from PhishTank.com • 100 legitimate URLs • Sites often attacked (Citibank, PayPal) • Top pages from Alexa (most popular sites) • Random web pages from random.yahoo.com