CHAPTER 6 : ADVERSARIAL SEARCH

CHAPTER 6 : ADVERSARIAL SEARCH . GAMZE EROL. Introduct I on. Multi agent environments , any given agent will need to consider the actions of other agents and how they affect its own welfare . The unpredictability of these other agents can introduce many possible contingencies

CHAPTER 6 : ADVERSARIAL SEARCH

E N D

Presentation Transcript

CHAPTER 6 :ADVERSARIAL SEARCH GAMZE EROL

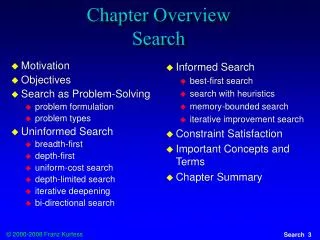

IntroductIon • Multi agent environments , any given agent will need to consider the actions of other agents and how they affect its own welfare. • The unpredictability of these other agents can introduce many possible contingencies • There could be competitive or cooperativeenvironments • Competitive environments, in which the agent’s goals are in conflict require adversarial search,these problems are called as games. (search problem) • Idealization and simplification: • Two players • Alternate moves • MAX player • MIN player • Available information: • Perfect: chess, chequers, tic-tac-toe… (no chance, same knowledge for the two players) • Imperfect: poker(chance, think the others actions.)

Propertıesof Games • Game Theorists • Deterministic, turn-taking, two-player, zero-sum games of perfect information • AI • Deterministic • Fully-observable • Two agents whose actions must alternate • Utility values at the end of the game are equal and opposite • In chess, one player wins (+1), one player loses (-1) • It is this opposition between the agents’ utility functions that makes the situation adversarial

Game representatIon • In the general case of a game with two players: • General state representation • Initial-state definition • Winning-state representation as: • Structure • Properties • Utility function • Definition of a set of operators

Game Tree • Root node represents the configuration of the board at which a decision must be made • Root is labeled a "MAX" node indicating it is my turn; otherwise it is labeled a "MIN" (your turn) • Each level of the tree has nodes that are all MAX or all MIN

Search wIth an opponent Trivial approximation: generating the tree for allmoves Terminal moves are tagged with a utility value, for example: “+1” or “-1” depending on if the winner is MAX or MIN. The goal is to find a path to a winning state. Even if a depth-first search would minimize memory space, in complex games this kind of search cannot be carried out. Even a simple game like tic-tac-toe is too complex to draw the entire game tree.

Search wIth an opponent • Heuristic approximation: defining an evaluation function which indicates how close a state is from a winning (or losing) move • single evaluation function to describe the goodness of a board with respect to BOTHplayers. • Example of an Evaluation Function for Tic-Tac-Toe:f(n) = [number of 3-lengths open for first player] - [number of 3-lengths open for second player] where a 3-length is a complete row, column, or diagonal. • Adversary method • A winning move is represented by the value“+∞”. • A losing move is represented by the value “-∞”. • The algorithm searches with limited depth. • Each new decision implies repeating part of the search.

Example: mınmax e (evaluation function → integer) = number of available rows, columns, diagonals for MAX - number of available rows, columns, diagonals for MIN MAX plays with “X” and desires maximizing e. MIN plays with “0” and desires minimizing e.

Max’s turn Would like the “9” points (the maximum) But if choose left branch, Min will choose move to get 3 left branch has a value of 3 If choose right, Min can choose any one of 5, 6 or 7 (will choose 5, the minimum) right branch has a value of 5 Right branch is largest (the maximum) so choose that move Max 5 3 4 5 Min 3 9 4 5 6 7 Max Example: mınmax

Max’s turn Circles represent Max, Squares represent Min Values inside represent the value the MinMax algorithm Red arrows represent the chosen move Numbers on left represent tree depth Blue arrow is the chosen move MInMax – Second Example Max Min Max Min

SearchIng Game Trees usIng the MInImax AlgorIthm Create start node as a MAX node (since it's my turn to move) with current board configuration, Expand nodes down to some depth (i.e., ply) of lookahead in the game, Apply the evaluation function at each of the leaf nodes, "Back up" values for each of the non-leaf nodes until a value is computed for the root node. At MIN nodes, the backed up value is the minimum of the values associated with its children. At MAX nodes, the backed up value is the maximum of the values associated with its children, Pick the operator associated with the child node whose backed up value determined the value at the root.

The mInImax algorIthm minimax(player,board) if(game over in current board position) return winner children = all legal moves for player from this board if(max's turn) return maximal score of calling minimax on all the children else (min's turn) return minimal score of calling minimax on all the children

The mInImax algorIthm 14 The algorithm first recurses down to the tree bottom-left nodes and uses the Utility function on them to discover that their values are 3, 12 and 8.

The mInImax algorIthm A B 15 Then it takes the minimum of these values, 3, and returns it as the backed-up value of node B. Similar process for the other nodes.

The mInImax algorIthm 16 • If the maximum depth of the tree is m, and there are b legal moves at each point, then the time complexity is O(bm). • The space complexity is: • O(bm) for an algorithm that generates all successors at once • O(m) if it generates successors one at a time. • Problem with minimax search: • The number of game states it has to examine is exponential in the number of moves. • Unfortunately, the exponent can’t be eliminated, but it can be cut in half.

Alpha-beta prunIng 17 • It is possible to compute the correct minimax decision without looking at every node in the game tree. • Alpha-beta pruning allows to eliminate large parts of the tree from consideration, without influencing the final decision. • Alpha-beta pruning gets its name from two parameters. • They describe bounds on the values that appear anywhere along the path under consideration: • α = the value of the best (i.e., highest value) choice found so far along the path for MAX • β = the value of the best (i.e., lowest value) choice found so far along the path for MIN

Alpha-beta prunIng B 18 • The leaves below B have the values 3, 12 and 8. • The value of B is exactly 3. • It can be inferred that the value at the root is at least 3, because MAX has a choice worth 3.

Alpha-beta prunIng B C 19 • C, which is a MIN node, has a value of at most 2. • But B is worth 3, so MAX would never choose C. • Therefore, there is no point in looking at the other successors of C.

Alpha-beta prunIng B C D 20 D, which is a MIN node, is worth at most 14. This is still higher than MAX’s best alternative (i.e., 3), so D’s other successors are explored.

Alpha-beta prunIng B C D 21 The second successor of D is worth 5, so the exploration continues.

Alpha-beta prunIng B C D 22 • The third successor is worth 2, so now D is worth exactly 2. • MAX’s decision at the root is to move to B, giving a value of 3

Alpha-beta prunIng 23 • Alpha-beta search updates the values of α and β as it goes along. • It prunes the remaining branches at a node (i.e., terminates the recursive call) • as soon as the value of the current node is known to be worse than the current α or β value for MAX or MIN, respectively.

The alpha-beta search algorIthm evaluate (node, alpha, beta) if node is a leaf return the heuristic value of node if node is a minimizing node for each child of node beta = min (beta, evaluate (child, alpha, beta)) if beta <= alpha return beta if node is a maximizing node for each child of node alpha = max (alpha, evaluate (child, alpha, beta)) if beta <= alpha return alpha return alpha

FInal comments about alpha-betaprunIng 25 • Pruning does not affect final results. • Entire subtrees can be pruned, not just leaves. • Good move ordering improves effectiveness of pruning. • With perfect ordering, time complexity is O(bm/2). • Effective branching factor of sqrt(b) • Consequence: alpha-beta pruning can look twice as deep as minimax in the same amount of time.

MInMax– AlphaBetaPrunIngExample • From Max point of view, 1 is already lower than 4 or 5, so no need to evaluate 2 and 3 (bottom right) Prune

MInMax – AlphaBetaPrunIng Idea • Two scores passed around in search • Alpha – best score by some means • Anything less than this is no use (can be pruned) since we can already get alpha • Minimum score Max will get • Initially, negative infinity • Beta – worst-case scenario for opponent • Anything higher than this won’t be used by opponent • Maximum score Min will get • Initially, infinity • Recursion progresses, the "window" of Alpha-Beta becomes smaller • Beta < Alpha current position not result of best play and can be pruned

Games wIth Imperfect InformatIon • Minimax and alpha-beta pruning require too much leaf-node evaluations. • May be impractical within a reasonable amount of time. • SHANNON (1950): • Cut off search earlier (replace TERMINAL-TEST by CUTOFF-TEST) • Apply heuristic evaluation function EVAL (replacing utility function of alpha-beta)

CuttIng-off Search • Change: • if TERMINAL-TEST(state) then return UTILITY(state) into • if CUTOFF-TEST(state,depth) then return EVAL(state) • Introduces a fixed-depth limit depth • Is selected so that the amount of time will not exceed what the rules of the game allow. • When cuttoff occurs, the evaluation is performedinstead of utility. • Cut off location is important. The problem with abruptly stopping a search at a fixed depth is called the horizon effect.

HeurIstIc EVAL • Evaluation function ; An evaluation function returns an estimate of the expected utility of the game from a given position. • The heuristic functions of return an estimate of the distance to the goal. It is excepted as a linear function. • Eval(s) = w1 f1(s) + w2 f2(s) + … + wnfn(s)

HeurIstIc EVAL • Idea: produce an estimate of the expected utility of the game from a given position. • Performance depends on quality of EVAL. • Requirements: • Computation may not take too long. • For non-terminal states the EVAL should be strongly correlated with the actual chance of winning.

STOCHASTIC GAMES • Many games including a random element, such as the throwing of dice. It is called stochastic games. • In stochastic game we have 4 node; terminal, min, max and chance node. • Backgammon is a typical game that combines luck and skill. Dice are rolled at the beginning of a player’s turn to determine the legal moves. • Schematic tree of the backgammon.

DETERMINISTIC GAME IN PRACTICE • Checkers : Chinook ended 40-year-reign of human world champion Marion Tinsley in 1994. Used a precomputed endgame database defining perfect play for all positions involving 8 or fewer pieces on the board, a total of 444 billion positions. Checkers is now solved. • Chess: Deep Blue defeated human world champion Garry Kasparov in a six-game match in 1997. Deep Blue searches 200 million positions per second, uses very sophisticated evaluation, and undisclosed methods for extending some lines of search up to 40 ply. Current programs are even better, if less historic. • Othello: human champions refuse to compete against computers, who are too good. • Go: human champions refuse to compete against computers, who are too bad. In Go, b > 300, so most programs use pattern knowledge bases to suggest plausible moves, along with aggressive pruning.

SUMMARY • All the game start with defining by the initial state (how the board is set up), the legal actions in each state, the result of each action, a terminal test (which says when the game is over), and a utility function that applies to terminal states. • In two-player zero-sum games which has perfect information, the minimax algorithm can select optimal moves by a depth-first enumeration of the game tree. • The alpha–beta search algorithm computes the same as minimax, but achieves much greater efficiency by eliminating subtrees that are provably irrelevant. • Usually, it is not feasible to consider the whole game tree (even with alpha–beta) need to cut the search off at some point and apply a heuristic evaluation function that estimates the utility of a state. • Games of chance can be handled by an extension to the minimax algorithm that evaluates. a chance node by taking the average utility of all its children, weighted by the probability of each child.