MM9 Speech Communication


MM9 Speech Communication • MM8 summary • Brush-up • Conclusions (what you hopefully learned!) • MM9 • Standard Speech API • Hello World

From MM7: Types of Speech Recognisers • “rule grammar recognition” = “command & control recognition” • “dictation” = “large vocabulary recognition” • other types (e.g. “Speech Commands” on mobile phones, DTW (dynamic time warping))

Exercise with Dictation • Dictation is not “general recognition” • It depends on the “topic” of the text data used for language-model (LM) training • E.g. ViaVoice performs better for dictation of business letters than for dictation of fairy tales! • Dictation performs better after adaptation to the user • It is not 100% speaker-independent!

Exercise with the calculator • Speech recognition is not the same as speech understanding! • Understanding requires • Parsing • Context analysis
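To make the recognition/understanding distinction concrete, here is a minimal Python sketch of what a calculator application still has to do after the recogniser has returned a word string. The vocabulary (number words zero to ten; plus, minus, times) and the function name `understand` are hypothetical, not taken from the exercise. Parsing applies the usual precedence of times over plus/minus, and context analysis is reduced to one case: an utterance that starts with an operator continues the previous result.

```python
# Hypothetical calculator vocabulary (an assumption, not from the exercise)
NUMBERS = {w: i for i, w in enumerate(
    "zero one two three four five six seven eight nine ten".split())}
OPERATORS = ("plus", "minus", "times")


def understand(utterance, previous=None):
    """Turn a recognised word string into a value: parsing + simple context analysis."""
    tokens = utterance.lower().split()
    if not tokens:
        raise ValueError("empty utterance")
    # Context analysis: "plus two" only makes sense relative to the previous result
    if tokens[0] in OPERATORS:
        if previous is None:
            raise ValueError("no previous result to refer to")
        operands, rest = [previous], tokens
    else:
        operands, rest = [NUMBERS[tokens[0]]], tokens[1:]
    if len(rest) % 2:
        raise ValueError("expected alternating operators and numbers")
    ops = rest[0::2]
    if any(op not in OPERATORS for op in ops):
        raise ValueError("unknown operator")
    operands += [NUMBERS[w] for w in rest[1::2]]

    # Parsing with precedence: 'times' binds tighter than 'plus'/'minus'
    terms, signs = [operands[0]], [1]
    for op, value in zip(ops, operands[1:]):
        if op == "times":
            terms[-1] *= value
        else:
            terms.append(value)
            signs.append(1 if op == "plus" else -1)
    return sum(s * t for s, t in zip(signs, terms))
```

For example, `understand("two plus three times four")` gives 14, and a follow-up `understand("plus two", previous=14)` gives 16: the recogniser delivers the same kind of word string in both cases, but only the second needs the dialogue context to be understood.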

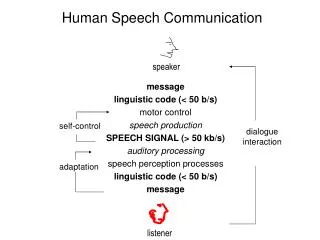

Dialogue System (text), from James Allen: Natural Language Understanding, 1995 • [figure labels: Synthesis, Recognition, Grammar & lexicon, Acoustic models]

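Exercise JHVite 10 • Test set (Danish sentences): • dansk advokat har afsløret afdelingsingeniør • dansk advokat har afsløret afhængig afdelingsingeniør • almindelig dansk advokat har afsløret afdelingsingeniør • afhængig afdelingsingeniør angrede • begejstret advokat dominerer • advokat afviser begejstret afdelingsingeniør • advokat angrede • dansk advokat angrede • advokat afviser en begejstret afdelingsingeniør • Glossary: dansk = Danish, afhængig = dependent, almindelig = ordinary, begejstret = enthusiastic, advokat = lawyer, afdelingsingeniør = department engineer, har afsløret = has exposed, afviser = rejects, angrede = regretted, dominerer = dominates, en = a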

Exercise JHVite 10 • Grammar ([ ] = optional, { } = zero or more repetitions):
    $adjektiv = dansk | afhængig | begejstret | almindelig;
    $substantiv = advokat | afdelingsingeniør;
    $transverb = afviser | har afsløret;
    $intransverb = angrede | dominerer;
    $det = en | den;
    $np = [$det] {$adjektiv} $substantiv;
    $vp = ($transverb [$np]) | $intransverb;
    $s = $np $vp;
    ($s)
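One way to check this grammar against the test sentences without running JHVite is to translate each variable into a regular expression ([ ] becomes an optional group, { } a starred group) and test whether every sentence is accepted. This is a minimal Python sketch of that idea, not JHVite's own grammar compiler; the names `alt`, `NP`, `VP`, `S` and `accepts` are illustrative.

```python
import re


def alt(words):
    """Regex alternative for a '|'-separated word list in the grammar."""
    return "(?:" + "|".join(re.escape(w) for w in words) + ")"


ADJEKTIV = alt(["dansk", "afhængig", "begejstret", "almindelig"])
SUBSTANTIV = alt(["advokat", "afdelingsingeniør"])
TRANSVERB = alt(["afviser", "har afsløret"])
INTRANSVERB = alt(["angrede", "dominerer"])
DET = alt(["en", "den"])

# $np = [$det] {$adjektiv} $substantiv;
NP = f"(?:{DET} )?(?:{ADJEKTIV} )*{SUBSTANTIV}"
# $vp = ($transverb [$np]) | $intransverb;
VP = f"(?:{TRANSVERB}(?: {NP})?|{INTRANSVERB})"
# $s = $np $vp;   main expression: ($s)
S = re.compile(f"{NP} {VP}")


def accepts(sentence):
    """True if the whitespace-normalised sentence is generated by ($s)."""
    return S.fullmatch(" ".join(sentence.split())) is not None


TEST_SET = [
    "dansk advokat har afsløret afdelingsingeniør",
    "dansk advokat har afsløret afhængig afdelingsingeniør",
    "almindelig dansk advokat har afsløret afdelingsingeniør",
    "afhængig afdelingsingeniør angrede",
    "begejstret advokat dominerer",
    "advokat afviser begejstret afdelingsingeniør",
    "advokat angrede",
    "dansk advokat angrede",
    "advokat afviser en begejstret afdelingsingeniør",
]
```

`all(accepts(s) for s in TEST_SET)` answers the coverage question; a string such as "advokat angrede advokat" is rejected because an intransitive verb takes no object.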

Exercise JHVite 10 • Use variables that correspond to normal grammatical categories (noun, verb, subject, predicate etc.) • Test the grammar • Does it accept all sentences of a test set? • Does it only generate sentences that are likely to be input to the system?
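For the last question it helps to enumerate what the grammar actually generates. The sketch below expands the exercise grammar in Python, bounding the { } repetition at `max_adj` adjectives (an assumption made only so the set stays finite; the grammar itself allows any number). Reading through the output exposes overgeneration such as “den dansk advokat angrede”, where Danish requires the definite adjective form “danske”, or repeated adjectives such as “dansk dansk advokat”.

```python
from itertools import product

ADJEKTIV = ["dansk", "afhængig", "begejstret", "almindelig"]
SUBSTANTIV = ["advokat", "afdelingsingeniør"]
TRANSVERB = ["afviser", "har afsløret"]
INTRANSVERB = ["angrede", "dominerer"]
DET = ["en", "den"]


def noun_phrases(max_adj):
    """$np = [$det] {$adjektiv} $substantiv, with at most max_adj adjectives."""
    for det in [[]] + [[d] for d in DET]:
        for n in range(max_adj + 1):
            for adjs in product(ADJEKTIV, repeat=n):
                for noun in SUBSTANTIV:
                    yield " ".join(det + list(adjs) + [noun])


def verb_phrases(max_adj):
    """$vp = ($transverb [$np]) | $intransverb."""
    nps = list(noun_phrases(max_adj))
    for verb in TRANSVERB:
        yield verb
        for np in nps:
            yield f"{verb} {np}"
    yield from INTRANSVERB


def sentences(max_adj=1):
    """$s = $np $vp."""
    vps = list(verb_phrases(max_adj))
    for np in noun_phrases(max_adj):
        for vp in vps:
            yield f"{np} {vp}"
```

With at most one adjective per noun phrase there are 30 noun phrases and 64 verb phrases, so the grammar already generates 1920 sentences, far more than the nine in the test set.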

WHAT IS A SPEECH API? • (Conservative) state-of-the-art speech technology: dictation speech recognition, command and control speech recognition, speech synthesis • SPEECH API (mark-up language, grammar) • SPEECH APPLICATION, e.g. a spoken language dialogue system

SAPI • Microsoft + vendors (IBM etc.) • Cross-vendor API • Platform: Win32 systems (NT, 2000, XP) • COM interface, MS Visual C++ 4.0 and other MS products • SAPI-compliant speech products: • MS Whisper (free!) + “any” modern speech recogniser/synthesiser for Windows

JSAPI • Sun Microsystems + vendors (Apple Computer, Inc., AT&T, Dragon Systems, IBM, Novell, Inc., Philips, Texas Instruments Incorporated) • Cross-vendor API • Cross-platform API • Java • JSAPI-compliant speech products: • ViaVoice for Linux (was free!) and Win32 systems, various speech synthesis systems

JSAPI packages • three packages (collections of classes and interfaces): javax.speech, javax.speech.synthesis, javax.speech.recognition • standard extension to the Java platform (the “x” in “javax”) • Personal Java, Embedded Java

javax.speech • centralized mechanism for • a) registering new speech engines, and • b) selecting available speech engines (from an application) • a locale defines the supported language (e.g. de_CH = Swiss German) • additional features define • names of speakers who have trained the recognizer, • available synthetic voices • pausing/resuming, notification of events, etc.
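The register/select idea can be sketched in a few lines. This is a hypothetical Python model in the spirit of JSAPI's central engine registry, not the JSAPI classes themselves: `EngineDesc`, `EngineRegistry`, their fields and the engine names in the usage example are all invented for illustration. A requirement leaves a field as `None` when the application does not care about it.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass(frozen=True)
class EngineDesc:
    """Hypothetical engine description; None means 'any value' in a requirement."""
    name: Optional[str] = None
    kind: Optional[str] = None     # "recognizer" or "synthesizer"
    locale: Optional[str] = None   # e.g. "de_CH" = Swiss German

    def matches(self, required: "EngineDesc") -> bool:
        # Every field that the requirement sets must equal this engine's value
        return all(
            getattr(required, f.name) is None
            or getattr(required, f.name) == getattr(self, f.name)
            for f in fields(self)
        )


class EngineRegistry:
    """Hypothetical central registry (not the real javax.speech.Central)."""

    def __init__(self) -> None:
        self._engines: List[EngineDesc] = []

    def register(self, desc: EngineDesc) -> None:
        # a) registering a new speech engine
        self._engines.append(desc)

    def available(self, required: EngineDesc = EngineDesc()) -> List[EngineDesc]:
        # b) selecting the engines that satisfy an application's requirements
        return [d for d in self._engines if d.matches(required)]
```

Usage: after registering `EngineDesc("engine-a", "recognizer", "en_US")` and `EngineDesc("engine-b", "synthesizer", "de_CH")`, the call `available(EngineDesc(kind="synthesizer", locale="de_CH"))` returns only engine-b, while `available()` with no requirement returns both.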

javax.speech.synthesis • Interface javax.speech.synthesis.Synthesizer, method speakPlainText • argument: plain orthographic text • Interface javax.speech.synthesis.Synthesizer, method speak • argument: JSML text, e.g. <PARA>Message from <EMP>John Doe</EMP> regarding <BREAK/> <PROS RATE="-20%">magazine article</PROS>.</PARA>
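JSML is XML mark-up around ordinary text, which is the practical difference between the two arguments. As a hedged illustration (plain Python with the standard-library XML parser, not a JSAPI call), the sketch below parses the JSML example, recovers the plain text that would be the corresponding plain-text input, and lists the mark-up that only the JSML variant carries. The function names are hypothetical.

```python
import xml.etree.ElementTree as ET

JSML = ('<PARA>Message from <EMP>John Doe</EMP> regarding <BREAK/> '
        '<PROS RATE="-20%">magazine article</PROS>.</PARA>')


def plain_text(jsml: str) -> str:
    """Strip the JSML mark-up and normalise whitespace, keeping only the words."""
    root = ET.fromstring(jsml)
    return " ".join("".join(root.itertext()).split())


def markup(jsml: str):
    """List the mark-up elements inside the outer element, with their attributes."""
    root = ET.fromstring(jsml)
    return [(el.tag, dict(el.attrib)) for el in root.iter() if el is not root]
```

Here `plain_text(JSML)` yields "Message from John Doe regarding magazine article." and `markup(JSML)` yields the emphasis, the break and the 20% slower prosody, i.e. exactly the information lost when only plain text is passed.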

javax.speech.recognition • Interfaces javax.speech.recognition.Result and javax.speech.recognition.FinalRuleResult • 1-best list / n-best list; for each item in the list: • list of tokens (“words”) • list of tags • name of the JSGF grammar accepting the input • name of the public rule accepting the input
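The information an n-best result carries can be pictured as a simple record per hypothesis. This Python sketch is a stand-in for that data, not the JSAPI result interfaces (whose accessor methods are not reproduced here); `Hypothesis`, `best_for` and the grammar names in `NBEST` are hypothetical. Because the list is ordered best-first, an application looking for input to one particular public rule can take the first hypothesis that rule accepted.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Hypothesis:
    """One n-best item, holding the four pieces of information listed on the slide."""
    tokens: List[str]   # recognised tokens ("words")
    tags: List[str]     # tags of the matched JSGF rule
    grammar: str        # name of the JSGF grammar accepting the input
    rule: str           # name of the public rule accepting the input


def best_for(nbest: List[Hypothesis], grammar: str, rule: str) -> Optional[Hypothesis]:
    """First (= best-ranked) hypothesis accepted by the given grammar and public rule."""
    for hypothesis in nbest:
        if hypothesis.grammar == grammar and hypothesis.rule == rule:
            return hypothesis
    return None


# Hypothetical 2-best list; grammar names are invented for illustration
NBEST = [
    Hypothesis(["australia"], ["Oz"], "example.travel", "country"),
    Hypothesis(["good", "morning"], ["hi"], "example.greetings", "greeting"),
]
```

An application would typically act on `best_for(NBEST, "example.greetings", "greeting").tags` rather than on the raw tokens.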

Java Speech Grammar Format (JSGF) • EBNF-equivalent “traditional style” (like SAPI's CFG format), plus: • Java-adapted style (e.g. grammar URLs!) • “semantic tags” (synonymy, multilinguality) • weights (enabling n-gram statistics) • unification-grammar-like “action tags” (Sun Microsystems proposal)

JSGF: Java-adapted style • JSGF header: grammar name and imports, e.g. grammar dk.mydomain.emailapplication.mailBrowser; import <dk.mydomain.ReusableGrammars.date>; • documentation comments /** ... */ • public rules vs. non-public (“private”) rules, e.g. public <s> = <np> <vp>; <np> = <det> <n>; <n> = man | woman | bird;
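To see how the header and the public/private distinction look to a program, here is a minimal regex-based Python sketch that reads the grammar name, the imports and the rule definitions. It is not a full JSGF parser (no quoted tokens, tags, weights or rule resolution); the `#JSGF V1.0;` line and the documentation comment are added to the slide's example, and `parse_jsgf` is a hypothetical name. Note that `<det>` and `<vp>` are referenced but not defined, as on the slide; a complete JSGF processor would need them.

```python
import re

# Slide example plus a "#JSGF V1.0;" header line and a documentation comment
GRAMMAR = """
#JSGF V1.0;
grammar dk.mydomain.emailapplication.mailBrowser;
import <dk.mydomain.ReusableGrammars.date>;

/** Top-level sentence rule. */
public <s> = <np> <vp>;
<np> = <det> <n>;
<n> = man | woman | bird;
"""

RULE = re.compile(r"(public\s+)?<([\w.]+)>\s*=\s*([^;]*);")


def parse_jsgf(text):
    """Return (grammar name, imports, rules); each rule records visibility and body."""
    text = re.sub(r"/\*.*?\*/", "", text, flags=re.S)   # drop /* */ and /** */ comments
    header = re.search(r"^\s*grammar\s+([\w.]+)\s*;", text, re.M)
    imports = re.findall(r"^\s*import\s+<([\w.*]+)>\s*;", text, re.M)
    rules = {
        m.group(2): {"public": m.group(1) is not None, "body": m.group(3).strip()}
        for m in RULE.finditer(text)
    }
    return (header.group(1) if header else None), imports, rules
```

Only `<s>` comes out as public, so only it could be imported by another grammar or activated for recognition; `<np>` and `<n>` are local helpers.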

JSGF Tags • handling synonymy: <country> = Australia {Oz} | (United States) {USA} | America {USA} | (U S of A) {USA}; • handling multilinguality (one <greeting> rule per language-specific grammar, all returning the same tag): <greeting> = (howdy | good morning) {hi}; <greeting> = (ohayo | ohayogozaimasu) {hi}; <greeting> = (guten tag) {hi}; <greeting> = (bon jour) {hi};
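The benefit of tags is that the application reacts to the tag, not to the words that were spoken. This Python sketch copies the slide's rules into lookup tables (token sequence to tag) and shows that every synonym and every language variant reduces to one tag. The table layout, the language keys and `tag_of` are assumptions made for illustration.

```python
# Token sequence -> tag, copied from the <country> rule on the slide
COUNTRY = {
    "australia": "Oz",
    "united states": "USA",
    "america": "USA",
    "u s of a": "USA",
}

# One <greeting> rule per language-specific grammar; all return the tag "hi"
GREETING = {
    "english": {"howdy": "hi", "good morning": "hi"},
    "japanese": {"ohayo": "hi", "ohayogozaimasu": "hi"},
    "german": {"guten tag": "hi"},
    "french": {"bon jour": "hi"},
}


def tag_of(rule_table, utterance):
    """Return the tag of the alternative that matched, or None if nothing matched."""
    return rule_table.get(" ".join(utterance.lower().split()))
```

So `tag_of(COUNTRY, "U S of A")` and `tag_of(COUNTRY, "America")` both give "USA", and the dialogue logic only needs one branch for `"hi"`, whatever language the greeting was in.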

JSGF Weights • probabilistic grammars (e.g. bigrams, trigrams) in JSGF: <size> = /10/ small | /2/ medium | /1/ large; • the weights are relative: dividing each by their sum (10 + 2 + 1 = 13) gives the probabilities small = 10/13, medium = 2/13, large = 1/13
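The conversion from relative weights to probabilities is a single normalisation step. A minimal Python sketch, using exact fractions so the result can be compared directly with 10/13, 2/13 and 1/13; `normalize` is a hypothetical helper, not part of any JSGF tool.

```python
from fractions import Fraction


def normalize(weights):
    """Turn relative JSGF-style weights into probabilities that sum to 1."""
    exact = {alt: Fraction(w) for alt, w in weights.items()}
    total = sum(exact.values())
    return {alt: w / total for alt, w in exact.items()}


SIZE = normalize({"small": 10, "medium": 2, "large": 1})
```

Because only the ratios matter, `/20/ small | /4/ medium | /2/ large` would describe exactly the same distribution.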

JSGF Action Tags (proposal) • Unification-grammar-like percolation mechanism, but no structure sharing or feature constraints, e.g.
    <_juliek> = (julie | "julie kay") {
        cat = properNoun;   // The word is a proper noun.
        email = juliek;     // User's e-mail ID.
        date = permanent;   // Indicates permanent entry in address book.
    };
    <person> = ((<_rickc> | <_juliek> | <_sadams>){$user}) { this = $user; };
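The percolation step can be imitated with plain dictionaries: the matched sub-rule computes a feature set, `{$user}` names it, and `this = $user` passes it up as the value of `<person>`. This Python sketch is an interpretation of the proposal, not its implementation; only `<_juliek>` is modelled because the slide does not show the bodies of `<_rickc>` and `<_sadams>`, and `person` is a hypothetical function name. The features are copied rather than shared, in line with the “no structure sharing” remark.

```python
# Features set by the action tag of <_juliek> on the slide
_JULIEK = {"cat": "properNoun", "email": "juliek", "date": "permanent"}

# <_juliek> = (julie | "julie kay") { ... };
# <_rickc> and <_sadams> are not shown on the slide and are omitted here
LEXICAL_RULES = {
    "_juliek": (("julie", "julie kay"), _JULIEK),
}


def person(utterance):
    """<person> = ((<_rickc> | <_juliek> | <_sadams>){$user}) { this = $user; };"""
    words = " ".join(utterance.lower().split())
    for phrases, features in LEXICAL_RULES.values():
        if words in phrases:
            user = dict(features)  # $user: a copy of the matched sub-rule's value
            this = user            # this = $user; the value percolates up to <person>
            return this
    return None
```

Saying either “julie” or “Julie Kay” gives the same feature set, so the e-mail application can read `person(...)["email"]` without knowing which alternative was spoken.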

N-grams • Sentence: $S = w_1 w_2 \dots w_Q$ • Ideal sentence probability:
$$P(S) = P(w_1 w_2 \dots w_Q) = P(w_1)\,P(w_2 \mid w_1)\,P(w_3 \mid w_1 w_2) \cdots P(w_Q \mid w_1 w_2 \dots w_{Q-1})$$
• Approximate conditional word probability: $P(w_Q \mid w_1 w_2 \dots w_{Q-1}) \approx P(w_Q \mid w_{Q-N+1} \dots w_{Q-1})$, where $N$ is a fixed “window” size: • Unigram ($N=1$), Bigram ($N=2$), Trigram ($N=3$)
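The chain rule with a fixed window translates directly into counting. This Python sketch estimates each factor as a relative frequency (maximum likelihood) from a toy corpus; the corpus, the sentence-boundary markers `<s>` and `</s>` and the function names are assumptions made for illustration. Exact fractions make the product easy to check by hand.

```python
from collections import Counter
from fractions import Fraction


def train(sentences, n):
    """Count n-grams and their (n-1)-word histories; <s>/</s> pad each sentence."""
    ngrams, histories = Counter(), Counter()
    for sentence in sentences:
        tokens = ["<s>"] * (n - 1) + sentence.split() + ["</s>"]
        for i in range(n - 1, len(tokens)):
            gram = tuple(tokens[i - n + 1:i + 1])
            ngrams[gram] += 1
            histories[gram[:-1]] += 1
    return ngrams, histories


def sentence_prob(sentence, n, ngrams, histories):
    """P(S) as the product of P(w_i | previous n-1 words), relative-frequency estimates."""
    tokens = ["<s>"] * (n - 1) + sentence.split() + ["</s>"]
    p = Fraction(1)
    for i in range(n - 1, len(tokens)):
        gram = tuple(tokens[i - n + 1:i + 1])
        if histories[gram[:-1]] == 0:
            return Fraction(0)   # unseen history: no estimate, treat as 0
        p *= Fraction(ngrams[gram], histories[gram[:-1]])
    return p


CORPUS = ["the man sees the bird", "the woman sees the man"]
```

With the bigram model, "the man sees the bird" gets 1 · 1/2 · 1/2 · 1 · 1/4 · 1 = 1/16, while the perfectly grammatical "the bird sees the woman" gets 0 because "bird sees" never occurs in the training data; that zero is what the smoothing on the next slide is for.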

Trigram smoothing (Jelinek) • Used when there are insufficient data for reliable trigram estimates:
$$P(w_3 \mid w_1 w_2) = p_1 \frac{F(w_1,w_2,w_3)}{F(w_1,w_2)} + p_2 \frac{F(w_2,w_3)}{F(w_2)} + p_3 \frac{F(w_3)}{\sum_i F(w_i)}$$
where: • $F$ is the number of occurrences of the string in its argument • $\sum_i F(w_i)$ is the number of words in the corpus • $p_1, p_2, p_3$ are positive values with $p_1 + p_2 + p_3 = 1$
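A minimal Python sketch of this interpolation: a weighted sum of the trigram relative frequency F(w1,w2,w3)/F(w1,w2), the bigram relative frequency F(w2,w3)/F(w2) and the unigram relative frequency F(w3)/ΣF(wi). The default weights 0.6, 0.3, 0.1 are an arbitrary assumption (in practice they are tuned on held-out data), the toy token sequence is invented, and a relative frequency with an unseen history is taken to be 0.

```python
from collections import Counter
from fractions import Fraction


def counts(tokens):
    """F(.) for unigrams, bigrams and trigrams of a token sequence."""
    uni = Counter(tokens)
    bi = Counter(zip(tokens, tokens[1:]))
    tri = Counter(zip(tokens, tokens[1:], tokens[2:]))
    return uni, bi, tri


def ratio(num, den):
    """Relative frequency, defined as 0 when the history was never seen."""
    return Fraction(num, den) if den else Fraction(0)


def interpolated_trigram(w1, w2, w3, uni, bi, tri,
                         weights=(Fraction(3, 5), Fraction(3, 10), Fraction(1, 10))):
    """p1*F(w1,w2,w3)/F(w1,w2) + p2*F(w2,w3)/F(w2) + p3*F(w3)/sum_i F(w_i)."""
    p1, p2, p3 = weights
    if min(weights) <= 0 or sum(weights) != 1:
        raise ValueError("p1, p2, p3 must be positive and sum to 1")
    n_words = sum(uni.values())   # sum_i F(w_i)
    return (p1 * ratio(tri[(w1, w2, w3)], bi[(w1, w2)])
            + p2 * ratio(bi[(w2, w3)], uni[w2])
            + p3 * ratio(uni[w3], n_words))


TOKENS = "a b c a b d a b c".split()
```

For the seen trigram "a b c" this gives 3/5 · 2/3 + 3/10 · 2/3 + 1/10 · 2/9 = 28/45. The trigram "c a d" never occurs, yet it still receives 1/10 · 1/9 = 1/90 from the unigram term instead of 0.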

Clustering words in N-grams • N-grams of word classes (categorical N-grams): • Words are “replaced” by (semantic, syntactic) categories before training (e.g. “w_day” for Monday, Tuesday ...) • Data-driven clustering • Stemming (Porter) • …
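The effect of replacing words by a category before training can be sketched with the slide's own “w_day” example. In this Python sketch a word bigram is approximated as P(class | previous class) · P(word | class); the corpus is invented, and P(word | class) is assumed to be uniform over the seven weekdays, which is one simple choice among several.

```python
from collections import Counter
from fractions import Fraction

DAYS = ["monday", "tuesday", "wednesday", "thursday", "friday", "saturday", "sunday"]
WORD_CLASS = {day: "w_day" for day in DAYS}   # every other word is its own class


def to_classes(tokens):
    return [WORD_CLASS.get(t, t) for t in tokens]


def train_class_bigrams(sentences):
    """Replace words by their classes before counting, as on the slide."""
    bigrams, histories = Counter(), Counter()
    for sentence in sentences:
        classes = to_classes(sentence.lower().split())
        for prev, cur in zip(classes, classes[1:]):
            bigrams[(prev, cur)] += 1
            histories[prev] += 1
    return bigrams, histories


def p_word(prev, word, bigrams, histories):
    """P(word | prev) approximated as P(class(word) | class(prev)) * P(word | class(word))."""
    c_prev, c_word = to_classes([prev.lower(), word.lower()])
    if histories[c_prev] == 0:
        return Fraction(0)
    p_class = Fraction(bigrams[(c_prev, c_word)], histories[c_prev])
    # Assumption: uniform distribution over the members of a class
    class_size = list(WORD_CLASS.values()).count(c_word) or 1
    return p_class * Fraction(1, class_size)


CORPUS = ["meet on Monday", "meet on Tuesday", "call on Friday"]
```

“on Wednesday” never occurs in the corpus, so a plain word bigram would give it probability 0; the class model pools the three “on w_day” observations and gives it 1/7.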

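N-gram problems • Long-distance dependencies exceeding n: [kommoden/bordet/stolene] i værelset på tredje etage skal males [rød, rødt, røde] (“[the chest of drawers/the table/the chairs] in the room on the third floor must be painted [red]”; rød/rødt/røde must agree with the common-gender, neuter or plural noun at the start of the sentence) • Stochastic grammars “freeze” human verbal behaviour at the state reflected in the training data, but verbal behaviour may change. Adaptive approach? • Finding corpora that reflect how humans will communicate with the final system • (Human-human dialogues vs. WOZ (Wizard-of-Oz) experiments)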
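A small Python sketch makes the window problem explicit for the Danish example: at the position where the adjective must be predicted, a trigram model sees the same two-word history (“skal males”) whichever noun started the sentence, so it cannot choose between rød, rødt and røde. The `<ADJ>` placeholder and the helper names are illustrative assumptions.

```python
NOUNS = ["kommoden", "bordet", "stolene"]   # common gender, neuter, plural
MIDDLE = "i værelset på tredje etage skal males".split()


def history(tokens, position, n):
    """The (n-1) preceding words an n-gram model conditions on at `position`."""
    return tuple(tokens[max(0, position - n + 1):position])


def smallest_order_seeing(cue_position, target_position):
    """Smallest N whose history window at target_position still includes cue_position."""
    return target_position - cue_position + 1


# "<ADJ>" marks the slot where rød/rødt/røde has to be predicted
sentences = [[noun] + MIDDLE + ["<ADJ>"] for noun in NOUNS]
adj_pos = len(sentences[0]) - 1
trigram_histories = {history(s, adj_pos, 3) for s in sentences}
```

All three sentences share the trigram history ("skal", "males"); only a 9-gram would reach back to the noun, and estimating 9-grams reliably would need far more training data than is realistically available.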