Enhancing Computer Interfaces with Continuous Gestures and Language Models

This research addresses the inefficiency of correcting dictation: speech recognizers often make mistakes, and fixing those mistakes is slow. By building a word lattice from the recognizer’s n-best list and expanding it to include likely recognition errors, we explore a more efficient and enjoyable correction interface. Our approach integrates a language model into a continuous gesture interface to simplify confirmation and correction. The presentation outlines methods for handling insertion errors, generating alternative pronunciations, and improving overall dictation accuracy.


Presentation Transcript

Efficient Computer Interfaces Using Continuous Gestures, Language Models, and Speech • Keith Vertanen • July 30th, 2004

The problem • Speech recognizers make mistakes • Correcting mistakes is inefficient: 140 WPM for uncorrected dictation; 14 WPM for corrected dictation (mouse/keyboard); 32 WPM for corrected typing (mouse/keyboard) • Voice-only correction is even slower and more frustrating

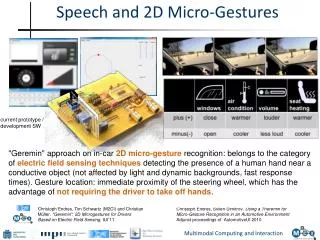

Research overview • Goal: make correction of dictation more efficient, more fun, and more accessible • Approach: (1) build a word lattice from a recognizer’s n-best list; (2) expand the lattice to cover likely recognition errors; (3) make a language model from the expanded lattice; (4) use the model in a continuous gesture interface to perform confirmation and correction

Building lattice • Example n-best list: (1) jack studied very hard; (2) jack studied hard; (3) jill studied hard; (4) jill studied very hard; (5) jill studied little
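As a rough illustration of turning the n-best list above into a lattice-like structure, the sketch below builds a prefix tree that maps each word prefix to the words that can follow it. This is an assumption made for illustration: the slide does not say how nodes are merged, and the function name `build_prefix_lattice` and the `</s>` end marker are hypothetical.

```python
from collections import defaultdict

def build_prefix_lattice(nbest):
    """Map each word prefix (a tuple) to the set of words that may follow it."""
    lattice = defaultdict(set)
    for hypothesis in nbest:
        words = hypothesis.split()
        for i, word in enumerate(words):
            lattice[tuple(words[:i])].add(word)
        # Mark that the hypothesis can end here (hypothetical end marker).
        lattice[tuple(words)].add("</s>")
    return lattice

nbest = [
    "jack studied very hard",
    "jack studied hard",
    "jill studied hard",
    "jill studied very hard",
    "jill studied little",
]
lattice = build_prefix_lattice(nbest)
print(sorted(lattice[()]))                   # ['jack', 'jill']
print(sorted(lattice[("jill", "studied")]))  # ['hard', 'little', 'very']
```

A real lattice would typically also merge shared suffixes (for example the two "studied" nodes) and carry scores on the edges; the prefix tree is only the simplest structure that keeps every hypothesis reachable.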

Acoustic confusions • Given a word, find words that sound similar • Look up the pronunciation in a dictionary: studied → s t ah d iy d • Use observed phone confusions to generate alternative pronunciations: s t ah d iy d → s t ah d iy d, s ao d iy, s t ah d iy, … • Map pronunciations back to words: s t ah d iy d → studied; s ao d iy → saudi; s t ah d iy → study
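The three steps on this slide can be sketched as follows. The tiny dictionary and the confusion table are hypothetical, chosen only so that the slide's example words appear; a real system would use a full pronunciation dictionary and confusion probabilities estimated from recognizer output.

```python
from itertools import product

# Toy pronunciation dictionary (hypothetical, modeled on the slide's example).
PRONUNCIATIONS = {
    "studied": ("s", "t", "ah", "d", "iy", "d"),
    "study": ("s", "t", "ah", "d", "iy"),
    "saudi": ("s", "ao", "d", "iy"),
}

# Hypothetical phone confusions: each phone maps to its possible replacements,
# where None means the phone may be dropped.
CONFUSIONS = {"t": [None], "ah": ["ao"], "d": [None]}

def alternative_pronunciations(phones, confusions, max_edits=3):
    """Generate pronunciations reachable with at most max_edits phone changes."""
    options = [[p] + confusions.get(p, []) for p in phones]
    variants = set()
    for choice in product(*options):
        edits = sum(1 for old, new in zip(phones, choice) if old != new)
        if edits <= max_edits:
            variants.add(tuple(p for p in choice if p is not None))
    return variants

def similar_words(word):
    """Look up the word, perturb its pronunciation, and map back to words."""
    by_pronunciation = {phones: w for w, phones in PRONUNCIATIONS.items()}
    variants = alternative_pronunciations(PRONUNCIATIONS[word], CONFUSIONS)
    return sorted(by_pronunciation[v] for v in variants if v in by_pronunciation)

print(similar_words("studied"))  # ['saudi', 'studied', 'study']
```

Most generated variants match no dictionary entry and are simply discarded in the map-back step.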

Language model confusions: “Jack studied hard” • Look at the words before or after a node and add likely alternate words based on an n-gram LM
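One possible reading of this step, sketched with a toy bigram table: score each candidate word by how well it fits both its left and right neighbor, and add the best-scoring candidates as alternates. The counts below are invented for illustration, and the product of raw counts is only a stand-in for proper n-gram probabilities.

```python
# Hypothetical bigram counts; a real system would query a trained n-gram model.
BIGRAM_COUNTS = {
    ("jack", "studied"): 4,
    ("jack", "studies"): 3,
    ("jack", "worked"): 2,
    ("jack", "ran"): 1,
    ("studied", "hard"): 5,
    ("studies", "hard"): 2,
    ("worked", "hard"): 6,
}

def lm_alternates(prev_word, word, next_word, bigrams, top_k=2):
    """Return up to top_k alternates for `word` that fit both neighbors."""
    candidates = {b for (a, b) in bigrams if a == prev_word}
    scores = {}
    for candidate in candidates:
        if candidate == word:
            continue  # the original word is already in the lattice
        left = bigrams.get((prev_word, candidate), 0)
        right = bigrams.get((candidate, next_word), 0)
        if left * right > 0:
            scores[candidate] = left * right
    # Highest score first; ties broken alphabetically for determinism.
    return sorted(scores, key=lambda w: (-scores[w], w))[:top_k]

print(lm_alternates("jack", "studied", "hard", BIGRAM_COUNTS))
# ['worked', 'studies']
```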

Probability model • Our confirmation and correction interface requires probability of a letter given prior letters:
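The slide's formula is not preserved in this transcript. In notation assumed here (not taken from the slide), with l_i standing for the i-th letter of the text, the quantity described is the conditional probability of the next letter given everything entered so far:

```latex
P(l_i \mid l_1, l_2, \ldots, l_{i-1})
```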

Probability model • Keep track of possible paths in lattice • Prediction based on next letter on paths • Interpolate with default language model • Example, user has entered “the_cat”:
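A minimal sketch of the path-tracking idea, assuming each lattice path is stored as a full text string with a probability, and that the default language model is a context-free uniform distribution over `a`-`z` plus `_`. The example paths, the weight of 0.9, and the function name are all hypothetical; the talk does not give these details.

```python
import string

ALPHABET = string.ascii_lowercase + "_"
# Stand-in default model: uniform over the alphabet (an assumption).
UNIFORM = {letter: 1.0 / len(ALPHABET) for letter in ALPHABET}

def next_letter_distribution(paths, entered, default_model=UNIFORM,
                             lattice_weight=0.9):
    """Interpolate lattice-based next-letter predictions with a default model."""
    mass = {}
    for text, prob in paths.items():
        # Keep only paths consistent with what the user has entered so far.
        if text.startswith(entered) and len(text) > len(entered):
            letter = text[len(entered)]
            mass[letter] = mass.get(letter, 0.0) + prob
    total = sum(mass.values())
    if total == 0:
        # No surviving path: fall back to the default model alone.
        return dict(default_model)
    return {
        letter: lattice_weight * mass.get(letter, 0.0) / total
        + (1 - lattice_weight) * default_model[letter]
        for letter in default_model
    }

# Hypothetical lattice paths and probabilities.
paths = {"the_cat_sat": 0.5, "the_cat_ran": 0.3, "the_cattle_sat": 0.2}
dist = next_letter_distribution(paths, "the_cat")
print(round(dist["_"], 3), round(dist["t"], 3))  # 0.724 0.184
```

Because the default model contributes a small amount of probability to every letter, the user can still enter text that appears on no lattice path.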

Handling word errors • Use default language model during entry of erroneous word • Rebuild paths allowing for an additional deletion or substitution error • Example, user has entered “the_cattle_”:
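The slide does not spell out how paths are rebuilt, so the following is only one plausible interpretation: after the user finishes a word that is not on a path, find every position in that path where prediction could resume, allowing one extra substitution (the recognizer got a word wrong) or deletion (the recognizer dropped a word the user said). The function name and the example path are hypothetical.

```python
def resume_points(path, entered, max_errors=1):
    """Return path indices where prediction may resume after `entered`.

    Each state is (path words consumed, errors used so far).
    """
    states = {(0, 0)}
    for word in entered:
        next_states = set()
        for consumed, errors in states:
            if consumed < len(path) and path[consumed] == word:
                # The entered word matches the path exactly.
                next_states.add((consumed + 1, errors))
            elif errors < max_errors:
                if consumed < len(path):
                    # Substitution: the recognizer's word was wrong.
                    next_states.add((consumed + 1, errors + 1))
                # Deletion: the recognizer missed this word entirely.
                next_states.add((consumed, errors + 1))
        states = next_states
    return sorted({consumed for consumed, _ in states})

path = ["the", "cat", "sat"]
print(resume_points(path, ["the", "cattle"]))  # [1, 2]
```

In this example, index 2 corresponds to treating "cat" as a misrecognition of "cattle" (so "sat" is predicted next), while index 1 corresponds to "cattle" having been dropped by the recognizer (so "cat" is predicted next).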

Evaluating expansion • Assume a good model requires as little information from the user as possible

Results on test set • Model evaluated on held-out test set (Hub1) • Default language model: 2.4 bits/letter (user decides between 5.3 letters) • Best speech-based model: 0.61 bits/letter (user decides between 1.5 letters)
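The "user decides between N letters" figures are consistent with reading N as 2 raised to the bits-per-letter value, the number of equally likely choices that would carry the same information. The short check below illustrates that reading; the helper names are hypothetical, and `bits_per_letter` shows the standard average negative log2 probability rather than the talk's exact evaluation code.

```python
import math

def bits_per_letter(probabilities):
    """Average information, in bits, needed per letter.

    `probabilities` holds the model's probability for each letter actually typed.
    """
    return -sum(math.log2(p) for p in probabilities) / len(probabilities)

def equivalent_choices(bits):
    """Number of equally likely options that carry `bits` of information."""
    return 2 ** bits

print(round(equivalent_choices(2.4), 1))   # 5.3
print(round(equivalent_choices(0.61), 1))  # 1.5
print(bits_per_letter([0.5, 0.25]))        # 1.5
```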