Speech Detection, Classification, and Processing for Improved Automatic Speech Recognition in Multiparty Meetings


Speech Detection, Classification, and Processing for Improved Automatic Speech Recognition in Multiparty Meetings. Kofi A. Boakye. Advisor: Nelson Morgan. January 17th, 2007. At-a-glance: Trying to do automatic speech recognition (ASR) in meetings.




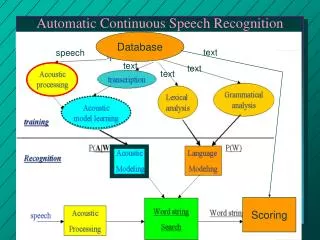

Speech Detection, Classification, and Processing for Improved Automatic Speech Recognition in Multiparty Meetings
Kofi A. Boakye
Advisor: Nelson Morgan
January 17th, 2007
K. Boakye: Qualifying Exam Presentation

At-a-glance
Trying to do automatic speech recognition (ASR) in meetings:
• Personal mics pick up other speakers (crosstalk)
• Distant mics pick up multiple speakers at the same time (overlapped speech)
Goal: Reduce errors caused by crosstalk and overlapped speech to improve speech recognition in meetings

Outline of talk
• Introduction
• Speech activity detection for nearfield microphones
• Overlap speech detection for farfield microphones
• Overlap speech processing for farfield microphones
• Preliminary experiments

The meeting domain
• Multiparty meetings are a rich content source for spoken language technology:
  • Rich transcription
  • Indexing and summarization
  • Machine translation
  • High-level language and behavioral analysis using dialog act annotation
• Good automatic speech recognition (ASR) is important

Meeting ASR set-up
• In a typical set-up, meeting ASR audio data is obtained from various sensors located in the room. Common types include:
Individual Headset Microphone
  • Head-mounted mic positioned close to the speaker
  • Best-quality signal for the speaker

Meeting ASR set-up
Lapel Microphone
  • Individual mic placed on the participant’s clothing
  • More susceptible to interfering speech

Meeting ASR set-up
Tabletop Microphone
  • Omni-directional pressure-zone mic
  • Placed between participants on a table or other flat surface
  • Number and placement vary

Meeting ASR set-up
Linear Microphone Array
  • Collection of omni-directional mics with a fixed linear topology
  • Can contain from 4 to 64 mics
  • Enables beamforming to obtain high-SNR signals

Meeting ASR set-up
Circular Microphone Array
  • Combines the central location of a tabletop mic with the fixed topology of a linear array
  • Consists of 4 to 8 omni-directional mics
  • Enables source localization and speaker tracking

ASR in multiparty meetings
• Nearfield recognition is generally performed by decoding each audio channel separately
[Figure: four parallel pipelines, one per channel, each running S/NS detection → feature extraction → probability estimation → decoding → words]

[Figure: the same per-channel pipelines, with one recognizer expanded: speech → S/NS detection → feature extraction → probability estimation → decoding (using pronunciation models and a grammar model) → words]

ASR in multiparty meetings
• Farfield recognition is done in one of two ways:
1) Signal combination
[Figure: the distant-microphone signals are combined into a single signal, which then passes through S/NS detection → feature extraction → probability estimation → decoding → words]

2) Hypothesis combination
[Figure: each distant-microphone channel passes through its own S/NS detection → feature extraction → probability estimation → decoding chain, and the resulting hypotheses are combined into the final words]

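Performance metrics
• Word error rate (WER)
  • Token-based ASR performance metric: (substitutions + deletions + insertions) / number of reference words
• Diarization error rate (DER)
  • Time-based diarization performance metric: fraction of scored time that is missed speech, false-alarm speech, or attributed to the wrong speaker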
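
To make the WER definition concrete, here is a minimal sketch. It aligns a hypothesis to a reference by minimum edit distance and reports WER = (S + D + I) / N, along with the substitution, deletion, and insertion counts. The function name `wer_counts` is hypothetical. Real scoring tools, such as NIST's sclite, also apply text normalization and alignment rules that this sketch omits.

```python
from typing import List, Tuple


def wer_counts(ref: List[str], hyp: List[str]) -> Tuple[float, int, int, int]:
    """Return (WER, substitutions, deletions, insertions) via edit-distance alignment."""
    # d[i][j] = (cost, subs, dels, ins) for aligning ref[:i] with hyp[:j]
    d = [[(0, 0, 0, 0)] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(1, len(ref) + 1):
        d[i][0] = (i, 0, i, 0)  # all reference words deleted
    for j in range(1, len(hyp) + 1):
        d[0][j] = (j, 0, 0, j)  # all hypothesis words inserted
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            c, s, dl, ins = d[i - 1][j - 1]
            miss = int(ref[i - 1] != hyp[j - 1])
            diag = (c + miss, s + miss, dl, ins)  # match or substitution
            c, s, dl, ins = d[i - 1][j]
            dele = (c + 1, s, dl + 1, ins)  # reference word missing from hypothesis
            c, s, dl, ins = d[i][j - 1]
            inse = (c + 1, s, dl, ins + 1)  # extra hypothesis word (e.g., crosstalk)
            d[i][j] = min(diag, dele, inse, key=lambda x: x[0])
    cost, s, dl, ins = d[-1][-1]
    return cost / max(len(ref), 1), s, dl, ins


ref = "the cat sat on the mat".split()
hyp = "the cat sat on mat".split()
rate, subs, dels, inss = wer_counts(ref, hyp)
```

Insertions are counted separately because crosstalk mostly shows up as inserted words.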

Crosstalk and overlapped speech
• ASR in meetings presents challenges specific to the domain
• Multiple individuals speaking at various times lead to two phenomena in particular:
• Crosstalk
  • Associated with close-talking microphones
  • This non-local speech produces primarily insertion errors
  • Morgan et al. ’03: WER differed by 75% relative between segmented and unsegmented waveforms, due largely to crosstalk
• Overlapped (co-channel) speech
  • Most pronounced (and severe) in the distant-microphone condition
  • Also produces recognition errors
  • Shriberg et al. ’01: 12% absolute WER difference between overlapped and non-overlapped speech segments in the nearfield case

Scope of project
• Speech activity detection (SAD) for nearfield mics (addresses crosstalk)
  • Investigate features for SAD using an HMM segmenter
  • Metrics: word error rate (WER) and diarization error rate (DER)
  • Baseline features: standard cepstral features for an ASR system
  • Features will mainly be cross-channel in nature
• Overlap detection for farfield mics (addresses overlapped speech)
  • Investigate features for overlap detection using an HMM segmenter
  • Metric: diarization error rate
  • Baseline features: standard cepstral features
  • Features will mainly be single-channel and pitch-related
• Overlap speech processing for farfield mics (addresses overlapped speech)
  • Determine whether speech separation methods can reduce WER
  • Harmonic enhancement and suppression (HES)
  • Adaptive decorrelation filtering (ADF)

Part I: Speech Activity Detection for Nearfield Microphones

Related work
• Relatively little work has addressed multi-speaker SAD specifically
• Wrigley et al. ’03 and ’05
  • Performed a systematic analysis of features for classifying multi-channel audio
  • Key result: of the 20 features examined, the best performing for each class was one derived from cross-channel correlation
• Pfau et al. ’01
  • Thresholding cross-channel correlations as a post-processing step for HMM-based SAD yielded a 12% relative frame error rate reduction
• Laskowski et al. ’04
  • Cross-channel correlation thresholding produced ASR WER improvements of 6% absolute over energy thresholding

Candidate features
• Cepstral features
  • Consist of 12th-order Mel-frequency cepstral coefficients (MFCCs), log-energy, and their first- and second-order time derivatives
  • Common to a number of speech-related fields
  • Log-energy is a fundamental component of most SAD systems
  • MFCCs could distinguish local speech from phenomena with similar energy levels (breaths, coughs, etc.)
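
First- and second-order time derivatives are commonly computed with a linear regression over neighboring frames. The sketch below assumes the usual regression formula d_t = Σ_{k=1..K} k (c_{t+k} − c_{t−k}) / (2 Σ_{k=1..K} k²) with K = 2 and edge replication. The window size and the helper names `deltas` and `add_derivatives` are assumptions, not details from the slides.

```python
from typing import List, Sequence


def deltas(frames: Sequence[Sequence[float]], n: int = 2) -> List[List[float]]:
    """Regression-based time derivatives of a sequence of feature vectors.

    Frame indices are clamped at the sequence edges (edge replication).
    """
    num_frames = len(frames)
    denom = 2 * sum(k * k for k in range(1, n + 1))
    out: List[List[float]] = []
    for t in range(num_frames):
        row = []
        for dim in range(len(frames[t])):
            acc = 0.0
            for k in range(1, n + 1):
                fwd = frames[min(t + k, num_frames - 1)][dim]
                bwd = frames[max(t - k, 0)][dim]
                acc += k * (fwd - bwd)
            row.append(acc / denom)
        out.append(row)
    return out


def add_derivatives(static: Sequence[Sequence[float]]) -> List[List[float]]:
    """Append first- and second-order derivatives to each static feature vector."""
    d1 = deltas(static)
    d2 = deltas(d1)  # second-order = derivative of the first-order derivative
    return [list(s) + a + b for s, a, b in zip(static, d1, d2)]


# Toy "features": two dimensions growing linearly with slopes 1 and 2
frames = [[float(t), 2.0 * t] for t in range(10)]
d = deltas(frames)
full = add_derivatives(frames)
```

With 12 MFCCs plus log-energy (13 static values), this yields the familiar 39-dimensional vector.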

Candidate features
• Cross-channel correlation
  • Clear first choice for a cross-channel feature
  • Wrigley et al.: normalized cross-channel correlation is the most effective feature for crosstalk detection
  • Maximum cross-correlation between target channel i and non-target channel j at time t, over a window w of P samples:
$$C_{ij}(t) = \max_{\tau} \sum_{k=0}^{P-1} x_i(t-k)\, x_j(t-k-\tau)\, w(k)$$
  • Normalization seeks to compensate for channel gain differences and is based on the frame-level energy of:
    • the target channel
    • the non-target channel
    • both channels, using the square root of the product of the target and non-target energies (spherical normalization)
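
Here is a minimal sketch of the normalized maximum cross-correlation for one frame, following C_ij(t) = max_τ Σ_{k=0}^{P−1} x_i(t−k) x_j(t−k−τ) w(k). Two choices are assumptions, not details from the slides:

- w(k) is a Hamming window.
- Each lag is normalized by the square root of the product of the two aligned segments' windowed energies. This is a per-lag form of spherical normalization, which bounds the value by 1.

The function names are hypothetical.

```python
import math
import random
from typing import List, Sequence, Tuple


def hamming(p: int) -> List[float]:
    """Hamming window of length p (all weights are positive)."""
    return [0.54 - 0.46 * math.cos(2 * math.pi * k / (p - 1)) for k in range(p)]


def nmxc(xi: Sequence[float], xj: Sequence[float], t: int, p: int,
         max_lag: int) -> Tuple[float, int]:
    """Normalized max cross-correlation of target x_i and non-target x_j at sample t.

    Evaluates sum_k x_i(t-k) x_j(t-k-tau) w(k) for tau in [-max_lag, max_lag],
    normalizes each lag by sqrt(E_i * E_j) of the two aligned segments, and
    returns (max value, best lag). A negative lag means x_j lags behind x_i.
    """
    if t - (p - 1) - max_lag < 0 or t + max_lag >= len(xj) or t >= len(xi):
        raise ValueError("frame plus lag range must lie inside both signals")
    w = hamming(p)
    a = [xi[t - k] for k in range(p)]
    e_a = sum(ak * ak * wk for ak, wk in zip(a, w))
    best_val, best_lag = -math.inf, 0
    for tau in range(-max_lag, max_lag + 1):
        b = [xj[t - k - tau] for k in range(p)]
        num = sum(ak * bk * wk for ak, bk, wk in zip(a, b, w))
        e_b = sum(bk * bk * wk for bk, wk in zip(b, w))
        den = math.sqrt(e_a * e_b)
        val = num / den if den > 0 else 0.0
        if val > best_val:
            best_val, best_lag = val, tau
    return best_val, best_lag


rng = random.Random(1)
x = [rng.gauss(0.0, 1.0) for _ in range(400)]
y = [0.0] * 5 + x[:-5]  # y(n) = x(n - 5): the non-target channel lags by 5 samples
val, lag = nmxc(x, y, t=200, p=64, max_lag=10)
```

A delayed copy of the target reaches a value of 1 at the lag that aligns the two channels. Genuine crosstalk, which is attenuated and mixed with the other channel's own speaker, would score lower.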

Candidate features
• Log-energy differences (LEDs)
  • Just as energy is a good feature for single-channel SAD, relative energy between channels should work well for our scenario
  • Represents the ratio of short-time energy between channels, where $E_i(t)$ is the log-energy of channel i at frame t:
$$D_{ij}(t) = E_i(t) - E_j(t)$$
  • Much less utilized than cross-channel correlation, though it can be more robust
• Normalized log-energy differences (NLEDs)
  • Compensate for channel gain differences by subtracting each channel's minimum log-energy:
$$E_{i,\mathrm{norm}}(t) = E_i(t) - E_{i,\min}$$
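
The log-energy difference and its normalized form can be sketched as below. The sketch makes four assumptions:

- frames do not overlap;
- log-energy is measured in dB;
- E_{i,min} is taken over the whole recording;
- NLED_ij(t) = E_{i,norm}(t) − E_{j,norm}(t).

The helper names are hypothetical. The demo shows why normalization helps: a pure channel-gain difference shifts every LED by a constant, while the NLED removes it.

```python
import math
import random
from typing import List, Sequence


def frame_log_energy(x: Sequence[float], frame_len: int, eps: float = 1e-12) -> List[float]:
    """Log-energy in dB of consecutive non-overlapping frames."""
    out = []
    for start in range(0, len(x) - frame_len + 1, frame_len):
        frame = x[start:start + frame_len]
        out.append(10.0 * math.log10(sum(v * v for v in frame) / frame_len + eps))
    return out


def led(e_i: Sequence[float], e_j: Sequence[float]) -> List[float]:
    """Log-energy difference D_ij(t) = E_i(t) - E_j(t)."""
    return [a - b for a, b in zip(e_i, e_j)]


def nled(e_i: Sequence[float], e_j: Sequence[float]) -> List[float]:
    """Difference of min-normalized log-energies (assumed NLED definition)."""
    min_i, min_j = min(e_i), min(e_j)
    return [(a - min_i) - (b - min_j) for a, b in zip(e_i, e_j)]


rng = random.Random(0)
xi = [rng.uniform(-1.0, 1.0) for _ in range(1600)]
xj = [2.0 * v for v in xi]  # same signal through a channel with +6 dB gain
ei, ej = frame_log_energy(xi, 160), frame_log_energy(xj, 160)
leds, nleds = led(ei, ej), nled(ei, ej)
```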

Candidate features
• Time delay of arrival (TDOA) estimates
  • Performed well as features for farfield speaker diarization (Ellis and Liu ’04; Pardo et al. ’06)
  • Seem particularly well suited to distinguishing local speech from crosstalk
  • Proposed estimation method: generalized cross-correlation with phase transform (GCC-PHAT)
[Figure panels: standard cross-correlation vs. GCC-PHAT]
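
GCC-PHAT divides the cross-power spectrum by its magnitude, so only phase (and hence delay) information remains. The TDOA estimate is the lag that maximizes the inverse transform. The sketch below uses a naive O(N²) DFT to stay dependency-free; in practice an FFT (e.g., `numpy.fft`) would be used. The function names are hypothetical.

```python
import cmath
import random
from typing import List, Sequence


def dft(x: Sequence[complex], n: int, inverse: bool = False) -> List[complex]:
    """Naive O(n^2) DFT of x zero-padded to length n (the inverse includes 1/n)."""
    sign = 1.0 if inverse else -1.0
    xs = list(x) + [0.0] * (n - len(x))
    out = []
    for k in range(n):
        acc = 0j
        for t, v in enumerate(xs):
            acc += v * cmath.exp(sign * 2j * cmath.pi * k * t / n)
        out.append(acc / n if inverse else acc)
    return out


def gcc_phat_delay(x1: Sequence[float], x2: Sequence[float]) -> int:
    """Estimate how many samples x2 lags behind x1 (negative if x2 leads)."""
    n = 1
    while n < len(x1) + len(x2):  # zero-pad so the circular correlation does not wrap
        n *= 2
    spec1, spec2 = dft(x1, n), dft(x2, n)
    # Cross-power spectrum, whitened by its magnitude (the phase transform)
    g = [b * a.conjugate() for a, b in zip(spec1, spec2)]
    g = [v / max(abs(v), 1e-12) for v in g]
    r = [v.real for v in dft(g, n, inverse=True)]
    best = max(range(n), key=lambda i: r[i])
    return best if best < n // 2 else best - n  # map upper indices to negative lags


rng = random.Random(0)
x1 = [rng.uniform(-1.0, 1.0) for _ in range(32)]
x2 = [0.0] * 3 + x1  # x2 is x1 delayed by 3 samples
d12 = gcc_phat_delay(x1, x2)
d21 = gcc_phat_delay(x2, x1)
```

For a pure delay, the whitened cross-spectrum is a single complex exponential, so the inverse transform is a sharp spike at the delay. This sharp peak is the advantage the figure contrasts with standard cross-correlation.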

Feature generation and combination
• One issue with cross-channel features: the number of channels varies
  • Between 3 and 12 for some corpora
  • Proposed solution: use order statistics (maximum and minimum) over the non-target channels
• Feature combination was considered as well:
  • Simple concatenation
  • Combination with dimensionality reduction
    • Principal component analysis (PCA)
    • Linear discriminant analysis (LDA)
    • Multilayer perceptron (MLP)
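
The order-statistic idea can be sketched as follows. However many non-target channels a meeting has, each target channel's pairwise cross-channel values collapse to a fixed (maximum, minimum) pair. The dictionary layout and the function name are assumptions for illustration.

```python
from typing import Dict, Sequence, Tuple


def order_stat_features(pairwise: Dict[Tuple[int, int], float], target: int,
                        channels: Sequence[int]) -> Tuple[float, float]:
    """Collapse a target channel's pairwise features into a fixed (max, min) pair."""
    vals = [pairwise[(target, j)] for j in channels if j != target]
    if not vals:
        raise ValueError("need at least one non-target channel")
    return max(vals), min(vals)


# Hypothetical pairwise feature values (e.g., NMXC) for two meetings
meeting_a = {(0, 1): 0.2, (0, 2): 0.7}                          # 3 channels
meeting_b = {(0, 1): 0.1, (0, 2): 0.9, (0, 3): 0.4, (0, 4): 0.3}  # 5 channels
feat_a = order_stat_features(meeting_a, 0, [0, 1, 2])
feat_b = order_stat_features(meeting_b, 0, range(5))
```

Both meetings now give the segmenter a two-dimensional feature per cross-channel measure, regardless of channel count.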

Work plan for part I
• Compare the performance of HMM segmentation using the proposed features
  • Metrics: WER and DER
    • DER typically correlates with WER
    • DER can be computed quickly
• Data: NIST Rich Transcription (RT) Meeting Recognition evaluations
  • 10-12 min. excerpts of meeting recordings from different sites
• Baseline: standard cepstral features
• Feature performance measured in isolation and in combination with baseline features
• Determine the best combination of features and the combination technique that achieves it
• A significant amount of this work has been done

Part II: Overlap Detection for Farfield Microphones

Related work
• “Usable” speech for speaker recognition
• Lewis and Ramachandran ’01
  • Compared MFCCs, LPCCs, and a proposed pitch prediction feature (PPF) for speaker count labeling in both closed- and open-set scenarios
• Shao and Wang ’03
  • Used multi-pitch tracking to identify usable speech for a closed-set speaker recognition task
• Yantorno et al.
  • Proposed the spectral autocorrelation peak-valley ratio (SAPVR), adjacent pitch period comparison (APPC), and kurtosis

Candidate features
• Cepstral features
  • As a representation of the speech spectral envelope, should indicate whether multiple speakers are active
  • Zissman et al. ’90
    • Gaussian classifier with cepstral features reported 80% classification accuracy among target-only, jammer-only, and target-plus-jammer speech

Candidate features
• Cross-channel correlation
  • Recall Wrigley et al.: correlation was the best feature for nearfield audio classification
  • Unclear whether this extends to the farfield overlap case
    • For nearfield, overlapped speech tends to have low cross-channel correlation
    • For farfield, the large asymmetry in speaker-to-microphone distances is typically absent → low correlation may not occur

Candidate features
• Pitch estimation features
  • Explore how pitch detectors behave in the presence of overlapped speech
  • Methods can be applied at the subband level
    • May be appropriate here, since harmonic energy from different speakers may be concentrated in different bands
  • Issue: unvoiced regions
    • Include features that indicate voicing: energy, zero-crossing rate, spectral tilt
[Figure panels: zero-crossing distance, autocorrelation function, average magnitude difference function]
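
As an example of the pitch estimators listed, here is a minimal average magnitude difference function (AMDF) estimator. It computes D(τ) = (1/(N−τ)) Σ_n |x(n) − x(n+τ)| and takes the pitch period as the lag of the minimum within an assumed search range. The 80-400 Hz default range and the function names are assumptions. AMDF minima also occur at multiples of the period, so the demo picks a search range that contains only one such multiple.

```python
import math
from typing import Sequence


def amdf(frame: Sequence[float], tau: int) -> float:
    """Average magnitude difference D(tau) = mean of |x(n) - x(n + tau)|."""
    n = len(frame) - tau
    return sum(abs(frame[i] - frame[i + tau]) for i in range(n)) / n


def amdf_pitch(frame: Sequence[float], fs: float, fmin: float = 80.0,
               fmax: float = 400.0) -> float:
    """Pitch estimate in Hz from the AMDF minimum over lags fs/fmax .. fs/fmin."""
    tau_min, tau_max = int(fs / fmax), int(fs / fmin)
    if tau_max >= len(frame):
        raise ValueError("frame too short for the requested fmin")
    best_tau = min(range(tau_min, tau_max + 1), key=lambda tau: amdf(frame, tau))
    return fs / best_tau


# Synthetic voiced frame: 160 Hz fundamental plus second harmonic at fs = 8 kHz
fs = 8000.0
frame = [math.sin(2 * math.pi * n / 50) + 0.5 * math.sin(4 * math.pi * n / 50)
         for n in range(400)]
f0 = amdf_pitch(frame, fs, fmin=100.0, fmax=400.0)
```

On overlapped speech, the AMDF valley at either speaker's period becomes shallower, which is one way such a detector's behavior could serve as a feature.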

Candidate features
• Spectral autocorrelation peak-valley ratio (SAPVR)
$$\mathrm{SAPVR} = 20 \log_{10} \frac{R(p_1)}{R(q_1)}$$
  • $R(p_1)$: local maximum of the non-zero-lag spectral autocorrelation
  • $R(q_1)$: the next local maximum not harmonically related to $p_1$, or the local minimum between $p_1$ and $p_2$

Candidate features
• Kurtosis
  • For a zero-mean random variable x, kurtosis is defined as:
$$\kappa_x = \frac{E\{x^4\}}{\left(E\{x^2\}\right)^2} - 3$$
  • Measures the “Gaussianity” of a random variable (zero for a Gaussian)
  • Speech signals, which are modeled as Laplacian or Gamma distributed, tend to be super-Gaussian (positive kurtosis)
  • Summing such signals produces a signal with reduced kurtosis (Leblanc and DeLeon ’98 and Krishnamachari et al. ’00)
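
The kurtosis argument can be checked numerically. The sketch below computes the sample excess kurtosis κ = E{x⁴}/(E{x²})² − 3 after mean removal. It applies this to synthetic Laplacian samples, which stand in for speech and are not real audio. For a Laplacian the excess kurtosis is 3. For the sum of two independent, identically distributed Laplacians it is 1.5, so the mixture's estimate should come out noticeably lower. The function names are hypothetical.

```python
import random
from typing import List, Sequence


def kurtosis(x: Sequence[float]) -> float:
    """Excess kurtosis E{x^4} / E{x^2}^2 - 3, computed after removing the sample mean."""
    n = len(x)
    mean = sum(x) / n
    m2 = sum((v - mean) ** 2 for v in x) / n
    m4 = sum((v - mean) ** 4 for v in x) / n
    return m4 / (m2 * m2) - 3.0


def laplacian(rng: random.Random, n: int) -> List[float]:
    """Laplacian samples, generated as the difference of two unit exponentials."""
    return [rng.expovariate(1.0) - rng.expovariate(1.0) for _ in range(n)]


rng = random.Random(0)
s1 = laplacian(rng, 100000)  # stand-in for one speaker
s2 = laplacian(rng, 100000)  # stand-in for a second, independent speaker
k_single = kurtosis(s1)
k_mix = kurtosis([a + b for a, b in zip(s1, s2)])  # "overlapped" signal
```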

Feature generation and combination
• As in the nearfield condition, the number of channels varies with the meeting
• Aside from cross-channel correlation, the features can be generated from a single channel
• Explore two methods for obtaining this channel:
  • Select a single “best” channel based on SNR estimates
  • Combine the audio signals using delay-and-sum beamforming to produce a single channel
    • May adversely affect pitch-derived features
• Examine the same combination approaches as before
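
A minimal delay-and-sum beamformer is sketched below. It assumes integer sample delays that are already known, for example from TDOA estimates such as GCC-PHAT. Practical systems also use fractional delays and per-channel weights. The function name is hypothetical.

```python
import random
from typing import List, Sequence


def delay_and_sum(channels: Sequence[Sequence[float]], delays: Sequence[int]) -> List[float]:
    """Advance each channel by its (non-negative) delay to align them, then average."""
    if len(channels) != len(delays):
        raise ValueError("need one delay per channel")
    length = min(len(ch) - d for ch, d in zip(channels, delays))
    m = len(channels)
    return [sum(ch[n + d] for ch, d in zip(channels, delays)) / m for n in range(length)]


rng = random.Random(0)
source = [rng.uniform(-1.0, 1.0) for _ in range(200)]
delays = [0, 3, 7]
chans = [[0.0] * d + source for d in delays]  # mic m hears the source d_m samples late
beam = delay_and_sum(chans, delays)
```

With the correct delays, the target adds coherently while independent noise on each mic would average down. The averaging of differently filtered copies in real rooms is also why the slide cautions that beamforming may affect pitch-derived features.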

Work plan for part II
• Compare the performance of HMM segmentation using the proposed features
  • Metric: DER
• Data: NIST Rich Transcription (RT) Meeting Recognition evaluations
• Baseline: standard cepstral features
• Feature performance measured in isolation and in combination with baseline features
• Determine the best overall combination of features and the combination technique that achieves it

Part III: Overlap Speech Processing for Farfield Microphones

Related work
• Blind source separation (BSS)
  • Given observations $X = [x_0 \ldots x_N]^T$ and sources $S = [s_0 \ldots s_M]^T$ related by $X = AS$, we seek to find $\hat{S} = WX$
  • If we assume the $s_i$'s are independent and at most one is Gaussian distributed, the solution method becomes one of independent component analysis (ICA)
• Real-world audio signals have convolutive mixing
  • Reformulate the problem in the Z-transform domain → similar solutions
• Most techniques are iterative, based on the infomax criterion
• Lee and Bell ’97: BSS yielded improved recognition results for digit recognition in a real room environment

Related work
• Blind source separation (BSS)
  • Another set of approaches is based on minimizing cross-channel correlation: adaptive decorrelation filtering
  • Weinstein et al. ’93 and ’96
  • Yen et al. ’96-’99
    • Demonstrated improved recognition performance on simulated mixtures with coupling estimated from a real room

Related work
• Single-channel separation
  • Techniques based on computational auditory scene analysis (CASA) try to separate sources by partitioning the audio spectrogram
  • Partitioning relies on certain types of structure in the signal and uses cues such as pitch, continuity, and common onset and offset

Related work
• Single-channel separation
  • Bach and Jordan ’05
    • Used spectral clustering to create speech stream partitions
  • Morgan et al. ’97
    • Used a simpler but related method exploiting harmonic structure
    • Results on keyword spotting suggest the approach may be useful in an ASR context

Harmonic enhancement and suppression
• Single-channel speech separation method
• Uses the harmonic structure of voiced speech to separate speakers
• A speaker’s harmonics are identified using pitch estimation, and a signal is generated by enhancing them
• Alternatively, the time-frequency bins of the short-time Fourier transform in the neighborhood of the harmonics are selected and the others zeroed, followed by signal reconstruction
• For an additional speaker, the first speaker’s harmonics are suppressed and/or the other speaker’s harmonics are enhanced, if that speaker’s pitch can be determined
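
The slides describe harmonic enhancement in terms of pitch estimation and short-time Fourier transform bin selection. The sketch below is a simplified time-domain stand-in, not the method in the slides. It uses a comb filter whose delay equals an assumed known, constant integer pitch period T:

- Enhancement, (1 + z^{−T})/2, has unit gain at the harmonics of fs/T.
- Suppression, (1 − z^{−T})/2, has zeros at those same harmonics.

The function names are hypothetical.

```python
import math
from typing import List, Sequence


def comb_enhance(x: Sequence[float], period: int) -> List[float]:
    """y[n] = (x[n] + x[n - T]) / 2: unit gain at harmonics of fs/T, attenuation between."""
    return [0.5 * (x[n] + (x[n - period] if n >= period else 0.0)) for n in range(len(x))]


def comb_suppress(x: Sequence[float], period: int) -> List[float]:
    """y[n] = (x[n] - x[n - T]) / 2: nulls at harmonics of fs/T."""
    return [0.5 * (x[n] - (x[n - period] if n >= period else 0.0)) for n in range(len(x))]


T = 40  # assumed pitch period of the first speaker, in samples
target = [math.sin(2 * math.pi * n / T) + 0.3 * math.cos(6 * math.pi * n / T)
          for n in range(400)]  # energy only at harmonics 1 and 3 of fs/T
enhanced = comb_enhance(target, T)
suppressed = comb_suppress(target, T)
```

After the first T samples (the filter's start-up), enhancement leaves the periodic target unchanged and suppression removes it. In a mixture, suppression would pass mainly the other speaker's energy. Real speech has time-varying pitch, which is why the slides rely on frame-wise pitch estimation.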

Adaptive decorrelation filtering
• Multi-channel speech separation method
• Separates signals by adaptively determining the filters governing coupling between channels
• Consider the two-source, two-channel case:
$$Y_1(f) = H_{11}(f)\, S_1(f) + H_{12}(f)\, S_2(f)$$
$$Y_2(f) = H_{21}(f)\, S_1(f) + H_{22}(f)\, S_2(f)$$

• Equivalently, for the two-source, two-channel case:
$$Y_1(f) = X_1(f) + A(f)\, X_2(f)$$
$$Y_2(f) = X_2(f) + B(f)\, X_1(f)$$
where
$$X_i(f) = H_{ii}(f)\, S_i(f), \quad i = 1, 2, \qquad A(f) = \frac{H_{12}(f)}{H_{22}(f)}, \qquad B(f) = \frac{H_{21}(f)}{H_{11}(f)}$$

Adaptive decorrelation filtering
• Now process the signals with the separation system:
$$V_1(f) = Y_1(f) - \hat{A}(f)\, Y_2(f)$$
$$V_2(f) = Y_2(f) - \hat{B}(f)\, Y_1(f)$$
• When $\hat{A}(f) = A(f)$ and $\hat{B}(f) = B(f)$:
$$V_i(f) = C(f)\, X_i(f), \quad i = 1, 2, \qquad C(f) = 1 - A(f)\, B(f)$$
• If $C(f)$ is invertible, the signals $x_1(t)$ and $x_2(t)$ can be perfectly restored
• Since $V_i(f) = C(f)\, X_i(f)$, linearly distorted versions of the signals are still obtained when $C(f)$ is not invertible
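
The separation equations V_1 = Y_1 − ÂY_2 and V_2 = Y_2 − B̂Y_1 can be checked numerically in the time domain. The sketch assumes the cross-coupling filters A and B are short causal FIR filters and that the estimates equal the true filters. It does not implement the adaptive part of ADF, i.e., estimating Â and B̂ by decorrelating the outputs. With integer-valued data the comparison is exact: each output equals C = 1 − A·B applied to the corresponding source component. The helper names are hypothetical.

```python
from typing import List, Sequence, Tuple


def filt(h: Sequence[float], x: Sequence[float]) -> List[float]:
    """Causal FIR filtering, keeping the first len(x) output samples."""
    return [sum(h[k] * x[n - k] for k in range(len(h)) if n - k >= 0) for n in range(len(x))]


def conv(h: Sequence[float], g: Sequence[float]) -> List[float]:
    """Full linear convolution of two FIR filters (product of their transforms)."""
    out = [0.0] * (len(h) + len(g) - 1)
    for i, hv in enumerate(h):
        for j, gv in enumerate(g):
            out[i + j] += hv * gv
    return out


def separate(y1: Sequence[float], y2: Sequence[float], a_hat: Sequence[float],
             b_hat: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Feedforward separation system: v1 = y1 - a_hat*y2, v2 = y2 - b_hat*y1."""
    v1 = [p - q for p, q in zip(y1, filt(a_hat, y2))]
    v2 = [p - q for p, q in zip(y2, filt(b_hat, y1))]
    return v1, v2


x1 = [1, 2, 0, -1, 3, 1, 0, 2]   # source component seen at mic 1, i.e. X_1 = H_11 S_1
x2 = [0, 1, -2, 1, 1, 0, 2, -1]  # source component seen at mic 2, i.e. X_2 = H_22 S_2
a = [0, 1, 2]    # hypothetical coupling filter A (source 2 leaking into mic 1)
b = [0, 2, -1]   # hypothetical coupling filter B (source 1 leaking into mic 2)
y1 = [p + q for p, q in zip(x1, filt(a, x2))]  # Y_1 = X_1 + A X_2
y2 = [p + q for p, q in zip(x2, filt(b, x1))]  # Y_2 = X_2 + B X_1
v1, v2 = separate(y1, y2, a, b)
ab = conv(a, b)
c = [1.0 - ab[0]] + [-v for v in ab[1:]]       # C = 1 - A B
```

Because C is a filter rather than a pure gain, the outputs are linearly distorted copies of x_1 and x_2 unless C is inverted. This matches the slide's last point.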

Work plan for part III
• Apply speech separation algorithms to overlap segments to try to improve ASR performance
• Metric: WER
  • Focus on WER in overlap regions
• Data: NIST RT evaluation (same as in parts I and II)
• Subsequent analyses if improvements are obtained:
  • Compare processing the entire segment with processing just the overlap region
  • Process overlap regions as determined by the overlap detector from part II
  • Analyze patterns of improvement or, conversely, which error types persist

Preliminary Experiments

Preliminary Experiments
• Experiments pertain to part I
• Performed using Augmented Multiparty Interaction (AMI) development set meetings for the NIST RT-05S evaluation
  • Scenario-based meetings, each involving 4 participants wearing headset or head-mounted lapel mics
• Segmenter
  • Derived from an HMM-based speech recognition system
  • Two classes, “speech” and “nonspeech”, each represented with a three-state phone model
• Training data: first 10 minutes from 35 AMI meetings
• Test data: 12-minute excerpts from four additional AMI meetings
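
A two-class Viterbi segmenter in the spirit of the one described can be sketched as follows. These assumptions are not from the slides:

- Per-frame log-likelihoods for nonspeech and speech are already computed (e.g., by GMMs).
- Each class is a left-to-right chain of three states that share one emission score.
- The self-loop probability is 0.9.

A class can only be exited from its last state, so the chain imposes a minimum segment duration of three frames (except at the end of the input). This smooths away isolated frames. The function name is hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple

State = Tuple[int, int]  # (class, sub-state); class 0 = nonspeech, 1 = speech


def segment(frame_ll: Sequence[Tuple[float, float]], n_sub: int = 3,
            p_stay: float = 0.9) -> List[int]:
    """Viterbi labels (0/1 per frame) from (nonspeech, speech) frame log-likelihoods."""
    stay, move = math.log(p_stay), math.log(1.0 - p_stay)
    states = [(c, k) for c in (0, 1) for k in range(n_sub)]
    score: Dict[State, float] = {s: -math.inf for s in states}
    for c in (0, 1):  # enter either class in its first sub-state
        score[(c, 0)] = math.log(0.5) + frame_ll[0][c]
    back: List[Dict[State, State]] = []
    for t in range(1, len(frame_ll)):
        new_score: Dict[State, float] = {}
        ptr: Dict[State, State] = {}
        for c, k in states:
            cands = [(score[(c, k)] + stay, (c, k))]  # self-loop
            if k > 0:
                cands.append((score[(c, k - 1)] + move, (c, k - 1)))  # advance in chain
            else:
                other = (1 - c, n_sub - 1)  # switch class from other chain's last state
                cands.append((score[other] + move, other))
            best, prev = max(cands)
            new_score[(c, k)] = best + frame_ll[t][c]
            ptr[(c, k)] = prev
        score = new_score
        back.append(ptr)
    state = max(states, key=lambda s: score[s])
    labels = [state[0]]
    for ptr in reversed(back):  # backtrace from the last frame to the first
        state = ptr[state]
        labels.append(state[0])
    return labels[::-1]


nonspeech, speech, blip = (0.0, -5.0), (-5.0, 0.0), (-2.0, 0.0)
frames = [nonspeech] * 5 + [blip] + [nonspeech] * 5 + [speech] * 5
labels = segment(frames)
```

The single frame that mildly favors speech (for instance, a breath or a burst of crosstalk) stays labeled as nonspeech. The sustained speech region at the end is detected.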

Expt. 1: Single feature performance (diarization)
• LEDs and NLEDs outperform baseline cepstral features
• NMXC features do more poorly
  • Higher false alarm (FA) rate
• NLEDs give lower DER than LEDs
  • Indicates effectiveness of the normalization procedure

Expt. 1: Single feature performance (recognition)
• NMXC features outperform baseline
• LEDs and NLEDs do not
• NLEDs give lower WER than LEDs
• Cross-channel features reduce the insertion rate (by between 39% and 46% relative)
• 4% difference between the best feature (NMXC) and the reference

Expt. 2: Initial feature combination
• Combination with baseline yields similar performance across features
• Exception: base + NMXC + LEDs
• Improved performance comes from reduced FA/insertions
• 3-way combinations degrade performance
• May be due to correlation between features
• 2% difference between the best combination and the reference

Summary
• Goal: Reduce errors caused by crosstalk and overlapped speech to improve speech recognition in meetings
• Crosstalk
  • Use an HMM-based segmenter to identify local speech regions
  • Investigate features to do this effectively
[Figure: recognition pipeline (S/NS detection → feature extraction → probability estimation → decoding → words), annotated “Improve this…” at S/NS detection and “…to improve this” at the output words]

Summary
• Goal: Reduce errors caused by crosstalk and overlapped speech to improve speech recognition in meetings
• Overlapped speech
  • Use an HMM-based segmenter to identify overlapped regions
  • Investigate features to do this effectively
  • Process overlap regions to improve recognition performance
  • Explore two methods, HES and ADF, to see if they can do this
[Figure: pipeline with overlap detection and overlap processing stages added after S/NS detection, ahead of feature extraction, probability estimation, and decoding; annotated “Add this…” at the new stages and “…to improve this” at the output words]