K-nearest neighbor methods

K-nearest neighbor methods. William Cohen 10-601 April 2008. But first…. Onward: multivariate linear regression. ACM Computing Surveys 2002. Review of K-NN methods (so far). Kernel regression.


Presentation Transcript

K-nearest neighbor methods William Cohen 10-601 April 2008

Onward: multivariate linear regression • Each row is an example, each col is a feature • Univariate vs. multivariate • [Figure: data layouts labeled Y and X]

Kernel regression • aka locally weighted regression, locally linear regression, LOESS, … • What does making the kernel wider do to bias and variance?
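A minimal sketch of the idea behind kernel regression, assuming a Gaussian kernel and a locally constant (Nadaraya-Watson) estimate rather than the locally linear fit used by LOESS. The function names, the `width` parameter, and the toy data are illustrative assumptions, not taken from the slides.

```python
import math

def gaussian_kernel(distance, width):
    # Weight decays smoothly with distance; `width` controls how fast.
    return math.exp(-0.5 * (distance / width) ** 2)

def kernel_regression(train_x, train_y, query, width):
    # Prediction is the kernel-weighted average of the training targets.
    weights = [gaussian_kernel(abs(query - x), width) for x in train_x]
    total = sum(weights)
    return sum(w * y for w, y in zip(weights, train_y)) / total

# Toy 1-D data (y = x^2), made up for illustration.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [0.0, 1.0, 4.0, 9.0, 16.0]

narrow = kernel_regression(xs, ys, 2.0, width=0.1)    # dominated by the point at x = 2
wide = kernel_regression(xs, ys, 2.0, width=100.0)    # close to the global mean of ys
```

With a very narrow kernel the estimate follows the single nearest point, and with a very wide kernel it drifts toward the average of all targets, which is one way to see the bias/variance question posed on the slide.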

BellCore’s MovieRecommender • Participants sent email to videos@bellcore.com • System replied with a list of 500 movies to rate on a 1-10 scale (250 random, 250 popular) • Only a subset needs to be rated • New participant P sends in rated movies via email • System compares ratings for P to ratings of (a random sample of) previous users • Most similar users are used to predict scores for unrated movies (more later) • System returns recommendations in an email message.

Suggested Videos for: John A. Jamus. • Your must-see list with predicted ratings: • 7.0 "Alien (1979)" • 6.5 "Blade Runner" • 6.2 "Close Encounters Of The Third Kind (1977)" • Your video categories with average ratings: • 6.7 "Action/Adventure" • 6.5 "Science Fiction/Fantasy" • 6.3 "Children/Family" • 6.0 "Mystery/Suspense" • 5.9 "Comedy" • 5.8 "Drama"

The viewing patterns of 243 viewers were consulted. Patterns of 7 viewers were found to be most similar. Correlation with target viewer: • 0.59 viewer-130 (unlisted@merl.com) • 0.55 bullert,jane r (bullert@cc.bellcore.com) • 0.51 jan_arst (jan_arst@khdld.decnet.philips.nl) • 0.46 Ken Cross (moose@denali.EE.CORNELL.EDU) • 0.42 rskt (rskt@cc.bellcore.com) • 0.41 kkgg (kkgg@Athena.MIT.EDU) • 0.41 bnn (bnn@cc.bellcore.com) • By category, their joint ratings recommend: • Action/Adventure: • "Excalibur" 8.0, 4 viewers • "Apocalypse Now" 7.2, 4 viewers • "Platoon" 8.3, 3 viewers • Science Fiction/Fantasy: • "Total Recall" 7.2, 5 viewers • Children/Family: • "Wizard Of Oz, The" 8.5, 4 viewers • "Mary Poppins" 7.7, 3 viewers • Mystery/Suspense: • "Silence Of The Lambs, The" 9.3, 3 viewers • Comedy: • "National Lampoon's Animal House" 7.5, 4 viewers • "Driving Miss Daisy" 7.5, 4 viewers • "Hannah and Her Sisters" 8.0, 3 viewers • Drama: • "It's A Wonderful Life" 8.0, 5 viewers • "Dead Poets Society" 7.0, 5 viewers • "Rain Man" 7.5, 4 viewers • Correlation of predicted ratings with your actual ratings is: 0.64 This number measures ability to evaluate movies accurately for you. 0.15 means low ability. 0.85 means very good ability. 0.50 means fair ability.

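Algorithms for Collaborative Filtering 1: Memory-Based Algorithms (Breese et al, UAI98) • $v_{i,j}$ = vote of user i on item j • $I_i$ = items for which user i has voted • Mean vote for i is $\bar{v}_i = \frac{1}{|I_i|} \sum_{j \in I_i} v_{i,j}$ • Predicted vote for “active user” a is a weighted sum: $p_{a,j} = \bar{v}_a + \kappa \sum_{i=1}^{n} w(a,i)\,(v_{i,j} - \bar{v}_i)$, where the $w(a,i)$ are the weights of the n similar users and $\kappa$ is a normalizer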
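A hedged sketch of memory-based prediction: the active user's mean vote plus a normalized, weighted sum of other users' deviations from their own means. Two choices here are assumptions rather than something the slide states: the weights are Pearson correlations over co-rated items, and the normalizer is one over the sum of absolute weights. The ratings are invented for illustration.

```python
import math

def mean_vote(votes):
    # votes: dict mapping item -> vote for one user.
    return sum(votes.values()) / len(votes)

def pearson_weight(votes_a, votes_i):
    # Correlation over items both users rated (assumed weighting scheme).
    common = set(votes_a) & set(votes_i)
    if not common:
        return 0.0
    mean_a, mean_i = mean_vote(votes_a), mean_vote(votes_i)
    num = sum((votes_a[j] - mean_a) * (votes_i[j] - mean_i) for j in common)
    den = math.sqrt(sum((votes_a[j] - mean_a) ** 2 for j in common)
                    * sum((votes_i[j] - mean_i) ** 2 for j in common))
    return num / den if den else 0.0

def predict_vote(active, others, item):
    # Mean vote of the active user plus normalized weighted deviations.
    mean_a = mean_vote(active)
    pairs = [(pearson_weight(active, v), v) for v in others if item in v]
    norm = sum(abs(w) for w, _ in pairs)
    if norm == 0:
        return mean_a
    return mean_a + sum(w * (v[item] - mean_vote(v)) for w, v in pairs) / norm

# Invented ratings on a 1-10 scale.
active = {"Alien": 8, "Platoon": 4}
others = [
    {"Alien": 9, "Platoon": 5, "Excalibur": 9},   # agrees with the active user
    {"Alien": 3, "Platoon": 7, "Excalibur": 2},   # disagrees with the active user
]
prediction = predict_vote(active, others, "Excalibur")
```

Both users push the prediction above the active user's mean here: the similar user liked the item, and the dissimilar user disliked it but carries a negative weight.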

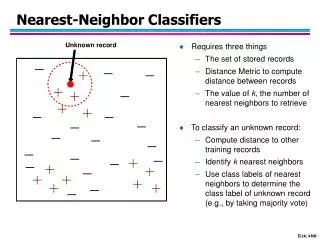

Basic k-nearest neighbor classification • Training method: • Save the training examples • At prediction time: • Find the k training examples (x1,y1),…(xk,yk) that are closest to the test example x • Predict the most frequent class among those yi’s. • Example: http://cgm.cs.mcgill.ca/~soss/cs644/projects/simard/
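The two steps above can be sketched as follows, assuming Euclidean distance as the notion of "closest" and a brute-force scan over the saved examples. The function names and the toy points are illustrative.

```python
import math
from collections import Counter

def euclidean(a, b):
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))

def knn_predict(train, x, k):
    # "Training" is just keeping `train`; all work happens at prediction time.
    neighbors = sorted(train, key=lambda ex: euclidean(ex[0], x))[:k]
    votes = Counter(y for _, y in neighbors)
    return votes.most_common(1)[0][0]   # most frequent class among the k neighbors

# Toy training set of (x, y) pairs, made up for illustration.
train = [((0, 0), "o"), ((0, 1), "o"), ((1, 0), "o"),
         ((5, 5), "+"), ((5, 6), "+"), ((6, 5), "+")]

label_near_origin = knn_predict(train, (0.5, 0.5), k=3)
label_far_corner = knn_predict(train, (5.5, 5.5), k=3)
```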

What is the decision boundary? Voronoi diagram

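Convergence of 1-NN • [Figure: test example x with label y; its nearest neighbor x1 with label y1 and a second neighbor x2 with label y2; distributions P(Y|x), P(Y|x1), P(Y|x’’)] • Assume P(Y|x) and P(Y|x1) are equal • Let y* = argmax Pr(y|x)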

Basic k-nearest neighbor classification • Training method: • Save the training examples • At prediction time: • Find the k training examples (x1,y1),…(xk,yk) that are closest to the test example x • Predict the most frequent class among those yi’s. • Improvements: • Weighting examples from the neighborhood • Measuring “closeness” • Finding “close” examples in a large training set quickly

K-NN and irrelevant features • [Figures: three scatter plots of + and o training examples, each with a query point marked ?]

Ways of rescaling for KNN • Normalized L1 distance • Scale by IG • Modified value distance metric
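One possible reading of the normalized L1 distance, sketched under the assumption that each feature's absolute difference is divided by that feature's range in the training data. The slide's exact formula is not reproduced here, and the helper names and data are hypothetical.

```python
def feature_ranges(points):
    # Range (max - min) of each feature column; 1.0 for constant features.
    ranges = [max(col) - min(col) for col in zip(*points)]
    return [r if r else 1.0 for r in ranges]

def l1_distance(a, b, scale=None):
    # Plain L1 when scale is None, range-normalized L1 otherwise.
    scale = scale or [1.0] * len(a)
    return sum(abs(p - q) / s for p, q, s in zip(a, b, scale))

def nearest_label(train, x, scale=None):
    return min(train, key=lambda ex: l1_distance(ex[0], x, scale))[1]

# Feature 1 separates the classes; feature 2 is on a much larger scale.
train = [((0.0, 0.0), "o"), ((0.1, 1000.0), "o"),
         ((1.0, 500.0), "+"), ((0.9, 400.0), "+")]
query = (0.05, 450.0)

raw = nearest_label(train, query)   # dominated by the large-scale feature
scaled = nearest_label(train, query, feature_ranges([x for x, _ in train]))
```

Without rescaling, the second feature decides the neighbor; after dividing by the ranges, both features contribute on a comparable scale.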

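Ways of rescaling for KNN • Dot product: $\mathrm{sim}(x, x') = \sum_i x_i x'_i$ • Cosine distance: $\cos(x, x') = \frac{\sum_i x_i x'_i}{\|x\|\,\|x'\|}$ • TFIDF weights for text: for doc j, feature i: $x_i = tf_{i,j} \cdot idf_i$, where $tf_{i,j}$ = #occur. of term i in doc j and $idf_i = \log\frac{\#\text{docs in corpus}}{\#\text{docs in corpus that contain term } i}$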
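A small sketch of TFIDF weighting with cosine similarity over sparse vectors, assuming the common log form of IDF and raw term counts for TF. The documents are tokenized toy examples, and all names are illustrative.

```python
import math
from collections import Counter

def tfidf_vectors(docs):
    # docs: list of token lists. Returns one sparse dict per document.
    n_docs = len(docs)
    doc_freq = Counter(term for doc in docs for term in set(doc))
    idf = {t: math.log(n_docs / doc_freq[t]) for t in doc_freq}
    return [{t: tf * idf[t] for t, tf in Counter(doc).items()} for doc in docs]

def dot(u, v):
    # Iterate over the shorter vector; missing terms contribute zero.
    if len(u) > len(v):
        u, v = v, u
    return sum(w * v.get(t, 0.0) for t, w in u.items())

def cosine(u, v):
    norm_u, norm_v = math.sqrt(dot(u, u)), math.sqrt(dot(v, v))
    return dot(u, v) / (norm_u * norm_v) if norm_u and norm_v else 0.0

docs = [["alien", "space", "horror"],
        ["space", "robot", "future"],
        ["family", "musical", "magic"]]
vecs = tfidf_vectors(docs)

shared_term = cosine(vecs[0], vecs[1])   # both mention "space"
no_overlap = cosine(vecs[0], vecs[2])    # no terms in common
```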

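Combining distances to neighbors • Standard KNN: $\hat{y} = \arg\max_y \sum_{i=1}^{k} [y_i = y]$, i.e. each of the k neighbors gets one vote • Distance-weighted KNN: $\hat{y} = \arg\max_y \sum_{i=1}^{k} \mathrm{sim}(x, x_i)\,[y_i = y]$, i.e. closer neighbors get larger votes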
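A sketch contrasting the two voting schemes, assuming inverse Euclidean distance as the vote weight (one of several reasonable weightings, not necessarily the one on the slide). The `weighted` flag and the toy data are illustrative.

```python
import math
from collections import defaultdict

def euclidean(a, b):
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))

def knn_vote(train, x, k, weighted):
    nearest = sorted(train, key=lambda ex: euclidean(ex[0], x))[:k]
    scores = defaultdict(float)
    for xi, yi in nearest:
        d = euclidean(xi, x)
        # Standard KNN: one vote each. Weighted: closer neighbors count more.
        scores[yi] += 1.0 / (d + 1e-9) if weighted else 1.0
    return max(scores, key=scores.get)

# One very close "+" and two farther "o" examples.
train = [((0.0,), "+"), ((2.0,), "o"), ((2.2,), "o")]
query = (0.5,)

standard = knn_vote(train, query, k=3, weighted=False)
by_distance = knn_vote(train, query, k=3, weighted=True)
```

The majority vote goes to the two farther examples, while the weighted vote follows the single close one.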

William W. Cohen & Haym Hirsh (1998): Joins that Generalize: Text Classification Using WHIRL in KDD 1998: 169-173.

Vitor Carvalho and William W. Cohen (2008): Ranking Users for Intelligent Message Addressing in ECIR-2008, and current work with Vitor, me, and Ramnath Balasubramanyan • [Figure: panels M1 and M2]

Computing KNN: pros and cons • Storage: all training examples are saved in memory • A decision tree or linear classifier is much smaller • Time: to classify x, you need to loop over all training examples (x’,y’) to compute distance between x and x’. • However, you get predictions for every class y • KNN is nice when there are many many classes • Actually, there are some tricks to speed this up…especially when data is sparse (e.g., text)

Efficiently implementing KNN (for text) • IDF is nice computationally
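One common way to make KNN fast on sparse text, sketched here as an assumption about what the slide has in mind: an inverted index from term to the documents containing it, so that only documents sharing a term with the query are ever scored. Rare, high-IDF terms have short posting lists, which is one reading of why IDF helps computationally. The names and weights are hypothetical.

```python
import heapq
from collections import defaultdict

def build_index(doc_vectors):
    # Map each term to a posting list of (doc_id, weight) pairs.
    index = defaultdict(list)
    for doc_id, vec in enumerate(doc_vectors):
        for term, weight in vec.items():
            index[term].append((doc_id, weight))
    return index

def top_k_by_dot(index, query_vec, k):
    # Accumulate dot products only for docs that share a term with the query.
    scores = defaultdict(float)
    for term, q_weight in query_vec.items():
        for doc_id, d_weight in index.get(term, []):
            scores[doc_id] += q_weight * d_weight
    return heapq.nlargest(k, scores.items(), key=lambda pair: pair[1])

# Sparse document vectors with made-up weights.
doc_vectors = [{"alien": 1.1, "space": 0.4},
               {"space": 0.4, "robot": 1.1},
               {"musical": 1.1}]
index = build_index(doc_vectors)
neighbors = top_k_by_dot(index, {"alien": 1.0, "space": 0.5}, k=2)
neighbor_ids = [doc_id for doc_id, _ in neighbors]
```

The third document shares no term with the query, so it is never touched during scoring.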

Tricks with fast KNN • K-means using r-NN • Pick k points c1=x1, …, ck=xk as centers • For each center ci, find Di=Neighborhood(ci) • For each center ci, let ci=mean(Di) • Go to step 2…
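The loop above can be sketched as follows, assuming the neighborhood of a center is the set of points whose nearest center it is, and using a brute-force scan in place of a fast nearest-neighbor lookup. The fixed iteration count and the toy points are illustrative choices.

```python
import math

def euclidean(a, b):
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))

def mean(points):
    n = len(points)
    return tuple(sum(coords) / n for coords in zip(*points))

def kmeans(points, k, iterations=10):
    centers = list(points[:k])                      # step 1: first k points as centers
    for _ in range(iterations):
        neighborhoods = [[] for _ in centers]
        for p in points:                            # step 2: assign each point to its nearest center
            nearest = min(range(k), key=lambda i: euclidean(centers[i], p))
            neighborhoods[nearest].append(p)
        # step 3: move each center to the mean of its neighborhood
        centers = [mean(d) if d else c for d, c in zip(neighborhoods, centers)]
    return centers                                  # step 4 is the loop itself

points = [(0.0, 0.0), (10.0, 10.0), (0.0, 1.0),
          (1.0, 0.0), (10.0, 11.0), (11.0, 10.0)]
centers = kmeans(points, k=2)
```

The assignment step is where a fast neighbor search would plug in, since it is the part that repeatedly asks which center is closest to each point.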

Efficiently implementing KNN • Selective classification: given a training set and test set, find the N test cases that you can most confidently classify • [Figure: documents dj2, dj3, dj4]