Asynchronous Assertions



Asynchronous Assertions
Eddie Aftandilian and Sam Guyer, Tufts University
Martin Vechev, ETH Zurich and IBM Research
Eran Yahav, Technion

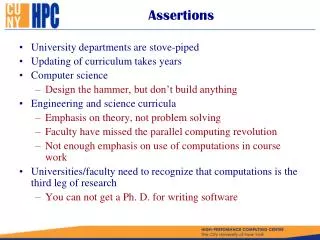

Motivation
• Assertions are great
• Assertions are not cheap
  • Cost paid at runtime
  • Limits kinds of assertions
• Our goal
  • Support more costly assertions (e.g., invariants)
  • Support more frequent assertions
  • Do it efficiently

Idea
• Asynchronous assertions
  • Evaluate assertions concurrently with program
  • Lots of cores (more coming)
  • Enable more expensive assertions
• Thank you. Questions?
• Two problems
  • What if program modifies data being checked?
  • When do we find out about failed assertions?

Solution
• Run assertions on a snapshot of program state
• Guarantee
  • Get the same result as synchronous evaluation
  • No race conditions
• How can we do this efficiently?

Our implementation
• Incremental construction of snapshots (copy-on-write)
• Integrated into the runtime system (Jikes RVM 3.1.1)
• Supports multiple in-flight assertions

Overview
Program thread:
    a = assert(OK());
    ...
    o.f = p;
    ...
    b = a.get();    ..wait..
    if (b) { ...
Checker thread:
    wait();
    OK() {
      ...
      if (o.f == …)    (needs to see old o.f)
      ...
      return res;
    }

Key
Main thread: o.f = p;
Write barrier:
    for each active assertion A
      if o not already in A’s snapshot
        o’ = copy o
        mapping(A, o) := o’
    modify o.f
Checker thread: if (o.f == …)
Read barrier:
    if o in my snapshot
      o’ = mapping(me, o)
      return o’.f
    else
      return o.f
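The two barriers above can be sketched in plain Java. This is only an illustration under stated assumptions: the barriers are written as explicit method calls (`writeF`, `readF`) rather than compiler-inserted code, everything runs on one thread with no synchronization, and the names `BarrierSketch`, `Obj`, `Assertion`, and `active` are hypothetical, not taken from the Jikes RVM implementation.

```java
import java.util.ArrayList;
import java.util.IdentityHashMap;
import java.util.List;
import java.util.Map;

// Illustrative only: single-threaded, barriers as explicit calls.
class BarrierSketch {
    static class Obj {
        Object f;
        Obj(Object f) { this.f = f; }
    }

    // One in-flight assertion with its private mapping from originals to copies.
    static class Assertion {
        final Map<Obj, Obj> snapshot = new IdentityHashMap<>();

        // Read barrier (checker side): use the snapshot copy if one exists.
        Object readF(Obj o) {
            Obj copy = snapshot.get(o);
            return (copy != null) ? copy.f : o.f;
        }
    }

    // All currently active assertions (hypothetical global registry).
    static final List<Assertion> active = new ArrayList<>();

    // Write barrier (main thread side): copy o for every active assertion
    // that does not hold a copy yet, then perform the store.
    static void writeF(Obj o, Object value) {
        for (Assertion a : active) {
            if (!a.snapshot.containsKey(o)) {
                a.snapshot.put(o, new Obj(o.f));
            }
        }
        o.f = value;
    }
}
```

With one active assertion, a call to `writeF(o, "new")` on an object holding `"old"` leaves `o.f` as `"new"` while the assertion's `readF(o)` still returns `"old"`.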

Implementation
• How do we know when a copy is needed?
  • Epoch counter E
    • Ticked each time a new assertion starts
  • On each object: copied-at timestamp
    • Last epoch in which a copy was made
  • Write barrier fast path:
    • If o.copied-at < E then some assertion needs a copy
• How do we implement the mapping?
  • Per-object forwarding array
  • One slot for each active assertion
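A minimal sketch of the epoch test and forwarding array, assuming a fixed number of assertion slots and a single mutator thread. The names (`EpochSketch`, `SLOTS`, `copiedAt`, `forwarding`) are hypothetical, and the locking and cleanup that the real system needs are left out.

```java
// Illustrative only: no locking, no cleanup of forwarding slots.
class EpochSketch {
    static final int SLOTS = 4;                  // assumed maximum of in-flight assertions
    static int epoch = 0;                        // E: ticked when an assertion starts
    static final boolean[] active = new boolean[SLOTS];

    static class Obj {
        int f;
        int copiedAt = 0;                        // last epoch in which a copy was made
        final Obj[] forwarding = new Obj[SLOTS]; // one slot per active assertion
        Obj(int f) { this.f = f; }
    }

    // Claims a free slot for a new assertion and ticks the epoch counter.
    static int startAssertion() {
        for (int s = 0; s < SLOTS; s++) {
            if (!active[s]) {
                active[s] = true;
                epoch++;
                return s;
            }
        }
        throw new IllegalStateException("no free assertion slot");
    }

    // Write barrier: the fast path is a single comparison against E.
    static void write(Obj o, int value) {
        if (o.copiedAt < epoch) {                // some assertion may need a copy
            for (int s = 0; s < SLOTS; s++) {
                if (active[s] && o.forwarding[s] == null) {
                    o.forwarding[s] = new Obj(o.f);
                }
            }
            o.copiedAt = epoch;
        }
        o.f = value;
    }

    // Read barrier for the assertion that owns the given slot.
    static int read(int slot, Obj o) {
        Obj copy = o.forwarding[slot];
        return (copy != null) ? copy.f : o.f;
    }
}
```

In this sketch each assertion sees the value the object held when that assertion started: after `startAssertion()`, `write(o, 2)`, `startAssertion()`, `write(o, 3)` on an object that began at 1, the first slot reads 1 and the second reads 2.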

Tricky bits
• Synchronization
  • Potential race condition
    • Checker looks in mapping, o not there
    • Application writes o.f
    • Checker reads o.f
  • Lock on copied-at timestamp
• Cleanup
  • Zero out slot in forwarding array of all copied objects
  • We need to keep a list of them
  • Copies themselves reclaimed during GC

Optimizations
• Snapshot sharing
  • All assertions that need a copy can share it
  • Reduces performance overhead by 25-30%
  • Store copy in each empty slot in forwarding array (for active assertions)
• Avoid snapshotting new objects
  • New objects are not in any snapshot
  • Idea: initialize copied-at to E
  • Fast path test fails until new assertion started
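Both optimizations can be illustrated with a small single-threaded sketch. It assumes a fixed-size forwarding array per object; the names (`SharingSketch`, `SLOTS`, `start`) are hypothetical and the code makes no claim about how the real runtime lays out these fields.

```java
// Illustrative only: shows snapshot sharing and the new-object shortcut.
class SharingSketch {
    static final int SLOTS = 4;                  // assumed maximum of in-flight assertions
    static int epoch = 0;                        // E
    static final boolean[] active = new boolean[SLOTS];

    static class Obj {
        int f;
        int copiedAt = epoch;                    // new objects start at E, so the fast-path test fails
        final Obj[] forwarding = new Obj[SLOTS];
        Obj(int f) { this.f = f; }
    }

    // Claims a free slot for a new assertion and ticks the epoch counter.
    static int start() {
        for (int s = 0; s < SLOTS; s++) {
            if (!active[s]) {
                active[s] = true;
                epoch++;
                return s;
            }
        }
        throw new IllegalStateException("no free assertion slot");
    }

    // Write barrier with sharing: one copy fills every empty active slot.
    static void write(Obj o, int value) {
        if (o.copiedAt < epoch) {
            Obj shared = null;
            for (int s = 0; s < SLOTS; s++) {
                if (active[s] && o.forwarding[s] == null) {
                    if (shared == null) {
                        shared = new Obj(o.f);   // made at most once per barrier hit
                    }
                    o.forwarding[s] = shared;
                }
            }
            o.copiedAt = epoch;
        }
        o.f = value;
    }
}
```

Sharing is safe here because every assertion whose slot is still empty started after the object's last write, so they would all copy the same state. An object allocated while assertions are running is never copied until a later assertion starts.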

Interface
• Waiting for assertion result
  • Traditional assertion model
    • Assertion triggers whenever check completes
  • Futures model
    • Wait for assertion result before proceeding
  • Another option: “Unfire the missiles!”
    • Roll back side-effecting computations after receiving assertion failure
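The futures model can be approximated in standard Java with `CompletableFuture`. This sketch is an assumption-laden stand-in: plain Java has no runtime snapshot support, so an eager `List.copyOf` replaces the incremental copy-on-write snapshot, and the names `FutureAssertionSketch`, `assertAsync`, and `isSorted` are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.CompletableFuture;

class FutureAssertionSketch {
    // Invariant to check: the list is sorted in ascending order.
    static boolean isSorted(List<Integer> xs) {
        for (int i = 1; i < xs.size(); i++) {
            if (xs.get(i - 1) > xs.get(i)) {
                return false;
            }
        }
        return true;
    }

    // Starts the check on another thread. The eager copy stands in for the
    // runtime's incremental snapshot, which plain Java does not provide.
    static CompletableFuture<Boolean> assertAsync(List<Integer> data) {
        List<Integer> snapshot = List.copyOf(data);
        return CompletableFuture.supplyAsync(() -> isSorted(snapshot));
    }

    static boolean demo() {
        List<Integer> data = new ArrayList<>(List.of(1, 2, 3));
        CompletableFuture<Boolean> a = assertAsync(data);
        data.add(0);          // the program keeps mutating; the check sees the old state
        return a.join();      // futures model: wait for the result before proceeding
    }
}
```

Here `demo()` returns `true` because the check runs against the state at the time the assertion was started. The traditional model would instead attach a callback (for example with `thenAccept`) that reports a failure whenever the check completes.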

Evaluation
• Idea:
  • We know this can catch bugs
  • Goal: understand the performance space
• Two kinds of experiments
  • Microbenchmarks
    • Various data structures with invariants
    • Pulled from runtime invariant checking literature
  • pseudojbb
    • Instrumented with a synthetic assertion check
    • Performs a bounded DFS on database
    • Systematically control frequency and size of checks

Key results
• Performance
  • When there’s enough work
    • 7.2-7.5x speedup vs. synchronous evaluation
    • With 10 checker threads
    • Ex.: 12x slowdown with sync evaluation → 1.65x with async
  • When there’s less work: 0-60% overhead
• Extra memory usage for snapshots
  • 30-70 MB for JBB (out of 210 MB allocated)
  • In steady state, almost all mutated objects are copied
  • Cost plateaus
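As a rough consistency check on the example figures, assuming the speedup is the ratio of the two normalized runtimes (an interpretation, not stated on the slide):

$$
\frac{12}{1.65} \approx 7.27
$$

which falls within the reported 7.2-7.5x range.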

Pseudojbb graph schema
[Figure: normalized runtime (baseline 1.0) plotted against assertion workload, from OK to overloaded, with Sync and Async curves; annotations: snapshot overhead, application waiting.]

Related work
• Concurrent GC
• Futures
• Runtime invariant checking
• FAQ: why didn’t you use STM?
  • Wrong model
    • We don’t want to abort
    • In STM, transaction sees new state, other threads see snapshot
    • Weird for us: the entire main thread would be a transaction

Conclusions
• Execute data structure checks in separate threads
• Checks are written in standard Java
• Handle all synchronization automatically
• Enables more expensive assertions than traditional synchronous evaluation

Thank you!

Copy-on-write implementation
[Diagram: object obj with copies; labels a, b, a, c, b.]

Related work
• Safe Futures for Java [Welc 05]
• Future Contracts [Dimoulas 09]
• Ditto [Shankar 07]
• SuperPin [Wallace 07]
• Speculative execution [Nightingale 08, Kelsey 09, Susskraut 09]
• Concurrent GC
• Transactional memory