Program Evaluation



Program Evaluation How Do I Show This Works? Paul F. Cook, PhD UCDHSC School of Nursing

Why Evaluate? • We have to (state, federal, or contract regulations) • In order to compete (JCAHO, NCQA, URAC) • It helps us manage staff • It helps us manage programs • It helps us maintain high-quality programs • It helps us develop even better programs

Targets for Evaluation • Services (ongoing quality of services delivered) • Systems (service settings or workflows) • Programs (special projects or initiatives) • “horse races” to decide how to use limited resources • cost/benefit analysis may be included • People (quality of services by individuals) • provider or site “report cards” • clinical practice guideline audits • supervisor evaluation of individuals Grembowski. (2001). The Practice of Health Program Evaluation

Basic: One-Time Evaluation • Special projects • Grants • Pilot programs. May have a control group or use a pre-post design. Opportunity for better-designed research. Finish it and you’re done.

Intermediate: Quality Improvement • Measure (access, best practices, patient satisfaction, provider satisfaction, clinical outcomes) • Identify Barriers • Make Improvements • Re-Measure • Etc. (Cycle diagram: Measure → Identify Barriers → Improve → Measure)

Advanced: “Management by Data” • Data “dashboards” • Real-time monitoring of important indicators • Requires automatic data capture (no manual entry) and reporting software – sophisticated IT. If you want to try this at home: • SQL database (or start small with MS Access) • Crystal Reports report templates • Crystal Enterprise software to automate reporting
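
As a rough illustration of the "SQL database" starting point, the sketch below computes one dashboard indicator per month using Python's built-in sqlite3 module. The table name `visits`, its columns, and the sample rows are hypothetical and are not from the presentation; a real dashboard would read from automatically captured data rather than rows typed in by hand.

```python
import sqlite3

def monthly_indicator(rows):
    """Return (month, number of visits, percent with follow-up done) per month.

    `rows` is a list of (visit_month, followup_done) tuples, where
    followup_done is 1 or 0. Table and column names are hypothetical.
    """
    conn = sqlite3.connect(":memory:")  # throwaway in-memory database
    conn.execute("CREATE TABLE visits (visit_month TEXT, followup_done INTEGER)")
    conn.executemany("INSERT INTO visits VALUES (?, ?)", rows)
    result = conn.execute(
        "SELECT visit_month, COUNT(*) AS n, "
        "ROUND(100.0 * SUM(followup_done) / COUNT(*), 1) AS pct "
        "FROM visits GROUP BY visit_month ORDER BY visit_month"
    ).fetchall()
    conn.close()
    return result

# Hypothetical sample data: two visits in each of two months
sample = [("2024-01", 1), ("2024-01", 0), ("2024-02", 1), ("2024-02", 1)]
for month, n, pct in monthly_indicator(sample):
    print(month, n, pct)
```

The same query could sit behind a report template; the point is only that the indicator is defined once in SQL and recomputed whenever new data arrive.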

Worksheet for a QI Project • Name (action word: e.g., “Improving …”) • Needs assessment • Target population • Identified need • Performance measures • Baseline data • Timeframe for remeasurement • Benchmark and/or goal • Barriers and opportunities • Strong and targeted actions • Remeasurement and next steps

“You can accomplish anything in life, provided that you do not mind who gets the credit.” —Harry S. Truman

Start with Stakeholders • Even if you know the right problem to fix, someone needs to buy in – who are they? • Coworkers • Management • Administration • Consumer advocates • Community organizations (CBPR) • The healthcare marketplace • Strategy: sell your idea at several levels • To Succeed: focus on each group’s needs Fisher, Ury, & Patton. (2003). Getting to Yes

Needs Assessment (Formative Evaluation) • Use data, if you have them • Describe current environment, current needs or goals, past efforts & results • Various methods: • Administrative services data • Administrative cost data • Administrative clinical data (e.g., EMR) • Chart review data (a small sample is OK) • Survey data (a small sample is OK) • Epidemiology data or published literature

Common Rationales for a QIP • High Risk • High Cost • High Volume • Need for Prevention

Program Design • Theoretical basis for the program • Resources needed • Time • People • Money/equipment • Space • Concrete steps for implementing the program • Manuals • Software • Tools/supplies • Training • Ongoing supervision

How Implementation May Fail • Lack of fidelity to theory • Providers not adequately trained • Treatment not implemented as designed • Participants didn’t participate • Participants didn’t receive “active ingredients” • Participants didn’t enact new skills • Results didn’t generalize across time, situations Bellg, et al. (2004). Health Psychology, 23(5), 443-451

Selling Innovations It may help to emphasize: • Relative advantage of the change • Compatibility with the current system • Simplicity of the change and of the transition • Testability of the results • Observability of the improvement Rogers. (1995). The Diffusion of Innovations.

“The Commanding General is well aware that the forecasts are no good. However, he needs them for planning purposes.” — Nobel laureate economist Kenneth Arrow, quoting from his time as an Air Force weather forecaster

Asking the Question • Ask the right question • Innovation • Process (many levels) • Outcome • Impact • Capacity-Building/Sustainability • Try to answer only one question • Focus on the data you must have at the end • Consider other stakeholders’ interests • Collect data on side issues as you can • Attend to “respondent burden” concerns

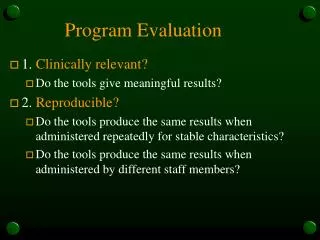

Standard Issues in Measurement • Reliability (data are not showing random error) • Validity (measuring the right construct) • Responsiveness (ability to detect change) • Acceptability (usefulness in practice). Existing measures may not work in all settings; unvalidated measures may not tell you anything.

Data Sources (diagram: sources that feed into “Answers”) • Provider Surveys • Qualitative/Focus Group Data • Patient Satisfaction Surveys • Safety Monitoring Data • Chart Review Data • Interview Data • Financial Data • Encounter Data • Patient Outcome Surveys

No Perfect Method • Patient survey • Recall bias • Social desirability bias • Response bias • Observer ratings • Inter-rater variability • Availability heuristic (“clinician’s illusion”) • Fundamental attribution error • Administrative data • Collected for other purposes • Gaps in coverage, care, eligibility, etc. Goal: measures that are “objective, quantifiable, and based on current scientific knowledge”

Benchmarking • Industry standards (e.g., JCAHO) • Peer organizations • “Normative data” for specific instruments • Research literature • Systematic reviews • Meta-analyses • Individual articles in peer-reviewed journals

Setting Goals • An average or a percent is OK • Set an absolute goal: “improve to 50%” vs “improve by 10% over baseline” • “improve by x percentage points”, not • “improve by x percent” (this depends on base rate) • Set an achievable goal: if you’re at 40%, don’t make it 90% • Set a ‘stretch goal’ - not too easy • ‘Zero performance defects’ (100%) is rarely helpful – use 95% or 99% performance instead
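
To make the "percentage points" versus "percent" distinction concrete, here is a minimal sketch with hypothetical baseline rates (the numbers are illustrative, not from the presentation). The same 10-point gain is a very different relative improvement depending on the base rate, which is why the slide recommends stating goals in percentage points.

```python
def point_change(baseline, followup):
    """Absolute change, in percentage points."""
    return followup - baseline

def relative_change_pct(baseline, followup):
    """Relative change, as a percent of the baseline rate."""
    return (followup - baseline) / baseline * 100

# Hypothetical baselines: the same 10-point gain from two starting rates
for baseline in (10.0, 40.0):
    followup = baseline + 10.0
    print(
        f"baseline {baseline:.0f}% -> {followup:.0f}%: "
        f"{point_change(baseline, followup):.0f} points, "
        f"{relative_change_pct(baseline, followup):.0f}% relative change"
    )
```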

Special Issues in Setting Goals • Absolute number may work as a goal in some scenarios – e.g., # of rural health consults/yr – but percents or averages allow statistical significance testing • Improvement from zero to something is usually not seen as improvement (“so your program is new; but what good did it do?”) • Don’t convert a scale to a percent (i.e., don’t use a cut-off point) unless you absolutely must Owen & Froman (2005). RINAH, 28, 496-503

Specifying the “Denominator” • Level of analysis • Unit • Provider • Patient • For some populations, family • A subgroup, or the entire population? • Primary (entire population) • Secondary (at-risk population) • Tertiary (identified patients) • Subgroups of patients (e.g., CHD with complications)

Baseline Data • May be pre-existing • Charts • Administrative data • May need to collect data prior to starting • Surveys • Whatever you do for baseline, you will need to do it exactly the same way for remeasurement • Same type of informant (e.g., providers) • Same instruments (e.g., chart audit tool) • Same method of administration (e.g., by phone)

Sampling • Representativeness • Random sample, stratified sample, quota sample • Characteristics of volunteers • Underrepresented groups • Effect of survey method (phone, Internet) • Response Rate • 10% is good for industry • If you have 100% of the data available, use 100% • Finding the right sample size: http://www.surveysystem.com/sscalc.htm
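
For readers who prefer to compute a sample size directly, the sketch below uses the standard formula for estimating a proportion, with a finite population correction. It is offered as an assumption about what such calculators typically do, not as a description of the linked tool; the defaults (95% confidence, a margin of error of 5 percentage points, and the most conservative proportion of 0.5) are illustrative choices.

```python
import math

def sample_size(population, margin=0.05, z=1.96, p=0.5):
    """Sample size needed to estimate a proportion within +/- margin.

    z=1.96 corresponds to 95% confidence; p=0.5 is the most conservative
    guess at the true proportion. All defaults are illustrative assumptions.
    """
    n0 = (z ** 2) * p * (1 - p) / margin ** 2   # size for a very large population
    n = n0 / (1 + (n0 - 1) / population)        # finite population correction
    return math.ceil(n)

# Hypothetical population sizes
for population in (100, 1000, 10000):
    print(population, sample_size(population))
```

Note how the required sample grows much more slowly than the population: a small clinic may need to survey most of its patients, while a large system needs only a few hundred.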

Evaluation Frequency and Duration • Seasonal trends (selection bias) • Confounding factors • Organizational change (history) • Outside events (history) • Other changes in the organization (maturation) • Change in patient case mix (selection bias) • For the same subjects over time (pre/post): • Notice the shrinking denominator (attrition) • If your subjects know they are being evaluated: • Don’t evaluate too often (testing) • Don’t evaluate too rarely (reactivity)

Snow White and the 7 Threats to Validity • History – external events • Maturation – mere passage of time • Testing – observation changes the results • Instrumentation – random noise on the radar • Mortality/Attrition – data lost to follow-up • Selection Bias – not a representative sample • Reactivity – placebo (Hawthorne) effects Grace. (1996). http://www.son.rochester.edu/son/research/research-fables.

Study Design [Figure: study designs numbered 9 through 1; the figure content is not recoverable from the transcript.] Adapted from Bamberger et al. (2006). RealWorld Evaluation

Post Hoc Evaluation • Can you get posttest for the intervention? • Design 9 • Can you get baseline for the intervention? • Design 8 or 7 • Can you get posttest for a comparison group? • Design 6 • Can you get baseline for the comparison group? • Design 5 or 4 • (Randomization requires a prospective design)

Cost-Effectiveness Evaluation • From “does it work” to “can we afford it?” • Methods for cost-effectiveness evaluation: • Cost-offset: does it save more than it spends? • Cost-benefit: do the benefits produced (measured in $ terms – e.g., QALYs, 1/$50K) exceed the $ costs? • Cost-effectiveness: do the health benefits produced (measured as clinical outcomes – e.g. reduced risk based on odds ratios) justify the $ costs? • Cost-utility: do the health benefits produced (measured based on consumer preferences) justify the $ costs? (e.g., based on willingness to pay) Kaplan & Groessl. (2002). J Consult Clin Psych, 70(3), 482-493
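
The arithmetic behind three of these questions can be sketched as follows. All dollar figures are hypothetical, and the $50,000-per-QALY value is an interpretation of the slide's "1/$50K" shorthand rather than something the presentation spells out.

```python
VALUE_PER_QALY = 50_000  # assumption: one QALY valued at $50K, per the slide's "1/$50K"

def cost_offset(program_cost, savings):
    """Cost-offset: net dollars saved (positive means it saves more than it spends)."""
    return savings - program_cost

def net_benefit(program_cost, qalys_gained, value_per_qaly=VALUE_PER_QALY):
    """Cost-benefit: dollar value of the benefits minus dollar costs."""
    return qalys_gained * value_per_qaly - program_cost

def cost_per_outcome(program_cost, outcomes_achieved):
    """Cost-effectiveness: dollars spent per clinical outcome achieved."""
    return program_cost / outcomes_achieved

# Hypothetical program: costs $100,000, saves $120,000 in other care,
# gains 3 QALYs, and prevents 25 hospital readmissions.
print(cost_offset(100_000, 120_000))   # net savings in dollars
print(net_benefit(100_000, 3))         # monetized benefit minus cost
print(cost_per_outcome(100_000, 25))   # dollars per readmission prevented
```

Whether a given cost per outcome is "justified" remains a judgment for stakeholders; the calculation only makes the trade-off explicit.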

Statistics • Descriptive statistics: “how big?” • Averages & Standard Deviations • Correlations • Odds Ratios • Inferential statistics: “how likely?” • Various tests (t, F, chi-square) • Correct test to use depends on the type of data • All give you a p-value (the probability of a result at least this extreme if only chance were at work) • “Significant” or not is highly dependent on sample N
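
The dependence of "significance" on sample size can be seen in a small sketch. It uses a two-sided z-test for the difference between two proportions, chosen here only because it needs nothing beyond the Python standard library; the rates and sample sizes are hypothetical.

```python
import math

def two_proportion_p_value(p1, p2, n1, n2):
    """Two-sided p-value for the difference between two proportions (z-test)."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p2 - p1) / se
    # erfc(|z| / sqrt(2)) equals 2 * (1 - Phi(|z|)) for the standard normal
    return math.erfc(abs(z) / math.sqrt(2))

# Same hypothetical improvement (40% at baseline, 50% at remeasurement),
# evaluated with different numbers of patients per measurement.
for n in (50, 500):
    p = two_proportion_p_value(0.40, 0.50, n, n)
    print(f"n = {n} per group: p = {p:.4f}")
```

The effect size is identical in both runs; only the sample size changes, and with it the conclusion about statistical significance at the conventional 0.05 level.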

Evaluating Actions • Strong • Designed to address the barriers identified • Consistent with past experience/research literature • Seem likely to have an impact • Implemented effectively & consistently • Targeted • Right time • Right place • Right people • Have an impact on the barriers identified NCQA. (2003). Standards and Guidelines for the Accreditation of MBHOs.

Theory-Based Actions • What is the problem? (descriptive) • What causes the problem? (problem theory) • People • Processes • Unmet needs • How to solve the problem? (theory of change) • Educate • Coach or Train • Communicate or build linkages • Redesign existing systems or services • Design new systems or services • Use new technologies

Describe the Process • Needs analysis and stakeholder input • Identification of barriers • Theory basis for the intervention • What actions were considered? • Why were these actions chosen? • How did these actions address the identified barriers? • Implementation • What was done? • Who did it? • How were they monitored, supervised, etc.? • For how long, in what amount, in what way was it done? • Data collection • What measures were used? • How were the data collected, and by whom?

Describe the Results (Summative Evaluation) • What were the outcomes? • Data on the primary outcome measure • Compare to baseline (if available) • Compare to goal • Compare to benchmark • Provide data on any secondary measures that also support your conclusions about program outcomes • What else did you find out? • Answers to any additional questions that came up • Any other interesting findings (lessons learned) • Show a graph of the results
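
The three comparisons on the primary outcome can be laid out in a few lines. This is a minimal sketch that assumes a measure where higher is better and uses hypothetical values; the function name and the dictionary keys are illustrative.

```python
def summarize_outcome(result, baseline, goal, benchmark):
    """Compare a remeasurement to baseline, goal, and benchmark.

    Assumes higher values are better; all inputs are percentages.
    """
    return {
        "change_from_baseline": result - baseline,  # in percentage points
        "met_goal": result >= goal,
        "met_benchmark": result >= benchmark,
    }

# Hypothetical project: 40% at baseline, 52% at remeasurement,
# an internal goal of 50%, and an external benchmark of 60%.
summary = summarize_outcome(result=52, baseline=40, goal=50, benchmark=60)
for name, value in summary.items():
    print(f"{name}: {value}")
```

A result like this one (goal met, benchmark not yet reached) is a natural lead-in to the next improvement cycle.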

“Getting information from a table is like extracting sunlight from a cucumber.” —Wainer & Thissen, 1981

Conclusions • If the goals were met: • What key barriers were targeted? • What was the most effective action, and why? • If the goals were not met: • Did you miss some key barriers to improvement? • Was the idea good, but there were barriers to implementation that you didn’t anticipate? What were they, and how could they be overcome? • Did you get only part way there (e.g., change in knowledge but not change in behavior)? • Did the intervention produce results on other important outcomes instead?

Dissemination • Back to the original stakeholder groups • Remind them – needs, goals, and actions • Address additional questions or concerns • “That’s a good suggestion; we could try it going forward, and see whether it helps” • “We did try that, and here’s what we found” • “We didn’t have time/money/experience to do that, but we can explore it for the future” • “We didn’t think of that question, but we do have some data that might answer it” • “We don’t have data to answer that question, but it’s a good idea for future study”

Broader Dissemination • Organizational newsletter • Summaries for patients, providers, payors • Trade association conference or publication • Scholarly research conference presentation • Rocky Mountain EBP Conference • WIN Conference • Scholarly journal article • Where to publish depends on rigor of the design • Look at journal “impact factor” (higher = broader reach, but also more selective) • Popular press

Next Steps • PDSA model: after “plan-do-study,” the final step is “act” – roll out the program as widely as possible to obtain all possible benefits • Use lessons learned in this project as the needs analysis for your next improvement activity • Apply what you’ve learned about success in this area to design interventions in other areas • Set higher goals, and design additional actions to address the same problem even better