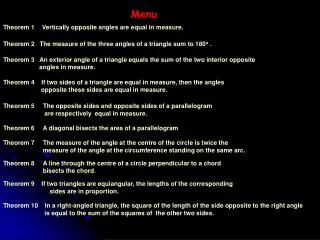

Menu

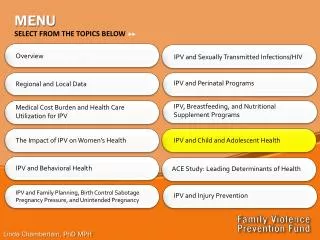

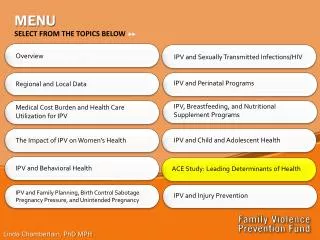

Menu. Report from:. Software Week LHC Alignment Workshop. Few Words on:. ID Detector News CSC for Alignment To Do List Strategy for a physics analysis start Miscellanea (computing, future meetings, etc…). LHC Alignment Workshop.

Menu

E N D

Presentation Transcript

Menu
Report from:
• Software Week
• LHC Alignment Workshop
Few words on:
• ID Detector News
• CSC for Alignment
• To-Do List
• Strategy for a physics analysis start
• Miscellanea (computing, future meetings, etc.)

LHC Alignment Workshop
• Very interesting meeting; people from different experiments shared their experience:
  • LHC people focused on strategies and problems
  • Running experiments reported how they have tackled the problem (CDF was also represented)
The impression was that the LHC experiments want to achieve an unprecedented level of precision. There was a lot of discussion about methods and common issues to solve (matrix inversion, speed, validation, etc.).

Tracking requirements
• ATLAS ID TDR: degradation due to geometry knowledge <20% on impact parameter and momentum (plots shown for impact parameter and momentum)
• Reasonable goal:
  • Pixel: σ_Rφ = 7 μm
  • SCT: σ_Rφ = 12 μm
  • TRT: σ_Rφ = 30 μm
• Furthermore, studies of the impact of SCT+Pixel random misalignment on b-tagging abilities show:
  • light-jet rejection gets worse by 10% for σ_Rφ = 10 μm
  • light-jet rejection gets worse by 30% for σ_Rφ = 20 μm
  (S. Corréard et al., ATL-COM-PHYS-2003-049)
Florian Bauer, 4/9/2006, LHC Alignment Workshop

Initial Misalignment
• Misalign detector elements at the simulation level
• Initial misalignment of the order of 1mm to 30 mm has a great impact on tracking performance
• Misalignment at the reconstruction level produces the same results
Sergio Gonzalez, Grant Gorfine

Software requirements
• The ID consists of 1744 Pixel, 4088 SCT and 124 TRT modules
• => 5956 modules x 6 DoF ~ 35,000 DoFs
• This implies the inversion of a 35k x 35k matrix
• Use calibration data such as X-ray and 3D measurements to set up the best initial geometry
• Combine information from tracks and optical measurements such as FSI
• Reduce weakly determined modes using constraints: vertex position, track parameters from other tracking detectors, mass constraints of known resonances, overlap hits, modelling, E/p constraint from calorimeters, known mechanical properties, etc.
• Ability to provide alignment constants 24h after data taking (ATLAS events should be reconstructed within that time)
• And last but not least, work under the ATLAS framework: ATHENA
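The arithmetic behind the "35k x 35k" figure can be checked with a few lines of Python. This is only a back-of-the-envelope sketch: the module counts are those quoted on the slide, while the function name and the assumption of dense double-precision storage (8 bytes per element) are illustrative and not taken from the talk.

```python
# Module counts quoted on the slide.
MODULES = {"Pixel": 1744, "SCT": 4088, "TRT": 124}
DOF_PER_MODULE = 6  # three translations and three rotations per module


def alignment_problem_size(modules=MODULES, dof_per_module=DOF_PER_MODULE):
    """Return (number of modules, number of DoFs, bytes for a dense matrix)."""
    n_modules = sum(modules.values())
    n_dof = n_modules * dof_per_module
    # Assumption: the full matrix is stored densely in double precision.
    dense_bytes = n_dof * n_dof * 8
    return n_modules, n_dof, dense_bytes


n_modules, n_dof, dense_bytes = alignment_problem_size()
print(f"{n_modules} modules, {n_dof} DoFs, {dense_bytes / 1e9:.1f} GB dense")
```

Under these assumptions the exact count is 35736 DoFs and the dense matrix takes roughly 10 GB, which gives a feeling for why inversion speed and memory were recurring topics at the workshop.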

Many approaches
• Global χ² minimisation (the 35k x 35k inversion)
• Local χ² minimisation (correlations between modules set to 0, only the sub-matrices are inverted, iterative method)
• Robust alignment (uses overlap residuals to determine the relative module-to-module misalignment, iterative method)
Furthermore, work has been done on:
• Runtime alignment system (FSI)
• B-field
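The difference between the global and the local χ² approach can be sketched on a toy linear system M a = b, where M stands for the matrix of second derivatives of the χ² and b for the first-derivative vector (sign conventions absorbed into b). Everything below is a hypothetical illustration, not the ATLAS code: the tridiagonal toy matrix, the function names and the block size are assumptions, and the local method is written as a plain block-Jacobi iteration.

```python
import numpy as np


def solve_global(M, b):
    """Global approach: solve the full system, keeping all correlations."""
    return np.linalg.solve(M, b)


def solve_local(M, b, block=2, n_iter=50):
    """Local approach: invert only the per-module diagonal blocks and iterate.

    Correlations between modules are ignored within one pass and are
    recovered only through the iterations (block-Jacobi scheme).
    """
    n = len(b)
    a = np.zeros(n)
    for _ in range(n_iter):
        residual = b - M @ a  # what the current estimate still fails to explain
        for start in range(0, n, block):
            sl = slice(start, start + block)
            a[sl] += np.linalg.solve(M[sl, sl], residual[sl])
    return a


# Toy system: 6 "modules" x 2 DoF, each DoF weakly coupled to its neighbours.
n = 12
M = 4.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)
b = np.arange(1, n + 1, dtype=float)

a_global = solve_global(M, b)
a_one_pass = solve_local(M, b, n_iter=1)
a_local = solve_local(M, b, n_iter=50)
print(np.max(np.abs(a_one_pass - a_global)), np.max(np.abs(a_local - a_global)))
```

In this toy the couplings are small, so the iterations converge quickly to the global answer. When the inter-module correlations are strong, convergence of such a local scheme can be slow, which is one reason the full inversion is still of interest.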

“Weak modes” - examples
• “clocking”: rotation in φ varying with R (VTX constraint)
• radial distortions (various)
• “telescope”: Δz ~ R
• φ-dependent sagitta: ΔX = a + bR + cR²
• η-dependent sagitta (“global twist”): Δφ ~ R cot(θ)
• global sagitta: R …
We need extra handles in order to tackle these. Candidates:
• Requirement of a common vertex for a group of tracks (VTX constraint)
• Constraints on track parameters or vertex position (external tracking (TRT, Muons?), calorimetry, resonant mass, etc.)
• Cosmic events
• External constraints on alignment parameters (hardware systems, mechanical constraints, etc.)
[PHYSTAT’05 proceedings] & talk from Tobi Golling

Example “lowest modes” in PIX+SCT as reconstructed by the χ² algorithm. Global degrees of freedom have been ignored (only one Z slice shown).
• The above “weak modes” contribute to the lowest part of the eigen-spectrum. Consequently they dominate the overall error on the alignment parameters.
• More importantly, these deformations lead directly to biases on physics (systematic effects).
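Why the lowest eigenvalues dominate the error can be seen in a small numerical toy. The sketch below is an assumption-laden illustration, not the PIX+SCT study: it uses a 1D chain of modules where tracks constrain only the relative offset of neighbours, plus a very loose external constraint `eps` on each module (both the chain and `eps` are hypothetical). The coherent shift of the whole chain then plays the role of a weak mode.

```python
import numpy as np


def chain_alignment_matrix(n_modules, eps):
    """Toy chi-square matrix for one offset per module.

    Each neighbouring pair contributes a residual (a[i+1] - a[i]) with unit
    error; eps is a weak external constraint on every module (assumption).
    """
    M = np.zeros((n_modules, n_modules))
    for i in range(n_modules - 1):
        M[i, i] += 1.0
        M[i + 1, i + 1] += 1.0
        M[i, i + 1] -= 1.0
        M[i + 1, i] -= 1.0
    M += eps * np.eye(n_modules)
    return M


n_modules, eps = 10, 1e-3
M = chain_alignment_matrix(n_modules, eps)

# eigh returns eigenvalues in ascending order for a symmetric matrix.
eigvals, eigvecs = np.linalg.eigh(M)

# The covariance is the inverse of M, so each eigenmode contributes a
# variance of 1 / eigenvalue to the alignment parameters.
variance_per_mode = 1.0 / eigvals
weak_fraction = variance_per_mode[0] / variance_per_mode.sum()
weakest_mode = eigvecs[:, 0]

print(f"lowest eigenvalue: {eigvals[0]:.4f}")
print(f"fraction of total variance in the weakest mode: {weak_fraction:.3f}")
```

In this toy the weakest mode is the uniform shift of all modules, and it alone carries almost all of the parameter variance. The real weak modes listed earlier are more subtle deformations, but the mechanism is the same: directions that the track residuals barely constrain end up at the bottom of the spectrum.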

Latest News: We need much more statistics; try to fix the CASTOR problem and run on multiple files. Try to speed up the code, maybe by not redoing the pattern recognition every time. Documentation: we can start to write down the alignment idea and the results, either as one note (algo, calibration and cosmic) or as two notes. Besides that, rumors indicate that people want a big public note on ID cosmics…

Some problems with the infrastructure
• The algorithms discussed here use a single CPU: this can never work in an online environment, because it is not feasible to process the required amount of data in a decent amount of time
• Need to split the algorithms into:
  • a part that collects data from tracks (i.e. the chi-square derivatives); perfectly suitable for parallel processing in online reconstruction
  • a part that calculates the alignment constants and writes them to the database; should run on a single CPU, for example at the end of a run, or at fixed intervals
• Is somebody designing this system for ATLAS? If so, is there already a template for calibration algorithms? I’d like to start using it …
… this raised some concern…
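The proposed split into a parallel "collect" part and a single-CPU "solve" part works because the chi-square derivative sums are additive over tracks. A minimal sketch, assuming a linear residual model with unit hit errors: the class `AlignmentAccumulator`, the function `solve_alignment` and the toy data are all hypothetical names for illustration, not an existing ATLAS or ATHENA interface, and the workers are simulated sequentially here.

```python
import numpy as np


class AlignmentAccumulator:
    """Collects chi-square derivative sums; one instance per worker."""

    def __init__(self, n_dof):
        self.M = np.zeros((n_dof, n_dof))  # second-derivative sum
        self.b = np.zeros(n_dof)           # first-derivative sum

    def add_track(self, jac, res):
        # jac: d(residual)/d(alignment parameter), shape (n_hits, n_dof)
        # res: hit residuals, shape (n_hits,); unit hit errors assumed
        self.M += jac.T @ jac
        self.b += jac.T @ res

    def merge(self, other):
        # Sums are additive, so partial results can be combined in any order.
        self.M += other.M
        self.b += other.b
        return self


def solve_alignment(acc):
    """Single-CPU step, e.g. at the end of a run."""
    return np.linalg.solve(acc.M, acc.b)


# Toy data: noise-free residuals generated from known offsets.
rng = np.random.default_rng(0)
a_true = np.array([0.10, -0.05, 0.02, 0.00])
tracks = []
for _ in range(60):
    jac = rng.normal(size=(3, a_true.size))
    tracks.append((jac, jac @ a_true))

# "Parallel" collection: three workers each see a third of the tracks.
workers = [AlignmentAccumulator(a_true.size) for _ in range(3)]
for i, (jac, res) in enumerate(tracks):
    workers[i % 3].add_track(jac, res)

# Merge and solve once.
total = AlignmentAccumulator(a_true.size)
for w in workers:
    total.merge(w)
a_fit = solve_alignment(total)
print(a_fit)
```

In a real system each accumulator would live in a separate reconstruction job and only the small summed objects would be shipped to the solving step, which is also where the constants would be written to the database.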

Another TRT SW meeting this Thursday to discuss organization and task sharing

Mail from Wouter today about To-Do list

About CSC:
• It is important to start now to convert the code from the cosmic setup to the collision setup (+MF).
• Daniel personally contacted me about it and really stressed its importance
• The NPI postdoc is here for two months and is willing to help with that and share the burden
• Meeting tomorrow with him, Daniel, Wouter, Peter et al…
• My feeling is that we should commit fully to this

On Analysis Start-up strategy:
• Give a talk on what we have done in CDF (AIDA) and why we think we can do better in ATLAS
• Look for some “external” collaboration. A lot of students and other people involved in commissioning are looking for physics topics. They get little or no “internal” advising, which makes this perfect for “external” mentoring (we need some contacts).
• Example: Antony from Melbourne…
• Caveat: it is useless to give a talk and step forward if we cannot follow up in a reasonable time frame (i.e. give a talk and see you in one year…)