Attention for translation

This presentation explains how attention mechanisms improve neural machine translation: the encoder maps the input sentence into a sequence of vectors, and the decoder selects the most relevant ones at each step, learning to align inputs with outputs. It covers soft attention and context vectors, self-attention and multi-headed attention, and related ways of using attention to augment RNNs.

Presentation Transcript

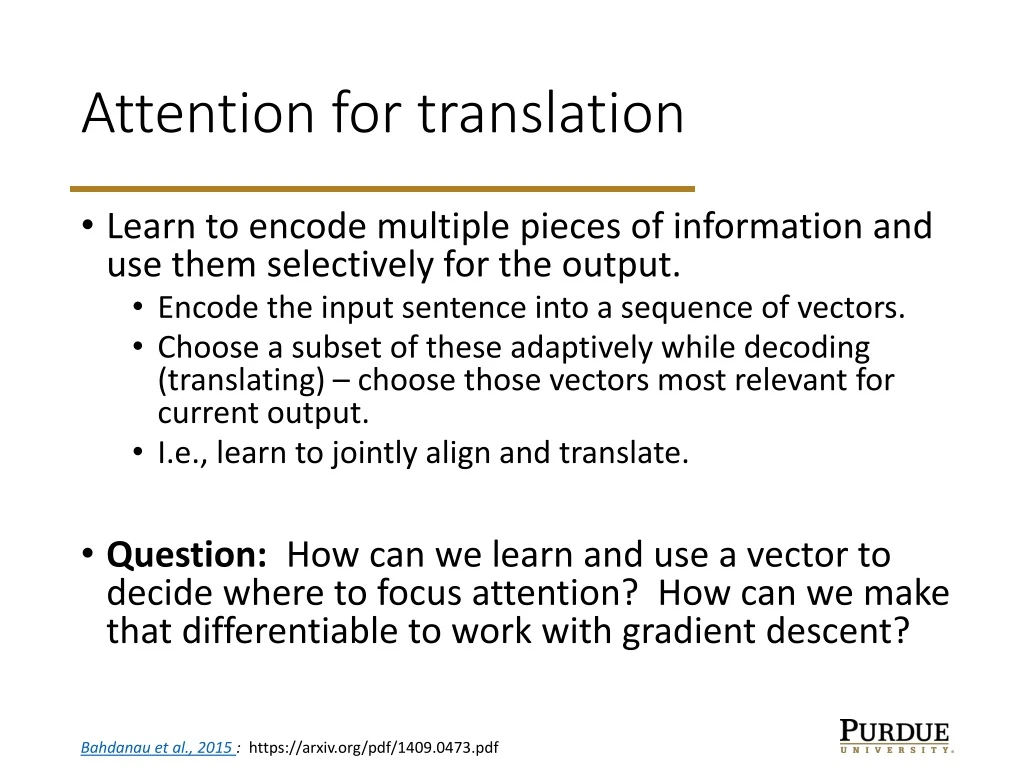

Attention for translation • Learn to encode multiple pieces of information and use them selectively for the output. • Encode the input sentence into a sequence of vectors. • Choose a subset of these adaptively while decoding (translating): choose those vectors most relevant for the current output. • I.e., learn to jointly align and translate. • Question: How can we learn and use a vector to decide where to focus attention? How can we make that differentiable to work with gradient descent? Bahdanau et al., 2015: https://arxiv.org/pdf/1409.0473.pdf

Soft attention • Use a probability distribution over all inputs. • Classification assigns a probability to each possible output. • Attention uses probabilities to weight all possible inputs, learning to weight the more relevant parts more heavily. https://distill.pub/2016/augmented-rnns/
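
A minimal sketch of such a distribution, assuming each input already has a real-valued relevance score (the scores below are made up for illustration). A softmax turns the scores into positive weights that sum to 1, so the most relevant input gets the largest weight while every input keeps some.

```python
import math

def softmax(scores):
    """Turn arbitrary real-valued scores into a probability distribution."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical relevance scores for four input positions.
scores = [2.0, 0.5, 0.1, -1.0]
weights = softmax(scores)
```

Because every step is differentiable, the weights can be trained with gradient descent along with the rest of the network.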

Attention for translation • Input, x, and output, y, are sequences. • The encoder has a hidden state h_i associated with each x_i. These states come from a bidirectional RNN, so each state encodes context from both sides of its input position. https://lilianweng.github.io/lil-log/2018/06/24/attention-attention.html

Attention for translation • Decoder: each output y_i is predicted using the previous output y_{i-1}, the decoder hidden state s_i, and the context vector c_i. • The decoder hidden state s_i depends on the previous output y_{i-1}, the previous state s_{i-1}, and the context vector c_i. • Attention is embedded in the context vector via learned weights. https://lilianweng.github.io/lil-log/2018/06/24/attention-attention.html

Context vector • The encoder hidden states are combined in a weighted average to form a context vector c_t for the t-th output. • This can capture features from each part of the input. https://lilianweng.github.io/lil-log/2018/06/24/attention-attention.html
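
The weighted average can be sketched as follows. The hidden states and attention weights are illustrative placeholders; in a trained model the weights would come from a softmax over alignment scores.

```python
def context_vector(weights, hidden_states):
    """Weighted average of encoder hidden states (weights are assumed to sum to 1)."""
    dim = len(hidden_states[0])
    return [
        sum(w * h[d] for w, h in zip(weights, hidden_states))
        for d in range(dim)
    ]

# Placeholder values: three encoder states of dimension 2.
hidden_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights = [0.7, 0.2, 0.1]  # attention for one output position

c_t = context_vector(weights, hidden_states)
```

Every encoder state contributes to the result in proportion to its weight, which is how the context vector can carry features from each part of the input.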

Attention for translation • The weights v_a and W_a belong to a small feed-forward network that scores each encoder state against the decoder state; they are trained jointly with the rest of the network. https://lilianweng.github.io/lil-log/2018/06/24/attention-attention.html
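
The linked post gives one form of this scoring network for additive attention: score(s, h) = v_a^T tanh(W_a [s; h]). The sketch below assumes that form. The dimensions and weight values are arbitrary placeholders, not trained parameters.

```python
import math

def additive_score(s, h, W_a, v_a):
    """Score one encoder state h against the decoder state s.

    Computes v_a . tanh(W_a [s; h]): one hidden layer with a scalar output.
    """
    x = s + h  # list concatenation gives [s; h]
    hidden = [math.tanh(sum(w * xj for w, xj in zip(row, x))) for row in W_a]
    return sum(v * a for v, a in zip(v_a, hidden))

# Placeholder values (assumptions): 2-d states, 3 hidden units.
s = [0.5, -0.5]
encoder_states = [[1.0, 0.0], [0.0, 1.0]]
W_a = [
    [0.1, 0.2, 0.3, 0.4],
    [-0.3, 0.1, 0.0, 0.2],
    [0.5, -0.5, 0.1, -0.1],
]
v_a = [1.0, -1.0, 0.5]

scores = [additive_score(s, h, W_a, v_a) for h in encoder_states]
```

A softmax over these scores would then give the attention weights used to build the context vector.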

Attention for translation • Key ideas: • Implement attention as a probability distribution over inputs/features. • Extend encoder/decoder pair to include context information relevant to the current decoding task. https://lilianweng.github.io/lil-log/2018/06/24/attention-attention.html

Attention with images • Can combine a CNN with an RNN using attention. • CNN extracts high-level features. • RNN generates a description, using attention to focus on relevant parts of the image. https://distill.pub/2016/augmented-rnns/

Self-attention • Previous: focus attention on the input while working on the output • Self-attention: focus on other parts of input while processing the input http://jalammar.github.io/illustrated-transformer/

Self-attention • Use each input vector to produce a query, key, and value for that input: each is obtained by multiplying the input's embedding by one of the learned matrices W_Q, W_K, W_V, respectively. http://jalammar.github.io/illustrated-transformer/

Self-attention • Similarity is determined by the dot product of the query of one input with the keys of all the inputs. • E.g., for input 1, get a vector of dot products q_1·k_1, q_1·k_2, … • Scale these and apply a softmax to get a distribution over the input vectors. This gives a distribution p_11, p_12, … that is the attention of input 1 on all inputs. • Use the attention vector to do a weighted sum over the value vectors of the inputs: z_1 = p_11 v_1 + p_12 v_2 + … • This is the output of the self-attention for input 1. http://jalammar.github.io/illustrated-transformer/
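
The steps above can be sketched in plain Python. Two things here are assumptions, not taken from the slides: the scaling divides by the square root of the key dimension, as in the Transformer, and the projection matrices are identity placeholders in place of learned weights.

```python
import math

def matvec(x, W):
    """Row vector x (length n) times matrix W (n x m)."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(X, W_Q, W_K, W_V):
    """Return (Z, P): outputs z_i and attention distributions p_i for each input."""
    Q = [matvec(x, W_Q) for x in X]
    K = [matvec(x, W_K) for x in X]
    V = [matvec(x, W_V) for x in X]
    scale = math.sqrt(len(K[0]))  # assumed scaling: sqrt of key dimension
    Z, P = [], []
    for q in Q:
        p = softmax([dot(q, k) / scale for k in K])  # p_i1, p_i2, ...
        z = [sum(p_j * v[d] for p_j, v in zip(p, V)) for d in range(len(V[0]))]
        P.append(p)
        Z.append(z)
    return Z, P

# Placeholder inputs and identity projections (illustrative only).
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
I = [[1.0, 0.0], [0.0, 1.0]]
Z, P = self_attention(X, I, I, I)
```

Row `P[0]` is the attention of input 1 over all inputs, and `Z[0]` is the corresponding weighted sum of value vectors.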

Self-attention • Multi-headed attention: run several copies in parallel and concatenate the outputs for next layer.
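
A rough sketch of the idea, assuming each head is a scaled dot-product self-attention with its own (W_Q, W_K, W_V) triple. The matrices below are hand-picked placeholders, not learned weights.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def project(X, W):
    """Multiply each row vector in X by the matrix W."""
    return [
        [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]
        for x in X
    ]

def attention_head(X, W_Q, W_K, W_V):
    """One self-attention head: softmax(q.k / sqrt(d_k)) weighted sum of values."""
    Q, K, V = project(X, W_Q), project(X, W_K), project(X, W_V)
    scale = math.sqrt(len(K[0]))
    out = []
    for q in Q:
        p = softmax([sum(a * b for a, b in zip(q, k)) / scale for k in K])
        out.append([sum(pj * v[d] for pj, v in zip(p, V)) for d in range(len(V[0]))])
    return out

def multi_head(X, heads):
    """Run every head on X and concatenate their outputs position by position."""
    outputs = [attention_head(X, *head) for head in heads]
    return [sum((o[t] for o in outputs), []) for t in range(len(X))]

# Two placeholder heads: identity projections, and one with a swapped query matrix.
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
I = [[1.0, 0.0], [0.0, 1.0]]
swap = [[0.0, 1.0], [1.0, 0.0]]
Z = multi_head(X, [(I, I, I), (swap, I, I)])
```

Each row of `Z` has one block per head. In the Transformer the concatenated vector is additionally multiplied by an output matrix, which this sketch omits.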

Related work • Neural Turing Machines – combine an RNN with an external memory. https://distill.pub/2016/augmented-rnns/

Neural Turing Machines • Use attention to read from and write to every memory location, each to a different extent. • Can combine content-based attention with location-based attention to take advantage of both. https://distill.pub/2016/augmented-rnns/
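
A simplified sketch of the content-based part only. It assumes cosine similarity between a query key and each memory row, a sharpening factor `beta`, and a write that blends a new value into every row in proportion to its attention. The location-based step is left out, and the memory contents are placeholders.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def content_attention(memory, key, beta=1.0):
    """Attention over memory rows by similarity to key; beta sharpens the focus."""
    return softmax([beta * cosine(row, key) for row in memory])

def read(memory, attention):
    """Weighted sum of all memory rows."""
    width = len(memory[0])
    return [sum(a * row[d] for a, row in zip(attention, memory)) for d in range(width)]

def write(memory, attention, value):
    """Blend value into every row: M_i <- a_i * value + (1 - a_i) * M_i."""
    return [
        [a * v + (1.0 - a) * m for v, m in zip(value, row)]
        for a, row in zip(attention, memory)
    ]

# Placeholder memory with three rows, and a query key close to row 0.
memory = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]
attention = content_attention(memory, key=[1.0, 0.1], beta=5.0)
r = read(memory, attention)
new_memory = write(memory, attention, value=[9.0, 9.0])
```

Because reads and writes touch every location with a smooth weight, the whole operation stays differentiable.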

Related work • Adaptive computation time for RNNs • Include a probability distribution over the number of steps to run for a single input. • The final output is a weighted sum of the states from those steps. https://distill.pub/2016/augmented-rnns/
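
One way to picture the weighted sum, as a sketch under stated assumptions: each step emits a halting probability, and steps run until the accumulated probability comes within a small `epsilon` of 1. The last step then receives the remaining probability mass. The function name, states and probabilities below are hypothetical.

```python
def act_output(states, halt_probs, epsilon=0.01):
    """Combine per-step states using halting probabilities as weights.

    Returns (output, weights); weights has one entry per step actually used.
    """
    weights = []
    total = 0.0
    for p in halt_probs:
        if total + p >= 1.0 - epsilon:
            weights.append(1.0 - total)  # last step takes the remainder
            break
        weights.append(p)
        total += p
    else:
        # Ran out of steps before halting: give the remainder to the final step.
        weights[-1] += 1.0 - total
    dim = len(states[0])
    output = [sum(w * s[d] for w, s in zip(weights, states)) for d in range(dim)]
    return output, weights

# Placeholder per-step states and halting probabilities.
states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [5.0, 5.0]]
halt_probs = [0.1, 0.3, 0.7, 0.9]
output, weights = act_output(states, halt_probs)
```

Here the computation halts at the third step, so the fourth state does not contribute to the output.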

Related work • Neural programmer • Determine a sequence of operations to solve some problem. • Use a probability distribution to combine multiple possible sequences. https://distill.pub/2016/augmented-rnns/

Attention: summary • Attention uses a probability distribution so that the network can learn which inputs are relevant and use them for the RNN output. • This can be used in multiple ways to augment RNNs: • Better use of input to encoder • External memory • Program control (adaptive computation) • Neural programming