Balanced BST

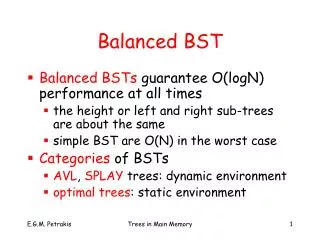


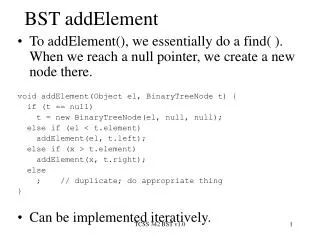


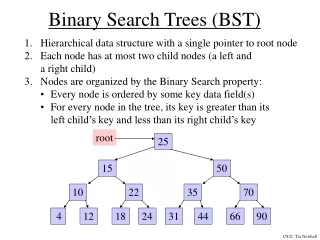

Balanced BST • Balanced BSTs guarantee O(log N) performance at all times • the heights of the left and right sub-trees are about the same • a simple BST is O(N) in the worst case • Categories of BSTs • AVL and splay trees: dynamic environment • optimal trees: static environment Trees in Main Memory

AVL Trees • AVL (Adelson-Velskii and Landis): the heights of the left and right sub-trees differ by at most 1 • the same holds for every sub-tree • Number of comparisons for membership operations • best case: completely balanced tree • worst case: 1.44 log(N+2) • expected case: log N + 0.25

AVL Trees • “=” : completely balanced sub-tree • “/” : the left sub-tree is 1 higher • “\” : the right sub-tree is 1 higher

AVL Trees • [Figure: examples of AVL trees]

Non-AVL Trees • “//” : the left sub-tree is 2 higher • “\\” : the right sub-tree is 2 higher • a node marked “//” or “\\” is a critical node
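The balance markers on the slides above can be computed directly from sub-tree heights. The following is a minimal sketch, not the presenter's code: the `Node` class and the helper names `height`, `marker`, and `is_avl` are assumptions made for illustration, and a balanced node is written "=" as on the rebalancing slides.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    # Hypothetical node type used only for this illustration.
    key: int
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def height(node: Optional[Node]) -> int:
    """Number of nodes on the longest downward path (empty tree: 0)."""
    if node is None:
        return 0
    return 1 + max(height(node.left), height(node.right))


def marker(node: Node) -> str:
    """Return "=", "/", "\\", "//", "\\\\", ... for the given node."""
    diff = height(node.left) - height(node.right)
    if diff == 0:
        return "="
    # One slash per level of height difference, leaning to the higher side.
    return "/" * diff if diff > 0 else "\\" * (-diff)


def is_avl(node: Optional[Node]) -> bool:
    """True if every node's sub-tree heights differ by at most 1."""
    if node is None:
        return True
    balanced_here = abs(height(node.left) - height(node.right)) <= 1
    return balanced_here and is_avl(node.left) and is_avl(node.right)


# A left chain 4 -> 2 -> 1: node 4 is a critical node ("//").
left_chain = Node(4, left=Node(2, left=Node(1)))
# The same keys arranged as a balanced tree.
balanced = Node(2, left=Node(1), right=Node(4))
```

Recomputing heights on every call is quadratic in the worst case; real implementations store the marker or the height in each node and update it on the way back up the search path.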

Single Right Rotations • Insertions or deletions may result in non-AVL trees => apply rotations to rebalance the tree • [Figure: single right rotation on nodes A and B with sub-trees T1, T2, T3]

Single Left Rotations • [Figure: single left rotation on nodes A and B with sub-trees T1, T2, T3]
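The two single rotations can be sketched as pointer updates on a plain binary tree node. This is an illustrative sketch only: the `Node` class, the function names, and the labelling (`a` is the node being rotated, `b` the child that moves up) are assumptions, since the original figures are not available in the transcript.

```python
class Node:
    # Hypothetical node type used only for this illustration.
    def __init__(self, key, left=None, right=None):
        self.key = key
        self.left = left
        self.right = right


def rotate_right(a):
    """Lift a's left child b above a; return the new sub-tree root b."""
    b = a.left
    a.left = b.right   # b's right sub-tree moves under a
    b.right = a
    return b


def rotate_left(a):
    """Mirror image: lift a's right child b above a; return b."""
    b = a.right
    a.right = b.left   # b's left sub-tree moves under a
    b.left = a
    return b


def inorder(node):
    """Keys in sorted order; rotations must never change this sequence."""
    if node is None:
        return []
    return inorder(node.left) + [node.key] + inorder(node.right)


# Left chain 4 -> 2 -> 1: a single right rotation at 4 makes 2 the root.
root = rotate_right(Node(4, left=Node(2, left=Node(1))))

# Right chain 4 -> 8 -> 9: a single left rotation at 4 makes 8 the root.
root2 = rotate_left(Node(4, right=Node(8, right=Node(9))))
```

Both functions return the new root of the rotated sub-tree, so the caller is responsible for re-attaching it to the parent.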

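[Figure: inserting 1 into the tree with root 4 and left child 2 => single right rotation; 2 becomes the root with children 1 and 4 • inserting 9 into the tree with root 4 and right child 8 => single left rotation; 8 becomes the root with children 4 and 9]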

Double Left Rotation • Composition of two single rotations (one right and one left rotation) • [Figure: double left rotation on nodes A, B, C with sub-trees T1, T2, T3, T4]

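Example of Double Left Rotation • insert 6 into the tree with root 4 and children 2 and 8, where 8 has children 7 and 9 • node 4 becomes the critical node (“\\”) • after the double rotation, 7 is the root with children 4 (children 2 and 6) and 8 (right child 9)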

Double Right Rotation • Composition of two single rotations (one left and one right rotation) • [Figure: double right rotation on nodes A, B, C with sub-trees T1, T2, T3, T4]

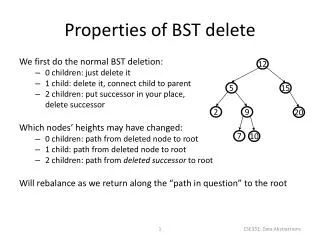

Insertion (deletion) in AVL • Follow the search path to verify that the key is not already there (for a deletion: to locate the key) • Insert (delete) the key • Retreat along the search path and check the balance factor of each node • Rebalance if necessary (see next)

Rebalancing • For every node reached coming up from its left sub-tree after an insertion, readjust the balance factor • ‘=’ becomes ‘/’ => no operation • ‘\’ becomes ‘=’ => no operation • ‘/’ becomes ‘//’ => must be rebalanced!! • The “//” node becomes a “critical node” • Only the path from the critical node to the leaf has to be rebalanced!! • Rotation is applied only at the critical node!

Rebalancing (cont.) • The balance factor of the critical node determines what rotation is to take place • single or double • If the child and the grandchild (inserted node) of the critical node are in the same direction (both “/”) => single rotation • Else => double rotation • Rebalance similarly if coming up from the right sub-tree (opposite signs)
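The insertion and rebalancing steps above can be sketched as a recursive insert, where returning from the recursion plays the role of retreating along the search path. This is a sketch under stated assumptions, not the presenter's algorithm: it stores a height in every node instead of the “/”, “\”, “=” markers, and all names (`Node`, `insert`, `balance`, and so on) are hypothetical.

```python
class Node:
    # Hypothetical node type; height counts nodes, so a leaf has height 1.
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None
        self.height = 1


def h(node):
    return node.height if node else 0


def update(node):
    node.height = 1 + max(h(node.left), h(node.right))


def balance(node):
    # +1 corresponds to "/", -1 to "\", +2 to "//", -2 to "\\".
    return h(node.left) - h(node.right)


def rotate_right(a):
    b = a.left
    a.left, b.right = b.right, a
    update(a)
    update(b)
    return b


def rotate_left(a):
    b = a.right
    a.right, b.left = b.left, a
    update(a)
    update(b)
    return b


def insert(node, key):
    """Insert key below node and return the (possibly new) sub-tree root."""
    if node is None:
        return Node(key)
    if key == node.key:
        return node                      # key already present
    if key < node.key:
        node.left = insert(node.left, key)
    else:
        node.right = insert(node.right, key)
    update(node)                         # readjust on the way back up
    if balance(node) > 1:                # critical node "//"
        if balance(node.left) < 0:       # child leans the other way
            node.left = rotate_left(node.left)   # first half of a double
        return rotate_right(node)
    if balance(node) < -1:               # critical node "\\"
        if balance(node.right) > 0:
            node.right = rotate_right(node.right)
        return rotate_left(node)
    return node


# Build the tree 4 (2, 8 (7, 9)), then insert 6: node 4 becomes critical
# and a double rotation brings 7 to the root.
root = None
for k in (4, 2, 8, 7, 9, 6):
    root = insert(root, k)
```

Storing heights rather than two-bit markers costs a little more space per node but keeps the rebalancing test to a single subtraction.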

Performance • Performance of membership operations on AVL trees: • easy for the worst case! • An AVL tree will never be more than 45% higher than its perfectly balanced counterpart (Adelson-Velskii and Landis): • log(N+1) <= hb(N) <= 1.4404 log(N+2) - 0.302

Worst case AVL • Sparse tree => each sub-tree has minimum number of nodes

Fibonacci Trees • Th: tree of height h • Th has two sub-trees, one with height h-1 and one with height h-2 • else it wouldn’t have minimum number of nodes • T0 is the empty sub-tree (also Fibonacci) • T1 is the sub-tree with 1 node (also Fibonacci)

Fibonacci Trees (cont.) • Worst-case height • Nh: number of nodes of Th • Nh = Nh-1 + Nh-2 + 1 • N0 = 0 (T0 is empty) • N1 = 1 (T1 has one node) • From which h <= 1.44 log(N+1)
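The recurrence for Nh can be checked numerically. The sketch below assumes the base cases N0 = 0 and N1 = 1, which is what the definitions of T0 (empty) and T1 (one node) imply, and it assumes the logarithm in the bound is base 2; the function name `min_nodes` is hypothetical.

```python
import math


def min_nodes(h):
    """Nodes in the Fibonacci tree T_h: N_h = N_(h-1) + N_(h-2) + 1."""
    if h == 0:
        return 0
    n_prev, n_cur = 0, 1                 # N_0, N_1 (assumed base cases)
    for _ in range(h - 1):
        n_prev, n_cur = n_cur, n_cur + n_prev + 1
    return n_cur


# First few sizes: the sparsest AVL tree of height 5 has only 12 nodes.
sizes = [min_nodes(h) for h in range(6)]

# Check the height bound h <= 1.44 * log2(N + 1) for a range of heights.
bound_holds = all(
    h <= 1.44 * math.log2(min_nodes(h) + 1) for h in range(1, 31)
)
```

With these base cases Nh + 1 follows the Fibonacci sequence (1, 2, 3, 5, 8, 13, ...), which is where the constant 1.44 = 1/log2(golden ratio), rounded, comes from.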

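More Examples • insert 9 into the tree with root 7 and right child 8 => single rotation: 8 becomes the root with children 7 and 9

Examples (cont.) • insert 7 into the tree with root 6 and right child 8 => double rotation: 7 becomes the root with children 6 and 8

Examples (cont.) • insert 7 into the tree with root 8 and left child 6 => double rotation: 7 becomes the root with children 6 and 8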

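Examples (cont.) • delete 6 from the tree with root 7 and children 6 and 8, where 8 has right child 9 => single rotation: 8 becomes the root with children 7 and 9

Examples (cont.) • delete 5 from the tree with root 6 and children 5 and 8, where 8 has children 7 and 9 => single rotation: 8 becomes the root with children 6 and 9, and 7 becomes the right child of 6

Examples (cont.) • delete 5 from the tree with root 6 and children 5 and 8, where 8 has left child 7 => double rotation: 7 becomes the root with children 6 and 8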

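General Deletions • the initial tree has root 5 and holds the keys 1 to 11 • delete 4 => node 3 becomes unbalanced; a single rotation makes 2 the root of the left sub-tree, with children 1 and 3 • delete 8 => 8 is replaced by 7, which keeps 6 as its left child and 10 (children 9 and 11) as its right child

General Deletions (cont.) • delete 6 => node 7 becomes unbalanced; a single rotation makes 10 the root of the right sub-tree, with children 7 (right child 9) and 11

General Deletions (cont.) • delete 5 => 5 is replaced by 3; the final tree has root 3 with children 2 (left child 1) and 10 (children 7 and 11, where 7 has right child 9)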

Self Organizing Search • Splay Trees: adapt to query patterns • move to root (front): whenever a node is accessed, move it to the root using rotations • equivalent to move-to-front in arrays • the current node (the node moved to the root) is: • insertions: the inserted node • deletions: the father of the deleted node, or null if the deleted node is the root • membership: the last accessed node

Search(10) • [Figure: move-to-root example on a tree with keys 8, 10, 13, 14, 15, 20, 30; three successive single rotations bring 10 to the root]

Splay Cases • If the current node q has no grandfather but has a father p => only one single rotation • two symmetric cases: L, R • [Figure: single rotation of q over p, with sub-trees a, b, c]

Splay Cases (cont.) • If q also has a grandfather gp => 4 cases: LL, RR, LR, RL • RR is symmetric to LL • LR is symmetric to RL • [Figure: the LL and RL cases on nodes q, p, gp with sub-trees a, b, c, d]
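The splay cases can be sketched as a bottom-up loop over the current node q, its father p, and its grandfather gp. This is an illustrative sketch, assuming nodes with parent pointers; the `Node` class and the helpers `rotate_up`, `splay`, `bst_insert`, and `inorder` are hypothetical names, not part of the slides. In the LL/RR case the father is rotated over the grandfather first, then q over the father, which is the standard splay rule; in the LR/RL case q is rotated up twice.

```python
class Node:
    # Hypothetical node type with a parent pointer.
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None
        self.parent = None


def rotate_up(q):
    """Single rotation that lifts q above its father p."""
    p = q.parent
    gp = p.parent
    if p.left is q:                      # q is a left child: rotate right
        p.left = q.right
        if q.right:
            q.right.parent = p
        q.right = p
    else:                                # q is a right child: rotate left
        p.right = q.left
        if q.left:
            q.left.parent = p
        q.left = p
    p.parent = q
    q.parent = gp
    if gp is not None:                   # re-attach q where p used to hang
        if gp.left is p:
            gp.left = q
        else:
            gp.right = q


def splay(q):
    """Move q to the root using the L/R, LL/RR and LR/RL cases."""
    while q.parent is not None:
        p = q.parent
        gp = p.parent
        if gp is None:
            rotate_up(q)                 # L or R: one single rotation
        elif (gp.left is p) == (p.left is q):
            rotate_up(p)                 # LL or RR: father first,
            rotate_up(q)                 # then the current node
        else:
            rotate_up(q)                 # LR or RL: current node twice
            rotate_up(q)
    return q


def bst_insert(root, key):
    """Plain BST insertion (no splaying); returns (root, new_node)."""
    node = Node(key)
    if root is None:
        return node, node
    cur = root
    while True:
        if key < cur.key:
            if cur.left is None:
                cur.left = node
                break
            cur = cur.left
        else:
            if cur.right is None:
                cur.right = node
                break
            cur = cur.right
    node.parent = cur
    return root, node


def inorder(node):
    if node is None:
        return []
    return inorder(node.left) + [node.key] + inorder(node.right)


# Build the right chain 1 -> 2 -> 3 -> 4 -> 5, then splay the deepest node.
root, last = None, None
for k in (1, 2, 3, 4, 5):
    root, last = bst_insert(root, k)
root = splay(last)
```

Splaying the deepest node of the chain takes two RR steps and leaves 5 at the root with 2 as its left child, roughly halving the depth of the nodes on the access path.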

[Figure: splaying example on a tree with keys 1 to 8 and the current node marked, showing the LL and RR steps followed by the LR and RL steps; a, b, c, … are sub-trees]

Splay Performance • Splay trees adapt to unknown or changing probability distributions • Splay trees do not guarantee logarithmic cost for each access • AVL trees do! • asymptotic cost close to the cost of the optimal BST for unknown probability distributions • It can be shown that the “cost of m operations on an initially empty splay tree, where n are insertions, is O(m log n) in the worst case”

Optimal BST • Static environment: no insertions or deletions • Keys are accessed with various frequencies • Have the most frequently accessed keys near the root • Application: a symbol table in main memory

Searching • Given symbols a1 < a2 < … < an and their probabilities p1, p2, …, pn, minimize the search cost • Successful search: the key is one of the ai • Transform unsuccessful searches to successful ones • consider new symbols E0, E1, …, En for the gaps between the keys • Ei = (ai, ai+1), E0 = (-∞, a1), En = (an, +∞)

Unsuccessful Search • an unsuccessful search for any value in Ei terminates on the same failure node (in fact, one node higher) • [Figure: search path through an, an-1, an-2, an-3 ending at the failure node for Ei]

Example • (a1, a2, a3) = (do, if, read), pi = qi = 1/7 • the balanced tree with root “if” and children “do” and “read” has cost = 13/7: this is the optimal BST • each of the other four BSTs on these keys has cost = 15/7

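Search Cost • If pi is the probability to search for ai and qi is the probability to search in Ei, then $\text{cost} = \sum_{i=1}^{n} p_i \cdot \text{level}(a_i) + \sum_{i=0}^{n} q_i \cdot (\text{level}(E_i) - 1)$ • the first sum is the cost of successful searches, the second the cost of unsuccessful searches • level(x) is the depth of x, with the root at level 1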
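The costs 13/7 and 15/7 in the (do, if, read) example can be reproduced with a short calculation. The sketch below assumes the usual cost definition, which agrees with those numbers: a key at level L (root at level 1) contributes pi times L, and a failure node at level L contributes qi times (L - 1), since the search ends one node higher. The tuple representation of trees and the name `search_cost` are assumptions made for illustration.

```python
from fractions import Fraction


def search_cost(tree, p, q):
    """Expected search cost of a BST.

    tree: (key, left, right) tuples, with None standing for a failure node.
    p:    dict mapping each key to its search probability.
    q:    probabilities of the gaps E0, E1, ..., En in key order.
    """
    gaps = iter(q)       # an in-order walk meets failure nodes as E0, E1, ...

    def walk(node, level):
        if node is None:                       # failure node at this level
            return next(gaps) * (level - 1)
        key, left, right = node
        cost_left = walk(left, level + 1)      # left first: keeps gap order
        cost_here = p[key] * level
        return cost_left + cost_here + walk(right, level + 1)

    return walk(tree, 1)


seventh = Fraction(1, 7)
p = {"do": seventh, "if": seventh, "read": seventh}
q = [seventh] * 4

balanced = ("if", ("do", None, None), ("read", None, None))
chain = ("do", None, ("if", None, ("read", None, None)))
zigzag = ("do", None, ("read", ("if", None, None), None))

balanced_cost = search_cost(balanced, p, q)
chain_cost = search_cost(chain, p, q)
zigzag_cost = search_cost(zigzag, p, q)
```

Exact fractions are used instead of floats so the results can be compared directly with the values on the slide.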

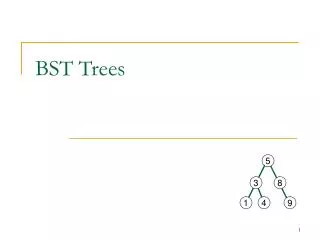

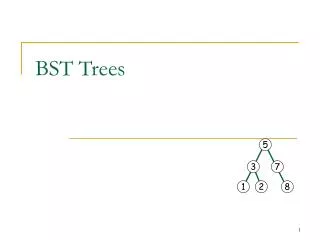

Observation 1 • In a BST, a sub-tree has nodes that are consecutive in a sorted sequence of keys (e.g. [5,26]) • [Figure: BST with root 20 holding the keys 5, 10, 12, 13, 14, 20, 24, 25, 26]

Observation 2 • If Tij is a sub-tree of an optimal BST holding keys from i to j then • Tij must be optimal among all possible BSTs that store the same keys • optimality lemma: all sub-trees of an optimal BST are also optimal

Optimal BST Construction • Constructing all possible trees takes exponential time: n keys can be arranged into C(2n, n)/(n+1) different BSTs!! • Dynamic programming solves the problem in polynomial time O(n^3) • at each step, the algorithm finds and stores the optimal tree for each range of key values • it increases the size of the range at each step until the whole range is covered
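The dynamic programming idea can be sketched for the successful-search-only case. This is a minimal sketch, not the presenter's code: the function name `optimal_bst` and the half-open range convention are assumptions. It relies on the optimality lemma: the cost of the best tree on a range is the range's total probability (every key sinks one level below the new root) plus the best costs of the two sub-ranges, minimized over the choice of root.

```python
def optimal_bst(probs):
    """Return (cost, root) tables for keys 0..n-1 with the given probabilities.

    cost[i][j] is the optimal cost for the keys i, ..., j-1 (half-open range),
    root[i][j] the index of the root chosen for that range.
    """
    n = len(probs)
    cost = [[0.0] * (n + 1) for _ in range(n + 1)]
    root = [[None] * (n + 1) for _ in range(n + 1)]

    # prefix[k] = probs[0] + ... + probs[k-1], for O(1) range weights.
    prefix = [0.0]
    for pr in probs:
        prefix.append(prefix[-1] + pr)

    for size in range(1, n + 1):             # grow the range step by step
        for i in range(0, n - size + 1):
            j = i + size
            weight = prefix[j] - prefix[i]
            # Try every key r in the range as the root.
            best, best_r = min(
                (cost[i][r] + cost[r + 1][j], r) for r in range(i, j)
            )
            cost[i][j] = best + weight
            root[i][j] = best_r
    return cost, root


keys = [1, 10, 20, 40]
probs = [0.3, 0.2, 0.1, 0.4]
cost, root = optimal_bst(probs)

total_cost = cost[0][4]                      # whole range 1-40
best_root_key = keys[root[0][4]]
```

The three nested loops (range size, range start, candidate root) give the O(n^3) running time; ties between candidate roots are broken in favour of the smaller key index here, which is an arbitrary choice.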

Example (successful search only) • keys: 1, 10, 20, 40 • probabilities: 0.3, 0.2, 0.1, 0.4

BSTs with 1 node • key 1: cost = 0.3 • key 10: cost = 0.2 • key 20: cost = 0.1 • key 40: cost = 0.4

BSTs with 2 nodes • range 1-10: root 1: cost = 0.3·1 + 0.2·2 = 0.7 (optimal); root 10: cost = 0.2·1 + 0.3·2 = 0.8 • range 10-20: root 10: cost = 0.2 + 0.1·2 = 0.4 (optimal); root 20: cost = 0.1 + 0.2·2 = 0.5 • range 20-40: root 20: cost = 0.1 + 0.8 = 0.9; root 40: cost = 0.4 + 0.2 = 0.6 (optimal)

BSTs with 3 nodes • range 1-20: root 1: cost = 0.3 + 2·0.2 + 3·0.1 = 1; root 10: cost = 0.2 + 2(0.3+0.1) = 1; root 20: cost = 0.1 + 2·0.3 + 3·0.2 = 1.3; the optimal cost is 1 • range 10-40: root 10: cost = 0.2 + 2·0.4 + 3·0.1 = 1.3; root 20: cost = 0.1 + 2(0.2+0.4) = 1.3; root 40: cost = 0.4 + 2·0.2 + 3·0.1 = 1.1 (optimal)

BSTs with 4 nodes • range 1-40: root 1: cost = 0.3 + 2·0.4 + 3·0.2 + 4·0.1 = 2.1; root 10: cost = 0.2 + 2(0.3+0.4) + 3·0.1 = 1.9 (OPTIMAL BST); root 20: cost = 0.1 + 2(0.3+0.4) + 3·0.2 = 2.1; root 40: cost = 0.4 + 2·0.3 + 3·0.2 + 4·0.1 = 2

Complexity • Compute the optimal BST for every range Cij, i,j = 1, 2, …, n • Let m = j-i: number of keys in range Cij • n-m-1 Cij’s must be computed for each m • For each of them the root with the minimum cost must be found among m candidates; this takes O(m(n-m-1)) time • For all Cij’s, summing over m, it takes O(n^3) time • There is a better O(n^2) algorithm by Knuth • There is also an O(n) greedy algorithm

Optimal BSTs • High probability keys should be near the root • But, the value of a key is also a factor • It may not be desirable to put the smallest or largest key close to the root => this may result in skinny trees (e.g., lists)