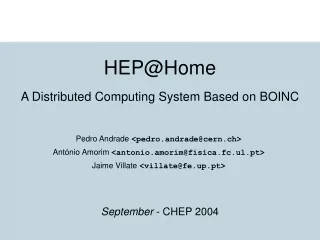

HEP@Home

HEP@Home. A Distributed Computing System Based on BOINC. September - CHEP 2004. Overview. Introduction BOINC HEP@Home ATLAS Use Case Tests and Results Conclusions. Introduction. Project participants: Faculdade de Ciências da Universidade de Lisboa

Presentation Transcript

HEP@Home: A Distributed Computing System Based on BOINC
September - CHEP 2004

Overview
• Introduction
• BOINC
• HEP@Home
• ATLAS Use Case
• Tests and Results
• Conclusions

Introduction
• Project participants:
  • Faculdade de Ciências da Universidade de Lisboa
  • Faculdade de Engenharia da Universidade do Porto
• Evolved from the Grid-Brick system presented at CHEP2003
• Goals:
  • Create a distributed computing system
  • Exploit commodity CPUs and disks and keep them together
  • Use public computing
  • Evaluate its use for dedicated HEP clusters

Overview
• Introduction
• BOINC
  • Description
  • Features
  • Behavior
  • Related Work
• HEP@Home
• ATLAS Use Case
• Tests and Results
• Conclusions

Description
• Stands for Berkeley Open Infrastructure for Network Computing
• Generic software platform for distributed computing
• Developed by the SETI@Home team
• Based on public computing
• Key concepts:
  • Project
  • Application
  • Workunit (Job)
  • Result

Features
• Generic platform: supports many applications / projects
• Projects can be run simultaneously
• Applications written in common programming languages can run as BOINC applications
• Fault tolerance
• Monitored through a Web interface
• Implements security mechanisms

Behavior
The client makes requests; the server is passive.
• Initial communication:
  • Work request
  • Hardware characteristics
• Server decides
• Workunit download:
  • Application
  • Input files
• Results upload
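The pull model on this slide can be sketched in a few lines of Python. This is only an illustration of the request/decide/download/upload cycle, not BOINC's actual protocol or API: the `Workunit`, `Server`, and `client_cycle` names, the `disk_mb` hardware field, and the `MIN_DISK_MB` threshold are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

MIN_DISK_MB = 100  # hypothetical threshold used by the server's decision


@dataclass
class Workunit:
    name: str
    application: str
    input_files: List[str]


@dataclass
class Server:
    """Passive side: it never contacts clients, it only answers requests."""
    queue: List[Workunit] = field(default_factory=list)
    results: Dict[str, str] = field(default_factory=dict)

    def request_work(self, hardware: Dict[str, int]) -> Optional[Workunit]:
        # "Server decides": hand out work only if the reported hardware suffices.
        if not self.queue or hardware.get("disk_mb", 0) < MIN_DISK_MB:
            return None
        return self.queue.pop(0)

    def upload_result(self, workunit_name: str, output: str) -> None:
        self.results[workunit_name] = output


def client_cycle(server: Server, hardware: Dict[str, int]) -> Optional[str]:
    """One client-initiated cycle: request work, 'run' it, upload the result."""
    workunit = server.request_work(hardware)
    if workunit is None:
        return None  # nothing to do; a real client would retry later
    # Stand-in for downloading and running the application on its input files.
    output = f"{workunit.application} processed {len(workunit.input_files)} file(s)"
    server.upload_result(workunit.name, output)
    return workunit.name
```

Every step is triggered by the client, which is why clients behind firewalls or on intermittent connections can still take part.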

Related Work
• Project-specific solutions:
  • SETI@Home
  • Distributed.net
  • Folding@Home
• Commercial solutions
• XtremWeb
• JXGrid

Overview
• Introduction
• BOINC
• HEP@Home
  • Background
  • Additional Features
  • Behavior
• ATLAS Use Case
• Tests and Results
• Conclusions

Background
Grid-Brick project:
• Presented at CHEP2003
• Goal was to merge storage units with computing farms
• Conclusions:
  • No central resource manager
  • Plug and play clients
  • Increase robustness
  • Fault-tolerant system

Additional Features
• Avoid data movement
• User-specific applications
• Environments:
  • Scripts
  • Libraries
• Environment patches
• "get input" apps
• Job dependencies

Behavior
• Initial communication:
  • Work request
  • Hardware characteristics
  • Available input files
• Server decides:
  • Input file exists: ok
  • No input file: wait, run "get input" app
• Workunit download:
  • Application
  • Environment / Patches
• Results upload
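The server-side decision above can be read as a data-locality rule: prefer a workunit whose input file the requesting client already holds, and fall back to the "get input" application only after waiting. The sketch below is one possible reading, not the HEP@Home implementation. The `decide` function, the dictionary layout of a workunit, and the `wait_expired` flag (standing in for whatever waiting policy the real server uses) are assumptions.

```python
from typing import Dict, List, Optional, Set, Tuple


def decide(
    pending: List[Dict[str, str]],
    client_files: Set[str],
    wait_expired: bool = False,
) -> Tuple[str, Optional[Dict[str, str]]]:
    """Choose an action for a client that reported the input files it holds.

    Returns one of:
      ("run", wu)        the client already has the input, so no data moves
      ("wait", wu)       no local input yet; hold the workunit for now
      ("get_input", wu)  waiting is over; the client runs the "get input" app
      ("no_work", None)  nothing is pending
    """
    # Input file exists on the client: ok, assign that workunit directly.
    for workunit in pending:
        if workunit["input_file"] in client_files:
            return "run", workunit
    if not pending:
        return "no_work", None
    # No input file on this client: wait first, then fetch or generate it.
    if not wait_expired:
        return "wait", pending[0]
    return "get_input", pending[0]
```

For example, a client reporting `{"sim.3.root"}` would be handed the workunit that consumes `sim.3.root` even if other workunits are ahead of it in the list, which is how data movement is avoided.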

Overview
• Introduction
• BOINC
• HEP@Home
• ATLAS Use Case
• Tests and Results
• Conclusions

ATLAS Use Case
• How can physicists use HEP@Home to run ATLAS jobs?
• The actors of this use case can be:
  • A physicist doing personal job submission
  • Real production
• Let us suppose that:
  • We have several ATLAS jobs to run
  • We know which files each job will produce and consume, and how to generate or get these files
  • We have computers connected to the Internet

ATLAS Use Case
• Execution steps:
  • Select or submit the ATLAS application
  • Work submission:
    • Environment files (job options files, scripts, etc.)
    • Environment patch
    • Input file template
    • "get input" application
    • Result (output file) template
• As a result, the user gets the produced output files aggregated into a single output file.
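A work submission as listed above is essentially a small record plus two file-name templates. The following sketch shows one way to represent it. The `WorkSubmission` class, the `{seq}` placeholder syntax, and byte-level concatenation as the aggregation step are assumptions made for illustration; the slides do not say how HEP@Home expands templates or merges outputs, and real ATLAS output files would need a format-aware merge.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class WorkSubmission:
    application: str
    environment_files: List[str]      # job options files, scripts, etc.
    environment_patch: Optional[str]  # patch applied on top of the environment
    input_file_template: str          # hypothetical "{seq}" placeholder
    get_input_app: Optional[str]      # run when a client lacks the input file
    result_template: str              # names the output file of each job

    def job_files(self, n_jobs: int) -> List[Tuple[str, str]]:
        """Expand both templates into (input, output) names, one pair per job."""
        return [
            (self.input_file_template.format(seq=i),
             self.result_template.format(seq=i))
            for i in range(n_jobs)
        ]


def aggregate(outputs: List[bytes]) -> bytes:
    """Combine per-job outputs into one; plain concatenation is a placeholder."""
    return b"".join(outputs)
```

With `input_file_template="gen.{seq}.dat"` and `result_template="sim.{seq}.dat"`, calling `job_files(2)` yields `[("gen.0.dat", "sim.0.dat"), ("gen.1.dat", "sim.1.dat")]`.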

Overview
• Introduction
• BOINC
• HEP@Home
• ATLAS Use Case
• Tests and Results
• Conclusions

Tests
• Based on the defined ATLAS Use Case
• Typical ATLAS job sequence using muon events:
  • Generation: e events (1x)
  • Simulation: e/10 events (10x)
  • Digitization: e/10 events (10x)
  • Reconstruction: e/10 events (10x)
• Two groups of tests were defined: e = 100 and e = 1000
• For each group, 4 tests were made:
  • One simple client
  • Two BOINC clients
  • Four BOINC clients
  • Eight BOINC clients
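The job sequence above works out to 1 generation job plus 3 × 10 split jobs, i.e. 31 jobs per test group. The sketch below enumerates them. The `plan_jobs` helper and its dictionary layout are hypothetical, and the dependency chain (each simulation job depends on the single generation job, each later stage on the same sequence number of the previous stage) is an assumption consistent with the "job dependencies" feature, not something the slides spell out.

```python
from typing import Dict, List


def plan_jobs(e: int, splits: int = 10) -> List[Dict]:
    """Enumerate the jobs of one test group for e generated events."""
    per_job = e // splits
    jobs = [{"stage": "generation", "seq": 0, "events": e,
             "first_event": 0, "depends_on": None}]
    # Every sequence initially depends on the single generation job.
    previous = {seq: ("generation", 0) for seq in range(splits)}
    for stage in ("simulation", "digitization", "reconstruction"):
        for seq in range(splits):
            jobs.append({
                "stage": stage,
                "seq": seq,
                "events": per_job,
                "first_event": seq * per_job,  # seq 3 of 1000 starts at event 300
                "depends_on": previous[seq],
            })
            previous[seq] = (stage, seq)
    return jobs
```

For e = 1000, sequence x covers events x00 to x99, which matches the labeling used on the data movement slide.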

Results - Execution Times
• Group A: 100 events
• Group B: 1000 events

Results - Data Movement
• 1000 events on 8 machines:
  • Seqx: events x00 to x99

Overview
• Introduction
• BOINC
• HEP@Home
• ATLAS Use Case
• Tests and Results
• Conclusions

Conclusions
• Several BOINC projects are currently running successfully worldwide
• From HEP@Home tests:
  • Execution of user applications => more flexibility
  • Environments and patches => easier work submission
  • Heavier computation => better results
  • Low data movement => better results
• HEP@Home can be brought into physicists' daily tasks with little effort