A Domain Specific On-Chip Network Design for Large Scale Cache Systems


In the quest for high-performance computing, large caches in systems often lead to increased access times and underutilization of network resources. This paper introduces a single-stage multicast router and a new network topology designed for large caches, employing innovative routing algorithms and a Fast-LRU replacement policy. The objective is to minimize network overhead while maintaining performance standards. By optimizing link usage and reducing buffer needs, the proposed architecture aims to enhance overall efficiency and reduce power consumption in large-scale cache systems.


Presentation Transcript

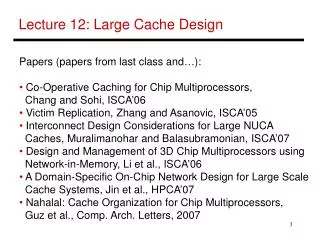

A Domain Specific On-Chip Network Design for Large Scale Cache Systems • Yuho Jin, Eun Jung Kim, Ki Hwan Yum • HPCA 2007

Motivation • Large caches are becoming the norm • Not optimized; larger access times • Overprovisioning and underutilization of network resources (!!) • Can the same performance be achieved with fewer resources?

Contributions • Single-stage multicast router • Not really new! (Not really feasible??) • New network topology for large caches (banks) • Minimizes the number of links in the system • A new routing algorithm • For the new topology • A new replacement policy in NUCA caches • Fast-LRU, exploits multicast • Overall aim: reduce network overhead for minimal performance loss!!

Router Architecture • 4 virtual channels (VCs) per physical channel (PC) • Near single-stage router • Look-ahead routing • Buffer bypass • Speculative switch allocation • Arbitration precomputation • Co-ordinate system (figure label)

Multicast Support • Multicast: synchronous vs asynchronous • Asynchronous multicast => flit replication => buffer space • Do it without extra hardware? • Use existing VC buffers • Copy the flit to a different PC buffer • Use less-used PCs • Get a free VC • Send the flit to different destinations • Figure courtesy: Chita R Das, OCIN '06
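The buffer-reuse idea on this slide can be sketched in a few lines. This is only an illustration under assumptions, not the router's actual logic: the names `PhysicalChannel` and `place_replica` are hypothetical, "less-used" is taken to mean "fewest occupied VCs", and ties are broken by list order.

```python
from dataclasses import dataclass, field


@dataclass
class PhysicalChannel:
    """Hypothetical model of one input port (PC) and its VC buffers."""
    name: str
    num_vcs: int = 4                          # 4 VCs per PC, as on the router slide
    busy: set = field(default_factory=set)    # indices of VCs that hold flits

    def free_vc(self):
        """Return the lowest-numbered free VC index, or None if all are busy."""
        for vc in range(self.num_vcs):
            if vc not in self.busy:
                return vc
        return None


def place_replica(channels, exclude):
    """Find a buffer for a replicated flit without adding hardware.

    Picks the least-used PC (other than `exclude`, the PC the flit arrived
    on) that still has a free VC, and marks that VC as occupied.
    Returns (pc_name, vc_index), or None if no spare buffer exists.
    """
    candidates = [
        pc for pc in channels
        if pc.name != exclude and pc.free_vc() is not None
    ]
    if not candidates:
        return None  # assumption: the replica simply waits in this case
    target = min(candidates, key=lambda pc: len(pc.busy))
    vc = target.free_vc()
    target.busy.add(vc)
    return target.name, vc
```

A flit arriving on one port would call `place_replica` once per extra destination; each replica then competes for the switch like any other buffered flit.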

Fast-LRU Replacement • Bank set arrangement: sets distributed among banks • [Figure: one column of banks, entries labelled by set and way: S0 W1, S1 W0, S0 W2, S0 W3, S0 W4]

Network Topology: Access Patterns • A: Data request • B: Check for data • B/C: Move data • D/E: Data to core • A'/B': Hit or miss • F: Data delivery from memory to MRU • G: Dirty block to memory
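The data movements listed above (move data on a hit, deliver from memory to MRU on a miss, write a dirty block back) match ordinary LRU ordering within one bank set. The sketch below models only that ordering, assuming one way per bank with index 0 as the MRU bank; it does not model the timing or the multicast that Fast-LRU relies on, and the function name `access` is hypothetical.

```python
def access(bank_set, tag, dirty_tags=frozenset()):
    """Apply one access to a full bank set kept in LRU order.

    bank_set   : list of tags, index 0 = MRU bank, last index = LRU bank
                 (assumed layout, one way per bank)
    tag        : tag of the requested block
    dirty_tags : tags whose blocks are dirty

    Returns (new_bank_set, hit, writeback_tag).
    """
    ways = list(bank_set)
    if tag in ways:
        # Hit: the block moves to the MRU bank, and every block that was
        # closer to MRU shifts one bank toward LRU.
        ways.remove(tag)
        ways.insert(0, tag)
        return ways, True, None
    # Miss: memory delivers the block to the MRU bank, all blocks shift by
    # one, and the LRU block leaves the set (written back only if dirty).
    victim = ways.pop()
    ways.insert(0, tag)
    writeback = victim if victim in dirty_tags else None
    return ways, False, writeback
```

For example, `access(["a", "b", "c", "d"], "c")` promotes `c` to the MRU bank and pushes `a` and `b` one bank down, which is the traffic the "Move data" arrows represent in this simplified model.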

Network Topology => Horizontal links mostly not required • Except here!! (marked in the slide figure)

Implications • Underutilized links removed => simplified network, area savings(?) • Power savings come for free!! (fewer buffers) • Links removed => constrained routing • XYX routing proposed • XY is dimension-order routing • Simplified algorithm: • If going from core/memory to a cache bank, travel horizontally first • If going from a cache bank to core/memory, travel vertically first

Example • Yoff = -ve, Xoff = +ve => Channel = Y-

Example • Yoff = +ve, Xoff = +ve => Channel = X+, then Y+
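The simplified routing rule and the two examples can be written out as a small sketch. It encodes only the two-case rule from the slide and does not check which rows actually keep their horizontal links. The function names, the `from_bank` flag, and the convention that an offset is destination minus current position are assumptions made for illustration.

```python
def next_channel(x_off, y_off, from_bank):
    """Choose the output channel for the next hop.

    x_off, y_off : remaining offset to the destination (assumed to be
                   destination minus current position)
    from_bank    : True if a cache bank injected the packet (headed to
                   core/memory), False if core/memory injected it
    """
    if x_off == 0 and y_off == 0:
        return "EJECT"  # already at the destination
    x_ch = "X+" if x_off > 0 else "X-"
    y_ch = "Y+" if y_off > 0 else "Y-"
    if from_bank:
        # Bank -> core/memory: travel vertically first.
        return y_ch if y_off != 0 else x_ch
    # Core/memory -> bank: travel horizontally first.
    return x_ch if x_off != 0 else y_ch


def route(x_off, y_off, from_bank):
    """List the channels taken hop by hop until the offset reaches zero."""
    step = {"X+": (1, 0), "X-": (-1, 0), "Y+": (0, 1), "Y-": (0, -1)}
    hops = []
    while (x_off, y_off) != (0, 0):
        ch = next_channel(x_off, y_off, from_bank)
        dx, dy = step[ch]
        x_off, y_off = x_off - dx, y_off - dy
        hops.append(ch)
    return hops
```

With these conventions, `next_channel(2, -1, from_bank=True)` selects `Y-` as in the first example, and `route(1, 2, from_bank=False)` takes `X+` before any `Y+` hop as in the second.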

Results • Average access latency • The authors target network latency, which accounts for 50-60% of total latency