Implementation of a Network Processor

The paper discusses innovative designs for a network processor aimed at improving data transfer speeds in high-performance multicore computing environments. By exploring direct connections between GPU chips and custom network processors, we aim to eliminate current bottlenecks and enhance latency, which currently stands at 50 microseconds. The proposed architecture outlines the integration of optical links and flexible communication protocols to achieve a target latency significantly lower than existing setups. This research is crucial for optimizing computational efficiency in applications reliant on heavy data processing.

Implementation of a Network Processor

E N D

Presentation Transcript

Implementationof aNetwork Processor G.Grosdidier, P.Matricon, K.Petrov LAL/IN2P3/CNRS & SPhN/IRFU/CEA (PetaQCDproject, 19/10/2011) Confidential

Block Design Multicore Processors Mem. Bus PCI Bus RAM GPU GPU Infiniband

DRAM Memory Bus Multicore Processors PCI Bus G D D R G D D R G D D R GPU GPU GPU Network

Required Multicore Processors (Intel, AMD ?) Mem. Bus 8GB/s r/w mem bus 8GB/sec both ways 6GB++/socket RAM RAM PCI Bus 6GB++/card RAM GPU Nvidia2090 ? GPU GPU 8x 2GB/sec both ways Infiniband ??

Current Multi-core Processors (Intel, AMD ?) ~10GB/s r/w Mem. Bus 8GB/s r/w mem bus 8GB/sec both ways 6GB++/socket RAM RAM PCI Bus ~5 GB/sec both ways 6GB++/card GDDR GPU Nvidia2090 ? GPU GPU 8x 2GB/sec both ways Nothing 0GB/sec

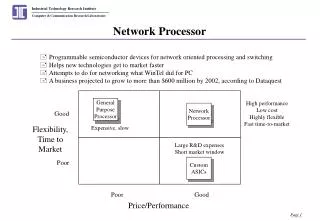

Current GPU-Direct • The performances have been measured in INRIA-Bordeaux • This is obviously preliminary • They got 50 μsec latency ‘one-way’ • This is by far too large for our requirements • What else ? • Lets assume we want these transfer durations to be negligible compared to main computation times, ie at least 10x faster

Next step ? • What can be done to enhance current setup ? • There seems to be a link already between the GPU chip and the HCA one • Green arrow below • Why not to use a straight route thru this link towards the other GPU ? • Red path

In the end • Replace the Infiniband HCA with Custom Network Processors • Better suited to our 8 NTN specific connectivity toplogy • Get rid of any network switches • Does not avoid transit through PCIe though (latencies …) • Increases the PCIe bottleneck on GPU exit Custom Network Processors

Inserting a Network Processor SystemRAM SystemRAM Memory Bus Memory Bus Multicore Processors CPU CPU QPI QPI QPI QPI Chipset Chipset Chipset Chipset Infiniband Infiniband IB HCA IB HCA PCIe x16 PCIe x16 GPU GPU GPU GPU Custom Protocol Network Processor Network Processor Network Processor Network Processor 8 Nearest Topological Neighbours 8 N.T.N. 8 N.T.N. 8 N.T.N.

Simplest case : 1 socket and 1 Chipset DRAM CPU QDR/ EDR/ FDR HCA/IB DMI Chipset 8 N.T.N. (optical links) PCIe Controller NP1 FPGA 16x 8X 8X NP2 FPGA GPU GDDR

Data routes DRAM CPU QDR/ EDR/ FDR HCA/IB DMI Chipset 8 N.T.N. (optical links) PCIe Controller NP1 FPGA 16x 8X 8X NP2 FPGA GPU GDDR

3 cards DRAM CPU QDR/ EDR/ FDR HCA/IB DMI Chipset 8 N.T.N. (optical links) PCIe Controller NP1 FPGA 16x 8X 8X NP2 FPGA GPU GDDR

Sandy Bridge case(?) DRAM Infiniband QDR/EDR/FDR CPU (multicore socket) DMI HCA/IB Chipset 4X 8 N.T.N. (optical links) PCIe Controller NP1 FPGA 16x 8X 8X NP2 FPGA GPU GDDR

Infiniband QDR/EDR/FDR NP1 8 N.T.N. (optical links) HCA/IB DRAM CPU (multicore socket) 4X DMI Chipset UC1 NP2 PCIe Controller Network Processor NP0 (FPGA) 8 N.T.N. (optical links) 8 N.T.N. (optical links) 16x 8X 8X 8x 8x 8x GPU GDDR 8x 8x 8x UC2 UC0 NP3 8 N.T.N. (optical links) UC3

8X 8X 8X 8X 8x 8x