A Recognition Model for Speech Coding

Wendy Holmes, 20/20 Speech Limited, UK (a DERA/NXT Joint Venture)

Introduction
• Speech coding at low data rates (a few hundred bits/s) requires a compact, low-dimensional representation => code variable-length speech “segments”.
• Automatic speech recognition is potentially a powerful way to identify useful segments for coding.
• BUT: HMM-based coding has limitations:
  • shortcomings of HMMs as production models;
  • typical recognition feature sets (e.g. cepstral coefficients) impose limits on coded speech quality;
  • it is difficult to retain speaker characteristics (at least for speaker-independent recognition).

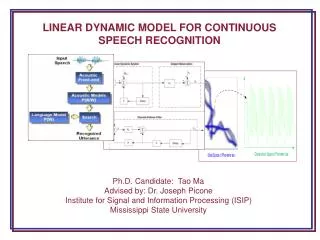

A simple coding scheme
• Demonstrate the principles of coding using the same model for both recognition and synthesis.
• The model represents linear formant trajectories.
• Recognition: linear-trajectory segmental HMMs of formant features.
• Synthesis: JSRU parallel-formant synthesizer.
• Coding is applied to the analysed formant trajectories => relatively high bit rate (typically 600-800 bits/s).
• Recognition is used mainly to identify segment boundaries, but also to guide the coding of the trajectories.
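Since the recognizer's main job here is to place segment boundaries, the Python sketch below shows one way a segmental decoder can do that with dynamic programming. It is a simplification under stated assumptions, not the recognizer described in these slides: a single feature track, a fixed left-to-right sequence of segments, hypothetical minimum and maximum durations, and a segment cost equal to the squared error of a least-squares straight-line fit, standing in for the linear-trajectory segmental HMM probabilities actually used.

```python
import numpy as np

def line_fit_cost(y):
    """Squared error of the least-squares straight line through y.

    Assumption: used here as a stand-in for a segmental HMM's
    negative log-probability of the segment.
    """
    if len(y) < 2:
        return 0.0
    t = np.arange(len(y), dtype=float)
    slope, intercept = np.polyfit(t, y, 1)
    return float(np.sum((y - (slope * t + intercept)) ** 2))

def find_boundaries(y, n_segments, min_dur, max_dur):
    """Split y into n_segments contiguous segments, each lasting between
    min_dur and max_dur frames (hypothetical limits), minimising the
    total line-fit cost. Returns ([(start, end), ...], total_cost),
    with end exclusive.
    """
    y = np.asarray(y, dtype=float)
    n = len(y)
    inf = float("inf")
    # best[k][t]: lowest cost of covering frames [0, t) with k segments
    best = [[inf] * (n + 1) for _ in range(n_segments + 1)]
    back = [[-1] * (n + 1) for _ in range(n_segments + 1)]
    best[0][0] = 0.0
    for k in range(1, n_segments + 1):
        for t in range(1, n + 1):
            # Try every allowed duration d for the segment ending at frame t.
            for d in range(min_dur, min(max_dur, t) + 1):
                s = t - d
                if best[k - 1][s] == inf:
                    continue
                cost = best[k - 1][s] + line_fit_cost(y[s:t])
                if cost < best[k][t]:
                    best[k][t] = cost
                    back[k][t] = s
    if best[n_segments][n] == inf:
        raise ValueError("no segmentation satisfies the duration limits")
    # Trace the boundaries back from the final frame.
    segments, t = [], n
    for k in range(n_segments, 0, -1):
        s = back[k][t]
        segments.append((s, t))
        t = s
    return segments[::-1], best[n_segments][n]

# Hypothetical formant track (Hz): rise, fall, then a gentler rise.
track = np.concatenate([np.linspace(500, 700, 10),
                        np.linspace(700, 300, 8),
                        np.linspace(350, 450, 6)])
segments, cost = find_boundaries(track, n_segments=3, min_dur=3, max_dur=12)
print(segments, cost)  # boundaries at frames 10 and 18, cost close to 0
```

The duration limits keep the search small and rule out implausibly short or long segments; a real segmental recognizer would also search over phone sequences rather than a fixed number of segments.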

Formant analyser (EUROSPEECH’97)
• Each formant frequency estimate is assigned a value representing confidence in its measurement accuracy. When formants are indistinct, confidence is low.
• In cases of ambiguity, the analyser offers two alternative sets of formant trajectories, to be resolved in the recognition process.
[Figure: example formant analysis of “four seven”]

Linear formant trajectory recognition
• Feature set: formant frequencies plus mel-cepstrum coefficients and an overall energy feature.
• Confidences are represented as variances: low confidence => large variance. The confidence variance is added to the model variance, so low-confidence features have little influence.
• Formant alternatives: choose the one giving the highest probability for each possible data segment and model state.
• The number of segments depends on phone identity: e.g. 1 segment for fricatives, 3 for voiceless stops.
• Range of durations: each segment has its own minimum and maximum duration.
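The handling of confidences and formant alternatives described above can be sketched for a single formant track as follows. This minimal Python illustration is not the recognizer itself: it assumes one Gaussian feature per frame and a segment model given by a hypothetical start value, slope and variance, and it ignores the cepstral and energy features. Only the rule "add the confidence variance to the model variance" is taken from the slide.

```python
import math

def segment_log_likelihood(obs, conf_var, start, slope, model_var):
    """Log-likelihood of one formant track over a segment under a linear trajectory.

    obs[i]       observed formant frequency at frame i of the segment (Hz)
    conf_var[i]  analyser confidence expressed as a variance (large = unreliable)
    start, slope, model_var  hypothetical parameters of one segment model
    """
    total = 0.0
    for i, (x, cv) in enumerate(zip(obs, conf_var)):
        mean = start + slope * i
        var = model_var + cv  # low confidence -> larger variance -> less influence
        total += -0.5 * (math.log(2.0 * math.pi * var) + (x - mean) ** 2 / var)
    return total

def pick_alternative(alternatives, start, slope, model_var):
    """Return (index, log-likelihood) of the formant alternative that scores
    best for this data segment and model state.

    alternatives: list of (obs, conf_var) pairs offered by the analyser.
    """
    scores = [segment_log_likelihood(o, c, start, slope, model_var)
              for o, c in alternatives]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores[best]

# Hypothetical example: the second alternative has jumped to a different formant.
alt_a = ([1200, 1222, 1238, 1261], [100, 100, 100, 100])
alt_b = ([1200, 1800, 1810, 1790], [100, 100, 100, 100])
print(pick_alternative([alt_a, alt_b], start=1200, slope=20, model_var=2500))  # index 0 wins
```

Because the confidence variance sits in the denominator of the squared-error term, a badly measured frame with a very large confidence variance contributes almost nothing to the comparison between models.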

Frame-by-frame synthesizer controls
• Values for each of the 10 synthesizer control parameters are obtained at 10 ms intervals:
  • voicing and fundamental frequency from an excitation analysis program;
  • 3 formant frequency controls from the formant analyser;
  • 5 formant amplitude controls from an FFT-based method.
• With 6 bits assigned to each of the 10 controls, the baseline data rate is 6000 bits/s.
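The baseline rate is the product of the number of controls, the bits per control, and the frame rate implied by the 10 ms interval:

```latex
R = 10\ \text{controls} \times 6\ \tfrac{\text{bits}}{\text{control}} \times \frac{1\ \text{frame}}{10\ \text{ms}}
  = 10 \times 6 \times 100\ \text{bits/s} = 6000\ \text{bits/s}
```

By the same arithmetic, the 600-800 bits/s of the segment-coded versions is between 7.5 and 10 times lower than this baseline.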

Segment coding
• Segments identified by the recognizer are coded using straight-line fits to the observed formant parameters.
• The fit uses a least-mean-square-error criterion. For formant frequencies, each frame's error is weighted according to its confidence variance (low variance, high weight), so the more reliable frames have more influence.
• To code a segment, represent the value at the start, and the difference between the end value and the start value.
• Force continuity across segment boundaries where smooth changes are required for naturalness (e.g. semivowel-vowel boundaries).
• Where there are formant alternatives, use those selected by the recognizer.
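A minimal Python sketch of coding one formant track over one segment follows. It assumes per-frame weights equal to the reciprocal of the confidence variance; the slides say only that the frame error is weighted by confidence variance so that reliable frames count more. The optional `fixed_start` argument, a hypothetical name, shows one way to force continuity: the start value is pinned to the previous segment's end value and only the slope is fitted.

```python
import numpy as np

def code_segment(values, conf_var, fixed_start=None):
    """Fit a straight line to one formant track over a segment; return (start, delta).

    Weighted least squares with weight = 1 / conf_var (assumption), so frames
    the analyser measured confidently pull the line harder. If fixed_start is
    given (hypothetical continuity option), only the slope is fitted.
    """
    y = np.asarray(values, dtype=float)
    w = 1.0 / np.asarray(conf_var, dtype=float)
    t = np.arange(len(y), dtype=float)
    if fixed_start is None:
        # Solve the 2x2 weighted normal equations (A^T W A) p = A^T W y
        # for intercept a and slope b.
        A = np.stack([np.ones_like(t), t], axis=1)
        AtW = A.T * w
        a, b = np.linalg.solve(AtW @ A, AtW @ y)
    else:
        a = float(fixed_start)
        # Minimise sum w * (y - a - b*t)^2 over the slope b only.
        denom = np.sum(w * t * t)
        b = np.sum(w * t * (y - a)) / denom if denom > 0 else 0.0
    end = a + b * (len(y) - 1)
    # Code the segment as its start value and the end-minus-start difference.
    return float(a), float(end - a)

# Hypothetical segment: frame 3 is a low-confidence mis-measurement.
values = [1000, 1010, 1020, 1500, 1040]
print(code_segment(values, [100, 100, 100, 1e6, 100]))  # close to (1000, 40)
print(code_segment(values, [100] * 5))                  # about (1000, 228): outlier drags the fit
```

The second call shows why the weighting matters: with equal weights, a single misanalysed frame changes the coded end-start difference from about 40 Hz to about 228 Hz.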

Coding experiments
• Tested on 2 tasks: speaker-independent connected-digit recognition, and speaker-dependent recognition of airborne reconnaissance reports (500-word vocabulary).
• Frame-by-frame analysis-synthesis (at 6000 bits/s) generally produced a close copy of the original speech.
• Segment-coded versions preserved the main characteristics.
• There were some instances of formant analysis errors.
• In some cases, using the recognizer to select between alternative formant trajectories improved segment coding quality.
• In general, coding still works well even when there are recognition errors, as the main requirement is to identify suitable segments for linear trajectory coding.

Speech coding results
Audio examples, natural and coded at about 600 bits/s:
• Speaker 1: digits
• Speaker 2: digits
• Speaker 3: digits
• Speaker 1: ARM report

Achievements of study
• Established the principle of using a formant trajectory model for both recognition and synthesis, including using information from recognition to assist in coding.

Future work
• Better-quality coding should be possible by further integrating formant analysis, recognition and synthesis within a common framework.