A Better TOMORROW


This presentation describes FRANTIC, an approach to network tomography that builds on the "SHO-FA" compressive sensing algorithm. It covers node and edge delay estimation from few measurements, path delay bounds for several graph structures, and background on robust compressive sensing and its applications.


Presentation Transcript

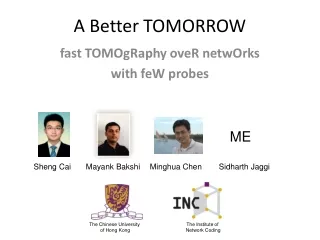

A Better TOMORROW ME
fast TOMOgRaphy oveR netwOrks with feW probes
Sheng Cai, Mayank Bakshi, Minghua Chen, Sidharth Jaggi
The Chinese University of Hong Kong, The Institute of Network Coding

FRANTIC ME
Fast Reference-based Algorithm for Network Tomography vIa Compressive Sensing
Sheng Cai, Mayank Bakshi, Minghua Chen, Sidharth Jaggi
The Chinese University of Hong Kong, The Institute of Network Coding

Computerized Axial Tomography (CAT scan)

Tomography: y = Tx. Estimate x given y and T.

Network Tomography
• Transform T: network connectivity matrix (known a priori)
• Measurements y: end-to-end packet delays
• Infer x: link congestion (hopefully “k-sparse”; compressive sensing?)
• Challenge: matrix T is “fixed”
• Idea: “mimic” a random matrix
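The relation y = Tx from the slide above can be illustrated with a minimal sketch. The link names, probe paths, and delay values here are hypothetical and chosen only for illustration; the slides do not specify a topology.

```python
# Hypothetical 4-link network; names and delays are illustrative assumptions.
links = ["a-b", "b-c", "c-d", "b-d"]
x = [0.0, 5.0, 0.0, 0.0]  # per-link delay; only one congested link (1-sparse)

# Each probe path is the list of links an end-to-end packet traverses.
paths = [
    ["a-b", "b-c"],
    ["a-b", "b-d"],
    ["a-b", "b-c", "c-d"],
]

def routing_matrix(paths, links):
    """Build the 0/1 matrix T: T[i][j] = 1 if path i uses link j."""
    index = {link: j for j, link in enumerate(links)}
    T = [[0] * len(links) for _ in paths]
    for i, path in enumerate(paths):
        for link in path:
            T[i][index[link]] = 1
    return T

def measure(T, x):
    """End-to-end delays y = Tx (sum of link delays along each path)."""
    return [sum(t * xj for t, xj in zip(row, x)) for row in T]

T = routing_matrix(paths, links)
y = measure(T, x)
```

Because T is dictated by which paths the network allows, its rows cannot be chosen freely, which is the "fixed matrix" challenge named on the slide.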

1. Better CS [BJCC12] “SHO-FA” O(k) measurements, O(k) time

1. Better CS [BJCC12] “SHO-FA” Need “sparse & random” matrix T

SHO-FA (figure: matrix A from a bipartite graph with n left nodes, ck right nodes, left degree d=3)

Idea 2: “Loopy” measurements
• Fewer measurements
• Arbitrary packet injection/reception
• Not just 0/1 matrices (SHO-FA)

SHO-FA + Cancellations + Loopy measurements
• n = |V| or |E|
• M = “loopiness”
• k = sparsity
• Measurements: O(k log(n)/log(M))
• Decoding time: O(k log(n)/log(M))
• General graphs, node/edge delay estimation
• Path delay: O(DnM/k)
• Path delay: O(D’M/k) (Steiner trees)
• Path delay: O(D’’M/k) (“Average” Steiner trees)
• Path delay: ??? (Graph decompositions)

2. (Most) x-expansion (figure: a set S of |S| left nodes with ≥2|S| neighbors)

(figure: m measurements of an unknown length-n vector, m < n)

Compressive sensing (figure: m measurements of an unknown k-sparse length-n vector, k ≤ m < n)

Robust compressive sensing: y = A(x+z) + e, where z models approximate sparsity and e is measurement noise
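A minimal sketch of the measurement model y = A(x+z) + e follows. The dimensions, noise levels, and the random 0/1 matrix A are assumptions made for illustration; they are not the construction used in the talk.

```python
import random

def sparse_signal(n, k, rng):
    """Length-n vector with exactly k nonzero entries (the k-sparse part x)."""
    x = [0.0] * n
    for j in rng.sample(range(n), k):
        x[j] = rng.uniform(1.0, 2.0)
    return x

def measure(A, x, z, e):
    """Noisy measurements y = A(x + z) + e."""
    return [
        sum(a * (xj + zj) for a, xj, zj in zip(row, x, z)) + ei
        for row, ei in zip(A, e)
    ]

rng = random.Random(0)
n, k, m = 20, 3, 8                           # assumed sizes with k <= m < n
x = sparse_signal(n, k, rng)
z = [rng.gauss(0, 0.01) for _ in range(n)]   # small "tail": approximate sparsity
e = [rng.gauss(0, 0.01) for _ in range(m)]   # measurement noise
A = [[rng.choice([0, 1]) for _ in range(n)] for _ in range(m)]  # illustrative only
y = measure(A, x, z, e)
```

A decoder sees only y and A, and aims to recover x despite both the tail z and the noise e.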

Apps: 1. Compression
W(x+z), x+z, BW(x+z) = A(x+z)
M.A. Davenport, M.F. Duarte, Y.C. Eldar, and G. Kutyniok, "Introduction to Compressed Sensing," in Compressed Sensing: Theory and Applications, Cambridge University Press, 2012.

Apps: 2. Network tomography
W. Xu, E. Mallada, and A. Tang, "Compressive sensing over graphs," INFOCOM, 2011.
M. Cheraghchi, A. Karbasi, S. Mohajer, and V. Saligrama, "Graph-Constrained Group Testing," IEEE Transactions on Information Theory 58(1): 248-262, 2012.

Apps: 3. Fast(er) Fourier Transform
H. Hassanieh, P. Indyk, D. Katabi, and E. Price, "Nearly optimal sparse Fourier transform," in Proceedings of the 44th Symposium on Theory of Computing (STOC '12), ACM, New York, NY, USA, 563-578.

Apps: 4. One-pixel camera http://dsp.rice.edu/sites/dsp.rice.edu/files/cs/cscam.gif

y=A(x+z)+e (Information-theoretically) order-optimal

(Information-theoretically) order-optimal • Support Recovery

1. Graph-Matrix A (figure: bipartite graph with n left nodes, ck right nodes, left degree d=3)
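One way to picture such a graph-matrix is the sketch below: a 0/1 matrix with n columns and ck rows in which every column has exactly d = 3 ones at random rows. The constant c = 2, the sizes, and the function name `graph_matrix` are assumptions for illustration; the actual SHO-FA matrix also carries nonzero weights that this sketch omits.

```python
import random

def graph_matrix(n, k, c=2, d=3, seed=0):
    """Biadjacency matrix of a random bipartite graph.

    Columns are the n left nodes, rows are the c*k right nodes, and each
    left node is joined to d distinct right nodes chosen uniformly at random.
    """
    rng = random.Random(seed)
    m = c * k
    A = [[0] * n for _ in range(m)]
    for j in range(n):
        for i in rng.sample(range(m), d):
            A[i][j] = 1
    return A

A = graph_matrix(n=12, k=4)
```

The matrix is sparse (d ones per column regardless of n) and random, matching the "sparse & random" requirement stated earlier in the deck.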

2. (Most) x-expansion (figure: a set S of |S| left nodes with ≥2|S| neighbors)
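The expansion condition can be checked directly on a small example. The graph below is hypothetical and hand-picked so that one set expands and another does not, which is one reading of why the slide says "(Most)".

```python
def neighbors(graph, S):
    """Union of the right-node neighborhoods of the left nodes in S."""
    out = set()
    for j in S:
        out |= graph[j]
    return out

def expands(graph, S):
    """True if S has at least 2|S| distinct neighbors."""
    return len(neighbors(graph, S)) >= 2 * len(S)

# Hypothetical bipartite graph: left node -> set of d = 3 right nodes.
graph = {
    0: {0, 1, 2},
    1: {2, 3, 4},
    2: {0, 1, 2},
}

good = expands(graph, {0, 1})  # neighbors {0,1,2,3,4}: 5 >= 4
bad = expands(graph, {0, 2})   # neighbors {0,1,2}: 3 < 4
```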

3. “Many” leaves
• L+L’ ≥ 2|S|
• 3|S| ≥ L+2L’
• L ≥ |S|
• L+L’ ≤ 3|S|
• L/(L+L’) ≥ 1/2
• L/(L+L’) ≥ 1/3
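The inequalities on this slide can be chained as follows. This is a sketch under an assumed reading of the symbols, which the transcript does not define: L is the number of neighbors of S that receive exactly one edge from S (leaves), L' is the number that receive two or more, and every node in S has degree d = 3.

```latex
\begin{align*}
3|S| &\ge L + 2L' && \text{(count the $3|S|$ edges leaving $S$)} \\
L + L' &\ge 2|S| && \text{(expansion)} \\
L &= 2(L + L') - (L + 2L') \ge 4|S| - 3|S| = |S| \\
L + L' &\le L + 2L' \le 3|S| \\
\frac{L}{L + L'} &\ge \frac{|S|}{3|S|} = \frac{1}{3} \\
L' &= (L + 2L') - (L + L') \le 3|S| - 2|S| = |S| \le L
\;\Longrightarrow\; \frac{L}{L + L'} \ge \frac{1}{2}
\end{align*}
```

Under these assumptions both ratios on the slide hold, with 1/2 being the tighter of the two.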

Encoding – Recap. 0 1 0 1 0