Extended Introduction to Computer Science in Scheme

Recitation 3


Outline • Let • High order procedures

Orders of Growth • We say that $R(n) \in O(f(n))$ if there is a constant $c > 0$ such that for all $n \ge 1$: $R(n) \le c\,f(n)$ • We say that $R(n) \in \Theta(f(n))$ if there are constants $c_1, c_2 > 0$ such that for all $n \ge 1$: $c_1 f(n) \le R(n) \le c_2 f(n)$ • We say that $R(n) \in \Omega(f(n))$ if there is a constant $c > 0$ such that for all $n \ge 1$: $c\,f(n) \le R(n)$
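A small worked instance of the $\Theta$ definition, using an example function of our own choosing ($3n^2 + 5n$, not from the slides), with witness constants $c_1 = 3$ and $c_2 = 8$:

```latex
\text{For all } n \ge 1:\quad
3n^2 \;\le\; 3n^2 + 5n \;\le\; 3n^2 + 5n^2 \;=\; 8n^2
\quad\Longrightarrow\quad 3n^2 + 5n \in \Theta(n^2)
```

The middle inequality uses $n \le n^2$, which holds for every $n \ge 1$.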

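Asymptotic version • Suppose $f(n) < g(n)$ for all $n \ge N$, where $f$ and $g$ are positive • Then there is always a constant $c$ such that $f(n) < c\,g(n)$ for all $n \ge 1$ • Take $c = \max\left\{1, \frac{\max\{f(n) : n < N\} + 1}{\min\{g(n) : n < N\}}\right\}$: for $n < N$ we get $c\,g(n) \ge \max\{f(n) : n < N\} + 1 > f(n)$, and for $n \ge N$ we get $c\,g(n) \ge g(n) > f(n)$ (this second case is why $c$ must be at least 1)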
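The constant construction can be spot-checked numerically. This is a sketch of our own, not from the slides: the helper names `fold-prefix`, `witness-constant` and `bound-holds?` and the example functions are hypothetical, the parameter `n0` plays the role of N, and it assumes f and g return positive numbers and `n0` ≥ 2. It clamps the constant to at least 1 so that the tail n ≥ N is covered too. Checking n = 1 .. limit is only a finite sanity check, not a proof.

```scheme
;; Hypothetical helpers (not from the slides).
;; Assumes f, g return positive numbers and n0 >= 2 (n0 plays the role of N).

;; Fold a binary operation op over h(1), h(2), ..., h(n0 - 1).
(define (fold-prefix op h n0)
  (let loop ((n 2) (acc (h 1)))
    (if (>= n n0)
        acc
        (loop (+ n 1) (op acc (h n))))))

;; (max f over n < n0, plus 1) / (min g over n < n0), clamped to at least 1
;; so that n >= n0 (where f(n) < g(n) already holds) is covered as well.
(define (witness-constant f g n0)
  (max 1 (/ (+ (fold-prefix max f n0) 1)
            (fold-prefix min g n0))))

;; Finite check: does f(n) < c * g(n) hold for every n in 1 .. limit?
(define (bound-holds? f g c limit)
  (let loop ((n 1))
    (cond ((> n limit) #t)
          ((< (f n) (* c (g n))) (loop (+ n 1)))
          (else #f))))

;; Example: f(n) = n + 100, g(n) = n^2; f(n) < g(n) for all n >= 11.
(define (f-example n) (+ n 100))
(define (g-example n) (* n n))
(define c-example (witness-constant f-example g-example 11))
```

For this example the sketch gives c = (110 + 1) / 1 = 111. The clamp matters: with f = 1, 5, 5, … and g = 100, 6, 6, … and N = 2, the unclamped value 2/100 fails at n = 2, while c = 1 works.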

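Practice • $5n^3 + 12n + 300n^{1/2} + 1 \in \Theta(n^3)$ • $n^2 + 30 \in \Theta(n^2)$ • $350 \in \Theta(1)$ • $25n^{1/2} + 40\log_5 n + 100000 \in \Theta(n^{1/2})$ • $12\log_2(n+1) + 6\log(3n) \in \Theta(\log_2 n)$ • $2^{2n+3} + 37n^{15} + 9 \in \Theta(4^n)$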
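A worked derivation for the last practice item, as a sketch. The threshold $n \ge 64$ is one convenient choice of ours: at $n = 64$ we have $15\log_2 64 = 90 \le 128 = 2 \cdot 64$, and $2n - 15\log_2 n$ keeps increasing from there on. The asymptotic-version argument then extends the upper bound from $n \ge 64$ to all $n \ge 1$.

```latex
\begin{aligned}
2^{2n+3} &= 8 \cdot 4^n
  &&\Rightarrow\quad 8 \cdot 4^n \le 2^{2n+3} + 37n^{15} + 9 \quad (n \ge 1) \\
n^{15} &= 2^{15\log_2 n} \le 2^{2n} = 4^n
  &&(n \ge 64) \\
2^{2n+3} + 37n^{15} + 9 &\le 8 \cdot 4^n + 37 \cdot 4^n + 9 \cdot 4^n = 54 \cdot 4^n
  &&(n \ge 64)
\end{aligned}
```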

Comparing algorithms • The running time of a program implementing a specific algorithm may vary depending on: • the speed of the specific computer (e.g. CPU speed) • the specific implementation (e.g. programming language) • other factors (e.g. input characteristics) • Therefore we compare the number of operations required by different algorithms asymptotically, i.e. their order of growth as the input size increases to infinity

Efficient Algorithm • An algorithm whose number of operations and amount of required memory do not grow very fast as the input size increases • Which operations should we count? • What is the input size (e.g. the number of digits needed to represent the input, or the input number itself)? • Different inputs of the same size can cause different running times

Efficient algorithms (cont’d) • Input size: usually the size of the input representation (number of bits/digits) • We are usually interested in the worst-case running time, i.e. the number of operations in the worst case • We usually count primitive operations (arithmetic and/or comparisons)

Complexity Analysis • Reminder: • Space complexity: the number of deferred operations (in Scheme) • Time complexity: the number of primitive steps • Method I – Qualitative Analysis • Example: Factorial • Each recursive call produces one deferred operation (a multiplication), which takes constant time to perform • Total number of operations (time complexity): $\Theta(n)$ • Maximum number of deferred operations: $\Theta(n)$, reached during the last call • Method II – Recurrence Equations
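The slide does not show the factorial code, so the sketch below assumes the usual recursive definition. `fact-counted` is a hypothetical instrumented variant (not from the slides) that returns the value together with the number of multiplications performed, which comes out as n − 1, i.e. Θ(n). Each of those multiplications is still pending when the deepest call (n = 1) returns.

```scheme
;; Usual recursive factorial (assumed; the slide only names the example).
;; Each call leaves one multiplication pending until n reaches 1.
(define (fact n)
  (if (= n 1)
      1
      (* n (fact (- n 1)))))

;; Hypothetical instrumented variant: returns (value . multiplications).
(define (fact-counted n)
  (if (= n 1)
      (cons 1 0)
      (let ((sub (fact-counted (- n 1))))
        (cons (* n (car sub))
              (+ 1 (cdr sub))))))

(fact-counted 5) ; => (120 . 4)
```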

Recurrence Equations • Code: (define (sum-squares n) (if (= n 1) 1 (+ (square n) (sum-squares (- n 1))))) • Recurrence: $T(n) = T(n-1) + \Theta(1)$ • Solve: $T(n) \le T(n-1) + c \le T(n-2) + 2c \le T(n-3) + 3c \le \dots \le T(1) + (n-1)c \le nc$ (choosing $c \ge T(1)$), so $T(n) \in O(n)$

Recurrence Equations • Code: (define (fib n) (cond ((= n 0) 0) ((= n 1) 1) (else (+ (fib (- n 1)) (fib (- n 2)))))) • Recurrence: $T(n) = T(n-1) + T(n-2) + \Theta(1)$ • Solve: for simplicity, we solve $T(n) = 2T(n-1) + \Theta(1)$ instead; since $T(n-2) \le T(n-1)$, this gives an upper bound

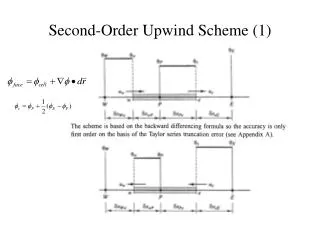

(1) T(n-1) T(n-1) 2(1) T(n-2) T(n-2) T(n-2) T(n-2) 4(1) T(1) T(1) T(1) T(1) 2n-1(1) Total: (2n) Recursion Trees T(n)

Fibonacci – Complexity • Time complexity: • $T(n) \le 2T(n-1) + \Theta(1)$ • Upper bound: $O(2^n)$ • Tight bound: $\Theta(\varphi^n)$, where $\varphi = (\sqrt{5}+1)/2$ • Space complexity: $\Theta(n)$
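The gap between the O(2^n) upper bound and the tight Θ(φ^n) bound shows up when counting calls. `fib-calls` is a hypothetical helper (not from the slides). Its count satisfies C(n) = C(n−1) + C(n−2) + 1 with C(0) = C(1) = 1, the same shape as the recurrence above. By induction C(n) = 2·fib(n+1) − 1, which grows like φ^n rather than 2^n.

```scheme
;; The slide's tree-recursive Fibonacci.
(define (fib n)
  (cond ((= n 0) 0)
        ((= n 1) 1)
        (else (+ (fib (- n 1)) (fib (- n 2))))))

;; Hypothetical helper: how many calls (fib n) makes, counting itself.
;; C(n) = C(n-1) + C(n-2) + 1, with C(0) = C(1) = 1.
(define (fib-calls n)
  (if (< n 2)
      1
      (+ 1 (fib-calls (- n 1)) (fib-calls (- n 2)))))

(fib-calls 10) ; => 177, i.e. 2 * (fib 11) - 1 = 2 * 89 - 1
```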

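Common Recurrences
• $T(n) = T(n-1) + \Theta(1) \Rightarrow T(n) \in \Theta(n)$
• $T(n) = T(n-1) + \Theta(n) \Rightarrow T(n) \in \Theta(n^2)$
• $T(n) = T(n/2) + \Theta(1) \Rightarrow T(n) \in \Theta(\log n)$
• $T(n) = T(n/2) + \Theta(n) \Rightarrow T(n) \in \Theta(n)$
• $T(n) = 2T(n-1) + \Theta(1) \Rightarrow T(n) \in \Theta(2^n)$
• $T(n) = 2T(n/2) + \Theta(1) \Rightarrow T(n) \in \Theta(n)$
• $T(n) = 2T(n/2) + \Theta(n) \Rightarrow T(n) \in \Theta(n \log n)$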
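As a sketch of where one row of the table comes from, here is an unrolling of $T(n) = T(n/2) + \Theta(n)$. It assumes for simplicity that $n = 2^k$ and writes the $\Theta(n)$ term as $cn$:

```latex
\begin{aligned}
T(n) &= T(n/2) + cn \\
     &= T(n/4) + c\tfrac{n}{2} + cn \\
     &= T(1) + c\left(2 + 4 + \dots + \tfrac{n}{2} + n\right) \\
     &= T(1) + c(2n - 2) \;\in\; \Theta(n)
\end{aligned}
```

The per-level work shrinks geometrically, so the top level dominates. In $T(n) = T(n-1) + \Theta(n)$ it shrinks only by a constant per level, which is why that row gives $\Theta(n^2)$.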

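Back to GCD • (define (gcd a b) (if (= b 0) a (gcd b (remainder a b)))) • Claim: the smaller number (b) is at least halved every second iteration. After two iterations $(a, b) \to (b, r_1) \to (r_1, r_2)$, with $r_1 = a \bmod b$ and $r_2 = b \bmod r_1$. If $r_1 \le b/2$, then $r_2 < r_1 \le b/2$; otherwise $r_2 = b - r_1 < b/2$ • For “double iterations” we therefore have $T(b) \le T(b/2) + \Theta(1)$ • Hence $T(b) \in O(\log b)$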
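The halving claim can be spot-checked. `my-gcd` is the slide's gcd, renamed so it does not shadow the built-in `gcd`. `gcd-steps` and `halves-every-two-steps?` are hypothetical helpers (not from the slides). The check mirrors the argument above: at each double iteration (a, b) becomes (r1, r2), and it verifies that 2·r2 < b.

```scheme
;; The slide's gcd, renamed to avoid shadowing the built-in gcd.
(define (my-gcd a b)
  (if (= b 0)
      a
      (my-gcd b (remainder a b))))

;; Hypothetical helper: number of remainder operations my-gcd performs.
(define (gcd-steps a b)
  (if (= b 0)
      0
      (+ 1 (gcd-steps b (remainder a b)))))

;; Hypothetical helper: along consecutive double iterations
;; (a, b) -> (r1, r2), check that the second argument drops below b/2.
(define (halves-every-two-steps? a b)
  (if (= b 0)
      #t
      (let ((r1 (remainder a b)))
        (if (= r1 0)
            #t
            (let ((r2 (remainder b r1)))
              (and (< (* 2 r2) b)
                   (halves-every-two-steps? r1 r2)))))))

(gcd-steps 89 55) ; => 9
```

On consecutive Fibonacci numbers, a classic slow case, 9 steps is in line with the roughly 2·log2 b steps the claim allows (2·log2 55 ≈ 11.6).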

Syntactic sugar – let • Adding new local variables: (let ((var1 exp1) (var2 exp2) ... (varN expN)) body) • Equivalent to (syntactic sugar for): ((lambda (var1 var2 … varN) body) exp1 … expN)

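Let – warm-up examples • (let ((x 3)) (+ x (* x 10))) is equivalent to ((lambda (x) (+ x (* x 10))) 3) => 33 • After (define x 5): (+ (let ((x 3)) (+ x (* x 10))) x) is equivalent to (+ ((lambda (x) (+ x (* x 10))) 3) x) => 38 • (let ((x 3) (y (+ x 2))) (* x y)) is equivalent to ((lambda (x y) (* x y)) 3 (+ x 2)), so (+ x 2) is evaluated with the outer x = 5 and the result is 21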
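The warm-up examples can be evaluated directly. The names `ex1-let`, `ex1-lambda`, `ex2` and `ex3` are ours, used only to hold the results. The last example is the instructive one: the init expressions of a `let` are evaluated outside its scope, exactly like the arguments of the equivalent lambda call.

```scheme
(define x 5)

;; let and its lambda desugaring give the same value: 3 + 30
(define ex1-let    (let ((x 3)) (+ x (* x 10))))
(define ex1-lambda ((lambda (x) (+ x (* x 10))) 3))

;; the let-bound x = 3 shadows the global x only inside the let body
(define ex2 (+ (let ((x 3)) (+ x (* x 10))) x))

;; (+ x 2) is evaluated outside the let, so it sees the global x = 5
(define ex3 (let ((x 3) (y (+ x 2))) (* x y)))

;; ex1-let => 33, ex1-lambda => 33, ex2 => 38, ex3 => 21
```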

Finding roots of equations • Input: • a continuous function $f(x)$ • $a, b$ such that $f(a) < 0 < f(b)$ • Goal: find a root of the equation $f(x) = 0$ • Relaxation: settle for a “close-enough” solution

General Binary Search • Search space: the set of all potential solutions • e.g. every real number in the interval [a, b] can be a root • Divide the search space into halves • e.g. [a, (a+b)/2) and [(a+b)/2, b] • Identify which half contains a solution • e.g. r is in [a, (a+b)/2) or r is in [(a+b)/2, b] • Find the solution recursively in the reduced search space • e.g. [a, (a+b)/2) • Solve small search spaces directly • e.g. if $|a - b| < \varepsilon$, take $r = (a+b)/2$

Back to finding roots • Theorem (intermediate value theorem): if $f(a) < 0 < f(b)$ and $f(x)$ is continuous, then there is a point $c \in (a, b)$ such that $f(c) = 0$ • Note: if $a > b$, the interval is $(b, a)$ • Half-interval method: • Divide $(a, b)$ into two halves • If the interval is small enough, return its middle point • Otherwise, use the theorem to select the correct half-interval • Repeat iteratively

Example (figure: the interval between a and b)

Example (continued) (figure: the interval between a and b is halved again and again…)

Scheme implementation • Here a is the end where f is negative and b is the end where f is positive: (define (search f a b) (let ((x (average a b))) (if (close-enough? a b) x (let ((y (f x))) (cond ((positive? y) (search f a x)) ((negative? y) (search f x b)) (else x))))))

We also need to define: (define (close-enough? x y) (< (abs (- x y)) 0.001)) • Determine the positive and negative ends: (define (half-interval-method f a b) (let ((fa (f a)) (fb (f b))) (cond ((and (negative? fa) (positive? fb)) (search f a b)) ((and (negative? fb) (positive? fa)) (search f b a)) (else (display "values are not of opposite signs")))))

Examples • $\sin(x) = 0$, $x \in (2, 4)$: (half-interval-method sin 2.0 4.0) => 3.14111328125… • $x^3 - 2x - 3 = 0$, $x \in (1, 2)$: (half-interval-method (lambda (x) (- (* x x x) (* 2 x) 3)) 1.0 2.0) => 1.89306640625
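Putting the pieces together gives a runnable sketch of the half-interval method with both examples. The two-argument `average` is not defined on these slides; the definition here, (a + b)/2, is an assumption. The loop stops once |a − b| < 0.001 and returns the midpoint of an interval that still brackets a root, so each result lies within 0.0005 of a true root.

```scheme
;; Assumed helper: the slides use average without defining it here.
(define (average a b) (/ (+ a b) 2))

(define (close-enough? x y)
  (< (abs (- x y)) 0.001))

;; Invariant: f(a) < 0 < f(b). a may be larger than b; close-enough? uses abs.
(define (search f a b)
  (let ((x (average a b)))
    (if (close-enough? a b)
        x
        (let ((y (f x)))
          (cond ((positive? y) (search f a x))
                ((negative? y) (search f x b))
                (else x))))))

;; Pass the negative end first, whichever of a and b it is.
(define (half-interval-method f a b)
  (let ((fa (f a))
        (fb (f b)))
    (cond ((and (negative? fa) (positive? fb)) (search f a b))
          ((and (negative? fb) (positive? fa)) (search f b a))
          (else (display "values are not of opposite signs")))))

(define root-of-sin (half-interval-method sin 2.0 4.0))
(define root-of-cubic
  (half-interval-method (lambda (x) (- (* x x x) (* 2 x) 3)) 1.0 2.0))
```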

Double • (define (double f) (lambda (x) (f (f x)))) • (define (inc x) (+ x 1)) • ((double inc) 5) => 7: inc(x) = x + 1, so f(f(x)) = x + 2 • ((double square) 5) => 625: square(x) = x^2, so f(f(x)) = x^4

Double Double • (((double double) inc) 5) => 9 • (double double) applies double twice: ((double double) inc) = (double (double inc)) • inc = x + 1, so ((double double) inc) = x + 4 • (((double double) square) 5) => 152587890625 • square = x^2, (double square) = x^4, so ((double double) square) = x^16

Double Double Double • (((double (double double)) inc) 5) => 21 • (double double) applies double twice • (double (double double)) applies (double double) twice, i.e. applies double 4 times, so f is applied 2^4 = 16 times • inc = x + 1, so ((double (double double)) inc) = x + 16
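The double examples from these three slides can be run together. `sq` is a hypothetical stand-in for the slides' `square`. It is named differently to avoid clashing with a built-in `square` in some Scheme systems.

```scheme
(define (double f) (lambda (x) (f (f x))))
(define (inc x) (+ x 1))
(define (sq x) (* x x))   ; hypothetical stand-in for the slides' square

((double inc) 5)                  ; => 7
((double sq) 5)                   ; => 625
(((double double) inc) 5)         ; => 9
(((double double) sq) 5)          ; => 152587890625, i.e. 5^16
(((double (double double)) inc) 5) ; => 21
```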

Compose • Compose f(x) and g(x) into f(g(x)): (define (compose f g) (lambda (x) (f (g x)))) • (define (inc x) (+ x 1)) • ((compose inc square) 3) => 10 • ((compose square inc) 3) => 16

Repeated f (compose now, execute later) • Apply f n times: f(x), f(f(x)), f(f(f(x))), … • (define (compose f g) (lambda (x) (f (g x)))) • (define (repeated f n) (if (= n 1) f (compose f (repeated f (- n 1))))) • ((repeated inc 5) 100) => 105 • ((repeated square 2) 5) => 625

Repeated f – iterative (do nothing until called later) • (define (repeated-iter f n x) (if (= n 1) (f x) (repeated-iter f (- n 1) (f x)))) • (define (repeated f n) (lambda (x) (repeated-iter f n x)))

Repeated f – iterative II (compose now, execute later) • (define (repeated f n) (define (repeated-iter count accum) (if (= count n) accum (repeated-iter (+ count 1) (compose f accum)))) (repeated-iter 1 f))
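The three versions of repeated can be compared side by side. They are renamed here so they can coexist in one file: `repeated`, `repeated-lazy` and `repeated-eager` are our names, not the slides'. `sq` again stands in for `square`, and all versions assume n ≥ 1.

```scheme
(define (compose f g) (lambda (x) (f (g x))))
(define (inc x) (+ x 1))
(define (sq x) (* x x))   ; hypothetical stand-in for square

;; Recursive version: builds the chain f o f o ... o f as soon as
;; repeated is called.
(define (repeated f n)
  (if (= n 1)
      f
      (compose f (repeated f (- n 1)))))

;; Iterative version: returns a procedure that applies f n times
;; only once it is given an argument.
(define (repeated-lazy f n)
  (define (iter k x)
    (if (= k 1)
        (f x)
        (iter (- k 1) (f x))))
  (lambda (x) (iter n x)))

;; Iterative II: composes step by step now, executes later.
(define (repeated-eager f n)
  (define (iter count accum)
    (if (= count n)
        accum
        (iter (+ count 1) (compose f accum))))
  (iter 1 f))

((repeated inc 5) 100)     ; => 105
((repeated-lazy sq 2) 5)   ; => 625
```

`repeated-lazy` does no work until the returned procedure is called, while the other two build the whole chain of n compositions up front.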

Repeatedly smooth a function • Smoothing a function f gives g(x) = (f(x - dx) + f(x) + f(x + dx)) / 3 • (define (average x y z) (/ (+ x y z) 3)) • (define (smooth f) (let ((dx 0.1)) (lambda (x) (average (f (- x dx)) (f x) (f (+ x dx)))))) • (define (repeated-smooth f n) ((repeated smooth n) f))
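A runnable version of the smoothing slide. `compose` and `repeated` are copied from the earlier slides. The three-argument average is renamed `average3` (our name) so it does not clash with the two-argument `average` used by `search`. A quick sanity check: ((x − dx)² + x² + (x + dx)²)/3 = x² + 2dx²/3, so each smoothing of x² adds 2dx²/3. Three smoothings with dx = 0.1 therefore give x² + 0.02, and a linear function is left unchanged.

```scheme
(define (compose f g) (lambda (x) (f (g x))))

(define (repeated f n)
  (if (= n 1)
      f
      (compose f (repeated f (- n 1)))))

;; Three-argument average, renamed (hypothetical name) to avoid clashing
;; with the two-argument average used by search.
(define (average3 x y z) (/ (+ x y z) 3))

;; One smoothing step with the slide's fixed dx = 0.1.
(define (smooth f)
  (let ((dx 0.1))
    (lambda (x)
      (average3 (f (- x dx)) (f x) (f (+ x dx))))))

;; Apply smooth to f, n times.
(define (repeated-smooth f n)
  ((repeated smooth n) f))

((repeated-smooth (lambda (x) (* x x)) 3) 1.0) ; approximately 1.02
```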