Algorithm Design Methodologies

Dynamic programming is an algorithm design methodology used primarily to solve optimization problems. These problems often have many feasible solutions, and the goal is to identify an optimal one. This article covers the key elements of dynamic programming, optimal substructure and overlapping subproblems, and works through matrix chain multiplication, where the goal is to find the parenthesization of a matrix chain that minimizes the number of scalar multiplications.

Presentation Transcript

Algorithm Design Methodologies • Divide & Conquer • Dynamic Programming • Backtracking

Optimization Problems • Dynamic programming is typically applied to optimization problems • In such problems, there are many feasible solutions • We wish to find a solution with the optimal (maximum or minimum) value • Examples: minimum spanning tree, shortest paths

Matrix Chain Multiplication • Multiplying two matrices A (p by q) and B (q by r) produces a matrix of dimensions (p by r) and takes p * q * r “simple” scalar multiplications
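The p * q * r count can be checked with a minimal Python sketch of the schoolbook triple loop. The helper name `multiply_counting` and the list-of-rows matrix representation are assumptions made for illustration; they are not part of the original slides.

```python
def multiply_counting(A, B):
    """Multiply A (p by q) and B (q by r), counting scalar multiplications."""
    p, q, r = len(A), len(B), len(B[0])
    # The inner dimensions must agree: every row of A has q entries.
    assert all(len(row) == q for row in A)
    C = [[0] * r for _ in range(p)]
    count = 0
    for i in range(p):
        for j in range(r):
            for k in range(q):
                C[i][j] += A[i][k] * B[k][j]
                count += 1  # one scalar multiplication per innermost step
    return C, count


A = [[1, 2, 3], [4, 5, 6]]                       # 2 by 3
B = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0]]   # 3 by 4
C, count = multiply_counting(A, B)
print(len(C), len(C[0]), count)  # result is 2 by 4; count is 2 * 3 * 4 = 24
```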

Matrix Chain Multiplication • Given a chain of matrices to multiply, A1 * A2 * A3 * A4, we must decide how to parenthesize the matrix chain: • (A1*A2)*(A3*A4) • A1 * (A2 * (A3*A4)) • A1 * ((A2*A3) * A4) • (A1 * (A2*A3)) * A4 • ((A1*A2) * A3) * A4
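The five parenthesizations above can be enumerated, together with their costs, by a short recursive sketch in Python. The generator name `parenthesizations` and the dimension list `p` (matrix Ai is p[i-1] by p[i]) are assumptions for illustration, and the sample dimensions are made up.

```python
def parenthesizations(p, i, j):
    """Yield (expression, cost) for every way to parenthesize Ai * ... * Aj.

    Matrix Ak is assumed to have dimensions p[k-1] by p[k].
    """
    if i == j:
        yield f"A{i}", 0  # a single matrix needs no multiplications
        return
    for k in range(i, j):  # split into Ai..Ak and Ak+1..Aj
        for left, left_cost in parenthesizations(p, i, k):
            for right, right_cost in parenthesizations(p, k + 1, j):
                # Multiplying a (p[i-1] by p[k]) result by a (p[k] by p[j]) result.
                join_cost = p[i - 1] * p[k] * p[j]
                yield f"({left}*{right})", left_cost + right_cost + join_cost


p = [10, 100, 5, 50, 20]  # hypothetical dimensions for A1..A4
for expression, cost in parenthesizations(p, 1, 4):
    print(expression, cost)
```

Brute-force enumeration like this is only practical for short chains, since the number of parenthesizations grows exponentially with the chain length.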

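Matrix Chain Multiplication • We define m[i,j] as the minimum number of scalar multiplications needed to compute Ai..j = Ai * … * Aj, where matrix Ai has dimensions p[i-1] by p[i] • Thus, the cheapest cost of multiplying the entire chain of n matrices is m[1,n] • If i = j, the chain is a single matrix and m[i,i] = 0 • If i < j, we know m[i,j] = m[i,k] + m[k+1,j] + p[i-1]*p[k]*p[j] for some value of k in [i,j), namely the k that minimizes this sum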
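The recurrence can be filled in bottom-up, shortest chains first. The following is a minimal Python sketch under the assumption that `p` is a list with matrix Ai of dimensions p[i-1] by p[i]; the function name `matrix_chain_order` and the split table `s` are illustrative additions, not taken from the slides.

```python
def matrix_chain_order(p):
    """Return (m, s): m[i][j] is the minimum cost of computing Ai..j,
    and s[i][j] is a split point k that achieves it (1-based indices)."""
    n = len(p) - 1  # number of matrices in the chain
    m = [[0] * (n + 1) for _ in range(n + 1)]  # m[i][i] = 0 already
    s = [[0] * (n + 1) for _ in range(n + 1)]
    for length in range(2, n + 1):           # length of the subchain
        for i in range(1, n - length + 2):
            j = i + length - 1
            m[i][j] = float("inf")
            for k in range(i, j):            # try every k in [i, j)
                cost = m[i][k] + m[k + 1][j] + p[i - 1] * p[k] * p[j]
                if cost < m[i][j]:
                    m[i][j] = cost
                    s[i][j] = k
    return m, s


m, s = matrix_chain_order([10, 100, 5, 50])
# (A1*A2)*A3 costs 10*100*5 + 10*5*50 = 7500, the cheaper of the two options.
print(m[1][3], s[1][3])
```

The three nested loops give a running time proportional to n^3 and a table of size proportional to n^2.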

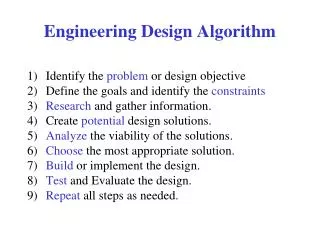

Elements of Dynamic Programming • Optimal Substructure • Overlapping Subproblems

Optimal Substructure • This means the optimal solution for a problem contains within it optimal solutions for subproblems • For example, if the optimal solution for the chain A1*A2*…*A6 is ((A1*(A2*A3))*A4)*(A5*A6), then this implies the optimal solution for the subchain A1*A2*…*A4 is ((A1*(A2*A3))*A4)
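Optimal substructure can be observed directly on a concrete chain. The sketch below is in Python; the function name `best_parenthesization` and the sample dimensions are assumptions for illustration, so the optimal parenthesization it finds differs from the hypothetical one in the slide.

```python
from functools import lru_cache


def best_parenthesization(p, i, j):
    """Return (cost, expression) for the cheapest way to compute Ai..j,
    assuming matrix Ak has dimensions p[k-1] by p[k]."""

    @lru_cache(maxsize=None)
    def best(i, j):
        if i == j:
            return 0, f"A{i}"
        # Take the cheapest split; ties are broken by the expression string.
        return min(
            (
                best(i, k)[0] + best(k + 1, j)[0] + p[i - 1] * p[k] * p[j],
                f"({best(i, k)[1]}*{best(k + 1, j)[1]})",
            )
            for k in range(i, j)
        )

    return best(i, j)


p = [30, 35, 15, 5, 10, 20, 25]  # hypothetical dimensions for A1..A6
cost, whole = best_parenthesization(p, 1, 6)
_, left_part = best_parenthesization(p, 1, 3)
print(whole, cost)
# The optimal solution for A1..A3, solved on its own, appears inside the
# optimal solution for the whole chain.
print(left_part, left_part in whole)
```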

Overlapping Subproblems • Dynamic programming is appropriate when a recursive solution would revisit the same subproblems over and over • In contrast, a divide and conquer solution is appropriate when new subproblems are produced at each step of the recursion
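How often subproblems repeat in matrix chain multiplication can be measured by counting calls. This Python sketch compares a plain recursive solution with a memoized one; the function name `count_calls` and the sample dimensions are assumptions for illustration.

```python
from functools import lru_cache


def count_calls(p):
    """Count how many times each version evaluates a subproblem m[i,j]."""
    n = len(p) - 1
    calls = {"naive": 0, "memo": 0}

    def naive(i, j):
        calls["naive"] += 1
        if i == j:
            return 0
        return min(
            naive(i, k) + naive(k + 1, j) + p[i - 1] * p[k] * p[j]
            for k in range(i, j)
        )

    @lru_cache(maxsize=None)
    def memo(i, j):
        calls["memo"] += 1  # runs only the first time (i, j) is requested
        if i == j:
            return 0
        return min(
            memo(i, k) + memo(k + 1, j) + p[i - 1] * p[k] * p[j]
            for k in range(i, j)
        )

    assert naive(1, n) == memo(1, n)  # both versions agree on the answer
    return calls


print(count_calls([30, 35, 15, 5, 10, 20, 25]))
```

For this chain of six matrices the plain recursion evaluates 243 subproblem calls, while only 21 distinct subproblems (one per pair i <= j) exist, which is the overlap that memoization or a bottom-up table exploits.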