Optimization Issues for Huge Datasets and Long Computation

Explore optimization problems for large datasets, long computations, global solutions, and more with applications in data mining, model optimization, and solution acceleration.


Presentation Transcript

Optimization Issues for Huge Datasets and Long Computation • Michael Ferris, University of Wisconsin, Computer Sciences (ferris@cs.wisc.edu) • Qun Chen, Jin-Ho Lim, Jeff Linderoth, Miron Livny, Todd Munson, Mary Vernon, Meta Voelker

Update on Gamma Knife • In use at U. Maryland Hospitals • Covered by Business Week (Apr 2001) • Better models, faster solution • Requires less user input • Skeletonization is key improvement

[Figure panels: a. Target area • b. A single line skeleton of an image • c. 8 initial shots are identified (1-4mm, 2-8mm, 5-14mm) • d. An optimal solution: 8 shots (1-4mm, 2-8mm, 5-14mm)] Skeleton Starting Points

Data Mining & Optimization Prediction, Categorization, Separation Equations, LP, QP, MIP, NLP Serial, Parallel, Condor GAMS, Matlab, so/dll

Optimization • Global vs. Local • Exact vs. Approximate • Constrained vs. Unconstrained • Stochastic vs. Deterministic • Large scale vs. Small scale • Fast convergence vs. Termination • CPU + Memory + Smarts

MIP formulation • minimize $c^T x$ subject to $Ax \le b$, $l \le x \le u$, and some $x_j$ integer • Problems are specified in an application-convenient format: GAMS, AMPL, or MPS
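To make the formulation concrete, here is a minimal sketch in Python, assuming the constraints read Ax ≤ b and l ≤ x ≤ u (the inequality directions are an assumption) and that every variable is integer. The data `c`, `A`, `b` and the function name `solve_small_mip` are made up for illustration; a real instance would come from a GAMS, AMPL, or MPS file and be passed to a proper solver rather than enumerated.

```python
from itertools import product

def solve_small_mip(c, A, b, lower, upper):
    """Brute force: enumerate every integer point in the box l <= x <= u
    and keep the feasible point (Ax <= b) with the smallest c^T x."""
    best_x, best_val = None, float("inf")
    ranges = [range(lo, hi + 1) for lo, hi in zip(lower, upper)]
    for x in product(*ranges):
        feasible = all(
            sum(a_ij * x_j for a_ij, x_j in zip(row, x)) <= b_i
            for row, b_i in zip(A, b)
        )
        if not feasible:
            continue
        val = sum(c_j * x_j for c_j, x_j in zip(c, x))
        if val < best_val:
            best_x, best_val = x, val
    return best_x, best_val

# Hypothetical toy data: minimize -x0 - 2*x1
# subject to x0 + x1 <= 4, x0 + 3*x1 <= 6, 0 <= x <= 4, x integer.
c = [-1, -2]
A = [[1, 1], [1, 3]]
b = [4, 6]
best_x, best_val = solve_small_mip(c, A, b, lower=[0, 0], upper=[4, 4])
print(best_x, best_val)  # (3, 1) -5
```

Enumeration grows exponentially with the number of variables, which is why practical codes use branch and bound instead.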

Data delivery: pay-per-view • Optimization model for regional caches: minimize: $C_{remote} + P\,C_{regional}$ over all possible cached objects/segments, subject to: • $C_{regional} \le N_{channels}$ • regional storage $\le N_{segments}$ • regional server stores 0, k or K segments of each object • MIP (large number of objects/segments)

The “Seymour Problem” • Set covering problem used in proof of four color theorem • CPLEX 6.0 and Condor (2 option files) • Running since June 23, 1999 • Currently >590 days CPU time per job • (13 million nodes; 2.4 million nodes)

FAT COP • FAT - large # of processors • opportunistic environment (Condor) • COP - Master Worker control • fault tolerant: task exit, host suspend • portable parallel programming • Mixed Integer Program Solver • Branch and Bound: LP relaxations • MPS file, AMPL or GAMS input
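The branch-and-bound idea can be sketched on a toy problem. This is not FATCOP's code: it swaps in a 0/1 knapsack (a maximization problem) because its LP relaxation can be solved by a greedy fill, so no LP solver is needed. The names `fractional_bound` and `branch_and_bound` and the item data are assumptions for illustration only.

```python
def fractional_bound(items, capacity, value_so_far, start):
    """Upper bound from the LP relaxation of the remaining knapsack:
    fill greedily in ratio order and allow a fraction of one item."""
    bound = value_so_far
    for value, weight in items[start:]:
        if weight <= capacity:
            capacity -= weight
            bound += value
        else:
            bound += value * capacity / weight
            break
    return bound

def branch_and_bound(items, capacity):
    """Depth-first branch and bound over items given as (value, weight)."""
    # Sorting by value/weight makes the greedy fill the exact LP optimum.
    items = sorted(items, key=lambda it: it[0] / it[1], reverse=True)
    best, nodes = 0, 0
    stack = [(0, capacity, 0)]  # (next item index, remaining capacity, value)
    while stack:
        idx, cap, val = stack.pop()
        nodes += 1
        best = max(best, val)  # the partial selection is itself feasible
        if idx == len(items):
            continue
        if fractional_bound(items, cap, val, idx) <= best:
            continue  # prune: the relaxation cannot beat the incumbent
        value, weight = items[idx]
        stack.append((idx + 1, cap, val))  # branch x_idx = 0
        if weight <= cap:
            stack.append((idx + 1, cap - weight, val + value))  # x_idx = 1
    return best, nodes

# Hypothetical toy data: (value, weight) pairs and a capacity of 50.
best, nodes = branch_and_bound([(60, 10), (100, 20), (120, 30)], 50)
print(best, nodes)
```

In a master-worker setting, each entry on the stack is a candidate task that could be handed to a worker, and the incumbent `best` is the information workers would need to share.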

[Diagram labels] Application Problem • Input: GAMS, AMPL, MPS • FATCOP • LPSOLVER INTERFACE: CPLEX, OSL, SOPLEX, MINOS, ... • MW • Condor-PVM, PVM, Internet Protocol

MIP Technology • Each task is a subtree, time limit • Diving heuristic • Cutting planes (global) • Pseudocosts • Preprocessing • Master checkpoint • Worker has state, how to share info?

FATCOP Daily Log Note machine reboot at approx 3:00 am (night)

Back to Seymour • Schmieta, Pataki, Linderoth and MCF • explored to depth 8 in tree • applied cuts at each of these 256 nodes • solved in parallel, using whatever resources available (CPLEX, FATCOP,...) • Problem solved with over 1 year CPU • over 10 million nodes, 11,000 hours
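The decomposition above (go to depth 8, then solve the 2^8 = 256 resulting nodes independently) can be sketched as follows. This is a stand-in, not the approach actually used: the toy set covering instance `SETS` is hypothetical, each "subtree" is solved by plain enumeration rather than by cuts plus CPLEX or FATCOP, and the first 8 variables are fixed in a set order, whereas a real tree chooses branching variables node by node.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product

N_SETS, N_ELEMENTS, DEPTH = 12, 10, 8
# Hypothetical toy instance: set i covers three elements of range(N_ELEMENTS).
SETS = [{i % N_ELEMENTS, (3 * i + 1) % N_ELEMENTS, (7 * i + 2) % N_ELEMENTS}
        for i in range(N_SETS)]

def cover_size(x):
    """Number of chosen sets if x covers every element, else infinity."""
    covered = set()
    for chosen, s in zip(x, SETS):
        if chosen:
            covered |= s
    return sum(x) if len(covered) == N_ELEMENTS else float("inf")

def solve_subtree(prefix):
    """Worker task: the first DEPTH variables are fixed by `prefix`;
    enumerate the remaining ones and return the best cover size."""
    return min(cover_size(prefix + tail)
               for tail in product((0, 1), repeat=N_SETS - DEPTH))

def solve_in_parallel(max_workers=4):
    """Master: create one task per depth-DEPTH node and collect results."""
    prefixes = list(product((0, 1), repeat=DEPTH))  # 2**8 = 256 subtree roots
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(solve_subtree, prefixes))
    return len(prefixes), min(results)

n_subproblems, best = solve_in_parallel()
print(n_subproblems, best)
```

Threads are used here only to show the task structure. Because the subproblems share no state, they could equally be shipped to separate machines, which is what makes this kind of split attractive in an opportunistic environment.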

Seymour Node 319 • FATCOP • 47.0 hrs with 2,887,808 nodes • average number of machines used is 108 • CPLEX • 12 days, 10 hrs with 356,600 nodes • single machine, clique cuts useful

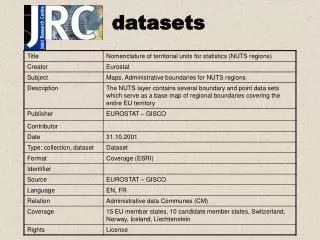

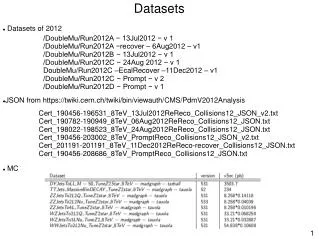

Large datasets • Enormous computational resources can sometimes facilitate solution • X-validation, slice modeling • What about the data? • In particular, what if the problem does not fit in core?
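One common answer to the "does not fit in core" question is to stream the data in chunks and keep only small summary quantities in memory. The sketch below is an illustration of that general idea, not a method taken from the talk: it fits a least-squares line from running sums, and the generator `chunks` is a hypothetical stand-in for reading blocks from disk.

```python
def chunks(n_rows, chunk_size):
    """Hypothetical data source: yields rows (x, y) with y = 2x + 1,
    one chunk at a time, as a stand-in for reading blocks from disk."""
    for start in range(0, n_rows, chunk_size):
        stop = min(start + chunk_size, n_rows)
        yield [(x, 2 * x + 1) for x in range(start, stop)]

def fit_line_streaming(chunk_iter):
    """Least-squares fit of y = slope * x + intercept using only five
    running sums, so memory use does not grow with the data size."""
    n = sx = sy = sxx = sxy = 0
    for chunk in chunk_iter:
        for x, y in chunk:
            n += 1
            sx += x
            sy += y
            sxx += x * x
            sxy += x * y
    slope = (n * sxy - sx * sy) / (n * sxx - sx * sx)
    intercept = (sy - slope * sx) / n
    return slope, intercept

slope, intercept = fit_line_streaming(chunks(1000, 100))
print(slope, intercept)
```

Only one chunk is resident at a time, so reading the next chunk could overlap with processing the current one.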

Definition: Example: Example: Componentwise definition: NCP functions
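As a hedged reminder of the standard notion (not necessarily the examples shown on the slide): an NCP function φ satisfies φ(a, b) = 0 exactly when a ≥ 0, b ≥ 0, and ab = 0. Two well-known instances are the min function and the Fischer-Burmeister function; the Python names below are chosen for illustration.

```python
import math

def ncp_min(a, b):
    """min(a, b) is zero exactly when a, b >= 0 and a * b == 0."""
    return min(a, b)

def ncp_fischer_burmeister(a, b):
    """Fischer-Burmeister function: sqrt(a^2 + b^2) - a - b."""
    return math.hypot(a, b) - a - b

def is_complementary(a, b, tol=1e-12):
    """Direct check of the complementarity conditions."""
    return a >= -tol and b >= -tol and abs(a * b) <= tol

for a, b in [(0, 3), (2, 0), (0, 0), (1, 1), (-1, 0), (-1, -1)]:
    print((a, b), ncp_min(a, b), ncp_fischer_burmeister(a, b),
          is_complementary(a, b))
```

Applied componentwise to a vector x and a function value F(x), such a function turns the complementarity conditions into a system of equations.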

How can you use these? • Specialized codes • Asynchronous I/O • Specialized platforms • Condor (executable per architecture) • Specific input formats • GAMS, Matlab • Handholding operation

Model centric toolbox Specialized input Data warehouse GAMS optimization model Solvers LP,QP,MIP, NLP,MINLP Model data exchange Matlab programming environment Other model formats gms2xx Condor Resource Manager