Reliability, Validity, and Utility in Selection

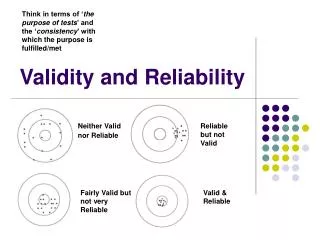



Requirements for Selection Systems • Reliable • Valid • Fair • Effective

Reliability • The degree to which a measure is free from random error • Stability, Consistency, Accuracy, Dependability • Represented by a correlation coefficient: rxx • A perfect positive relationship equals +1.0 • A perfect negative relationship equals -1.0 • Should be .80 or higher for selection

Factors that Affect Reliability • Test length – longer tests tend to be more reliable • Homogeneity of test items – higher r if all items measure the same construct • Adherence to standardized procedures results in higher reliability

Factors that Negatively Affect Reliability • Poorly constructed devices • User error • Unstable attributes • Item difficulty – items that are too hard or too easy restrict score variance and lower reliability

Standardized Administration • All test takers receive: • Test items presented in the same order • Same time limit • Same test content • Same administration method • Same method of scoring responses

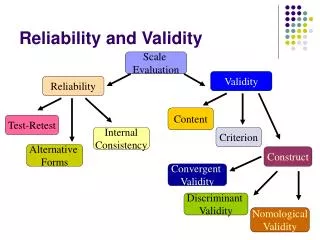

Types of Reliability • Test-retest • Alternate Forms • Internal Consistency • Interrater

Test-Retest Reliability • Temporal stability • Obtained by correlating pairs of scores from the same person on two different administrations of the same test • Drawbacks – maturation; learning; practice; memory
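
The correlation described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed procedure: the function name `pearson_r` and the scores are hypothetical, and a sample of five people is far too small for a real reliability study.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least 2 scores")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of cross-products and sums of squared deviations
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

# Hypothetical scores for the same five people on two administrations
time_1 = [82, 75, 91, 68, 88]
time_2 = [80, 78, 93, 65, 85]

r_xx = pearson_r(time_1, time_2)
print(f"Test-retest reliability estimate: {r_xx:.2f}")
```

The same calculation applies whenever a reliability estimate comes from pairs of scores, for example scores on two alternate forms or ratings from two raters.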

Alternate Forms • Form stability; aka parallel forms, equivalent forms • Two different versions of a test that have equal means, standard deviations, item content, and item difficulties • Obtained by correlating pairs of scores from the same person on two different versions of the same test • Drawbacks: need to create 2x items (cost); practice; learning; maturation

Internal Consistency - Split-half Reliability • Obtained by correlating the two sets of scores from equivalent halves of a single test administered once • r must be adjusted statistically to correct for test length • Spearman-Brown Prophecy formula • Advantages: efficient; eliminates some of the drawbacks seen in other methods
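
The Spearman-Brown correction is short enough to show directly. The sketch below assumes the general form of the formula, in which `k` is the factor by which the test is lengthened (`k = 2` for the split-half case); the function name and the half-test correlation of .70 are illustrative only.

```python
def spearman_brown(r, k=2.0):
    """Predicted reliability when a test is made k times as long.

    r: reliability of the current test (or the correlation between halves)
    k: length factor; k=2 is the split-half correction
    """
    if k <= 0:
        raise ValueError("k must be positive")
    return (k * r) / (1 + (k - 1) * r)

r_halves = 0.70  # hypothetical correlation between the two halves
full_test = spearman_brown(r_halves)
print(f"Corrected split-half reliability: {full_test:.2f}")
```

With larger values of `k`, the same function shows why longer tests tend to be more reliable, provided the added items are comparable to the existing ones.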

Internal Consistency – Coefficient Alpha • Represents the degree of correlation among all the items on a scale calculated from a single administration of a single form of a test • Obtained by averaging all possible split-half reliability estimates • Drawback: test must be uni-dimensional; can be artificially inflated if test is lengthened • Advantages: same as split-half • Most commonly used method of estimating reliability
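
In practice, coefficient alpha is usually computed from item and total-score variances rather than by averaging split halves. The following is a minimal sketch using that standard computational form; the function name `cronbach_alpha` and the response data are hypothetical.

```python
from statistics import variance

def cronbach_alpha(item_scores):
    """Coefficient alpha from a list of rows (one row per person, one column per item)."""
    k = len(item_scores[0])  # number of items
    if k < 2:
        raise ValueError("alpha needs at least two items")
    items = list(zip(*item_scores))  # transpose: one tuple of scores per item
    item_var_sum = sum(variance(item) for item in items)
    total_var = variance([sum(row) for row in item_scores])
    return (k / (k - 1)) * (1 - item_var_sum / total_var)

# Hypothetical responses: five people, four items rated 1-5
responses = [
    [4, 5, 4, 5],
    [2, 3, 2, 2],
    [3, 3, 4, 3],
    [5, 4, 5, 5],
    [1, 2, 1, 2],
]

alpha = cronbach_alpha(responses)
print(f"Coefficient alpha: {alpha:.2f}")
```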

Interrater Reliability • Degree of agreement that exists between two or more raters or scorers • Used to determine if scores represent rater characteristics rather than what is being rated • Obtained by correlating ratings made by one rater with those of other raters for each person being rated

Validity • Extent to which inferences based on test scores are justified given the evidence • Is the test measuring what it is supposed to measure? • Extent to which performance on the measure is associated with performance on the job. • Builds upon reliability, i.e. reliability is necessary but not sufficient for validity • No single best strategy
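
One way to see why reliability is necessary but not sufficient: under classical test theory, the observed validity coefficient cannot exceed the square root of the product of the two reliabilities. Here $r_{xy}$ is the test-criterion correlation, $r_{xx}$ the test reliability, and $r_{yy}$ the criterion reliability; the numbers in the second line are illustrative only.

$$
r_{xy} \le \sqrt{r_{xx}\, r_{yy}}
$$

$$
r_{xx} = .80,\; r_{yy} = .60 \;\Rightarrow\; r_{xy} \le \sqrt{.48} \approx .69
$$

The bound is only a ceiling. A highly reliable test can still have a validity near zero if it measures something unrelated to job performance.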

Types of Validity • Content Validity • Criterion Validity • Construct Validity • Face Validity

Content Validity • Degree to which a test taps into the domain or “content” of what it is supposed to measure • Established by demonstrating that the items, questions, or problems posed by the test are a representative sample of the kinds of situations or problems that occur on the job • Determined through Job Analysis • Identification of essential tasks • Identification of KSAOs required to complete tasks • Relies on judgment of SMEs • Can also be done informally

Criterion Validity • Degree to which a test is related (statistically) to a measure of job performance • Statistically represented by rxy • Usually ranges from .30 to .55 for effective selection • Can be established two ways: • Concurrent Validity • Predictive Validity

Concurrent Validity • Test scores and criterion measure scores are obtained at the same time & correlated with each other • Drawbacks: • Must involve current employees, which results in range restriction & non-representative sample • Current employees will not be as motivated to do well on the test as job seekers

Predictive Validity • Test scores are obtained prior to hiring, and criterion measure scores are obtained after being on the job; scores are then correlated with each other • Drawbacks: • Will have range restriction unless all applicants are hired • Must wait several months for job performance (criterion) data
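
Range restriction, listed as a drawback of both designs, lowers the observed validity coefficient. The slides do not name a remedy; one commonly used adjustment for direct restriction on the test is Thorndike's Case II correction, sketched below. The function name and all numbers are hypothetical, and the correction assumes that the applicant-pool standard deviation is known.

```python
from math import sqrt

def correct_for_range_restriction(r_restricted, sd_applicants, sd_selected):
    """Thorndike Case II estimate of the validity in the unrestricted applicant pool.

    r_restricted:  validity observed in the restricted (hired) group
    sd_applicants: test-score SD among all applicants
    sd_selected:   test-score SD among those actually hired
    """
    u = sd_applicants / sd_selected  # ratio of unrestricted to restricted SD
    r2 = r_restricted ** 2
    return (r_restricted * u) / sqrt(1 - r2 + r2 * u ** 2)

observed = 0.30  # hypothetical validity among hired employees
corrected = correct_for_range_restriction(observed, sd_applicants=10, sd_selected=5)
print(f"Observed r = {observed:.2f}, corrected r = {corrected:.2f}")
```

When the two standard deviations are equal, the function returns the observed correlation unchanged, which is a quick sanity check on the formula.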

Construct Validity • Degree to which a test measures the theoretical construct it purports to measure • Construct – unobservable, underlying, theoretical trait

Construct Validity (cont.) • Often determined through judgment, but can be supported with statistical evidence: • Test homogeneity (high alpha; factor analysis) • Convergent validity evidence - test score correlates with other measures of same or similar construct • Discriminant or divergent validity evidence – test score does not correlate with measures of other theoretically dissimilar constructs

Additional Representations of Validity • Face Validity – degree to which a test appears to measure what it purports to measure; i.e., do the test items appear to represent the domain being evaluated? • Physical Fidelity – do physical characteristics of test represent reality • Psychological Fidelity – do psychological demands of test reflect real-life situation

Where to Obtain Reliability & Validity Information • Derive it yourself • Publications that contain information on tests • e.g., Buros’ Mental Measurements Yearbook • Test publishers – should have data available, often in the form of a technical report

Selection System Utility • Taylor-Russell Tables – estimate percentage of employees selected by a test who will be successful on the job • Expectancy Charts – similar to T-R, but not as accurate • Lawshe Tables – estimate probability of job success for a single applicant
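
An expectancy chart can be built directly from existing employee data. The sketch below groups hypothetical employees into test-score bands and reports the percentage rated successful in each band; the function name, band boundaries, and data are all assumptions made for illustration.

```python
def expectancy_chart(scores, successful, bands):
    """Percentage of successful employees within each inclusive (low, high) score band."""
    chart = {}
    for low, high in bands:
        outcomes = [ok for s, ok in zip(scores, successful) if low <= s <= high]
        # None marks a band that contains no employees
        chart[(low, high)] = 100 * sum(outcomes) / len(outcomes) if outcomes else None
    return chart

# Hypothetical test scores and success ratings for ten current employees
scores = [55, 62, 68, 71, 74, 79, 83, 86, 91, 95]
successful = [False, False, True, False, False, True, True, True, True, True]
bands = [(50, 69), (70, 84), (85, 100)]

chart = expectancy_chart(scores, successful, bands)
for (low, high), pct in chart.items():
    print(f"Scores {low}-{high}: {pct:.0f}% successful")
```

A rising percentage across bands suggests that higher test scores go with better odds of success. With so few people per band, the percentages are unstable, which is one reason such charts are less accurate than table-based estimates.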

Methods for Selection Decisions • Top-down – those with the highest scores are selected first • Passing or cutoff score – everyone above a certain score is hired • Banding – all scores within a statistically determined interval or band are considered equal • Multiple hurdles – several devices are used; applicants are eliminated at each step
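
The four decision methods can be compared on a small applicant pool. This is a sketch under stated assumptions: all names and scores are hypothetical, the cutoff is treated as "at or above", and the band width uses one common convention (1.96 times the standard error of the difference) that the slides do not specify.

```python
from math import sqrt

def top_down(applicants, openings):
    """Select the highest scorers first until the openings are filled."""
    ranked = sorted(applicants, key=lambda a: a[1], reverse=True)
    return [name for name, _ in ranked[:openings]]

def passing_score(applicants, cutoff):
    """Everyone scoring at or above the cutoff passes."""
    return [name for name, score in applicants if score >= cutoff]

def band(applicants, sd, reliability, z=1.96):
    """Treat all scores within one band of the top score as equivalent."""
    sem = sd * sqrt(1 - reliability)  # standard error of measurement
    width = z * sem * sqrt(2)         # z times the standard error of the difference
    top = max(score for _, score in applicants)
    return [name for name, score in applicants if score >= top - width]

def multiple_hurdles(applicants, hurdles):
    """applicants: {name: {device: score}}; hurdles: ordered list of (device, cutoff)."""
    remaining = list(applicants)
    for device, cutoff in hurdles:
        # Applicants who fail a hurdle are eliminated before the next one
        remaining = [n for n in remaining if applicants[n][device] >= cutoff]
    return remaining

pool = [("Ana", 92), ("Ben", 88), ("Cy", 86), ("Dee", 79), ("Eli", 70)]
print(top_down(pool, openings=2))
print(passing_score(pool, cutoff=75))
print(band(pool, sd=8, reliability=0.90))

staged = {
    "Ana": {"test": 92, "interview": 6},
    "Ben": {"test": 88, "interview": 9},
    "Dee": {"test": 79, "interview": 8},
}
print(multiple_hurdles(staged, [("test", 80), ("interview", 7)]))
```

Running the functions on the same pool makes the trade-off visible: top-down selection picks the fewest people, a cutoff passes the most, and banding falls in between by treating small score differences as measurement error.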