Experimental Errors Just because a series of replicate analyses are precise does not mean the results are accurate



Presentation Transcript

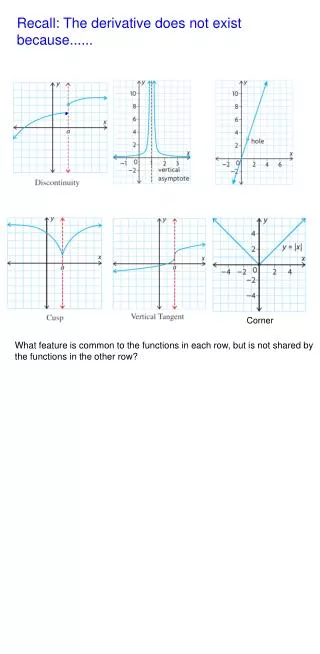

Experimental Errors
• Just because a series of replicate analyses is precise does not mean the results are accurate
• Sometimes a less precise series of analyses is more accurate than a more precise series of replicates
  • See Figure 2-3 in FAC7, p. 15
• Consider three kinds of error that produce scatter in data or deviations from the true value
  • Determinate error, sometimes called systematic error, produces a deviation in the results of an analysis from the true value
  • Indeterminate error, sometimes called random error, produces uncertainty in the results of replicate analyses
    • Results in scattering in the observed measurements or results
    • The uncertainty is reflected in the quantitative measures of precision
  • Gross errors occur occasionally and are often large in magnitude

Experimental Errors
• Determinate errors are inherently determinable or knowable
  • Instrumental errors are produced because apparatus is not properly calibrated, not clean, or damaged
    • Electronic equipment can often give rise to such errors because contacts are dirty, power supplies degrade, reference voltages are inaccurate, etc.
  • Method errors result from non-ideal behavior of reagents and reactions used for analysis
    • Interferences
    • Slowness of reactions
    • Incompleteness of reactions
    • Species instability
    • Nonspecificity of reagents
    • Side reactions
  • Personal errors involve the judgement of the analyst
    • Bias in reading an instrument
    • Number bias - preference for certain digits

Experimental Errors
• Effect of determinate errors on the results of an analysis
  • Constant error example: Suppose there is a -2.0 mg error in the measured mass of A for a sample containing 20.00% A
  • Examine the effect of sample size on the %A calculated
  • For a constant error, the calculated %A approaches the true value as the sample mass increases
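The constant-error example can be worked through numerically. The sketch below assumes the -2.0 mg error applies to the measured mass of A; the sample masses are illustrative choices, not values from the slides, and the function name is hypothetical.

```python
def percent_a_constant_error(sample_mg, true_percent=20.00, constant_error_mg=-2.0):
    """Percent A calculated when the measured mass of A carries a fixed error."""
    true_mass_a = sample_mg * true_percent / 100.0      # mg of A actually present
    measured_mass_a = true_mass_a + constant_error_mg   # same absolute error every time
    return 100.0 * measured_mass_a / sample_mg

# Illustrative sample sizes (assumed, not from the source)
for sample_mg in (100.0, 500.0, 1000.0, 5000.0):
    found = percent_a_constant_error(sample_mg)
    print(f"{sample_mg:7.0f} mg sample -> {found:.2f} % A")
```

The fixed 2.0 mg shortfall is a shrinking fraction of the mass of A as the sample grows, so the calculated %A moves toward 20.00%.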

Experimental Errors
• Effect of determinate errors on the results of an analysis
  • Proportional error example: Suppose there is a +5 ppt (parts per thousand) relative error in the mass of A for a sample that’s 20.00% A
  • Examine the effect of sample size on the %A calculated
  • The calculated %A is independent of sample size when the error in the mass of A is a constant proportion of that mass
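The proportional-error case can be checked the same way. This sketch assumes "ppt" means parts per thousand and that the relative error scales the measured mass of A; the sample masses and the function name are illustrative assumptions.

```python
def percent_a_proportional_error(sample_mg, true_percent=20.00, relative_error_ppt=5.0):
    """Percent A calculated when the measured mass of A is off by a fixed fraction."""
    true_mass_a = sample_mg * true_percent / 100.0
    # +5 ppt means the measured mass is high by 5 parts in 1000
    measured_mass_a = true_mass_a * (1.0 + relative_error_ppt / 1000.0)
    return 100.0 * measured_mass_a / sample_mg

# Illustrative sample sizes (assumed, not from the source)
for sample_mg in (100.0, 500.0, 1000.0, 5000.0):
    found = percent_a_proportional_error(sample_mg)
    print(f"{sample_mg:7.0f} mg sample -> {found:.2f} % A")
```

Because the error grows in step with the mass of A, the sample mass cancels and every sample size gives the same calculated %A.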

Experimental Errors
• Mitigating determinate errors
  • Instrument errors can be reduced by calibrating one’s apparatus
  • Personal errors can be reduced by being careful!
  • Method errors can be reduced by
    • Analyzing standard samples
      • The NIST has a wide variety of standard samples whose analyte concentrations are well established
    • Spiking the analytical sample with a known quantity of analyte, or performing a standard additions analysis, which can often account for the effect of interferences
      • The effect of the interferences on the added analyte should be the same as that on the original analyte
    • Independent analysis of replicates of the same bulk sample by a well proven method of significantly different design, which can check for determinate errors
    • Blank determinations, which may indicate the presence of a constant error
      • Carry out the analysis on samples that contain everything but the analyte
    • Varying the sample size in order to detect a constant error
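One common way to evaluate a standard additions analysis is to fit a straight line to signal versus added analyte and take intercept/slope as the original concentration. The sketch below is only an illustration of that arithmetic: the data are synthetic and noise-free, the sensitivity and concentration values are invented, dilution on spiking is ignored, and the function name is hypothetical.

```python
def standard_additions_estimate(added, signal):
    """Fit a least-squares line to (added, signal) and return intercept / slope."""
    n = len(added)
    mean_x = sum(added) / n
    mean_y = sum(signal) / n
    sxx = sum((x - mean_x) ** 2 for x in added)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(added, signal))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept / slope

# Synthetic illustration only: both values below are assumptions.
sensitivity = 0.5      # signal per concentration unit, including any interference effect
original_conc = 2.0    # the "unknown" concentration used to generate the data
added = [0.0, 1.0, 2.0, 3.0]
signal = [sensitivity * (original_conc + a) for a in added]

estimate = standard_additions_estimate(added, signal)
print(f"estimated original concentration: {estimate:.3f}")
```

The estimate does not depend on the value of `sensitivity`, which is why the approach works when an interference changes the response to added and original analyte alike.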

Experimental Errors
• Gross errors, such as arithmetic mistakes or using the wrong scale on an instrument, can be cured by being careful!
• Indeterminate or random errors produce uncertainty in results
  • Arise when a system is extended to its limit of precision
  • There are many, often unknown and uncontrolled, opportunities to introduce small variations in each measurement leading to an experimental result
  • One way to examine uncertainty is to produce a frequency distribution
    • Example: examine the frequency distribution for a measurement that contains four equal-sized uncertainties, u1, u2, u3, u4
    • The combinations of the u’s give certain numbers of possibilities:

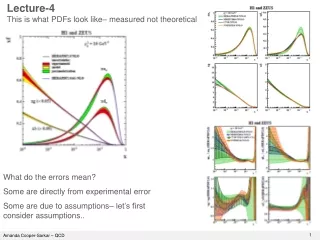
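The counting for four equal-sized uncertainties can be reproduced by enumerating every sign combination. This sketch assumes each uncertainty is equally likely to act as +u or -u, which is an assumption not stated in the transcript; the function name is hypothetical.

```python
from collections import Counter
from itertools import product

def deviation_counts(n_uncertainties):
    """Count the sign combinations that give each net deviation, in units of u."""
    return Counter(sum(signs) for signs in product((+1, -1), repeat=n_uncertainties))

counts = deviation_counts(4)
total = sum(counts.values())
for deviation in sorted(counts, reverse=True):
    print(f"{deviation:+d}u: {counts[deviation]} of {total} combinations")
```

Only one combination gives each extreme (+4u or -4u), while six of the sixteen cancel to zero, so small net deviations are the most frequent.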

Experimental Errors
• One way to examine uncertainty is to produce a frequency distribution
  • If the number of equal-sized uncertainties is increased to 10
    • There is only about a 1/500 chance of observing +10u or -10u
  • If the number of indeterminate uncertainties is infinite, one expects a smooth curve
    • The smooth curve is called the Gaussian error curve and gives a normal distribution
• Conclusions about the normal distribution
  • The mean is the most probable value for normally distributed data
    • This is because the most probable deviation from the mean is 0 (zero)
  • Large deviations from the mean are not very probable
  • The normal distribution curve is symmetric about the mean
    • The frequency of a particular positive deviation from the mean is the same as that of the same-sized negative deviation
  • Most experimental results from replicate analyses done in the same way form a normal distribution
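The roughly 1/500 figure for ten uncertainties follows from the same counting argument. The sketch below again assumes each uncertainty is independently +u or -u with equal probability; the function names are hypothetical.

```python
from math import comb

def extreme_probability(n):
    """Probability that all n equal-sized uncertainties act in the same direction."""
    return 2 / 2 ** n          # one all-plus and one all-minus combination

def deviation_probability(n, n_positive):
    """Probability that exactly n_positive of the n uncertainties are +u."""
    return comb(n, n_positive) / 2 ** n

p_extreme = extreme_probability(10)
p_zero = deviation_probability(10, 5)   # five +u and five -u cancel exactly
print(f"chance of +10u or -10u: 1 in {1 / p_extreme:.0f}")
print(f"chance of zero net deviation: {p_zero:.3f}")
```

The exact value is 2/1024, or 1 in 512, which the slide rounds to 1/500.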

Experimental Errors
• Examine the data for the determination of the volume of water delivered by a 10.00 mL transfer pipet (FAC7, Table 3-2, p. 23; Table 3-3, p. 24; Figure 3-2, p. 24)
  • 26% of the 50 results are in the 0.003 mL range containing the mean
  • 72% of the 50 measurements are within ±1s of the mean
  • The Gaussian curve is shown for the smooth distribution having the same σ = s and the same mean as this set of 50 results
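For comparison with the 72% observed within ±1s, the fraction an ideal normal distribution places within ±1σ of the mean can be computed from the error function. This is a sketch for orientation only; the function name is hypothetical and the 0.72 value is simply the figure quoted above.

```python
from math import erf, sqrt

def normal_fraction_within(k):
    """Fraction of a normal distribution within ±k standard deviations of the mean."""
    return erf(k / sqrt(2.0))

expected = normal_fraction_within(1.0)
observed = 0.72   # fraction within ±1s quoted for the 50 pipet results
print(f"expected within ±1 sigma: {expected:.1%}")
print(f"observed within ±1 s:     {observed:.1%}")
```

The ideal fraction is about 68%, so a value of 72% from only 50 measurements is in reasonable agreement with a normal distribution.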