AdaBoost

AdaBoost (Adaptive Boosting; R. Schapire, Y. Freund, ICML, 1996) is a supervised classifier built by assembling classifiers: it combines many low-accuracy classifiers (weak learners) into one high-accuracy classifier (strong learner).

Classifier • Simplest classifier

Adaboost: Agenda • (Adaptive Boosting, R. Schapire, Y. Freund, ICML, 1996): • Supervised classifier • Assembling classifiers • Combine many low-accuracy classifiers (weak learners) to create a high-accuracy classifier (strong learner)

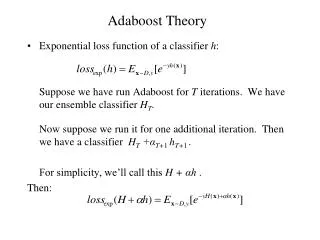

Adaboost • Strong classifier = linear combination of T weak classifiers (1) Design of weak classifier (2) Weight for each classifier (hypothesis weight) (3) Update of the weight of each example (example distribution) • Weak classifier: < 50% weighted error over any distribution, i.e. better than random guessing
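The slides' own formulas did not survive extraction, so the block below uses the usual textbook notation, which is an assumption here. Labels are y_i ∈ {−1, +1}, weak classifiers output h_t(x) ∈ {−1, +1}, α_t are the hypothesis weights, and D_t is the example distribution over the m training examples. With that notation, the strong classifier and the weighted error that each weak classifier must keep below 1/2 read:

```latex
H(x) = \operatorname{sign}\left( \sum_{t=1}^{T} \alpha_t \, h_t(x) \right),
\qquad
\varepsilon_t = \sum_{i=1}^{m} D_t(i) \, \mathbb{1}\left[ h_t(x_i) \neq y_i \right] < \tfrac{1}{2}
```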

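Adaboost: Design of weak classifier (2/2) • Select the weak classifier h_t with the smallest weighted error ε_t, i.e. the total weight of the examples it misclassifies • Prerequisite: ε_t < 1/2 (better than random guessing)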
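The selection step can be sketched in a few lines. This is a minimal sketch that assumes a finite, hypothetical pool of candidate weak classifiers given as plain Python functions. The helper names `weighted_error` and `select_weak_classifier` are illustrative and do not come from the slides. The example at the end shows that the example weights, not the raw error count, decide which candidate wins.

```python
import numpy as np

def weighted_error(pred, y, D):
    # eps = total weight D[i] of the examples where the prediction differs from the label
    return float(D[pred != y].sum())

def select_weak_classifier(candidates, X, y, D):
    """Return (index, eps) of the candidate with the smallest weighted error.

    candidates: hypothetical pool of functions mapping an (m, d) array to m
    labels in {-1, +1}; D: example weights summing to 1.
    """
    errors = [weighted_error(h(X), y, D) for h in candidates]
    best = int(np.argmin(errors))
    if errors[best] >= 0.5:
        raise ValueError("prerequisite violated: no candidate has eps < 1/2")
    return best, errors[best]

X = np.array([[1.0], [2.0], [3.0], [4.0]])
y = np.array([-1, -1, 1, 1])
candidates = [
    lambda A: np.where(A[:, 0] > 1.5, 1, -1),   # misses only x = 2
    lambda A: np.where(A[:, 0] > 3.5, 1, -1),   # misses only x = 3
]
# Equal weights: both candidates have eps = 0.25, so argmin keeps the first one.
print(select_weak_classifier(candidates, X, y, np.full(4, 0.25)))
# Heavier weight on x = 2: the second candidate now has the smaller weighted error.
print(select_weak_classifier(candidates, X, y, np.array([0.1, 0.45, 0.35, 0.1])))
```

In practice the pool is often every decision stump on the training data, searched exhaustively rather than listed by hand.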

Adaboost: Hypothesis weight (1/2) • How to set α_t?
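The slide's own formula is missing, so here is a hedged sketch of the standard answer in assumed notation. Labels and weak-classifier outputs are in {−1, +1}, D_t is the current example distribution over m examples, and ε_t is the weighted error of the chosen h_t. The usual choice picks α_t to minimize the normalizer Z_t of the reweighting step. This works because the product ∏_t Z_t upper-bounds the training error of the strong classifier, so a small Z_t in each round is what drives that error down:

```latex
\begin{aligned}
Z_t(\alpha) &= \sum_{i=1}^{m} D_t(i)\, e^{-\alpha\, y_i h_t(x_i)}
             = (1-\varepsilon_t)\, e^{-\alpha} + \varepsilon_t\, e^{\alpha} \\
\frac{dZ_t}{d\alpha} &= -(1-\varepsilon_t)\, e^{-\alpha} + \varepsilon_t\, e^{\alpha} = 0
  \;\Longrightarrow\; e^{2\alpha} = \frac{1-\varepsilon_t}{\varepsilon_t} \\
\alpha_t &= \frac{1}{2} \ln \frac{1-\varepsilon_t}{\varepsilon_t},
\qquad Z_t(\alpha_t) = 2\sqrt{\varepsilon_t (1-\varepsilon_t)} < 1 \ \text{ for } \varepsilon_t < \tfrac{1}{2}
\end{aligned}
```

So α_t > 0 whenever ε_t < 1/2, and a more accurate weak classifier (smaller ε_t) gets a larger weight. For example, ε_t = 0.25 gives α_t = ½ ln 3 ≈ 0.55.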

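Adaboost: Update example distribution (Reweighting) • Multiply each example's weight by exp(−α_t · y · h_t(x)), then renormalize so the weights sum to 1 • y * h(x) = 1 (correctly classified): the weight shrinks by the factor e^(−α_t) • y * h(x) = −1 (misclassified): the weight grows by the factor e^(α_t)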
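Here is a minimal NumPy sketch of this update. It assumes labels and weak-classifier outputs in {−1, +1} and weights stored as an array that sums to 1. The function name `reweight` is hypothetical, and α is set to ½ ln((1 − ε)/ε), the standard choice, which is also an assumption here.

```python
import numpy as np

def reweight(D, y, pred, alpha):
    """One AdaBoost reweighting step (hypothetical helper name).

    D: current example weights summing to 1; y, pred: arrays of true labels and
    weak-classifier outputs, both in {-1, +1}; alpha: that classifier's hypothesis weight.
    """
    # y * pred is +1 for a correct example (weight times e^-alpha)
    # and -1 for a mistake (weight times e^+alpha).
    D_new = D * np.exp(-alpha * y * pred)
    return D_new / D_new.sum()  # divide by the normalizer Z_t so the weights sum to 1

# Four equally weighted examples; the weak classifier misses only the last one.
D = np.full(4, 0.25)
y = np.array([1, 1, 1, -1])
pred = np.array([1, 1, 1, 1])
eps = D[pred != y].sum()                 # weighted error: 0.25
alpha = 0.5 * np.log((1 - eps) / eps)    # = 0.5 * ln 3
D_next = reweight(D, y, pred, alpha)     # mistake -> 1/2, each correct example -> 1/6
```

The single misclassified example jumps from weight 1/4 to 1/2. With α_t chosen this way, the examples the current weak classifier got wrong always end up carrying exactly half of the total weight, so the next weak classifier cannot simply repeat the same mistakes.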

Reweighting • In this way, AdaBoost “focuses on” the informative or “difficult” examples.

Summary • Start from equal example weights, then repeat steps (1) to (3) for rounds t = 1, …, T
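Putting the three steps together gives the compact, self-contained NumPy sketch below. Several pieces are illustrative assumptions, not taken from the slides: the decision-stump weak learner, the function names (`stump_predict`, `fit_stump`, `adaboost`, `predict`), and the two early-stopping rules.

```python
import numpy as np

def stump_predict(X, feature, threshold, polarity):
    # Hypothetical weak classifier: a decision stump on one feature, outputs in {-1, +1}.
    return polarity * np.where(X[:, feature] > threshold, 1, -1)

def fit_stump(X, y, D):
    # (1) Design of weak classifier: exhaustive search for the stump with the
    # smallest weighted error under the current example distribution D.
    best = (np.inf, 0, 0.0, 1)  # (error, feature, threshold, polarity)
    for j in range(X.shape[1]):
        v = np.unique(X[:, j])
        # Thresholds below every value, plus midpoints between neighbouring values.
        for thr in np.concatenate(([v[0] - 1.0], (v[:-1] + v[1:]) / 2)):
            for pol in (1, -1):
                err = D[stump_predict(X, j, thr, pol) != y].sum()
                if err < best[0]:
                    best = (err, j, thr, pol)
    return best

def adaboost(X, y, T):
    """Train for at most T rounds; y holds labels in {-1, +1}."""
    m = len(y)
    D = np.full(m, 1.0 / m)  # equal initial weights for all training samples
    ensemble = []            # list of (alpha, feature, threshold, polarity)
    for _ in range(T):       # rounds t = 1, ..., T
        err, j, thr, pol = fit_stump(X, y, D)
        if err >= 0.5:       # prerequisite eps_t < 1/2 violated: stop
            break
        eps = max(err, 1e-10)                    # guard against log(1/0) for a perfect stump
        alpha = 0.5 * np.log((1 - eps) / eps)    # (2) hypothesis weight
        ensemble.append((alpha, j, thr, pol))
        if err == 0:         # a perfect stump already classifies every example
            break
        pred = stump_predict(X, j, thr, pol)
        D = D * np.exp(-alpha * y * pred)        # (3) reweighting
        D = D / D.sum()
    return ensemble

def predict(ensemble, X):
    # Strong classifier: sign of the alpha-weighted vote (0 only on an exact tie).
    score = sum(a * stump_predict(X, j, thr, p) for a, j, thr, p in ensemble)
    return np.sign(score)
```

Take the toy 1-D set x = 1, …, 6 with labels +, +, −, −, +, +. No single threshold separates these classes. Still, `adaboost(X, y, T=3)` combines three stumps whose weighted vote classifies all six points correctly, which illustrates how weak learners add up to a strong one.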

Example (1/5) • Original training set: equal weights to all training samples • Taken from “A Tutorial on Boosting” by Yoav Freund and Rob Schapire

Example (2/5) ROUND 1

Example (3/5) ROUND 2

Example (4/5) ROUND 3