Introduction to Matrix Algebra and Regression Concepts

This document covers fundamental concepts of matrix algebra and regression analysis. It defines matrices as rectangular arrays of elements and explains key terms such as scalars, row vectors, and column vectors. The characteristics of square, symmetric, diagonal, and identity matrices are discussed, along with the trace of a matrix. Additionally, the document addresses matrix addition, subtraction, multiplication, and the process of inverting matrices, highlighting the importance of determinants and linear dependence. Finally, it presents the basics of regression in matrix notation, including parameter estimates.

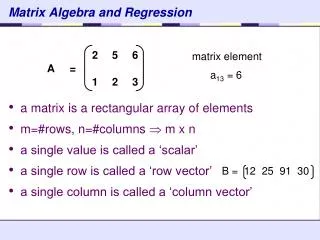

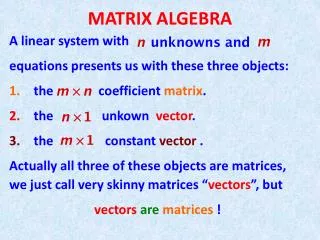

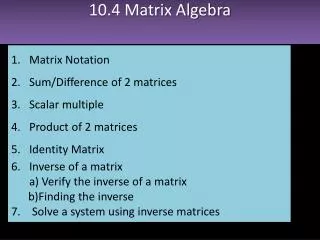

Matrix Algebra and Regression
• A matrix is a rectangular array of elements.
• A matrix with m rows and n columns has dimensions m x n.
• A single value is called a ‘scalar’.
• A single row is called a ‘row vector’.
• A single column is called a ‘column vector’.
• The element $a_{ij}$ is in row i and column j of matrix A; in the example, matrix element $a_{13} = 6$.
• Example: B = 12 25 91 30
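
To make the terminology concrete, here is a small Python sketch (standard library only) that stores a matrix as a list of rows. The helper names `dims` and `element` and all values are hypothetical illustrations, not part of the original slides. The only value echoed from the slide is $a_{13} = 6$. A scalar is simply a plain number, so it needs no special structure.

```python
# A matrix stored as a list of rows; the values are hypothetical.
A = [
    [1, 4, 6],
    [2, 0, 5],
]

def dims(M):
    """Return (m, n): the number of rows and the number of columns."""
    return len(M), len(M[0])

def element(M, i, j):
    """Return a_ij, translating 1-based matrix indices to 0-based list indices."""
    return M[i - 1][j - 1]

row_vector = [[7, 8, 9]]            # 1 x 3
column_vector = [[7], [8], [9]]     # 3 x 1

print(dims(A))                      # (2, 3): A is a 2 x 3 matrix
print(element(A, 1, 3))             # 6: row 1, column 3
print(dims(row_vector), dims(column_vector))  # (1, 3) (3, 1)
```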

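Matrix Algebra and Regression
• A square matrix has equal numbers of rows and columns.
• In a symmetric matrix, $a_{ij} = a_{ji}$ for all i and j.
• In a diagonal matrix, all off-diagonal elements are 0.
• An identity matrix is a diagonal matrix whose diagonal elements are all 1:
$$I = \begin{bmatrix} 1 & 0 & \cdots & 0 \\ 0 & 1 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & 1 \end{bmatrix}$$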
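
Here is a sketch of checks for these four kinds of matrix. It assumes the same list-of-rows representation. The function names and the example matrices `S` and `D` are made up for illustration.

```python
def is_square(M):
    """Equal numbers of rows and columns."""
    return all(len(row) == len(M) for row in M)

def is_symmetric(M):
    """a_ij == a_ji for every i and j (only possible for a square matrix)."""
    n = len(M)
    return is_square(M) and all(M[i][j] == M[j][i] for i in range(n) for j in range(n))

def is_diagonal(M):
    """Every off-diagonal element is zero."""
    n = len(M)
    return is_square(M) and all(M[i][j] == 0 for i in range(n) for j in range(n) if i != j)

def identity(n):
    """Build the n x n identity matrix."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]

def is_identity(M):
    """A diagonal matrix whose diagonal elements are all 1."""
    return is_diagonal(M) and all(M[i][i] == 1 for i in range(len(M)))

S = [[4, 1, 7],
     [1, 3, 2],
     [7, 2, 5]]
D = [[4, 0, 0],
     [0, 3, 0],
     [0, 0, 5]]
print(is_symmetric(S), is_diagonal(S))   # True False
print(is_symmetric(D), is_diagonal(D))   # True True
print(is_identity(identity(3)))          # True
```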

Trace
• The trace of a square matrix is the sum of the elements on its main diagonal: $\operatorname{tr}(A) = \sum_{i} a_{ii}$.
• For the example 5 x 5 matrix A, $\operatorname{tr}(A) = 2 + 6 + 3 + 1 + 8 = 20$.
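
Here is a minimal trace function. Only the diagonal (2, 6, 3, 1, 8) comes from the slide. The off-diagonal values are invented because the full example matrix is not in the transcript.

```python
def trace(M):
    """Sum of the main-diagonal elements of a square matrix."""
    if any(len(row) != len(M) for row in M):
        raise ValueError("trace is defined only for square matrices")
    return sum(M[i][i] for i in range(len(M)))

# Diagonal from the slide; off-diagonal values are hypothetical.
A = [
    [2, 1, 0, 4, 3],
    [5, 6, 2, 0, 1],
    [7, 3, 3, 9, 2],
    [1, 0, 4, 1, 6],
    [2, 8, 5, 3, 8],
]
print(trace(A))  # 20
```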

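Matrix Addition and Subtraction
• Matrices are added or subtracted element by element: $(A \pm B)_{ij} = a_{ij} \pm b_{ij}$.
• The dimensions of the matrices must therefore be the same.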
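
Here is a sketch of element-wise addition and subtraction that enforces the same-dimensions rule. `elementwise` is a hypothetical helper name, and the matrices are example values.

```python
import operator

def elementwise(A, B, op):
    """Apply op to matching elements; A and B must have identical dimensions."""
    if len(A) != len(B) or any(len(ra) != len(rb) for ra, rb in zip(A, B)):
        raise ValueError("matrices must have the same dimensions")
    return [[op(a, b) for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]

A = [[1, 2, 3],
     [4, 5, 6]]
B = [[6, 5, 4],
     [3, 2, 1]]
print(elementwise(A, B, operator.add))  # [[7, 7, 7], [7, 7, 7]]
print(elementwise(A, B, operator.sub))  # [[-5, -3, -1], [1, 3, 5]]

try:
    elementwise(A, [[1, 2], [3, 4]], operator.add)  # 2 x 3 vs 2 x 2
except ValueError as err:
    print(err)  # matrices must have the same dimensions
```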

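Matrix Multiplication
• $A_{m \times n} \times B_{n \times p} = C_{m \times p}$
• The number of columns in A must equal the number of rows in B.
• The resulting matrix C has the number of rows in A and the number of columns in B.
• Each element of C is the sum of products of a row of A with a column of B, $c_{ij} = \sum_{k=1}^{n} a_{ik} b_{kj}$; in the example, $c_{11} = 2 \cdot 2 + 5 \cdot 5 + 1 \cdot 1 + 8 \cdot 8 = 94$.
• Matrix multiplication is not commutative: in general A x B ≠ B x A, and B x A may not even be defined.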
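
The rule can be sketched as a plain nested sum. The first row of `A` and the first column of `B` are chosen to reproduce $c_{11} = 94$ from the slide. Everything else (the remaining values, and the matrices `P` and `Q`) is hypothetical.

```python
def matmul(A, B):
    """Return C = AB for an m x n matrix A and an n x p matrix B (C is m x p)."""
    n = len(B)
    if any(len(row) != n for row in A):
        raise ValueError("the number of columns in A must equal the number of rows in B")
    p = len(B[0])
    # c_ij = sum over k of a_ik * b_kj: row i of A times column j of B
    return [[sum(row[k] * B[k][j] for k in range(n)) for j in range(p)] for row in A]

# Row 1 of A and column 1 of B reproduce c11 from the slide; the rest is hypothetical.
A = [[2, 5, 1, 8],
     [3, 0, 4, 1]]          # 2 x 4
B = [[2, 1],
     [5, 0],
     [1, 3],
     [8, 2]]                # 4 x 2
print(matmul(A, B))         # [[94, 21], [18, 17]]: 2 x 2

# B x A is defined here too, but it is 4 x 4, so it cannot equal A x B.
BA = matmul(B, A)
print(len(BA), len(BA[0]))  # 4 4

# Even for square matrices the order matters.
P = [[1, 2], [3, 4]]
Q = [[0, 1], [1, 0]]
print(matmul(P, Q), matmul(Q, P))  # [[2, 1], [4, 3]] [[3, 4], [1, 2]]
```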

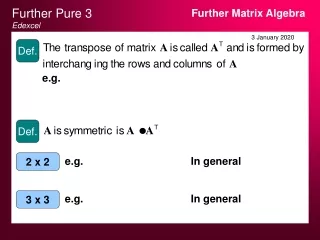

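Transpose a Matrix
• The transpose A′ of a matrix A is formed by interchanging its rows and columns, so an m x n matrix becomes n x m.
• Multiplying the matrix A above (2 rows) by its transpose, A x A′, gives a 2 x 2 matrix. The diagonal holds the uncorrected sums of squares of each row of A, and the off-diagonals hold the sums of cross-products between the rows.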
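
Here is a sketch of the transpose and of $AA'$. It assumes a 2 x 4 matrix whose first row is the 2, 5, 1, 8 used on the multiplication slide. The second row is invented because matrix A itself is not in the transcript.

```python
def transpose(M):
    """Interchange rows and columns: an m x n matrix becomes n x m."""
    return [list(col) for col in zip(*M)]

def matmul(A, B):
    """Plain matrix product; assumes A's column count equals B's row count."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

# Row 1 matches the multiplication example; row 2 is hypothetical.
A = [[2, 5, 1, 8],
     [3, 0, 4, 1]]

At = transpose(A)      # 4 x 2
AAt = matmul(A, At)    # 2 x 2
print(AAt)             # [[94, 18], [18, 26]]

# The diagonal holds each row's uncorrected sum of squares.
print([sum(x * x for x in row) for row in A])   # [94, 26]
```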

Invert a Matrix
• The inverse of a matrix is analogous to division in scalar arithmetic.
• A matrix multiplied by its inverse gives the identity matrix: $M^{-1}M = MM^{-1} = I$.
• It is easy to invert a diagonal matrix: replace each diagonal element by its reciprocal (every diagonal element must be nonzero).
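
Here is a sketch of the diagonal case. It uses Python's `fractions.Fraction` so the reciprocals stay exact. It assumes the input really is diagonal (off-diagonal elements are not checked), and the example matrix is hypothetical.

```python
from fractions import Fraction

def invert_diagonal(D):
    """Invert a diagonal matrix by replacing each diagonal element with its reciprocal."""
    n = len(D)
    if any(D[i][i] == 0 for i in range(n)):
        raise ValueError("a zero on the diagonal: the matrix has no inverse")
    # Fraction keeps the reciprocals exact (1/3 stays 1/3, not 0.333...).
    return [[1 / Fraction(D[i][i]) if i == j else Fraction(0) for j in range(n)]
            for i in range(n)]

def matmul(A, B):
    """Plain matrix product; assumes conformable dimensions."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

D = [[2, 0, 0],
     [0, 4, 0],
     [0, 0, 5]]
D_inv = invert_diagonal(D)
print([[str(x) for x in row] for row in D_inv])
# [['1/2', '0', '0'], ['0', '1/4', '0'], ['0', '0', '1/5']]
print(matmul(D_inv, D) == [[1, 0, 0], [0, 1, 0], [0, 0, 1]])  # True
```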

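Inverting a 2x2 Matrix
• Calculate the determinant (D) of the matrix M:
$$M = \begin{bmatrix} a & b \\ c & d \end{bmatrix} = \begin{bmatrix} 2 & 5 \\ 3 & 9 \end{bmatrix}, \qquad |M| = D = ad - bc = 2 \cdot 9 - 5 \cdot 3 = 3$$
• Swap a and d, change the signs of b and c, and divide by D:
$$M^{-1} = \frac{1}{D}\begin{bmatrix} d & -b \\ -c & a \end{bmatrix} = \frac{1}{3}\begin{bmatrix} 9 & -5 \\ -3 & 2 \end{bmatrix}$$
• Verify that $M^{-1}M = I$.
• The extension to larger matrices is not simple: use a computer!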
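
Here is the 2 x 2 recipe in Python. It takes a = 2, b = 5, c = 3, d = 9, as read from $D = 2 \cdot 9 - 5 \cdot 3$ under the usual layout $M = \begin{bmatrix} a & b \\ c & d \end{bmatrix}$. This is a sketch for exact integer inputs only. `inverse_2x2` is a hypothetical helper name.

```python
from fractions import Fraction

def inverse_2x2(M):
    """Invert [[a, b], [c, d]] as (1/D) * [[d, -b], [-c, a]] with D = ad - bc."""
    (a, b), (c, d) = M
    D = a * d - b * c
    if D == 0:
        raise ValueError("determinant is 0: the matrix is singular")
    k = Fraction(1, D)  # exact 1/D; assumes integer entries
    return [[k * d, -k * b], [-k * c, k * a]]

def matmul(A, B):
    """Plain matrix product; assumes conformable dimensions."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*B)] for row in A]

M = [[2, 5],
     [3, 9]]      # D = 2*9 - 5*3 = 3
Minv = inverse_2x2(M)
print([[str(x) for x in row] for row in Minv])   # [['3', '-5/3'], ['-1', '2/3']]

# Verify: the inverse times the original gives the identity matrix.
print(matmul(Minv, M) == [[1, 0], [0, 1]])        # True
```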

Linear Dependence
$$M = \begin{bmatrix} 2 & 6 \\ 3 & 9 \end{bmatrix}, \qquad D = ad - bc = 2 \cdot 9 - 6 \cdot 3 = 0$$
• The matrix M is singular because one row (or column) can be obtained by multiplying another by a constant.
• A singular matrix has D = 0.
• The rank of a matrix is the number of linearly independent rows or columns (1 in this case).
• A nonsingular matrix is full rank and has a unique inverse.
• A generalized inverse $M^{-}$ satisfies $MM^{-}M = M$. It can be obtained for any matrix, but it is not unique if the matrix is singular.
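
Here is a sketch that checks these claims for the singular example. It assumes a = 2, b = 6, c = 3, d = 9, as read from $D = 2 \cdot 9 - 6 \cdot 3$. Rank is computed by Gaussian elimination, a technique chosen here for illustration. Two different generalized inverses are shown. The first is the Moore-Penrose inverse, which for a rank-1 matrix equals $M'$ divided by the sum of squared elements. The second is a hand-picked `G2`. Both satisfy $MM^{-}M = M$, which illustrates that the generalized inverse is not unique.

```python
from fractions import Fraction

def matmul(A, B):
    """Plain matrix product; assumes conformable dimensions."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*B)] for row in A]

def rank(M):
    """Rank by Gaussian elimination, using exact Fraction arithmetic."""
    R = [[Fraction(x) for x in row] for row in M]
    rows, cols = len(R), len(R[0])
    r = 0  # number of pivots (independent rows) found so far
    for c in range(cols):
        pivot = next((i for i in range(r, rows) if R[i][c] != 0), None)
        if pivot is None:
            continue
        R[r], R[pivot] = R[pivot], R[r]
        for i in range(r + 1, rows):
            f = R[i][c] / R[r][c]
            R[i] = [x - f * y for x, y in zip(R[i], R[r])]
        r += 1
        if r == rows:
            break
    return r

M = [[2, 6],
     [3, 9]]    # row 2 = 1.5 * row 1
det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
print(det, rank(M))   # 0 1

# Moore-Penrose inverse of a rank-1 matrix: M' divided by the sum of squared elements.
s = sum(x * x for row in M for x in row)
G = [[Fraction(x, s) for x in col] for col in zip(*M)]

# A second, different generalized inverse.
G2 = [[Fraction(1, 2), 0],
      [0, 0]]

print(matmul(matmul(M, G), M) == M, matmul(matmul(M, G2), M) == M)  # True True
```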

Regression in Matrix Notation
• Linear model: $Y = X\beta + \varepsilon$
• Parameter estimates: $b = (X'X)^{-1}X'Y$, which requires X′X to be nonsingular (X of full column rank)
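
Here is a minimal sketch of the estimator $b = (X'X)^{-1}X'Y$ for a straight-line model with an intercept. X has a column of ones plus one predictor, so X′X is 2 x 2 and the determinant formula suffices. The data and helper names are made up. Practical work would typically use a numerical library with a stable solver rather than forming the inverse explicitly.

```python
from fractions import Fraction

def transpose(M):
    """Interchange rows and columns."""
    return [list(col) for col in zip(*M)]

def matmul(A, B):
    """Plain matrix product; assumes conformable dimensions."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*B)] for row in A]

def inverse_2x2(M):
    """(1/D) * [[d, -b], [-c, a]] with D = ad - bc; assumes integer entries."""
    (a, b), (c, d) = M
    det = a * d - b * c
    if det == 0:
        raise ValueError("X'X is singular: no unique estimates")
    k = Fraction(1, det)
    return [[k * d, -k * b], [-k * c, k * a]]

# Hypothetical data: intercept column of 1s plus one predictor x.
x = [1, 2, 3, 4]
y = [3, 5, 8, 9]
X = [[1, xi] for xi in x]     # n x 2 design matrix
Y = [[yi] for yi in y]        # n x 1 column vector

Xt = transpose(X)
b = matmul(matmul(inverse_2x2(matmul(Xt, X)), Xt), Y)   # (X'X)^-1 X'Y
print([str(v[0]) for v in b])  # ['1', '21/10']: intercept 1, slope 2.1
```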