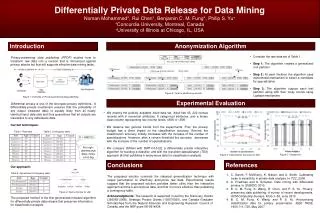

Differentially Private Data Release for Data Mining

Explore a new anonymization algorithm providing differential privacy guarantee for releasing data for data mining, handling both categorical and numerical attributes while preserving utility for classification analysis.

Differentially Private Data Release for Data Mining

E N D

Presentation Transcript

Differentially Private Data Release for Data Mining Noman Mohammed Concordia University Montreal, QC, Canada Rui Chen Concordia University Montreal, QC, Canada Benjamin C.M. Fung Concordia University Montreal, QC, Canada Philip S. Yu University of Illinois at Chicago, IL, USA

Outline 2 Overview Differential privacy Related Work Our Algorithm Experimental results Conclusion 2

Overview Anonymization algorithm 3 Data utility Privacy model 3

Contributions 4 Proposed an anonymization algorithm that provides differential privacy guarantee Generalization-based algorithm for differentially private data release Proposed algorithm can handle both categorical and numerical attributes Preserves information for classification analysis 4

Outline 5 Overview Differential privacy Related Work Our Algorithm Experimental results Conclusion 5

Differential Privacy [DMNS06] 6 D D’ D and D’ are neighbors if they differ on at most one record A non-interactive privacy mechanism A gives ε-differential privacy if for all neighbour D and D’, and for any possible sanitized database D* PrA[A(D) = D*] ≤ exp(ε) × PrA[A(D’) = D*] 6

Laplace Mechanism [DMNS06] 7 ∆f = maxD,D’||f(D) – f(D’)||1 For a counting query f: ∆f =1 For example, for a single counting query Q over a dataset D, returning Q(D) + Laplace(1/ε) maintains ε-differential privacy. 7

Exponential Mechanism [MT07] 8 Given a utility function u : (D × T) → R for a database instance D, the mechanism A, A(D, u) = return t with probability proportional to exp(ε× ×u(D, t)/2 ∆u) gives ε-differential privacy. 8

Composition properties 9 Sequential composition ∑iεi–differential privacy Parallel composition max(εi)–differential privacy 9

Outline 10 Overview Differential privacy Related Work Our Algorithm Experimental results Conclusion 10

Two Frameworks 11 Interactive: Multiple questions asked/answered adaptively Anonymizer 11

Two Frameworks 12 Interactive: Multiple questions asked/answered adaptively Anonymizer Non-interactive: Data is anonymized and released Anonymizer 12

Related Work 13 A. Blum, C. Dwork, F. McSherry, and K. Nissim. Practical privacy: The SuLQ framework. In PODS, 2005. A. Friedman and A. Schuster. Data mining with differential privacy. In SIGKDD, 2010. Is it possible to release data for classification analysis ? 13

Why Non-interactive framework ? 14 Disadvantages of interactive approach: Database can answer a limited number of queries Big problem if there are many data miners Provide less flexibility to perform data analysis 14

Non-interactive Framework 15 0 + Lap(1/ε) 15

Non-interactive Framework 16 0 + Lap(1/ε) For high-dimensional data, noise is too big 16

Outline 18 Overview Differential privacy Related Work Our Algorithm Experimental results Conclusion 18

Anonymization Algorithm 19 Job Age Class Count Any_Job [18-65) 4Y4N 8 Artist [18-65) 2Y2N 4 Professional [18-65) 2Y2N 4 Artist [18-40) 2Y2N 4 Artist [40-65) 0Y0N 0 Professional [18-40) 2Y1N 3 Professional [40-65) 0Y1N 1 Job Age [18-65) Any_Job Professional Artist [18-40) [40-65) Engineer Lawyer Dancer Writer 19 [18-30) [30-40)

Candidate Selection 20 we favor the specialization with maximum Score value First utility function: ∆u = Second utility function: ∆u = 1 20 20

Split Value 21 The split value of a categorical attribute is determined according to the taxonomy tree of the attribute How to determine the split value for numerical attribute ? 21

Split Value 22 The split value of a categorical attribute is determined according to the taxonomy tree of the attribute How to determine the split value for numerical attribute ? Age Class 60 Y 30 N 18 65 25 Y 25 45 30 40 60 40 N 25 Y 40 N 45 N 25 Y 22

Anonymization Algorithm 23 O(Aprx|D|log|D|) O(|candidates|) O(|D|) O(|D|log|D|) O(1) 23

Anonymization Algorithm 24 O(Aprx|D|log|D|) O((Apr+h)x|D|log|D|) O(|candidates|) O(|D|) O(|D|log|D|) O(1) 24

Outline 25 Overview Differential privacy Related Work Our Algorithm Experimental results Conclusion 25

Experimental Evaluation 26 Adult: is a Census data (from UCI repository) 6 continuous attributes. 8 categorical attributes. 45,222 census records 26

Data Utility for Max 27 ε = 0,1 ε = 0,25 ε = 0,5 ε = 1 86 BA = 85.3% 84 Average Accuracy (%) 82 80 78 76 LA = 75.5% 74 4 7 10 13 16 Number of specializations 27

Data Utility for InfoGain 28 ε = 0,1 ε = 0,25 ε = 0,5 ε = 1 86 BA = 85.3% 84 Average Accuracy (%) 82 80 78 76 LA = 75.5% 74 4 7 10 13 16 Number of specializations 28

Comparison 29 DiffP-C4,5 DiffGen (h=15) TDS (k=5) 86 BA = 85.3% 84 Average Accuracy (%) 82 80 78 76 LA = 75.5% 74 0.75 1 2 3 4 Privacy Budget 29

Scalability 30 Reading Anonymization Writing Total 180 160 ε=1 h=15 140 120 Time (seconds) 100 80 60 40 20 0 200 400 # of Records (in thousands) 600 800 1000 30

Outline 31 Overview Differential privacy Related Work Our Algorithm Experimental results Conclusion 31

Conclusions 32 Differentially Private Data Release Generalization-based differentially private algorithm Provides better utility than existing techniques 32

33 Thank You Very Much Q&A 33 33