3. Optimization Methods for Molecular Modeling

3. Optimization Methods for Molecular Modeling by Barak Raveh

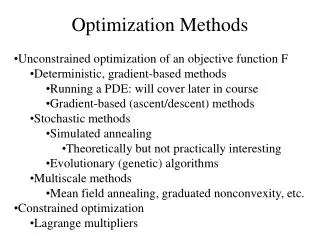

Outline • Introduction • Local Minimization Methods (derivative-based) • Gradient (first order) methods • Newton (second order) methods • Monte-Carlo Sampling (MC) • Introduction to MC methods • Markov-chain MC methods (MCMC) • Escaping local-minima

Prerequisites for Tracing the Minimal Energy Conformation
I. The energy function: The in-silico energy function should correlate with the (intractable) physical free energy. In particular, they should share the same global energy minimum.
II. The sampling strategy: Our sampling strategy should efficiently scan the (enormous) space of protein conformations.

The Problem: Find Global Minimum on a Rough One-Dimensional Surface
Rough = has a multitude of local minima at a multitude of scales.
*Adapted from slides by Chen Kaeasar, Ben-Gurion University

The Problem: Find Global Minimum on a Rough Two Dimensional Surface The landscape is rough because both small pits and the Sea of Galilee are local minima. *Adapted from slides by Chen Kaeasar, Ben-Gurion University

The Problem: Find Global Minimum on a Rough Multi-Dimensional Surface
• A protein conformation is defined by the set of Cartesian atom coordinates (x,y,z) or by internal coordinates (φ/ψ/χ torsion angles ; bond angles ; bond lengths)
• The conformation space of a protein with 100 residues has ≈ 3000 dimensions
• The X-ray structure of a protein is a point in this space.
• A 3000-dimensional space cannot be systematically sampled, visualized or comprehended.
*Adapted from slides by Chen Kaeasar, Ben-Gurion University

Characteristics of the Protein Energetic Landscape
[Figure: energy plotted over the space of conformations. Is the landscape smooth or rugged? Images by Ken Dill]

Local Minimization Allows the Correction of Minor Local Errors in Structural Models Example: removing clashes from X-ray models *Adapted from slides by Chen Kaeasar, Ben-Gurion University

What kind of minima do we want? The path to the closest local minimum = local minimization *Adapted from slides by Chen Kaeasar, Ben-Gurion University

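A Little Math – Gradients and Hessians
Gradients and Hessians generalize the first and second derivatives (respectively) of multivariate scalar functions ( = functions from vectors to scalars).
Energy: $E = f(x_1, y_1, z_1, \ldots, x_n, y_n, z_n)$
Gradient: $\nabla E = \left( \frac{\partial E}{\partial x_1}, \frac{\partial E}{\partial y_1}, \frac{\partial E}{\partial z_1}, \ldots, \frac{\partial E}{\partial z_n} \right)$
Hessian: $H_{ij} = \frac{\partial^2 E}{\partial q_i \, \partial q_j}$, where $q_1, \ldots, q_{3n}$ enumerate the coordinates $x_1, y_1, z_1, \ldots, z_n$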

Analytical Energy Gradient (i) Cartesian Coordinates
$E = f(x_1, y_1, z_1, \ldots, x_n, y_n, z_n)$
Energy, work and force: recall that energy ( = work) is defined as force integrated over distance.
The negative energy gradient in Cartesian coordinates = the vector of forces that act upon the atoms, $\mathbf{F} = -\nabla E$ (but this is not exactly so for statistical energy functions, which aim at the free energy ΔG).
Example: Van der Waals energy between pairs of atoms, summed over O(n²) pairs.
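A minimal sketch of such a pairwise term and its analytical Cartesian gradient, assuming the common Lennard-Jones 12-6 form for the Van der Waals energy. The functional form, the function name `lj_energy_and_gradient` and the parameters `epsilon` and `sigma` are illustrative assumptions, not taken from the slides.

```python
import numpy as np

def lj_energy_and_gradient(coords, epsilon=1.0, sigma=1.0):
    """Lennard-Jones 12-6 energy and its analytical gradient (assumed form).

    coords: (n, 3) array of Cartesian atom positions.
    Returns (energy, gradient); gradient has shape (n, 3).
    """
    coords = np.asarray(coords, dtype=float)
    n = len(coords)
    energy = 0.0
    grad = np.zeros_like(coords)
    for i in range(n):
        for j in range(i + 1, n):              # O(n^2) pairs
            d = coords[i] - coords[j]
            r = np.linalg.norm(d)
            s6 = (sigma / r) ** 6
            energy += 4.0 * epsilon * (s6 * s6 - s6)
            # dE/dr for this pair, then the chain rule dr/dx_i = d / r
            dE_dr = 4.0 * epsilon * (-12.0 * s6 * s6 + 6.0 * s6) / r
            grad[i] += dE_dr * d / r
            grad[j] -= dE_dr * d / r
    return energy, grad
```

The force on each atom is the negative of the returned gradient row for that atom.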

Analytical Energy Gradient (ii) Internal Coordinates (torsions, etc.)
Note: For simplicity, bond lengths and bond angles are often ignored.
$E = f(\phi_1, \psi_1, \omega_1, \chi_{11}, \chi_{12}, \ldots)$
• Enrichment: Transforming a gradient between Cartesian and internal coordinates (see Abe, Braun, Noguti and Gō, 1984 ; Wedemeyer and Baker, 2003)
• Consider an infinitesimal rotation of a vector r by an angle θ around a unit vector n. From physical mechanics, it can be shown that $\partial \mathbf{r} / \partial \theta = \mathbf{n} \times \mathbf{r}$ (cross product, right-hand rule).
[Figure: the vectors n, r and n × r; adapted from image by Sunil Singh http://cnx.org/content/m14014/1.9/]
• Using the fold-tree (previous lesson), we can recursively propagate changes in internal coordinates to the whole structure (see Wedemeyer and Baker 2003)

Gradient Calculations – Cartesian vs. Internal Coordinates
For some terms, gradient computation is simpler and more natural with Cartesian coordinates, but it is harder for others:
• Distance / Cartesian dependent: Van der Waals term ; Electrostatics ; Solvation
• Internal-coordinates dependent: Bond length and angle ; Ramachandran and Dunbrack terms (in Rosetta)
• Combination: Hydrogen-bonds (in some force-fields)
Reminder: Internal coordinates provide a natural distinction between soft constraints (flexibility of φ/ψ torsion angles) and hard constraints with a steep gradient (fixed length of covalent bonds). The energy landscape in Cartesian coordinates is more rugged.

Analytical vs. Numerical Gradient Calculations
• Analytical solutions require a closed-form algebraic formulation of the energy score
• Numerical solutions try to approximate the gradient (or Hessian)
• Simple example: the finite difference $f'(x) \approx \frac{f(x+h) - f(x)}{h}$ for a small step h (with h = 1 this is $f(x+1) - f(x)$)
• Another example: the Secant method (soon)
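A numerical gradient can be sketched in a few lines. This version uses a central difference, a slightly more accurate variant of the one-sided difference in the simple example; the name `numerical_gradient` and the default step `h` are assumptions made for illustration.

```python
import numpy as np

def numerical_gradient(f, x, h=1e-6):
    """Approximate the gradient of a scalar function f at a 1-D point x.

    Uses the central difference (f(x + h) - f(x - h)) / (2h) along each
    coordinate, so it costs two function evaluations per dimension.
    """
    x = np.asarray(x, dtype=float)
    grad = np.zeros_like(x)
    for k in range(x.size):
        step = np.zeros_like(x)
        step[k] = h
        grad[k] = (f(x + step) - f(x - step)) / (2.0 * h)
    return grad
```

A routine like this is also a convenient check on a hand-derived analytical gradient: the two should agree to several digits.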

Gradient Descent Minimization Algorithm: Sliding down an energy gradient
[Figure: energy landscape with a good ( = global) minimum and a local minimum. Image by Ken Dill]

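Gradient Descent – System Description
• Coordinates vector (Cartesian or internal coordinates): $X = (x_1, x_2, \ldots, x_n)$
• Differentiable energy function: $E(X)$
• Gradient vector: $\nabla E(X) = \left( \frac{\partial E}{\partial x_1}, \frac{\partial E}{\partial x_2}, \ldots, \frac{\partial E}{\partial x_n} \right)$
*Adapted from slides by Chen Kaeasar, Ben-Gurion University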

Gradient Descent Minimization Algorithm:
Parameters: λ = step size ; ε = convergence threshold
• x = random starting point
• While $|\nabla E(x)| > \varepsilon$
  • Compute $\nabla E(x)$
  • $x_{new} = x - \lambda \nabla E(x)$
  • Line search: find the best step size λ in order to minimize $E(x_{new})$ (discussion later)
  • $x = x_{new}$
• Note on convergence condition: in local minima, the gradient must be zero (but not always the other way around)
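The loop above can be sketched as follows, stepping against the gradient until its norm falls below the threshold. This is a minimal version with a fixed step size and no line search; the function name, the defaults and the `max_iter` safety cap are assumptions added for illustration.

```python
import numpy as np

def gradient_descent(grad, x0, step=0.1, eps=1e-6, max_iter=10000):
    """Fixed-step gradient descent.

    grad: function returning the energy gradient at x.
    step: the step size (lambda); eps: convergence threshold on |grad|.
    """
    x = np.asarray(x0, dtype=float)
    for _ in range(max_iter):
        g = grad(x)
        if np.linalg.norm(g) <= eps:   # gradient is (nearly) zero: stop
            break
        x = x - step * g               # move downhill, against the gradient
    return x
```

For example, for E(x, y) = (x − 1)² + 2(y + 2)² one would pass `grad = lambda v: np.array([2 * (v[0] - 1), 4 * (v[1] + 2)])`. A fixed step that is too large makes the iteration diverge, which is what the line search is meant to prevent.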

Line Search Methods – Solving $\arg\min_{\lambda} E[x - \lambda \nabla E(x)]$:
• This is also an optimization problem, but in one dimension…
• Inexact solutions are probably sufficient
Interval bracketing (e.g., golden section, parabolic interpolation, Brent’s search):
• Bracketing the local minimum by intervals of decreasing length
• Always finds a local minimum
Backtracking (e.g., with Armijo / Wolfe conditions):
• Multiply step size λ by c<1, until some condition is met
• Variations: λ can also increase
1-D Newton and Secant methods:
• We will talk about this soon…
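As a sketch of the backtracking idea, the function below shrinks the step until the Armijo sufficient-decrease condition holds along the steepest-descent direction. The names `backtracking_step`, `shrink` and `c1` and their default values are illustrative assumptions; the Wolfe curvature condition is not checked here.

```python
import numpy as np

def backtracking_step(E, x, g, step0=1.0, shrink=0.5, c1=1e-4, max_shrinks=50):
    """Backtracking line search along the direction -g.

    Returns a step size lam such that
        E(x - lam * g) <= E(x) - c1 * lam * |g|^2   (Armijo condition),
    or the smallest step tried if the condition is never met.
    """
    x = np.asarray(x, dtype=float)
    g = np.asarray(g, dtype=float)
    lam = step0
    e0 = E(x)
    g2 = float(np.dot(g, g))
    for _ in range(max_shrinks):
        if E(x - lam * g) <= e0 - c1 * lam * g2:
            break
        lam *= shrink                  # multiply the step by c < 1
    return lam
```

An inexact search like this typically costs only a few energy evaluations per iteration, which is usually enough for the outer minimization to make progress.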

2-D Rosenbrock’s Function: a Banana-Shaped Valley. Pathologically Slow Convergence for Gradient Descent
[Figure panels: 0 iterations, 10 iterations, 100 iterations, 1000 iterations]
The (very common) problem: a narrow, winding “valley” in the energy landscape. The narrow valley results in minuscule, zigzag steps.

(One) Solution: Conjugate Gradient Descent
• Use a (smart) linear combination of gradients from previous iterations to prevent zigzag motion
Parameters: λ = step size ; ε = convergence threshold
• $x_0$ = random starting point
• $\Lambda_0 = -\nabla E(x_0)$
• While $|\Lambda_i| > \varepsilon$
  • $x_{i+1} = x_i + \lambda \Lambda_i$
  • Line search: adjust step size λ to minimize $E(x_{i+1})$
  • $\Lambda_{i+1} = -\nabla E(x_{i+1}) + \beta_i \Lambda_i$
  • The choice of $\beta_i$ is important
[Figure: conjugate gradient descent path compared with the gradient descent path]
• For exact line search on a quadratic surface, each new search direction is “A-orthogonal” (conjugate) to all previous search directions
• Works best when the surface is approximately quadratic near the minimum (convergence in N iterations), otherwise need to reset the search every N steps (N = dimension of space)
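The convergence-in-N-iterations property can be illustrated on an exactly quadratic surface. The sketch below assumes E(x) = ½ xᵀAx − bᵀx with a symmetric positive-definite A, uses the closed-form exact line search available in that special case, and picks the Fletcher-Reeves formula for β (one common choice among several). The search direction is called `d` here, and all names are illustrative.

```python
import numpy as np

def conjugate_gradient_quadratic(A, b, x0, eps=1e-10):
    """Minimize E(x) = 0.5 x^T A x - b^T x for symmetric positive-definite A.

    Returns the minimizer and the number of iterations used
    (at most N = dimension of the space).
    """
    x = np.asarray(x0, dtype=float)
    g = A @ x - b                  # gradient of E at x
    d = -g                         # first direction: plain steepest descent
    iterations = 0
    while np.linalg.norm(g) > eps and iterations < len(b):
        lam = (g @ g) / (d @ A @ d)        # exact line search for a quadratic
        x = x + lam * d
        g_new = A @ x - b
        beta = (g_new @ g_new) / (g @ g)   # Fletcher-Reeves choice of beta
        d = -g_new + beta * d              # mix new gradient with old direction
        g = g_new
        iterations += 1
    return x, iterations
```

For a general (non-quadratic) energy function the exact step is not available in closed form, so λ would come from a line search and the direction would be reset to the plain negative gradient every N steps.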

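Taylor’s Series
First order approximation: $f(x) \approx f(a) + f'(a)(x - a)$
Second order approximation: $f(x) \approx f(a) + f'(a)(x - a) + \frac{1}{2} f''(a)(x - a)^2$
The full series: $f(x) = \sum_{n=0}^{\infty} \frac{f^{(n)}(a)}{n!} (x - a)^n$
Example: (a=0)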
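For an expansion around a = 0, one standard case is the exponential function, assumed here purely for illustration. Every derivative of $e^x$ equals 1 at x = 0, so the series and its first- and second-order truncations are:

```latex
e^{x} = \sum_{n=0}^{\infty} \frac{x^{n}}{n!}
      = 1 + x + \frac{x^{2}}{2!} + \frac{x^{3}}{3!} + \cdots
\qquad
e^{x} \approx 1 + x \quad \text{(first order)}
\qquad
e^{x} \approx 1 + x + \frac{x^{2}}{2} \quad \text{(second order)}
```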

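From Taylor’s Series to Root Finding (one-dimension)
First order approximation: $f(x) \approx f(a) + f'(a)(x - a)$
Root finding by Taylor’s approximation: setting the approximation to zero gives $f(a) + f'(a)(x - a) = 0 \Rightarrow x = a - \frac{f(a)}{f'(a)}$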

Newton-Raphson Method for Root Finding (one-dimension)
• Start from a random $x_0$
• While not converged, update x with Taylor’s series: $x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)}$
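A minimal sketch of this iteration, assuming the caller supplies both the function and its derivative. The names, the tolerance on |f(x)| and the iteration cap are illustrative choices.

```python
def newton_raphson(f, fprime, x0, tol=1e-12, max_iter=50):
    """Find a root of f in one dimension by Newton-Raphson iteration."""
    x = float(x0)
    for _ in range(max_iter):
        fx = f(x)
        if abs(fx) < tol:          # close enough to a root
            break
        x = x - fx / fprime(x)     # root of the first-order Taylor approximation
    return x
```

For instance, `newton_raphson(lambda x: x * x - 2, lambda x: 2 * x, 1.0)` approaches the square root of 2. The update is undefined where the derivative vanishes, so a poor starting point can make the iteration fail.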

Newton-Raphson: Quadratic Convergence Rate
THEOREM: Let $x_{root}$ be a “nice” root of f(x). There exists a “neighborhood” of some size Δ around $x_{root}$, in which Newton's method converges towards $x_{root}$ quadratically ( = the error in each round is proportional to the square of the error in the previous round).
Image from http://www.codecogs.com/d-ox/maths/rootfinding/newton.php

The Secant Method (one-dimension)
• Just like Newton-Raphson, but approximate the derivative by drawing a secant line between the two previous points: $f'(x_n) \approx \frac{f(x_n) - f(x_{n-1})}{x_n - x_{n-1}}$
Secant algorithm:
• Start from two random points: $x_0$, $x_1$
• While not converged: $x_{n+1} = x_n - f(x_n) \frac{x_n - x_{n-1}}{f(x_n) - f(x_{n-1})}$
• Theoretical convergence order: the golden ratio (~1.62)
• Often faster in practice: no gradient calculations
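The secant iteration can be sketched as below. Only function values are needed, and each round reuses the value computed in the previous one; the names, the tolerance and the guard against a flat secant line are illustrative assumptions.

```python
def secant(f, x0, x1, tol=1e-12, max_iter=100):
    """Find a root of f in one dimension with the secant method."""
    f0, f1 = f(x0), f(x1)
    for _ in range(max_iter):
        if abs(f1) < tol or f1 == f0:   # converged, or secant line is flat
            break
        # Newton-Raphson step with the derivative replaced by the secant slope
        x2 = x1 - f1 * (x1 - x0) / (f1 - f0)
        x0, f0 = x1, f1
        x1, f1 = x2, f(x2)
    return x1
```

Each round costs a single new function evaluation, which is why the method can beat Newton-Raphson in wall-clock time despite its lower convergence order.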

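Newton’s Method: from Root Finding to Minimization
Second order approximation of f(x): $f(x) \approx f(a) + f'(a)(x - a) + \frac{1}{2} f''(a)(x - a)^2$
Take the derivative (by x): $f'(x) \approx f'(a) + f''(a)(x - a)$
The minimum is reached when the derivative of the approximation is zero: $f'(a) + f''(a)(x - a) = 0 \Rightarrow x = a - \frac{f'(a)}{f''(a)}$
• So… this is just root finding over the derivative (which makes sense since in local minima, the gradient is zero)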

Newton’s Method for Minimization:
• Start from a random point $x = x_0$
• While not converged, update x with Taylor’s series: $x_{n+1} = x_n - \frac{f'(x_n)}{f''(x_n)}$
• Notes:
  • if f’(x) = 0 and f’’(x) > 0, then x is surely a local minimum point
  • We can choose a different step size than one

Newton’s Method for Minimization: Higher Dimensions
• Start from a random vector $x = x_0$
• While not converged, update x with Taylor’s series: $x_{n+1} = x_n - H^{-1}(x_n) \nabla f(x_n)$
• Notes:
  • H is the Hessian matrix (generalization of the second derivative to high dimensions)
  • We can choose a different step size using Line Search (see previous slides)
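A minimal sketch of the multi-dimensional Newton iteration with a full step of size one, assuming the caller supplies functions for the gradient and the Hessian. The names and defaults are illustrative, and no safeguard is included for Hessians that are not positive definite.

```python
import numpy as np

def newton_minimize(grad, hess, x0, eps=1e-10, max_iter=50):
    """Newton's method for minimization in several dimensions.

    grad(x) returns the gradient vector, hess(x) the Hessian matrix.
    """
    x = np.asarray(x0, dtype=float)
    for _ in range(max_iter):
        g = grad(x)
        if np.linalg.norm(g) <= eps:
            break
        # Solve H p = g rather than forming the inverse Hessian explicitly
        x = x - np.linalg.solve(hess(x), g)
    return x
```

As a usage example, f(x, y) = eˣ − x + y² has gradient (eˣ − 1, 2y) and Hessian diag(eˣ, 2), and the iteration converges to its minimum at the origin within a handful of steps from (1, 3).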

Generalizing the Secant Method to High Dimensions: Quasi-Newton Methods
• Calculating the Hessian (2nd derivative) is expensive → use a numerical approximation of the Hessian
• Popular methods:
  • DFP (Davidon – Fletcher – Powell)
  • BFGS (Broyden – Fletcher – Goldfarb – Shanno)
  • Combinations
• Timeline: Newton-Raphson (17th century) → Secant method → DFP (1959, 1963) → Broyden method for roots (1965) → BFGS (1970)
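To make the idea concrete, here is a sketch of a single BFGS update of the inverse-Hessian estimate, built only from the last step and the change in gradient. It is the standard textbook update formula rather than anything specific to these slides, and the function and variable names are illustrative.

```python
import numpy as np

def bfgs_inverse_update(H_inv, s, y):
    """One BFGS update of the inverse-Hessian estimate.

    s = x_new - x_old (the step taken)
    y = grad_new - grad_old (the change in gradient)
    Requires y @ s > 0 so the estimate stays positive definite.
    """
    s = np.asarray(s, dtype=float)
    y = np.asarray(y, dtype=float)
    rho = 1.0 / (y @ s)
    V = np.eye(len(s)) - rho * np.outer(s, y)
    return V @ H_inv @ V.T + rho * np.outer(s, s)
```

The updated estimate satisfies the secant condition `H_new @ y == s` (up to rounding), the multi-dimensional analogue of approximating a derivative from two previous points. A quasi-Newton minimizer then steps along `-H_new @ grad`, usually combined with a line search.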

Some More Resources on Gradient and Newton Methods
• Conjugate Gradient Descent: http://www.cs.cmu.edu/~quake-papers/painless-conjugate-gradient.pdf
• Quasi-Newton Methods: http://www.srl.gatech.edu/education/ME6103/Quasi-Newton.ppt
• HUJI course on non-linear optimization by Benjamin Yakir: http://pluto.huji.ac.il/~msby/opt-files/optimization.html
• Line search:
  • http://pluto.huji.ac.il/~msby/opt-files/opt04.pdf
  • http://www.physiol.ox.ac.uk/Computing/Online_Documentation/Matlab/toolbox/nnet/backpr59.html
• Wikipedia…

Harder Goal: Move from an Arbitrary Model to a Correct One Example: predict protein structure from its AA sequence. Arbitrary starting point *Adapted from slides by Chen Kaeasar, Ben-Gurion University

iteration 10 *Adapted from slides by Chen Kaeasar, Ben-Gurion University

iteration 100 *Adapted from slides by Chen Kaeasar, Ben-Gurion University

iteration 200 *Adapted from slides by Chen Kaeasar, Ben-Gurion University