The SPSS Sample Problem

To demonstrate these concepts, we will work the sample problem for logistic regression in SPSS Professional Statistics 7.5, pages 37-64. The description of the problem can be found on page 39. The data for this problem is: Prostate.Sav.

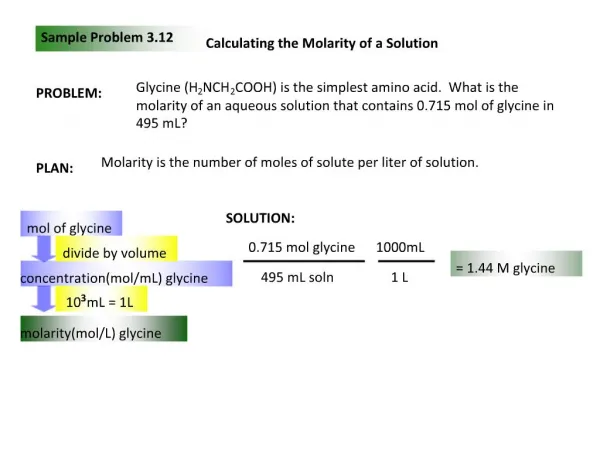

Stage 1: Define the Research Problem

In this stage, the following issues are addressed:
• Relationship to be analyzed
• Specifying the dependent and independent variables
• Method for including independent variables

Relationship to be analyzed

The goal of this analysis is to determine the relationship between the dependent variable NODALINV (whether or not the cancer has spread to the lymph nodes) and the independent variables AGE (age of the subject), ACID (a laboratory test value that is elevated when the tumor has spread to certain areas), STAGE (whether or not the disease has reached an advanced stage), GRADE (aggressiveness of the tumor), and XRAY (positive or negative X-ray result).

Specifying the dependent and independent variables

• The dependent variable is NODALINV 'Cancer spread to lymph nodes', a dichotomous variable.
• The independent variables are:
  • AGE 'Age of the subject'
  • ACID 'Laboratory test score'
  • XRAY 'Positive X-ray result'
  • STAGE 'Disease reached advanced stage'
  • GRADE 'Aggressive tumor'

Method for including independent variables

Since we are interested in the relationship between the dependent variable and all of the independent variables, we will use direct entry of the independent variables.

Stage 2: Develop the Analysis Plan: Sample Size Issues

In this stage, the following issues are addressed:
• Missing data analysis
• Minimum sample size requirement: 15-20 cases per independent variable

Missing data analysis

There is no missing data in this problem.

Minimum sample size requirement: 15-20 cases per independent variable

The data set has 53 cases and 5 independent variables, for a ratio of about 10 to 1, short of the requirement that we have 15-20 cases per independent variable. We should look for opportunities to validate our findings against other samples before generalizing our results.
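The sample size check above is simple arithmetic. A minimal sketch follows, using the 53 cases and 5 independent variables stated in the text. The function name and the choice of 15 as the lower bound of the guideline are illustrative assumptions.

```python
def cases_per_variable(n_cases: int, n_predictors: int) -> float:
    """Ratio of cases to independent variables."""
    return n_cases / n_predictors


# Values taken from the text: 53 cases, 5 independent variables.
ratio = cases_per_variable(53, 5)

# Lower bound of the 15-20 cases-per-variable guideline (illustrative choice).
meets_minimum = ratio >= 15

print(f"{ratio:.1f} cases per independent variable; meets guideline: {meets_minimum}")
```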

Stage 2: Develop the Analysis Plan: Measurement Issues

In this stage, the following issues are addressed:
• Incorporating nonmetric data with dummy variables
• Representing curvilinear effects with polynomials
• Representing interaction or moderator effects

Incorporating Nonmetric Data with Dummy Variables

All of the nonmetric variables have been recoded into dichotomous dummy-coded variables.

Representing Curvilinear Effects with Polynomials

We do not have any evidence of curvilinear effects at this point in the analysis.

Representing Interaction or Moderator Effects

We do not have any evidence at this point in the analysis that we should add interaction or moderator variables.

Stage 3: Evaluate Underlying Assumptions

In this stage, the following issues are addressed:
• Nonmetric dependent variable with two groups
• Metric or dummy-coded independent variables

Nonmetric dependent variable having two groups

The dependent variable NODALINV 'Cancer spread to lymph nodes' is a dichotomous variable.

Metric or dummy-coded independent variables

AGE 'Age of the subject' and ACID 'Laboratory test score' are metric variables. XRAY 'Positive X-ray result', STAGE 'Disease reached advanced stage', and GRADE 'Aggressive tumor' are nonmetric dichotomous variables.

Stage 4: Estimation of Logistic Regression and Assessing Overall Fit: Model Estimation

In this stage, the following issues are addressed:
• Compute logistic regression model

Compute the logistic regression

The steps to obtain a logistic regression analysis are detailed on the following screens.

Requesting a Logistic Regression

Specifying the Dependent Variable

Specifying the Independent Variables

Specify the method for entering variables

Specifying Options to Include in the Output

Specifying the New Variables to Save

Complete the Logistic Regression Request

Stage 4: Estimation of Logistic Regression and Assessing Overall Fit: Assessing Model Fit

In this stage, the following issues are addressed:
• Significance test of the model log likelihood (Change in -2LL)
• Measures Analogous to R²: Cox and Snell R² and Nagelkerke R²
• Hosmer-Lemeshow Goodness-of-fit
• Classification matrices as a measure of model accuracy
• Check for Numerical Problems
• Presence of outliers

Initial statistics before independent variables are included

The initial log likelihood function (-2 Log Likelihood or -2LL) is a statistical measure like the total sum of squares in regression. If our independent variables have a relationship to the dependent variable, we will improve our ability to predict the dependent variable accurately, and the -2LL value will decrease. The initial -2LL value is 70.252 at step 0, before any variables have been added to the model.
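The step 0 value can be reproduced by hand, because a model with no predictors assigns every case the overall event proportion. The sketch below assumes the two groups contain 20 and 33 of the 53 cases. Those counts are not stated in the text; they are inferred from the 62.3% / 37.7% split reported in the classification discussion. The function name is illustrative.

```python
import math


def initial_neg2ll(n_event: int, n_nonevent: int) -> float:
    """-2 log likelihood of an intercept-only (step 0) logistic model."""
    n = n_event + n_nonevent
    p = n_event / n  # every case gets the same predicted probability
    log_likelihood = n_event * math.log(p) + n_nonevent * math.log(1.0 - p)
    return -2.0 * log_likelihood


# Assumed group counts (inferred from the 62.3% / 37.7% split of 53 cases).
neg2ll_step0 = initial_neg2ll(n_event=20, n_nonevent=33)
print(f"Initial -2LL: {neg2ll_step0:.3f}")
```

Under that assumption the result agrees with the 70.252 reported by SPSS to three decimal places.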

Significance test of the model log likelihood

The difference between the initial -2LL (70.252) and the -2LL of the model with the independent variables entered (48.126) is the model chi-square value (22.126 = 70.252 - 48.126), which is tested for statistical significance. This test is analogous to the F-test for R² or change in R² value in multiple regression, which tests whether or not the improvement in the model associated with the additional variables is statistically significant. In this problem the model chi-square value of 22.126 has a significance reported as 0.000 (that is, p < 0.001), less than 0.05, so we conclude that there is a significant relationship between the dependent variable and the set of independent variables.
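As a check on the reported significance, the model chi-square and its p-value can be computed with the standard library alone. This is a sketch, not the SPSS procedure: `chi_square_sf` is a hypothetical helper that uses the closed-form series for integer degrees of freedom, and the 5 degrees of freedom assume one coefficient per independent variable under direct entry.

```python
import math


def chi_square_sf(x: float, df: int) -> float:
    """Upper-tail probability P(X > x) for a chi-square variable, integer df."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum_{r=0}^{df/2-1} (x/2)^r / r!
        term, total = 1.0, 1.0
        for r in range(1, df // 2):
            term *= half / r
            total += term
        return math.exp(-half) * total
    # Odd df: erfc(sqrt(x/2)) + 2*phi(sqrt(x)) * sum_r x^(r-1/2) / (1*3*...*(2r-1))
    root = math.sqrt(x)
    phi = math.exp(-half) / math.sqrt(2.0 * math.pi)
    term, total = 0.0, 0.0
    for r in range(1, (df - 1) // 2 + 1):
        term = root if r == 1 else term * x / (2 * r - 1)
        total += term
    return math.erfc(math.sqrt(half)) + 2.0 * phi * total


# -2LL values reported in the text.
model_chi_square = 70.252 - 48.126
# Assumption: 5 df, one per independent variable entered.
p_value = chi_square_sf(model_chi_square, df=5)
print(f"Model chi-square = {model_chi_square:.3f}, p = {p_value:.4f}")
```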

Measures Analogous to R²

The next SPSS outputs indicate the strength of the relationship between the dependent variable and the independent variables, analogous to the R² measures in multiple regression. The Cox and Snell R² measure operates like R², with higher values indicating greater model fit. However, this measure is limited in that it cannot reach the maximum value of 1, so Nagelkerke proposed a modification that ranges from 0 to 1. We will rely upon Nagelkerke's measure as indicating the strength of the relationship. If we applied our interpretive criteria to the Nagelkerke R² of 0.465, we would characterize the relationship as strong.
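Both measures can be derived from the two -2LL values and the sample size already reported. The sketch below uses the standard definitions (Cox and Snell R² from the likelihood ratio, Nagelkerke R² as Cox and Snell divided by its maximum attainable value). The function names are illustrative.

```python
import math


def cox_snell_r2(neg2ll_initial: float, neg2ll_model: float, n: int) -> float:
    """Cox and Snell R-squared: 1 - (L0 / L1)^(2/n), written in terms of -2LL."""
    return 1.0 - math.exp(-(neg2ll_initial - neg2ll_model) / n)


def nagelkerke_r2(neg2ll_initial: float, neg2ll_model: float, n: int) -> float:
    """Cox and Snell R-squared rescaled by its maximum, 1 - L0^(2/n)."""
    max_cox_snell = 1.0 - math.exp(-neg2ll_initial / n)
    return cox_snell_r2(neg2ll_initial, neg2ll_model, n) / max_cox_snell


# Values reported in the text: initial -2LL, model -2LL, number of cases.
cs = cox_snell_r2(70.252, 48.126, 53)
nk = nagelkerke_r2(70.252, 48.126, 53)
print(f"Cox and Snell R2 = {cs:.3f}, Nagelkerke R2 = {nk:.3f}")
```

The Nagelkerke value computed this way rounds to the 0.465 quoted above.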

Correspondence of Actual and Predicted Values of the Dependent Variable

The final measure of model fit is the Hosmer and Lemeshow goodness-of-fit statistic, which measures the correspondence between the actual and predicted values of the dependent variable. In this case, better model fit is indicated by a smaller difference in the observed and predicted classification. A good model fit is indicated by a nonsignificant chi-square value. The goodness-of-fit measure has a value of 5.954, which has the desirable outcome of nonsignificance.

The Classification Matrices as a Measure of Model Accuracy

The classification matrices in logistic regression serve the same function as the classification matrices in discriminant analysis, i.e. evaluating the accuracy of the model. If the predicted and actual group memberships are the same, i.e. 1 and 1 or 0 and 0, then the prediction is accurate for that case. If predicted group membership and actual group membership are different, the model "misses" for that case.

The overall percentage of accurate predictions (77.4% in this case) is the measure of a model that I rely on most heavily for this analysis as well as for discriminant analysis because it has a meaning that is readily communicated, i.e. the percentage of cases for which our model predicts accurately.

To evaluate the accuracy of the model, we compute the proportional by chance accuracy rate and, if appropriate, the maximum by chance accuracy rate. The proportional by chance accuracy rate is equal to 0.530 (0.623^2 + 0.377^2). A 25% increase over the proportional by chance accuracy rate would equal 0.663. Our model accuracy rate of 77.4% meets this criterion.

Since one of our groups contains 62.3% of the cases, we might also apply the maximum by chance criterion. A 25% increase over the proportion in the largest group would equal 0.778. Our model accuracy rate of 77.4% almost meets this criterion.
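The two by-chance criteria can be sketched as a short calculation. The group counts of 33 and 20 are an assumption inferred from the stated 62.3% / 37.7% split of 53 cases. The function name and dictionary keys are illustrative.

```python
def by_chance_criteria(group_sizes, margin=1.25):
    """Proportional and maximum by-chance accuracy rates, plus the rates
    a model must reach to beat each of them by the given margin."""
    total = sum(group_sizes)
    proportions = [size / total for size in group_sizes]
    proportional = sum(p ** 2 for p in proportions)  # sum of squared group shares
    maximum = max(proportions)                       # share of the largest group
    return {
        "proportional": proportional,
        "proportional_threshold": margin * proportional,
        "maximum": maximum,
        "maximum_threshold": margin * maximum,
    }


# Assumed group counts (33 + 20 = 53 cases); model accuracy from the text.
criteria = by_chance_criteria([33, 20])
model_accuracy = 0.774

print(f"Proportional threshold: {criteria['proportional_threshold']:.3f}")
print(f"Maximum threshold:      {criteria['maximum_threshold']:.3f}")
print("Meets proportional criterion:", model_accuracy >= criteria["proportional_threshold"])
print("Meets maximum criterion:     ", model_accuracy >= criteria["maximum_threshold"])
```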

Stacked Histogram

SPSS provides a visual image of the classification accuracy in the stacked histogram as shown below. To the extent that the cases in one group cluster on the left and the cases in the other group cluster on the right, the predictive accuracy of the model will be higher.

Check for Numerical Problems

There are several numerical problems that can occur in logistic regression that are not detected by SPSS or other statistical packages:
• multicollinearity among the independent variables
• zero cells for a dummy-coded independent variable because all of the subjects have the same value for the variable
• "complete separation", whereby the two groups in the dependent variable can be perfectly separated by scores on one of the independent variables

All of these problems produce large standard errors (over 2) for the variables included in the analysis and very often produce very large B coefficients as well. If we encounter large standard errors for the predictor variables, we should examine frequency tables, one-way ANOVAs, and correlations for the variables involved to try to identify the source of the problem.

The standard errors and B coefficients are not excessively large, so there is no evidence of a numeric problem with this analysis.

Presence of outliers

There are two outputs to alert us to outliers that we might consider excluding from the analysis: the listing of residuals and Cook's distance scores saved to the data set.

SPSS provides a casewise list of residuals that identifies cases whose residual is above or below a certain number of standard deviation units. As in multiple regression, there are a variety of ways to compute the residual. In logistic regression, the residual is the difference between the observed probability of the dependent variable event and the predicted probability based on the model. The standardized residual is the residual divided by an estimate of its standard deviation. The deviance is calculated by taking the square root of -2 x the log of the predicted probability for the observed group and attaching a negative sign if the event did not occur for that case. Large values for deviance indicate that the model does not fit the case well. The studentized residual for a case is the change in the model deviance if the case is excluded. Discrepancies between the deviance and the studentized residual may identify unusual cases. (See the SPSS chapter on Logistic Regression Analysis for additional details, pages 57-61.)

In the output for our problem, SPSS listed three cases that may be considered outliers, each with a studentized residual greater than 2.
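The residual definitions above translate directly into code. The sketch below follows the wording of the text for the raw residual and the deviance. For the standardized residual it assumes the usual binomial estimate sqrt(p(1 - p)) for the standard deviation. The example case (no event observed, predicted probability 0.90) is hypothetical and is not taken from Prostate.Sav.

```python
import math


def raw_residual(y: int, p: float) -> float:
    """Observed outcome (0 or 1) minus the predicted probability of the event."""
    return y - p


def standardized_residual(y: int, p: float) -> float:
    """Residual divided by an estimate of its standard deviation.
    Assumes the binomial estimate sqrt(p * (1 - p))."""
    return (y - p) / math.sqrt(p * (1.0 - p))


def deviance_residual(y: int, p: float) -> float:
    """sqrt(-2 * ln(predicted probability of the observed group)),
    negative when the event did not occur."""
    p_observed_group = p if y == 1 else 1.0 - p
    magnitude = math.sqrt(-2.0 * math.log(p_observed_group))
    return magnitude if y == 1 else -magnitude


# Hypothetical case: the event did not occur, but the model predicted 0.90.
y, p = 0, 0.90
print(f"residual     = {raw_residual(y, p):.3f}")
print(f"standardized = {standardized_residual(y, p):.3f}")
print(f"deviance     = {deviance_residual(y, p):.3f}")
```

A case like this one, predicted confidently into the wrong group, produces residuals larger than 2 in absolute value and would appear in the casewise listing.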

Cook's Distance

SPSS has an option to compute Cook's distance as a measure of influential cases and add the score to the data editor. I am not aware of a precise formula for determining what cutoff value should be used, so we will rely on the more traditional method for interpreting Cook's distance, which is to identify cases that either have a score of 1.0 or higher or have a Cook's distance substantially different from the others. The prescribed method for detecting unusually large Cook's distance scores is to create a scatterplot of Cook's distance scores versus case id.
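The 1.0 rule of thumb can also be applied to the saved scores programmatically. The sketch below is only an illustration: the case ids and Cook's distance values are hypothetical placeholders, not values from Prostate.Sav, and the function name is an assumption. It does not replace the scatterplot, which is still needed to spot scores that are merely out of line with the rest.

```python
def flag_influential(cooks_by_case: dict, cutoff: float = 1.0) -> list:
    """Return the ids of cases whose Cook's distance is at or above the cutoff."""
    return sorted(case for case, d in cooks_by_case.items() if d >= cutoff)


# Hypothetical saved scores keyed by case id (illustrative values only).
example_scores = {1: 0.02, 2: 0.15, 3: 1.30}
flagged = flag_influential(example_scores)
print("Cases at or above the 1.0 rule of thumb:", flagged)
```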

Request the Scatterplot

Specifying the Variables for the Scatterplot

The Scatterplot of Cook's Distances

On the plot of Cook's distances, we see a case that exceeds the 1.0 rule of thumb for influential cases. Scanning the data in the data editor, we find that the case with the large Cook's distance is case 24.

If we study case 24 in the data editor, we will find that this case had the highest score for the acid variable, but no nodal involvement. Comparing this case to the two cases with the next highest acid scores, case 25 with a score of 136 and case 53 with a score of 126, we see that both of these cases had nodal involvement, suggesting that a high acid score is associated with nodal involvement. We can consider case 24 as a candidate for exclusion from the analysis.

Stage 5: Interpret the Results

In this section, we address the following issues:
• Identifying the statistically significant predictor variables
• Direction of relationship and contribution to dependent variable

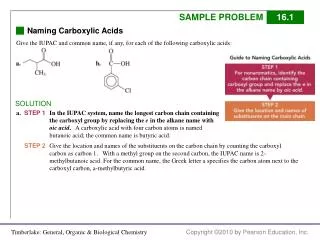

Identifying the statistically significant predictor variables

The coefficients are found in the column labeled B. The test that a coefficient is not zero, i.e. that the variable changes the odds of the dependent variable event, is made with the Wald statistic instead of the t-test that was used for the individual B coefficients in the multiple regression equation.

Similar to the output for a regression equation, we examine the probabilities of the test statistic in the column labeled "Sig," where we identify that the variable STAGE 'Disease reached advanced stage' and the variable XRAY 'Positive X-ray result' have a statistically significant relationship with the dependent variable.

Direction of relationship and contribution to dependent variable

The signs of both of the statistically significant independent variables are positive, indicating a direct relationship with the dependent variable. Our interpretation of these variables is that positive (yes or 1) values on both XRAY 'Positive X-ray result' and STAGE 'Disease reached advanced stage' are associated with the positive (yes or 1) category of the dependent variable NODALINV 'Cancer spread to lymph nodes'.

Interpretation of the independent variables is aided by the "Exp (B)" column, which contains the odds ratio for each independent variable. Thus, we would say that for persons with a value of 1 on STAGE 'Disease reached advanced stage', the odds of a score of 1 on the dependent variable NODALINV 'Cancer spread to lymph nodes' are 4.77 times as large as for persons with a value of 0. Similarly, for persons whose score is 1 on the independent variable XRAY 'Positive X-ray result', the odds of lymph node involvement are 7.73 times as large.
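Because Exp(B) multiplies the odds rather than the probability, it can help to see what an odds ratio does to a predicted probability. The sketch below uses the two odds ratios reported in the text. The baseline probability of 0.20 is a hypothetical value chosen only for illustration, and the function names are assumptions.

```python
import math


def b_from_odds_ratio(odds_ratio: float) -> float:
    """Logistic coefficient B implied by Exp(B)."""
    return math.log(odds_ratio)


def apply_odds_ratio(baseline_probability: float, odds_ratio: float) -> float:
    """Probability after multiplying the baseline odds by the odds ratio."""
    odds = baseline_probability / (1.0 - baseline_probability)
    new_odds = odds * odds_ratio
    return new_odds / (1.0 + new_odds)


# Odds ratios from the text; the baseline probability is hypothetical.
baseline = 0.20
for name, odds_ratio in [("STAGE", 4.77), ("XRAY", 7.73)]:
    new_p = apply_odds_ratio(baseline, odds_ratio)
    print(f"{name}: B = {b_from_odds_ratio(odds_ratio):.3f}, "
          f"probability {baseline:.2f} -> {new_p:.3f}")
```

With this baseline, the probability rises by a factor smaller than the odds ratio itself, which is why the odds ratio is read as a change in odds and not as a change in probability.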

Stage 6: Validate The Model

When we have a small sample in the full data set, as we do in this problem, a split half validation analysis is almost guaranteed to fail because we will have little power to detect statistical differences in analyses of the validation samples. In this circumstance, our alternative is to conduct validation analyses with random samples that comprise the majority of the sample.

We will demonstrate this procedure in the following steps:
• Computing the First Validation Analysis
• Computing the Second Validation Analysis
• The Output for the Validation Analysis

Computing the First Validation Analysis

We set the random number seed and modify our selection variable so that it selects about 75-80% of the sample.

Set the Starting Point for Random Number Generation

Compute the Variable to Select a Large Proportion of the Data Set

Specify the Cases to Include in the First Validation Analysis

Specify the Value of the Selection Variable for the First Validation Analysis

Computing the Second Validation Analysis

We reset the random number seed to another value and modify our selection variable so that it selects about 75-80% of the sample.

Set the Starting Point for Random Number Generation

Compute the Variable to Select a Large Proportion of the Data Set

Specify the Cases to Include in the Second Validation Analysis

Specify the Value of the Selection Variable for the Second Validation Analysis
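The selection step used in both validation analyses (set a seed, draw a uniform random number per case, keep the case when the draw falls under the target proportion) can be sketched as follows. This is an illustration of the idea only: the seeds and the 0.80 proportion are hypothetical choices, the function name is an assumption, and Python's random number generator will not reproduce the cases that SPSS selects for a given seed.

```python
import random


def selection_variable(n_cases: int, seed: int, proportion: float = 0.80) -> list:
    """Return a 0/1 flag per case; 1 means the case is kept for the
    validation analysis. Roughly `proportion` of the cases are kept."""
    rng = random.Random(seed)  # fixed seed makes the selection repeatable
    return [1 if rng.random() <= proportion else 0 for _ in range(n_cases)]


# Hypothetical seeds for the first and second validation analyses.
first = selection_variable(53, seed=12345)
second = selection_variable(53, seed=54321)

print(f"First validation sample:  {sum(first)} of {len(first)} cases")
print(f"Second validation sample: {sum(second)} of {len(second)} cases")
```

Resetting the seed before the second analysis is what gives a different subset of cases while keeping each selection reproducible.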

Generalizability of the Logistic Regression Model

We can summarize the results of the validation analyses in the following table. As we can see in the table, the results for each analysis are approximately the same, except that the variable STAGE 'Disease reached advanced stage' was not quite significant in the first validation analysis. Based on the validation analyses, I would conclude that our results are generalizable.