Cocktail Party Problem as Binary Classification



Cocktail Party Problem as Binary Classification DeLiang Wang Perception & Neurodynamics Lab Ohio State University

Outline of presentation • Cocktail party problem • Computational theory analysis • Ideal binary mask • Speech intelligibility tests • Unvoiced speech segregation as binary classification

Real-world audition • What? Speech (message; speaker age, gender, linguistic origin, mood, …), music, car passing by • Where? Left, right, up, down; how close? • Channel characteristics • Environment characteristics: room reverberation, ambient noise

Sources of intrusion and distortion • Additive noise from other sound sources • Channel distortion • Reverberation from surface reflections

Cocktail party problem • Term coined by Cherry • “One of our most important faculties is our ability to listen to, and follow, one speaker in the presence of others. This is such a common experience that we may take it for granted; we may call it ‘the cocktail party problem’…” (Cherry’57) • “For ‘cocktail party’-like situations… when all voices are equally loud, speech remains intelligible for normal-hearing listeners even when there are as many as six interfering talkers” (Bronkhorst & Plomp’92) • Ball-room problem by Helmholtz • “Complicated beyond conception” (Helmholtz, 1863) • Speech segregation problem

Approaches to Speech Segregation Problem • Speech enhancement • Enhance signal-to-noise ratio (SNR) or speech quality by attenuating interference. Applicable to monaural recordings • Limitation: Stationarity and estimation of interference • Spatial filtering (beamforming) • Extract target sound from a specific spatial direction with a sensor array • Limitation: Configuration stationarity. What if the target switches or changes location? • Independent component analysis (ICA) • Find a demixing matrix from mixtures of sound sources • Limitation: Strong assumptions. Chief among them is stationarity of mixing matrix • “No machine has yet been constructed to do just that [solving the cocktail party problem].” (Cherry’57)

Auditory scene analysis • Listeners parse the complex mixture of sounds arriving at the ears in order to form a mental representation of each sound source • This perceptual process is called auditory scene analysis (Bregman’90) • Two conceptual processes of auditory scene analysis (ASA): • Segmentation. Decompose the acoustic mixture into sensory elements (segments) • Grouping. Combine segments into groups, so that segments in the same group likely originate from the same environmental source

Computational auditory scene analysis • Computational auditory scene analysis (CASA) approaches sound separation based on ASA principles • Feature based approaches • Model based approaches

Outline of presentation • Cocktail party problem • Computational theory analysis • Ideal binary mask • Speech intelligibility tests • Unvoiced speech segregation as binary classification

What is the goal of CASA? • What is the goal of perception? • The perceptual systems are ways of seeking and extracting information about the environment from sensory input (Gibson’66) • The purpose of vision is to produce a visual description of the environment for the viewer (Marr’82) • By analogy, the purpose of audition is to produce an auditory description of the environment for the listener • What is the computational goal of ASA? • The goal of ASA is to segregate sound mixtures into separate perceptual representations (or auditory streams), each of which corresponds to an acoustic event (Bregman’90) • By extrapolation the goal of CASA is to develop computational systems that extract individual streams from sound mixtures

Marrian three-level analysis • According to Marr (1982), a complex information processing system must be understood in three levels • Computational theory: goal, its appropriateness, and basic processing strategy • Representation and algorithm: representations of input and output and transformation algorithms • Implementation: physical realization • All levels of explanation are required for eventual understanding of perceptual information processing • Computational theory analysis – understanding the character of the problem – is critically important

Computational-theory analysis of ASA • To form a stream, a sound must be audible on its own • The number of streams that can be computed at a time is limited • Magical number 4 for simple sounds such as tones and vowels (Cowan’01)? • 1+1, or figure-ground segregation, in noisy environment such as a cocktail party? • Auditory masking further constrains the ASA output • Within a critical band a stronger signal masks a weaker one

Computational-theory analysis of ASA (cont.) • ASA outcome depends on sound types (overall SNR is 0 dB) • Noise-Noise: pink, white, pink+white • Tone-Tone: tone1, tone2, tone1+tone2 • Speech-Speech • Noise-Tone • Noise-Speech • Tone-Speech

Some alternative CASA goals • Extract all underlying sound sources or the target sound source (the gold standard) • Implicit in speech enhancement, spatial filtering, and ICA • Segregating all sources is implausible, and probably unrealistic with one or two microphones • Enhance automatic speech recognition (ASR) • Close coupling with a primary motivation of speech segregation • Perceiving is more than recognizing (Treisman’99) • Enhance human listening • Advantage: close coupling with auditory perception • There are applications that involve no human listening

Ideal binary mask as CASA goal • Motivated by the above analysis, we have suggested the ideal binary mask as a main goal of CASA (Hu & Wang’01, ’04) • The key idea is to retain parts of a mixture where the target sound is stronger than the acoustic background, and discard the rest • What a target is depends on intention, attention, etc. • The definition of the ideal binary mask (IBM): IBM(t, f ) = 1 if 10 log10[s(t, f ) / n(t, f )] > θ, and 0 otherwise • s(t, f ): Target energy in unit (t, f ) • n(t, f ): Noise energy in unit (t, f ) • θ: A local SNR criterion (LC) in dB, which is typically chosen to be 0 dB • It does not actually separate the mixture!
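The IBM definition above can be sketched in a few lines. This is a minimal illustration that assumes the premixed target and noise energies are available per T-F unit (which is what makes the mask "ideal"). The function name, the `eps` guard, and the toy numbers are illustrative assumptions, not part of the original slides.

```python
import numpy as np

def ideal_binary_mask(target_energy, noise_energy, lc_db=0.0, eps=1e-12):
    """Return 1 where the local SNR in dB exceeds lc_db, and 0 elsewhere.

    target_energy, noise_energy: arrays of per-unit energies s(t, f), n(t, f).
    lc_db: the local SNR criterion (theta in the slides), in dB.
    eps: small constant (an assumption here) that avoids division by zero.
    """
    target_energy = np.asarray(target_energy, dtype=float)
    noise_energy = np.asarray(noise_energy, dtype=float)
    local_snr_db = 10.0 * np.log10((target_energy + eps) / (noise_energy + eps))
    return (local_snr_db > lc_db).astype(int)

# Toy 2x3 grid of T-F unit energies (made-up numbers).
s = np.array([[4.0, 1.0, 0.5],
              [0.1, 9.0, 2.0]])
n = np.array([[1.0, 1.0, 2.0],
              [1.0, 3.0, 2.0]])

mask = ideal_binary_mask(s, n)                      # LC = 0 dB
relaxed_mask = ideal_binary_mask(s, n, lc_db=-6.0)  # lower LC keeps more units
print(mask)
print(relaxed_mask)
```

Lowering the LC retains more units. Changing which signal counts as the target yields a different mask for the same mixture.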

Properties of IBM • Flexibility: With the same mixture, the definition leads to different IBMs depending on what target is • Well-definedness: IBM is well-defined no matter how many intrusions are in the scene or how many targets need to be segregated • Consistent with computational-theory analysis of ASA • Audibility and capacity • Auditory masking • Effects of target and noise types • Optimality: Under certain conditions the ideal binary mask with θ = 0 dB is the optimal binary mask from the perspective of SNR gain • The ideal binary mask provides an excellent front-end for robust ASR

Subject tests of ideal binary masking • Recent studies found large speech intelligibility improvements by applying ideal binary masking for normal-hearing (Brungart et al.’06; Li & Loizou’08), and hearing-impaired (Anzalone et al.’06; Wang et al.’09) listeners • Improvement for stationary noise is above 7 dB for normal-hearing (NH) listeners, and above 9 dB for hearing-impaired (HI) listeners • Improvement for modulated noise is significantly larger than for stationary noise

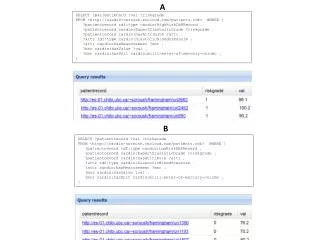

Test conditions of Wang et al.’09 • SSN: Unprocessed monaural mixtures of speech-shaped noise (SSN) and Dantale II sentences • CAFÉ: Unprocessed monaural mixtures of cafeteria noise (CAFÉ) and Dantale II sentences • SSN-IBM: IBM applied to SSN • CAFÉ-IBM: IBM applied to CAFÉ • Intelligibility results are measured in terms of speech reception threshold (SRT), the required SNR level for 50% intelligibility score
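To make the SRT definition concrete, the sketch below reads the 50% point off a set of intelligibility scores by linear interpolation. The scores and SNR levels are invented for illustration, and the interpolation is a simplification. It is not a description of the measurement procedure used in the cited study.

```python
import numpy as np

def estimate_srt(snr_db, percent_correct, criterion=50.0):
    """Interpolate the SNR (dB) at which the score reaches the criterion.

    Assumes percent_correct increases monotonically with snr_db.
    """
    snr_db = np.asarray(snr_db, dtype=float)
    percent_correct = np.asarray(percent_correct, dtype=float)
    # np.interp expects increasing x-coordinates; here x is the score.
    return float(np.interp(criterion, percent_correct, snr_db))

# Hypothetical scores measured at four input SNRs.
snrs = [-12.0, -9.0, -6.0, -3.0]
scores = [10.0, 40.0, 80.0, 95.0]

srt = estimate_srt(snrs, scores)
print(f"Estimated SRT: {srt:.2f} dB")
```

A lower (more negative) SRT means the listener tolerates more noise at the same intelligibility, so a drop in SRT is an improvement.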

Wang et al.’s results • 12 NH subjects (10 male and 2 female), and 12 HI subjects (9 male and 3 female) • SRT means in dB for the 4 conditions (SSN, CAFÉ, SSN-IBM, CAFÉ-IBM) for NH listeners: (-8.2, -10.3, -15.6, -20.7) • SRT means in dB for the 4 conditions (SSN, CAFÉ, SSN-IBM, CAFÉ-IBM) for HI listeners: (-5.6, -3.8, -14.8, -19.4)
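The IBM benefit in each noise type follows from the SRT means above by simple subtraction. The short calculation below assumes the four values are listed in the same order as the test conditions (SSN, CAFÉ, SSN-IBM, CAFÉ-IBM). The variable and function names are illustrative.

```python
conditions = ("SSN", "CAFÉ", "SSN-IBM", "CAFÉ-IBM")

# SRT means in dB, assumed to follow the order of the test conditions.
srt_nh = dict(zip(conditions, (-8.2, -10.3, -15.6, -20.7)))
srt_hi = dict(zip(conditions, (-5.6, -3.8, -14.8, -19.4)))

def ibm_benefit(srt, noise):
    """SRT improvement in dB from applying the IBM to the given noise type."""
    return round(srt[noise] - srt[noise + "-IBM"], 1)

benefits = {
    ("NH", "SSN"): ibm_benefit(srt_nh, "SSN"),
    ("NH", "CAFÉ"): ibm_benefit(srt_nh, "CAFÉ"),
    ("HI", "SSN"): ibm_benefit(srt_hi, "SSN"),
    ("HI", "CAFÉ"): ibm_benefit(srt_hi, "CAFÉ"),
}
for (group, noise), gain in benefits.items():
    print(f"{group} listeners, {noise}: {gain} dB")
```

Under that ordering, the gains are 7.4 dB (NH) and 9.2 dB (HI) for speech-shaped noise, and 10.4 dB (NH) and 15.6 dB (HI) for cafeteria noise. This matches the earlier statement that improvements exceed 7 dB and 9 dB for stationary noise and are larger for modulated noise.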

Speech perception of noise with binary gains • Wang et al. (2008) found that, when LC is chosen to be the same as the input SNR, nearly perfect intelligibility is obtained even when the input SNR is -∞ dB (i.e. the mixture contains only noise and no target speech)
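One way to see why the -∞ dB case remains well defined: if the noise is held fixed and the target is attenuated, the local SNR in every unit drops by the same amount as the input SNR. Setting LC equal to the input SNR therefore leaves the binary pattern unchanged. The sketch below checks this numerically on random toy energies. It follows from the IBM definition and is not a reproduction of the cited experiment. All names and values are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
target = rng.random((8, 20)) + 1e-3  # toy target energies per T-F unit
noise = rng.random((8, 20)) + 1e-3   # toy noise energies per T-F unit

def binary_mask(target_energy, noise_energy, lc_db):
    """Boolean mask: True where the local SNR in dB exceeds lc_db."""
    local_snr_db = 10.0 * np.log10(target_energy / noise_energy)
    return local_snr_db > lc_db

def input_snr_db(target_energy, noise_energy):
    """Overall SNR of the mixture in dB."""
    return 10.0 * np.log10(target_energy.sum() / noise_energy.sum())

reference_mask = binary_mask(target, noise, lc_db=input_snr_db(target, noise))

# Attenuate the target more and more while LC tracks the input SNR.
agreement = []
for attenuation_db in (20.0, 40.0, 80.0):
    scaled = target * 10.0 ** (-attenuation_db / 10.0)
    mask = binary_mask(scaled, noise, lc_db=input_snr_db(scaled, noise))
    agreement.append(float(np.mean(mask == reference_mask)))

# In the limit, the pattern is applied as binary gains to the noise alone.
gated_noise = noise * reference_mask
print(agreement)
```

The resulting signal contains no target energy at all. Only the on/off pattern carries information about the speech.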

Wang et al.’08 results • Mean intelligibility scores for the 4 conditions: (97.1%, 92.9%, 54.3%, 7.6%) • Despite a great reduction of spectrotemporal information, a pattern of binary gains is apparently sufficient for human speech recognition

Interim summary • Ideal binary mask is an appropriate computational goal of auditory scene analysis in general, and speech segregation in particular • Hence solving the cocktail party problem would amount to binary classification • This formulation opens the problem to a variety of pattern classification methods

Outline of presentation • Cocktail party problem • Computational theory analysis • Ideal binary mask • Speech intelligibility tests • Unvoiced speech segregation as binary classification

Unvoiced speech • Speech sounds consist of vowels and consonants; consonants further consist of voiced and unvoiced consonants • For English, unvoiced speech sounds come from the following consonant categories: • Stops (plosives) • Unvoiced: /p/ (pool), /t/ (tool), and /k/ (cake) • Voiced: /b/ (book), /d/ (day), and /g/ (gate) • Fricatives • Unvoiced: /s/ (six), /sh/ (sheep), /f/ (fix), and /th/ (thin) • Voiced: /z/ (zoo), /zh/ (pleasure), /v/ (vine), and /dh/ (that) • Mixed: /h/ (high) • Affricates (stop followed by fricative) • Unvoiced: /ch/ (chicken) • Voiced: /jh/ (orange) • We refer to the above consonants as expanded obstruents

Unvoiced speech segregation • Unvoiced speech constitutes 20-25% of all speech sounds • It carries crucial information for speech intelligibility • Unvoiced speech is more difficult to segregate than voiced speech • Voiced speech is highly structured, whereas unvoiced speech lacks harmonicity and is often noise-like • Unvoiced speech is usually much weaker than voiced speech and therefore more susceptible to interference

Processing stages of Hu-Wang’08 model • Peripheral processing results in a two-dimensional cochleagram

Auditory segmentation • Auditory segmentation decomposes an auditory scene into contiguous time-frequency (T-F) regions (segments), each of which should contain signal mostly from the same sound source • The definition of segmentation applies to both voiced and unvoiced speech • Segmentation is equivalent to identifying the onsets and offsets of individual T-F segments, which correspond to sudden changes of acoustic energy • Our segmentation is based on a multiscale onset/offset analysis (Hu & Wang’07) • Smoothing along time and frequency dimensions • Onset/offset detection and onset/offset front matching • Multiscale integration
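The smoothing and onset/offset detection steps can be illustrated on a single frequency channel. The sketch below is a simplified stand-in that smooths only along time with a Gaussian kernel and marks peaks and valleys of the slope. It omits frequency smoothing, front matching across channels, and the integration across scales. The kernel choice, threshold, and toy signal are all assumptions made for illustration.

```python
import numpy as np

def gaussian_kernel(sigma):
    """Normalized Gaussian kernel truncated at about three standard deviations."""
    radius = max(1, int(round(3 * sigma)))
    offsets = np.arange(-radius, radius + 1)
    kernel = np.exp(-0.5 * (offsets / sigma) ** 2)
    return kernel / kernel.sum()

def smooth(intensity, sigma):
    """Smooth a 1-D intensity contour over time, keeping its length."""
    kernel = gaussian_kernel(sigma)
    radius = len(kernel) // 2
    padded = np.pad(np.asarray(intensity, dtype=float), radius, mode="edge")
    return np.convolve(padded, kernel, mode="valid")

def detect_onsets_offsets(intensity, sigma, threshold):
    """Onsets are slope peaks above threshold; offsets are valleys below -threshold."""
    slope = np.diff(smooth(intensity, sigma))
    onsets, offsets = [], []
    for i in range(1, len(slope) - 1):
        if slope[i] > threshold and slope[i] >= slope[i - 1] and slope[i] > slope[i + 1]:
            onsets.append(i)
        if slope[i] < -threshold and slope[i] <= slope[i - 1] and slope[i] < slope[i + 1]:
            offsets.append(i)
    return onsets, offsets

# Toy intensity contour: one long, strong event and one brief, weak event.
intensity = np.zeros(100)
intensity[30:60] = 10.0
intensity[80:82] = 3.0

# Coarse-to-fine analysis: larger sigma means heavier smoothing.
results = {sigma: detect_onsets_offsets(intensity, sigma, threshold=0.5)
           for sigma in (4.0, 2.0, 1.0)}
for sigma, (onsets, offsets) in results.items():
    print(sigma, onsets, offsets)
```

In this toy case the coarse scales find only the long event, while the finest scale also picks up the brief one. This is the motivation for combining several scales.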

Smoothed intensity Utterance: “That noise problem grows more annoying each day” Interference: Crowd noise in a playground. Mixed at 0 dB SNR Scale in freq. and time: (a) (0, 0), initial intensity. (b) (2, 1/14). (c) (6, 1/14). (d) (6, 1/4)

Segmentation result • The bounding contours of estimated segments from multiscale analysis. The background is represented by blue: • One scale analysis • Two-scale analysis • Three-scale analysis • Four-scale analysis • The ideal binary mask • The mixture

Grouping • Apply auditory segmentation to generate all segments for the entire mixture • Segregate voiced speech using an existing algorithm • Identify segments dominated by voiced target using segregated voiced speech • Identify segments dominated by unvoiced speech based on speech/nonspeech classification • Assuming nonspeech interference due to the lack of sequential organization
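The third grouping step, identifying segments dominated by the voiced target, can be sketched as an energy-overlap test between each segment and the mask of segregated voiced speech. The majority-energy rule and the 0.5 threshold below are illustrative assumptions. The slides do not state the exact criterion.

```python
import numpy as np

def voiced_dominated_segments(segment_labels, voiced_mask, energy, min_fraction=0.5):
    """Return labels of segments whose energy lies mostly in voiced-target units.

    segment_labels: integer array, 0 for background and k > 0 for segment k.
    voiced_mask: boolean array marking units assigned to segregated voiced speech.
    energy: mixture energy per T-F unit.
    min_fraction: hypothetical dominance threshold.
    """
    labels = np.asarray(segment_labels)
    voiced = np.asarray(voiced_mask, dtype=bool)
    energy = np.asarray(energy, dtype=float)
    dominated = []
    for label in np.unique(labels):
        if label == 0:
            continue  # skip background
        in_segment = labels == label
        total = energy[in_segment].sum()
        covered = energy[in_segment & voiced].sum()
        if total > 0 and covered / total > min_fraction:
            dominated.append(int(label))
    return dominated

# Toy 2x4 T-F grid with two segments.
labels = np.array([[1, 1, 0, 2],
                   [1, 0, 2, 2]])
voiced = np.array([[True, True, False, False],
                   [False, False, False, True]])
unit_energy = np.ones((2, 4))

voiced_segments = voiced_dominated_segments(labels, voiced, unit_energy)
print(voiced_segments)
```

Segments that fail this test are the candidates passed on to speech/nonspeech classification.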

Speech/nonspeech classification • A T-F segment s is classified as speech if P(H0 | Xs) > P(H1 | Xs) • Xs: The energy of all the T-F units within segment s • H0: The hypothesis that s is dominated by expanded obstruents • H1: The hypothesis that s is interference dominant

Speech/nonspeech classification (cont.) • By Bayes’ rule, the criterion P(H0 | Xs) > P(H1 | Xs) is equivalent to P(H0) p(Xs | H0) > P(H1) p(Xs | H1) • Since segments have varied durations, directly evaluating the above likelihoods is computationally infeasible • Instead, we assume that each time frame within a segment is statistically independent given a hypothesis • A multilayer perceptron is trained to distinguish expanded obstruents from nonspeech interference
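Under the frame-independence assumption, the segment likelihood factorizes into per-frame terms, so the decision reduces to a sum of per-frame log-likelihood ratios plus the log prior ratio. The sketch below assumes a frame-level classifier that outputs P(H0 | frame) and converts those posteriors to likelihood ratios by dividing out the classifier's training prior. This is one standard way to combine frame posteriors and is not confirmed to be the exact formula used in the model. The names and the `training_prior_h0` parameter are hypothetical.

```python
import numpy as np

def classify_segment(frame_posteriors_h0, prior_ratio, training_prior_h0=0.5, eps=1e-12):
    """Return True if the segment is classified as expanded obstruents (H0).

    frame_posteriors_h0: P(H0 | frame) for each frame of the segment,
        e.g. outputs of a trained frame-level classifier.
    prior_ratio: P(H0) / P(H1) to use at test time.
    training_prior_h0: P(H0) in the classifier's training data (assumption).
    """
    p = np.clip(np.asarray(frame_posteriors_h0, dtype=float), eps, 1.0 - eps)
    posterior_log_odds = np.log(p) - np.log1p(-p)
    training_log_odds = np.log(training_prior_h0) - np.log1p(-training_prior_h0)
    # Posterior odds divided by prior odds gives the frame likelihood ratio.
    frame_log_likelihood_ratio = posterior_log_odds - training_log_odds
    segment_log_odds = np.log(prior_ratio) + frame_log_likelihood_ratio.sum()
    return bool(segment_log_odds > 0.0)

# Hypothetical frame posteriors for three segments.
speech_like = classify_segment([0.9, 0.8, 0.7], prior_ratio=1.0)
noise_like = classify_segment([0.4, 0.3, 0.45], prior_ratio=1.0)
weak_evidence_low_prior = classify_segment([0.6, 0.6], prior_ratio=0.1)
print(speech_like, noise_like, weak_evidence_low_prior)
```

Working in the log domain keeps the product over frames numerically stable for long segments. It also makes the influence of the prior ratio explicit.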

Speech/nonspeech classification (cont.) • The prior probability ratio, P(H0)/P(H1), is found to be approximately linear with respect to input SNR • Assuming that interference energy does not vary greatly over the duration of an utterance, earlier segregation of voiced speech enables us to estimate input SNR
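A rough sketch of this step: estimate the interference energy from frames where no voiced target was found, extrapolate it over the utterance using the stated near-constant-interference assumption, and map the resulting SNR to a prior ratio with a linear function. The estimator, the slope and intercept values, and the positive floor are all hypothetical. The slides give neither the estimator nor the fitted coefficients.

```python
import numpy as np

def estimate_input_snr_db(mixture_energy, voiced_mask):
    """Estimate input SNR from mixture energies and a voiced-speech mask.

    mixture_energy: array of shape (channels, frames).
    voiced_mask: boolean array of the same shape, True for voiced-target units.
    Requires at least one frame without any voiced-target unit.
    """
    mixture_energy = np.asarray(mixture_energy, dtype=float)
    voiced_frames = np.asarray(voiced_mask, dtype=bool).any(axis=0)
    frame_energy = mixture_energy.sum(axis=0)
    # Interference level per frame, taken from frames with no voiced target.
    noise_per_frame = frame_energy[~voiced_frames].mean()
    noise_total = noise_per_frame * frame_energy.size
    target_total = max(frame_energy.sum() - noise_total, 1e-12)
    return float(10.0 * np.log10(target_total / noise_total))

def prior_ratio_from_snr(snr_db, slope, intercept, floor=1e-3):
    """Linear map from input SNR to P(H0)/P(H1), floored to stay positive."""
    return max(slope * snr_db + intercept, floor)

# Toy mixture: unit-energy noise everywhere, voiced target in two frames.
mixture = np.array([[10.0, 10.0, 1.0, 1.0],
                    [1.0, 1.0, 1.0, 1.0]])
voiced = np.array([[True, True, False, False],
                   [False, False, False, False]])

snr_estimate = estimate_input_snr_db(mixture, voiced)
ratio = prior_ratio_from_snr(snr_estimate, slope=0.05, intercept=0.5)  # made-up coefficients
print(f"{snr_estimate:.2f} dB -> prior ratio {ratio:.3f}")
```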

Speech/nonspeech classification (cont.) • With estimated input SNR, each segment is then classified as either expanded obstruents or interference • Segments classified as expanded obstruents join the segregated voiced speech to produce the final output

Example of segregation Utterance: “That noise problem grows more annoying each day” Interference: Crowd noise in a playground (IBM: Ideal binary mask)

SNR of segregated target • Compared to spectral subtraction assuming perfect speech pause detection
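For reference, the comparison baseline can be sketched as basic power spectral subtraction in which the noise spectrum is averaged over frames known to be speech pauses. This is a generic textbook form with a spectral floor, not necessarily the variant used in the comparison. The function name, the floor value, and the toy data are illustrative.

```python
import numpy as np

def spectral_subtraction(power_spec, pause_frames, floor=0.01):
    """Subtract a noise power estimate taken from known speech-pause frames.

    power_spec: array of shape (frequency_bins, frames) with mixture power.
    pause_frames: boolean array of length frames, True where speech is absent.
    floor: fraction of the mixture power kept as a lower bound (assumption).
    """
    power_spec = np.asarray(power_spec, dtype=float)
    pause_frames = np.asarray(pause_frames, dtype=bool)
    noise_estimate = power_spec[:, pause_frames].mean(axis=1, keepdims=True)
    return np.maximum(power_spec - noise_estimate, floor * power_spec)

# Toy power spectrogram: frames 1 and 2 are pauses containing noise only.
mixture_power = np.array([[5.0, 1.0, 1.0, 6.0],
                          [3.0, 2.0, 2.0, 4.0]])
pauses = np.array([False, True, True, False])

enhanced = spectral_subtraction(mixture_power, pauses)
print(enhanced)
```

Because the noise estimate is a time average, this baseline implicitly assumes stationary interference.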

Conclusion • Analysis of ideal binary mask as CASA goal • Formulation of the cocktail party problem as binary classification • Segregation of unvoiced speech based on segment classification • The proposed model represents the first systematic study on unvoiced speech segregation

Credits • Speech intelligibility tests of IBM: Joint with Ulrik Kjems, Michael S. Pedersen, Jesper Boldt, and Thomas Lunner, at Oticon • Unvoiced speech segregation: Joint with Guoning Hu