CURVE FITTING Student Notes

CURVE FITTING Student Notes. ENGR 351 Numerical Methods for Engineers, Southern Illinois University Carbondale, College of Engineering. Instructor: L.R. Chevalier, Ph.D., P.E. Applications. Need to determine parameters for saturation-growth rate model to characterize microbial kinetics.



CURVE FITTING Student Notes ENGR 351 Numerical Methods for Engineers Southern Illinois University Carbondale College of Engineering Instructor: L.R. Chevalier, Ph.D., P.E.

Applications Need to determine parameters for a saturation-growth rate model to characterize microbial kinetics. [Figure: specific growth rate, μ, versus food available, S]

Applications [Figure: layers of a stratified lake: epilimnion, thermocline, hypolimnion]

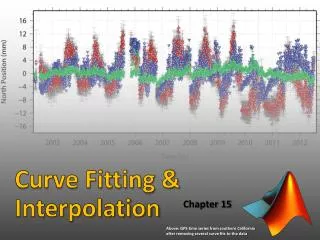

Applications Interpolation of data: What is the kinematic viscosity at 7.5 °C?

We want to find the best “fit” of a curve through the data. Here we see: a) Least squares fit b) Linear interpolation. Can you suggest another? [Figure: f(x) versus x]

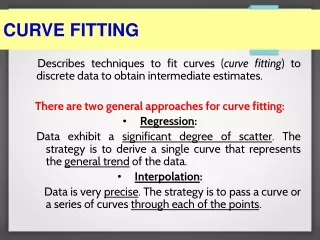

Material to be Covered in Curve Fitting • Linear Regression • Polynomial Regression • Multiple Regression • General linear least squares • Nonlinear regression • Interpolation • Lagrange polynomial • Coefficients of polynomials (Collocation-Polynomial Fit) • Splines

Specific Study Objectives • Understand the fundamental difference between regression and interpolation and realize why confusing the two could lead to serious problems • Understand the derivation of linear least squares regression and be able to assess the reliability of the fit using graphical and quantitative assessments.

Specific Study Objectives • Know how to linearize data by transformation • Understand situations where polynomial, multiple and nonlinear regression are appropriate • Understand the general matrix formulation of linear least squares • Understand that there is one and only one polynomial of degree n or less that passes exactly through n+1 points

Specific Study Objectives • Realize that more accurate results are obtained if data used for interpolation is centered around and close to the unknown point • Recognize the liabilities and risks associated with extrapolation • Understand why spline functions have utility for data with local areas of abrupt change

Least Squares Regression • Simplest is fitting a straight line to a set of paired observations • $(x_1, y_1), (x_2, y_2), \ldots, (x_n, y_n)$ • The resulting mathematical expression is • $y = a_0 + a_1 x + e$ • We will consider the error introduced at each data point to develop a strategy for determining the “best fit” equations

Determining the Coefficients Let’s consider where this comes from.

Sum of the Residual Error, $S_r$ [Figure: data points and a fitted line, f(x) versus x]

$$S_r = \sum_{i=1}^{n} e_i^2 = \sum_{i=1}^{n} \left( y_i - a_0 - a_1 x_i \right)^2$$

Note: In this equation $y_i$ is the raw data point (dependent data) associated with $x_i$ (independent data). A line that models this data is: $y = a_0 + a_1 x$

Determining the Coefficients What mathematical technique will minimize $S_r$? To determine the values for $a_0$ and $a_1$, differentiate $S_r$ with respect to each coefficient. Note: we have simplified the summation symbols.

Determining the Coefficients Setting the derivatives equal to zero will minimize $S_r$. If this is done, the equations can be expressed as: $0 = \sum y_i - \sum a_0 - \sum a_1 x_i$ and $0 = \sum x_i y_i - \sum a_0 x_i - \sum a_1 x_i^2$

Determining the Coefficients Note: We have two simultaneous equations with two unknowns, $a_0$ and $a_1$. What are these equations? (Hint: only place terms with $a_0$ and $a_1$ on the LHS of the equations.) What are the final equations for $a_0$ and $a_1$?

Determining the Coefficients These first two equations are called the normal equations
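The equation images from these slides did not survive in the transcript. As a sketch of the standard derivation they walk through (using the same symbols, with all sums running over $i = 1, \ldots, n$), starting from $S_r = \sum (y_i - a_0 - a_1 x_i)^2$:

$$\frac{\partial S_r}{\partial a_0} = -2 \sum \left( y_i - a_0 - a_1 x_i \right), \qquad \frac{\partial S_r}{\partial a_1} = -2 \sum \left( y_i - a_0 - a_1 x_i \right) x_i$$

Setting both derivatives to zero and moving the terms with $a_0$ and $a_1$ to the left-hand side gives the normal equations:

$$n\,a_0 + \left( \sum x_i \right) a_1 = \sum y_i$$

$$\left( \sum x_i \right) a_0 + \left( \sum x_i^2 \right) a_1 = \sum x_i y_i$$

Solving the pair simultaneously:

$$a_1 = \frac{n \sum x_i y_i - \sum x_i \sum y_i}{n \sum x_i^2 - \left( \sum x_i \right)^2}, \qquad a_0 = \bar{y} - a_1 \bar{x}$$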

Example Determine the linear equation for the following data

Strategy • Set up a table • From these values determine the average values of x and y ($\bar{x}$ and $\bar{y}$) • Calculate $a_0$ and $a_1$
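The strategy above can be sketched in Python. The data table from the slide is not in the transcript, so the values below are hypothetical and used for illustration only. The function name `linear_fit` is also an assumption of this sketch.

```python
def linear_fit(xs, ys):
    """Least-squares straight line y = a0 + a1*x from the column sums of a table."""
    n = len(xs)
    sum_x = sum(xs)
    sum_y = sum(ys)
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    sum_x2 = sum(x * x for x in xs)
    # Slope from the normal equations
    a1 = (n * sum_xy - sum_x * sum_y) / (n * sum_x2 - sum_x ** 2)
    # Intercept from the averages: a0 = y_bar - a1 * x_bar
    a0 = sum_y / n - a1 * sum_x / n
    return a0, a1


# Hypothetical data for illustration only (not the table from the slide)
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.2, 4.1, 5.9, 8.1, 9.9]
a0, a1 = linear_fit(xs, ys)
print(f"y = {a0:.4f} + {a1:.4f} x")
```

The sums computed inside the function correspond to the column totals of the table in the first step of the strategy.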

Error The most common measure of the “spread” of a sample is the standard deviation about the mean: $s_y = \sqrt{\dfrac{S_t}{n - 1}}$, where $S_t = \sum (y_i - \bar{y})^2$

Error Coefficient of determination $r^2$: $r^2 = \dfrac{S_t - S_r}{S_t}$, where $r$ is the correlation coefficient

Error The following signifies that the line explains 100 percent of the variability of the data: $S_r = 0$, $r = r^2 = 1$. If $r = r^2 = 0$, then $S_r = S_t$ and the fit represents no improvement over simply using the mean.

Example Determine the $R^2$ value for the following data

Strategy • Complete table • Calculate $S_r$, $S_t$ • Determine $R^2$
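A minimal sketch of this calculation follows. It assumes the usual definitions $S_t = \sum (y_i - \bar{y})^2$, $S_r = \sum (y_i - a_0 - a_1 x_i)^2$ and $R^2 = (S_t - S_r)/S_t$. The data and the coefficients 0.22 and 1.94 are hypothetical (a least-squares line for those illustrative points), and the function name is an assumption.

```python
def r_squared(xs, ys, a0, a1):
    """Return (R^2, Sr, St) for the line y = a0 + a1*x."""
    y_bar = sum(ys) / len(ys)
    # St: spread of the data about its mean
    s_t = sum((y - y_bar) ** 2 for y in ys)
    # Sr: spread of the data about the fitted line
    s_r = sum((y - a0 - a1 * x) ** 2 for x, y in zip(xs, ys))
    return (s_t - s_r) / s_t, s_r, s_t


# Hypothetical data and fitted coefficients, for illustration only
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.2, 4.1, 5.9, 8.1, 9.9]
r2, s_r, s_t = r_squared(xs, ys, a0=0.22, a1=1.94)
print(f"Sr = {s_r:.4f}, St = {s_t:.4f}, R^2 = {r2:.5f}")
```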

Linearization of non-linear relationships Some data is simply ill-suited for linear least squares regression... or so it appears. [Figure: f(x) versus x]

EXPONENTIAL EQUATIONS $P = P_0 e^{rt}$ Linearize: $\ln P = \ln P_0 + r t$, so a plot of ln P versus t has intercept = $\ln P_0$ and slope = $r$. Why?

Can you see the similarity with the equation for a line: $y = a_0 + a_1 x$? [Figure: ln P versus t, intercept = $\ln P_0$, slope = $r$]

• After taking the natural log of the y-data, perform linear regression. • From this regression: • The value of $a_0$ will give us $\ln(P_0)$. Hence, $P_0 = e^{a_0}$ • The value of $a_1$ will give us $r$ directly.
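These steps can be sketched as follows, assuming the model $P = P_0 e^{rt}$. The data are synthetic, generated from hypothetical values $P_0 = 100$ and $r = 0.3$, so the regression should recover those numbers. The function name `fit_exponential` is an assumption.

```python
import math


def fit_exponential(ts, ps):
    """Fit P = P0 * exp(r*t) by linear regression of ln(P) on t."""
    ln_p = [math.log(p) for p in ps]  # transform the y-data
    n = len(ts)
    sum_t, sum_y = sum(ts), sum(ln_p)
    sum_ty = sum(t * y for t, y in zip(ts, ln_p))
    sum_t2 = sum(t * t for t in ts)
    a1 = (n * sum_ty - sum_t * sum_y) / (n * sum_t2 - sum_t ** 2)
    a0 = (sum_y - a1 * sum_t) / n
    # a0 = ln(P0), so P0 = e^a0; a1 is r directly
    return math.exp(a0), a1


# Synthetic data from hypothetical P0 = 100, r = 0.3 (illustration only)
ts = [0.0, 1.0, 2.0, 3.0, 4.0]
ps = [100.0 * math.exp(0.3 * t) for t in ts]
p0, r = fit_exponential(ts, ps)
print(f"P0 = {p0:.3f}, r = {r:.3f}")
```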

POWER EQUATIONS (Flow over a weir) $Q = c H^a$ Here we linearize the equation by taking the log of the H and Q data. What are the resulting intercept and slope? [Figure: Q versus H, and log Q versus log H]

$\log Q = \log c + a \log H$ [Figure: log Q versus log H, slope = $a$, intercept = $\log c$]

So how do we get c and a from performing regression on the log H vs log Q data? From $y = a_0 + a_1 x$: $a_0 = \log c$, so $c = 10^{a_0}$, and $a_1 = a$

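SATURATION-GROWTH RATE EQUATION $\mu = \mu_{max} \dfrac{S}{K_s + S}$ Here, $\mu$ is the growth rate of a microbial population, $\mu_{max}$ is the maximum growth rate, $S$ is the substrate or food concentration, and $K_s$ is the substrate concentration at which $\mu = \mu_{max}/2$. Linearize: $\dfrac{1}{\mu} = \dfrac{K_s}{\mu_{max}} \dfrac{1}{S} + \dfrac{1}{\mu_{max}}$ [Figure: μ versus S, and 1/μ versus 1/S with slope = $K_s/\mu_{max}$ and intercept = $1/\mu_{max}$]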
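A sketch of this linearization in Python follows. It assumes the model $\mu = \mu_{max} S/(K_s + S)$ and regresses $1/\mu$ on $1/S$. The data are synthetic, generated from hypothetical values $\mu_{max} = 2$ and $K_s = 5$, and the function name is an assumption.

```python
def fit_saturation_growth(s_vals, mu_vals):
    """Estimate (mu_max, Ks) by linear regression of 1/mu on 1/S."""
    xs = [1.0 / s for s in s_vals]
    ys = [1.0 / mu for mu in mu_vals]
    n = len(xs)
    sum_x, sum_y = sum(xs), sum(ys)
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    sum_x2 = sum(x * x for x in xs)
    slope = (n * sum_xy - sum_x * sum_y) / (n * sum_x2 - sum_x ** 2)
    intercept = (sum_y - slope * sum_x) / n
    mu_max = 1.0 / intercept  # intercept = 1 / mu_max
    ks = slope * mu_max       # slope = Ks / mu_max
    return mu_max, ks


# Synthetic data from hypothetical mu_max = 2, Ks = 5 (illustration only)
s_data = [1.0, 2.0, 5.0, 10.0, 20.0]
mu_data = [2.0 * s / (5.0 + s) for s in s_data]
mu_max, ks = fit_saturation_growth(s_data, mu_data)
print(f"mu_max = {mu_max:.3f}, Ks = {ks:.3f}")
```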

Example • Given the data below, determine the coefficients a and b for the equation $y = a x^b$

Strategy • Start a table. For $y = a x^b$ you need a log-log table and graph. • Perform linear regression on the log-log data • Based on $y = a_0 + a_1 x$, calculate • $\log a = a_0$, therefore $a = 10^{a_0}$ • $b = a_1$
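The strategy above might look like this in Python. The slide's data table is not in the transcript, so the points below are synthetic, generated from hypothetical values $a = 2.5$ and $b = 1.5$. The function name `fit_power` is an assumption.

```python
import math


def fit_power(xs, ys):
    """Fit y = a * x**b by linear regression of log10(y) on log10(x)."""
    lx = [math.log10(x) for x in xs]  # requires x > 0
    ly = [math.log10(y) for y in ys]  # requires y > 0
    n = len(lx)
    sum_x, sum_y = sum(lx), sum(ly)
    sum_xy = sum(u * v for u, v in zip(lx, ly))
    sum_x2 = sum(u * u for u in lx)
    a1 = (n * sum_xy - sum_x * sum_y) / (n * sum_x2 - sum_x ** 2)
    a0 = (sum_y - a1 * sum_x) / n
    # log a = a0, so a = 10^a0; b = a1
    return 10.0 ** a0, a1


# Synthetic data from hypothetical a = 2.5, b = 1.5 (illustration only)
xs = [1.0, 2.0, 4.0, 8.0]
ys = [2.5 * x ** 1.5 for x in xs]
a, b = fit_power(xs, ys)
print(f"y = {a:.3f} x^{b:.3f}")
```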

Residual Error Linear: $y = a_0 + a_1 x$ Power: $y = a x^b$ Exponential: $y = a e^{bx}$

Example Given the following results, determine $S_r$.

Strategy • Calculate $e^2 = (y_i - y_{model})^2$ for each (x, y) pair • Determine $\sum e^2 = S_r$
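A short sketch of this calculation follows. The residuals are taken in the original (untransformed) units by evaluating the fitted model at each x. The data and the model $y = 2x^2$ are hypothetical, chosen only so the arithmetic is easy to follow.

```python
def residual_sum_of_squares(xs, ys, model):
    """Sr = sum of (y_i - y_model)^2, with y_model = model(x_i)."""
    return sum((y - model(x)) ** 2 for x, y in zip(xs, ys))


def power_model(x):
    """Hypothetical fitted power model y = 2 * x^2 (illustration only)."""
    return 2.0 * x ** 2


# Hypothetical data; the residuals are 0.1, -0.2 and 0.3
xs = [1.0, 2.0, 3.0]
ys = [2.1, 7.8, 18.3]
s_r = residual_sum_of_squares(xs, ys, power_model)
print(f"Sr = {s_r:.4f}")
```

Passing the model as a function lets the same routine serve the linear, power, and exponential forms.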

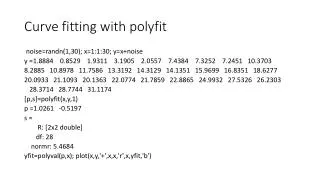

Demonstration with Excel See: 2012-L4-NonlinearRegressionExample.xlsx

General Comments of Linear Regression • You should be cognizant of the fact that there are theoretical aspects of regression that are of practical importance but are beyond the scope of this book • Statistical assumptions are inherent in the linear least squares procedure

General Comments of Linear Regression • x has a fixed value; it is not random and is measured without error • The y values are independent random variables and all have the same variance • The y values for a given x must be normally distributed

General Comments of Linear Regression • The regression of y versus x is not the same as x versus y • The error of y versus x is not the same as x versus y

General Comments of Linear Regression [Figure: error measured in the y-direction versus error measured in the x-direction, f(x) versus x]
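The point that regressing y on x differs from regressing x on y can be checked numerically. In this sketch the data are hypothetical and deliberately scattered. Fitting x on y and then inverting that line gives a different slope than fitting y on x directly, because each fit minimizes the error in a different direction.

```python
def slope_and_intercept(us, vs):
    """Least-squares line v = c0 + c1*u (error measured in the v-direction)."""
    n = len(us)
    sum_u, sum_v = sum(us), sum(vs)
    sum_uv = sum(u * v for u, v in zip(us, vs))
    sum_u2 = sum(u * u for u in us)
    c1 = (n * sum_uv - sum_u * sum_v) / (n * sum_u2 - sum_u ** 2)
    c0 = (sum_v - c1 * sum_u) / n
    return c0, c1


# Hypothetical scattered data, for illustration only
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [1.0, 3.5, 2.5, 5.0, 4.5]

_, slope_y_on_x = slope_and_intercept(xs, ys)  # minimizes error in y
_, slope_x_on_y = slope_and_intercept(ys, xs)  # minimizes error in x
implied_slope = 1.0 / slope_x_on_y             # x-on-y line rewritten as y(x)

print(f"y on x slope: {slope_y_on_x:.4f}")
print(f"x on y, inverted: {implied_slope:.4f}")
```

The two slopes coincide only when the data fall exactly on a straight line.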

Polynomial Regression • One of the reasons you were presented with the theory behind linear regression was to give you insight into similar procedures for higher order polynomials • $y = a_0 + a_1 x$ • mth-degree polynomial • $y = a_0 + a_1 x + a_2 x^2 + \cdots + a_m x^m + e$

Polynomial Regression Based on the sum of the squares of the residuals: $S_r = \sum \left( y_i - a_0 - a_1 x_i - a_2 x_i^2 - \cdots - a_m x_i^m \right)^2$
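Minimizing the sum of squared residuals for an mth-degree polynomial leads to m + 1 normal equations, by the same procedure as for the straight line. The sketch below builds those equations and solves them with Gaussian elimination. It is one possible implementation and is not taken from the slides. The function name and the synthetic data (generated from a hypothetical $y = 1 + 2x + 3x^2$) are assumptions.

```python
def polyfit_normal_equations(xs, ys, m):
    """Least-squares coefficients [a0, ..., am] of an m-th degree polynomial."""
    size = m + 1
    # Row j of the normal equations: sum_k (sum_i x_i^(j+k)) a_k = sum_i x_i^j y_i
    aug = [
        [sum(x ** (j + k) for x in xs) for k in range(size)]
        + [sum((x ** j) * y for x, y in zip(xs, ys))]
        for j in range(size)
    ]
    # Forward elimination with partial pivoting
    for col in range(size):
        pivot = max(range(col, size), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, size):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, size + 1):
                aug[r][c] -= factor * aug[col][c]
    # Back substitution
    coeffs = [0.0] * size
    for r in range(size - 1, -1, -1):
        known = sum(aug[r][c] * coeffs[c] for c in range(r + 1, size))
        coeffs[r] = (aug[r][size] - known) / aug[r][r]
    return coeffs


# Synthetic data from hypothetical y = 1 + 2x + 3x^2 (illustration only)
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0 + 2.0 * x + 3.0 * x ** 2 for x in xs]
coeffs = polyfit_normal_equations(xs, ys, 2)
print([round(c, 6) for c in coeffs])
```

With m = 1 the same routine reduces to the straight-line fit. For high degrees the normal equations become ill-conditioned, so this direct approach is best kept to low-order polynomials.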