Correlation

Correlation A bit about Pearson’s r

Questions • Why does the maximum value of r equal 1.0? • What does it mean when a correlation is positive? Negative? • What is the purpose of the Fisher r to z transformation? • What is range restriction? Range enhancement? What do they do to r? • Give an example in which data properly analyzed by ANOVA cannot be used to infer causality. • Why do we care about the sampling distribution of the correlation coefficient? • What is the effect of reliability on r?

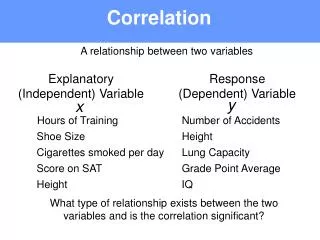

Basic Ideas • Nominal vs. continuous IV • Degree (direction) & closeness (magnitude) of linear relations • Sign (+ or -) for direction • Absolute value for magnitude • Pearson product-moment correlation coefficient

Illustrations Positive, negative, zero

Simple Formulas Use either N throughout or else use N-1 throughout (SD and denominator); result is the same as long as you are consistent. Pearson’s r is the average cross product of z scores. Product of (standardized) moments from the means.
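The "average cross product of z scores" idea can be sketched in a few lines of Python. This is a minimal illustration, not the slide's own formula (which did not survive in the transcript); the function name `pearson_r`, the `ddof` switch, and the five data points are all hypothetical. With `ddof=0` it uses N throughout, with `ddof=1` it uses N-1 throughout, and the two agree.

```python
import math

def pearson_r(x, y, ddof=0):
    """Pearson's r as the average cross product of z scores.

    ddof=0 divides by N everywhere; ddof=1 divides by N-1 everywhere.
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sd_x = math.sqrt(sum((v - mean_x) ** 2 for v in x) / (n - ddof))
    sd_y = math.sqrt(sum((v - mean_y) ** 2 for v in y) / (n - ddof))
    zx = [(v - mean_x) / sd_x for v in x]
    zy = [(v - mean_y) / sd_y for v in y]
    # Average of the z-score cross products, same divisor as the SDs
    return sum(a * b for a, b in zip(zx, zy)) / (n - ddof)

# Hypothetical data, for illustration only
x = [1, 2, 3, 4, 5]
y = [2, 1, 4, 3, 5]

print(pearson_r(x, y, ddof=0))  # N throughout
print(pearson_r(x, y, ddof=1))  # N-1 throughout, same r
```

Mixing the two conventions (say, N-1 in the SDs but N in the average) would give a different number, which is why consistency matters.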

Graphic Representation 1. Conversion from raw to z. 2. Points & quadrants. Positive & negative products. 3. Correlation is average of cross products. Sign & magnitude of r depend on where the points fall. 4. Product at maximum (average = 1) when points on line where zX = zY.

Descriptive Statistics

| Variable | N | Minimum | Maximum | Mean | Std. Deviation |
|---|---|---|---|---|---|
| Ht | 10 | 60.00 | 78.00 | 69.0000 | 6.05530 |
| Wt | 10 | 110.00 | 200.00 | 155.0000 | 30.27650 |
| Valid N (listwise) | 10 | | | | |

r = 1.0

r=1 Leave X, add error to Y. r=.99

r=.99 Add more error. r=.91
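The progression from r = 1 toward smaller values can be imitated with a small simulation. The raw data are not shown in the slides, so the heights and weights below are an assumption: ten evenly spaced values chosen to be consistent with the descriptive statistics (Ht from 60 to 78, Wt from 110 to 200). The noise levels and the seed are arbitrary, so the printed correlations will not reproduce the slide's .99 and .91.

```python
import math
import random

def pearson_r(x, y):
    """Pearson's r from deviation cross products."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def r_with_noise(noise_sd, seed=0):
    """Leave X alone, add normal error with the given SD to Y, return r."""
    rng = random.Random(seed)
    ht = list(range(60, 80, 2))        # assumed heights: 60, 62, ..., 78
    wt = [5 * h - 190 for h in ht]     # assumed weights: 110, 120, ..., 200
    noisy_wt = [w + rng.gauss(0, noise_sd) for w in wt]
    return pearson_r(ht, noisy_wt)

for noise_sd in (0, 5, 15):
    print(noise_sd, round(r_with_noise(noise_sd), 3))
```

With no error, every point sits on the line zX = zY and r is 1; any error added to Y moves points off that line and pulls r below 1.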

Review • Why does the maximum value of r equal 1.0? • What does it mean when a correlation is positive? Negative?

Sampling Distribution of r Statistic is r, parameter is ρ (rho). In general, r is slightly biased. The sampling variance is approximately: Sampling variance depends both on N and on ρ.
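The formula on this slide did not survive in the transcript. A commonly cited large-sample approximation, offered here as an assumption about what the slide showed (some texts use N rather than N-1 in the denominator), is:

$$\sigma_r^2 \approx \frac{(1-\rho^2)^2}{N-1}$$

For example, with N = 101 the approximate standard error is .10 when ρ = 0 but about .075 when ρ = .50, which shows the dependence on both N and ρ.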

Fisher’s r to z Transformation

| r | z |
|---|---|
| .10 | .10 |
| .20 | .20 |
| .30 | .31 |
| .40 | .42 |
| .50 | .55 |
| .60 | .69 |
| .70 | .87 |
| .80 | 1.10 |
| .90 | 1.47 |

Sampling distribution of z approaches normal as N increases. The transformation pulls out the short tail to make a better (more nearly normal) distribution. Sampling variance of z = 1/(N-3), which does not depend on ρ.
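The tabled values follow from the standard definition z = 0.5 ln((1 + r)/(1 - r)), which is the inverse hyperbolic tangent of r. A short sketch that regenerates them (the function names are illustrative):

```python
import math

def fisher_z(r):
    """Fisher r to z transformation; identical to math.atanh(r)."""
    return 0.5 * math.log((1 + r) / (1 - r))

def z_standard_error(n):
    """Standard error of Fisher's z; depends on N only, not on rho."""
    return 1 / math.sqrt(n - 3)

for r in (0.10, 0.20, 0.30, 0.40, 0.50, 0.60, 0.70, 0.80, 0.90):
    print(f"r = {r:.2f}  z = {fisher_z(r):.2f}")
```

Note how z stays close to r for small correlations and stretches out as r approaches 1, which is the "pulling out the short tail" described above.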

Hypothesis test 1: Testing whether ρ = 0. The result is compared to t with (N-2) df for significance. Say r = .25 and N = 100. The critical value is t(.05, 98) = 1.984, and the result is significant, p < .05.
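The test statistic itself is missing from the transcript. The usual statistic for testing ρ = 0 against a t distribution with N-2 df is t = r·sqrt(N-2)/sqrt(1-r²); the sketch below assumes that is the one intended and applies it to the slide's numbers. The critical value is copied from the slide rather than computed.

```python
import math

def t_for_r(r, n):
    """t statistic for H0: rho = 0, with n - 2 degrees of freedom."""
    return r * math.sqrt(n - 2) / math.sqrt(1 - r ** 2)

t = t_for_r(0.25, 100)
critical_t = 1.984          # t(.05, 98), two-tailed, as given on the slide

print(round(t, 2), t > critical_t)
```

The computed t is about 2.56, which exceeds 1.984, in line with the slide's p < .05.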

Hypothesis test 2: One sample z test where r is sample value and ρ is hypothesized population value. Say N = 200, r = .54, and ρ = .30. The test gives z = 4.13. Compare to unit normal, e.g., 4.13 > 1.96, so it is significant. We conclude that our sample was not drawn from a population in which rho is .30.
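A sketch of this one-sample test, assuming the standard form in which both r and ρ are converted to Fisher's z and the difference is divided by the standard error sqrt(1/(N-3)). The function name is illustrative.

```python
import math
from statistics import NormalDist

def z_test_r_vs_rho(r, rho, n):
    """z statistic for H0: population correlation equals rho."""
    return (math.atanh(r) - math.atanh(rho)) * math.sqrt(n - 3)

z = z_test_r_vs_rho(0.54, 0.30, 200)
p_two_tailed = 2 * (1 - NormalDist().cdf(abs(z)))

print(round(z, 2), p_two_tailed < 0.05)
```

Carried at full precision the statistic is about 4.14; the slide's 4.13 differs only in the rounding of intermediate values.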

Hypothesis test 3: Testing equality of correlations from 2 INDEPENDENT samples. Say N1 = 150, r1 = .63, N2 = 175, r2 = .70. The test gives z = -1.18, n.s.
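A sketch of the two-sample test, assuming the standard form: the difference between the two Fisher z values divided by sqrt(1/(N1-3) + 1/(N2-3)). It uses the slide's values (r1 = .63, r2 = .70).

```python
import math

def z_test_two_independent(r1, n1, r2, n2):
    """z statistic for H0: rho1 = rho2, independent samples."""
    diff = math.atanh(r1) - math.atanh(r2)
    se = math.sqrt(1 / (n1 - 3) + 1 / (n2 - 3))
    return diff / se

z = z_test_two_independent(0.63, 150, 0.70, 175)
print(round(z, 2), abs(z) > 1.96)
```

At full precision this gives roughly -1.12 rather than the slide's -1.18; the slide's figure is what results if the z difference and its standard error are first rounded to two decimals (-.13/.11). Either way the result is not significant.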

Hypothesis test 4: Testing equality of any number of independent correlations. Compare Q to chi-square with k-1 df. Chi-square at .05 with 2 df = 5.99. Not all rho are equal.
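The data for this example are not in the transcript, so the sketch below uses made-up correlations and sample sizes purely for illustration. It assumes the usual homogeneity statistic: each r is converted to Fisher's z, weighted by N-3, and Q is the weighted sum of squared deviations from the weighted mean z.

```python
import math

def q_homogeneity(rs, ns):
    """Q statistic for H0: all k population correlations are equal.

    Compare the result to chi-square with k - 1 degrees of freedom.
    """
    zs = [math.atanh(r) for r in rs]
    ws = [n - 3 for n in ns]                       # inverse sampling variances
    z_bar = sum(w * z for w, z in zip(ws, zs)) / sum(ws)
    return sum(w * (z - z_bar) ** 2 for w, z in zip(ws, zs))

# Hypothetical values, NOT the slide's example
rs = [0.20, 0.50, 0.70]
ns = [100, 100, 100]

q = q_homogeneity(rs, ns)
critical_chi2 = 5.99        # chi-square at .05 with 2 df, as on the slide
print(round(q, 2), q > critical_chi2)
```

With these illustrative inputs Q is well above 5.99, so the hypothesis of equal correlations would be rejected.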

Hypothesis test 5: dependent r (Hotelling-Williams test). Say N = 101, r12 = .4, r13 = .6, r23 = .3. Critical value t(.05, 98) = 1.98. See my notes.
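The slide defers the formula to the author's notes, which are not part of this transcript. The sketch below uses one commonly cited form of Williams's statistic for comparing two dependent correlations that share a variable; treat it as an assumption about what the notes contain. It has N-3 degrees of freedom, which matches the 98 df on the slide.

```python
import math

def williams_t(r12, r13, r23, n):
    """Williams's t for H0: rho12 = rho13 (dependent correlations), df = n - 3."""
    # Determinant of the 3 x 3 correlation matrix
    det = 1 - r12 ** 2 - r13 ** 2 - r23 ** 2 + 2 * r12 * r13 * r23
    r_bar = (r12 + r13) / 2
    numerator = (n - 1) * (1 + r23)
    denominator = 2 * ((n - 1) / (n - 3)) * det + r_bar ** 2 * (1 - r23) ** 3
    return (r12 - r13) * math.sqrt(numerator / denominator)

t = williams_t(0.4, 0.6, 0.3, 101)
critical_t = 1.98           # t(.05, 98), as given on the slide
print(round(t, 2), abs(t) > critical_t)
```

Under this formula t is about -2.10, so the difference between r12 and r13 would be judged significant at .05.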

Review • What is the purpose of the Fisher r to z transformation? • Test the hypothesis that ρ1 = ρ2, given that r1 = .50, N1 = 103, r2 = .60, N2 = 128, and the samples are independent. • Why do we care about the sampling distribution of the correlation coefficient?

Reliability Reliability sets the ceiling for validity. Measurement error attenuates correlations. If the correlation between true scores is .7 and the reliabilities of X and Y are both .8, the observed correlation is .7*sqrt(.8*.8) = .7*.8 = .56. Disattenuated correlation: If our observed correlation is .56 and the reliabilities of both X and Y are .8, our estimate of the correlation between true scores is .56/sqrt(.8*.8) = .56/.8 = .70.
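Both directions of the reliability arithmetic can be written as a pair of small functions. The function names are illustrative; the formulas are the ones used in the worked numbers above (multiply or divide by the square root of the product of the two reliabilities).

```python
import math

def attenuate(true_r, rel_x, rel_y):
    """Expected observed correlation given the true-score correlation."""
    return true_r * math.sqrt(rel_x * rel_y)

def disattenuate(observed_r, rel_x, rel_y):
    """Estimated true-score correlation given the observed correlation."""
    return observed_r / math.sqrt(rel_x * rel_y)

print(round(attenuate(0.70, 0.8, 0.8), 2))     # observed r
print(round(disattenuate(0.56, 0.8, 0.8), 2))  # estimated true-score r
```

When the two reliabilities differ, the square root matters: with reliabilities of .9 and .6, for instance, the multiplier is sqrt(.54), about .73, not the average of the two.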

Review • What is range restriction? Range enhancement? What do they do to r? • What is the effect of reliability on r?

SAS Power Estimation

    proc power;
      onecorr dist=fisherz
        corr = 0.35
        nullcorr = 0.2
        sides = 1
        ntotal = 100
        power = .;
    run;

Computed Power: Actual alpha = .05, Power = .486

    proc power;
      onecorr
        corr = 0.35
        nullcorr = 0
        sides = 2
        ntotal = .
        power = .8;
    run;

Computed N Total: Alpha = .05, Actual Power = .801, Ntotal = 61
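For readers without SAS, the same two questions can be approximated with the plain Fisher z normal approximation. This is a rough sketch, not a reimplementation of proc power: SAS uses refinements that this code omits, so small differences from the output above are expected. Function names are illustrative.

```python
import math
from statistics import NormalDist

def power_one_sided(r_alt, r_null, n, alpha=0.05):
    """Approximate power for H0: rho = r_null vs. H1: rho > r_null."""
    nd = NormalDist()
    shift = (math.atanh(r_alt) - math.atanh(r_null)) * math.sqrt(n - 3)
    return 1 - nd.cdf(nd.inv_cdf(1 - alpha) - shift)

def n_two_sided(r_alt, power=0.8, alpha=0.05):
    """Approximate total N for a two-sided test of H0: rho = 0."""
    nd = NormalDist()
    z_alpha = nd.inv_cdf(1 - alpha / 2)
    z_beta = nd.inv_cdf(power)
    return math.ceil(((z_alpha + z_beta) / math.atanh(r_alt)) ** 2 + 3)

print(round(power_one_sided(0.35, 0.20, 100), 3))
print(n_two_sided(0.35))
```

The approximation lands near the SAS results (power about .48 against .486, and N of 62 against 61), which is close enough for planning purposes.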

Power for Correlations Sample sizes required for powerful conventional significance tests for typical values of the correlation coefficient in psychology. Power = .8, two tails, alpha is .05.