Why Parallel/Distributed Computing



Why Parallel/Distributed Computing Sushil K. Prasad sprasad@gsu.edu

What is Parallel and Distributed computing? • Solving a single problem faster using multiple CPUs • E.g., matrix multiplication C = A × B • Parallel = memory shared among all CPUs • Distributed = local memory per CPU • Common issues: partitioning, synchronization, dependencies, load balancing
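As a rough illustration of the partitioning issue, the sketch below splits the rows of C = A × B into contiguous blocks and hands each block to a worker. The names `multiply_rows` and `parallel_matmul` are hypothetical, and Python threads are used only to show the structure: in standard CPython they share memory but do not run this arithmetic in parallel.

```python
from concurrent.futures import ThreadPoolExecutor


def multiply_rows(A, B, rows):
    """Compute only the rows of C = A x B whose indices are in `rows`."""
    cols = range(len(B[0]))
    inner = range(len(B))
    return [[sum(A[i][k] * B[k][j] for k in inner) for j in cols] for i in rows]


def parallel_matmul(A, B, workers=2):
    """Row-partitioned matrix multiplication (illustrative sketch)."""
    n = len(A)
    # Partition: contiguous blocks of row indices, at most one per worker.
    step = max(1, (n + workers - 1) // workers)
    blocks = [range(s, min(s + step, n)) for s in range(0, n, step)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Each block is independent of the others: no synchronization is
        # needed until the results are gathered. map() preserves block order.
        parts = pool.map(lambda rows: multiply_rows(A, B, rows), blocks)
        return [row for part in parts for row in part]


A = [[1, 2], [3, 4], [5, 6]]
B = [[7, 8], [9, 10]]
C = parallel_matmul(A, B)
print(C)
```

Load balancing shows up here as the choice of block size: with 3 rows and 2 workers, one worker gets two rows and the other gets one.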

ASCI White (10 teraops/sec, 2000) • Mega flops = 10^6 flops ≈ 2^20 • Giga = 10^9 = billion ≈ 2^30 • Tera = 10^12 = trillion ≈ 2^40 • Peta = 10^15 = quadrillion ≈ 2^50 • Exa = 10^18 = quintillion ≈ 2^60

Today – 2011 • K computer: 8 petaflops = 8 × 10^15 flops • ENIAC (1946): 350 flops • 65 years of speed increases: over a trillion times faster (8 × 10^15 / 350 ≈ 2.3 × 10^13)!

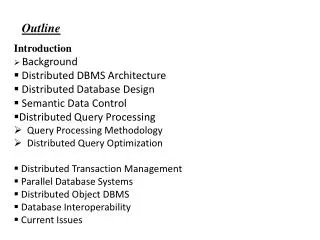

Why Parallel and Distributed Computing? • Grand Challenge Problems • Weather Forecasting; Global Warming • Materials Design – Superconducting material at room temperature; nano-devices; spaceships. • Organ Modeling; Drug Discovery

Why Parallel and Distributed Computing? • Physical Limitations of Circuits • Heat and speed-of-light effects • Superconducting material to counter heat effect • Speed-of-light effect – no solution!

[Figure: Microprocessor Revolution and Moore's Law. Speed (log scale) versus time for supercomputers, mainframes, minis, and micros.]

Why Parallel and Distributed Computing? • VLSI – Effect of Integration • 1 M transistors are enough for full functionality – DEC's Alpha (1990s) • The rest must go into multiple CPUs per chip • Cost – Multitudes of average CPUs give better FLOPS/$ than traditional supercomputers

Modern Parallel Computers • Caltech's Cosmic Cube (Seitz and Fox) • Commercial copy-cats • nCUBE Corporation (512 CPUs) • Intel's Supercomputer Systems • iPSC1, iPSC2, Intel Paragon (512 CPUs) • Thinking Machines Corporation • CM2 (65K 1-bit CPUs) – 12-dimensional hypercube – SIMD • CM5 – fat-tree interconnect – MIMD • Tianhe-1A: 4.7 petaflops, 14K Xeon X5670 and 7,168 Nvidia Tesla M2050 • K computer: 8 petaflops (8 × 10^15 FLOPS), 2011, 68K 2.0 GHz 8-core CPUs, 548,352 cores

Why Parallel and Distributed Computing? • Everyday Reasons • Available local networked workstations and Grid resources should be utilized • Solve compute-intensive problems faster • Make infeasible problems feasible • Reduce design time • Leverage large combined memory • Solve larger problems in the same amount of time • Improve the answer's precision • Gain competitive advantage • Exploit commodity multi-core and GPU chips • Find Jobs!

Why Shared Memory programming? • Easier conceptual environment • Programmers are typically familiar with concurrent threads and processes sharing an address space • CPUs within multi-core chips share memory • OpenMP: an application programming interface (API) for shared-memory systems • Supports higher-performance parallel programming of symmetric multiprocessors • Java threads • By contrast, MPI is used for distributed-memory programming
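A minimal sketch of the shared-address-space model, using Python's standard `threading` module rather than OpenMP or Java threads: every thread reads the same list and updates the same `total` variable, so the shared update must be synchronized. The function name `parallel_sum` is hypothetical.

```python
import threading


def parallel_sum(values, num_threads=4):
    """Sum `values` with several threads that share one accumulator."""
    total = 0
    lock = threading.Lock()

    def worker(chunk):
        nonlocal total
        local = sum(chunk)   # private partial result, no synchronization needed
        with lock:           # critical section: one thread updates at a time
            total += local

    # Thread t takes elements t, t + num_threads, t + 2 * num_threads, ...
    threads = [
        threading.Thread(target=worker, args=(values[t::num_threads],))
        for t in range(num_threads)
    ]
    for th in threads:
        th.start()
    for th in threads:
        th.join()
    return total


print(parallel_sum(list(range(1000))))
```

Keeping a private partial sum and taking the lock once per thread, instead of once per element, is the same idea that a reduction expresses in shared-memory APIs.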

Seeking Concurrency • Data dependence graphs • Data parallelism • Functional parallelism • Pipelining

Data Dependence Graph • Directed graph • Vertices = tasks • Edges = dependencies
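One way to read concurrency off such a graph is to group tasks into levels: every task in a level depends only on tasks in earlier levels, so the tasks within one level may run at the same time. The sketch below assumes a small hypothetical graph with tasks named `a`, `b`, `m`, `s`, and `v`; the function name `schedule_levels` is also an illustrative choice.

```python
def schedule_levels(deps):
    """Group tasks into levels that can each run concurrently.

    `deps` maps every task to the set of tasks it depends on
    (the incoming edges of the dependence graph).
    """
    remaining = {task: set(d) for task, d in deps.items()}
    levels = []
    while remaining:
        # Tasks with no unmet dependencies are ready to run now.
        ready = sorted(t for t, d in remaining.items() if not d)
        if not ready:
            raise ValueError("cycle or unknown task in dependence graph")
        levels.append(ready)
        for t in ready:
            del remaining[t]
        for d in remaining.values():
            d.difference_update(ready)
    return levels


# Hypothetical graph: m and s each need a and b; v needs m and s.
deps = {"a": set(), "b": set(), "m": {"a", "b"}, "s": {"a", "b"}, "v": {"m", "s"}}
levels = schedule_levels(deps)
print(levels)
```

The number of levels is the length of the longest dependency chain, which bounds how far the work can be shortened no matter how many processors are available.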

Data Parallelism • Independent tasks apply the same operation to different elements of a data set • Okay to perform operations concurrently • Speedup: potentially p-fold, where p = number of processors • Example: for i ← 0 to 99 do a[i] ← b[i] + c[i] endfor
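The element-wise addition a[i] = b[i] + c[i] can be sketched by giving each of p workers its own contiguous slice of the index range. The helper names `add_chunk` and `parallel_add` are hypothetical. Threads are used here only to show the decomposition: in standard CPython they do not execute this loop in parallel, so a real p-fold speedup would need processes or a native library.

```python
from concurrent.futures import ThreadPoolExecutor


def add_chunk(b, c, start, stop):
    """Same operation applied to one slice of the data."""
    return [b[i] + c[i] for i in range(start, stop)]


def parallel_add(b, c, p=4):
    """Compute a[i] = b[i] + c[i] with the index range split across p workers."""
    n = len(b)
    # Worker k handles indices [k*n/p, (k+1)*n/p); the slices never overlap.
    bounds = [(k * n // p, (k + 1) * n // p) for k in range(p)]
    with ThreadPoolExecutor(max_workers=p) as pool:
        chunks = list(pool.map(lambda se: add_chunk(b, c, *se), bounds))
    return [x for chunk in chunks for x in chunk]


b = list(range(100))
c = [2 * i for i in range(100)]
a = parallel_add(b, c)
print(a[:5])
```

Because no iteration reads a value written by another iteration, the slices need no synchronization beyond waiting for all of them to finish.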

Functional Parallelism • Independent tasks apply different operations to different data elements • The first and second statements can run concurrently • The third and fourth statements can run concurrently • Speedup: limited by the number of concurrent sub-tasks • Example: a ← 2; b ← 3; m ← (a + b) / 2; s ← (a² + b²) / 2; v ← s − m²
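The slide's example computes a mean m = (a + b) / 2, a mean of squares s = (a² + b²) / 2, and a variance v = s − m². A minimal sketch of running the two independent computations as separate tasks is shown below; the function name `compute_stats` is hypothetical, and the thread pool stands in for whatever task mechanism is actually used.

```python
from concurrent.futures import ThreadPoolExecutor


def compute_stats(a, b):
    """Run the independent computations of m and s as two concurrent tasks."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        # Different operations, neither needs the other's result.
        m_future = pool.submit(lambda: (a + b) / 2)
        s_future = pool.submit(lambda: (a ** 2 + b ** 2) / 2)
        m = m_future.result()
        s = s_future.result()
    # v depends on both m and s, so it must wait for them.
    v = s - m ** 2
    return m, s, v


m, s, v = compute_stats(2, 3)
print(m, s, v)
```

Only two tasks are ever runnable at once here, which is why the speedup of functional parallelism is capped by the number of concurrent sub-tasks rather than by the number of processors.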

Pipelining • Divide a process into stages • Work on several items simultaneously, one per stage • Speedup: limited by the number of concurrent sub-tasks = number of stages in the pipeline
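The stage-count limit can be checked with a small timing model. The sketch assumes every stage takes the same time and that a new item enters the pipeline each step; both are simplifying assumptions, and the function names are hypothetical.

```python
def sequential_time(n_items, n_stages, stage_time=1):
    """Each item passes through every stage before the next item starts."""
    return n_items * n_stages * stage_time


def pipelined_time(n_items, n_stages, stage_time=1):
    """The first item takes n_stages steps; each later item finishes one step after."""
    if n_items < 1:
        raise ValueError("n_items must be at least 1")
    return (n_stages + n_items - 1) * stage_time


def speedup(n_items, n_stages):
    """Ratio of sequential to pipelined time under the equal-stage assumption."""
    return sequential_time(n_items, n_stages) / pipelined_time(n_items, n_stages)


for n in (1, 10, 1000):
    print(n, round(speedup(n, 4), 3))
```

With a single item there is no overlap and no speedup; as the number of items grows, the ratio approaches the number of stages but never exceeds it.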