Parallel Memory Allocation


Parallel Memory Allocation Steven Saunders

Introduction
• Fallacy: all dynamic memory allocators are either scalable, effective, or efficient...
• Truth: no allocator, serial or parallel, is best for all situations, allocation patterns, and memory hierarchies
• Research results are difficult to compare
  • no standard benchmarks; some results come from simulators, others from real tests
• Not considered in this talk: distributed memory, garbage collection, locality

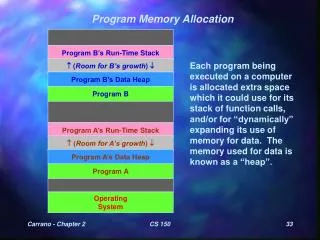

Definitions
• Heap
  • pool of memory from which arbitrarily sized blocks are allocated and deallocated in arbitrary order, each living for an arbitrary amount of time
• Dynamic Memory Allocator
  • used to request memory blocks from the heap or return them to it
  • aware only of the size of a memory block, not its type or value
  • tracks which parts of the heap are in use and which are available for allocation

Design of an Allocator
• Strategy - consider regularities in program behavior and memory requests to determine a set of acceptable policies
• Policy - decide where to allocate a memory block within the heap
• Mechanism - implement the policy using a set of data structures and algorithms
Emphasis has been on policies and mechanisms!

Strategy
• Ideal Serial Strategy
  • “put memory blocks where they won’t cause fragmentation later”
  • Serial program behavior: ramps, peaks, plateaus
• Parallel Strategies
  • “minimize unrelated objects on the same page”
  • “bound memory blowup and minimize false sharing”
  • Parallel program behavior: SPMD, producer-consumer

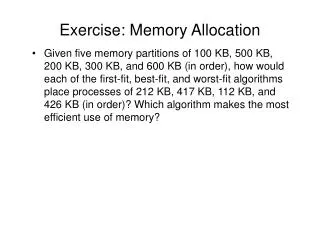

Policy
• Common Serial Policies:
  • best fit, first fit, worst fit, etc.
• Common Techniques:
  • splitting - break large blocks into smaller pieces to satisfy smaller requests
  • coalescing - merge adjacent free blocks to satisfy bigger requests
    • immediate - coalesce upon deallocation
    • deferred - wait until a request cannot be satisfied
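
The difference between first fit and best fit, and the effect of splitting, can be sketched over a plain array of free block sizes. This is a minimal illustration, not any particular allocator: the array representation and the function names `first_fit`, `best_fit`, and `split_block` are assumptions made for the example.

```c
#include <assert.h>
#include <stddef.h>

/* First fit: index of the first free block large enough for `request`,
   or -1 if none fits. */
int first_fit(const size_t *free_sizes, int n, size_t request) {
    assert(free_sizes != NULL || n == 0);
    for (int i = 0; i < n; i++)
        if (free_sizes[i] >= request)
            return i;
    return -1;
}

/* Best fit: index of the smallest free block that still fits `request`,
   or -1 if none fits. */
int best_fit(const size_t *free_sizes, int n, size_t request) {
    assert(free_sizes != NULL || n == 0);
    int best = -1;
    for (int i = 0; i < n; i++)
        if (free_sizes[i] >= request &&
            (best < 0 || free_sizes[i] < free_sizes[best]))
            best = i;
    return best;
}

/* Splitting: carve `request` bytes off block `i`; the remainder stays free. */
void split_block(size_t *free_sizes, int i, size_t request) {
    assert(free_sizes[i] >= request);
    free_sizes[i] -= request;
}
```

With free blocks of 32, 8, 16, and 64 bytes, a 10-byte request goes to the 32-byte block under first fit and to the 16-byte block under best fit, which leaves a smaller remainder after splitting.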

Mechanism
• Each block contains header information
  • size, in-use flag, pointer to next free block, etc.
• Free List - list of memory blocks available for allocation
• Singly/Doubly Linked List - each free block points to the next free block
• Boundary Tag - size info at both ends of block
  • positive indicates free, negative indicates in use
• Quick Lists - multiple linked lists, where each list contains blocks of equal size
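
Boundary tags make immediate coalescing cheap, because the tag at the end of the previous block and the tag at the start of the next block sit right beside the block being freed. The sketch below models the heap as an array of `int` words, with a signed size tag (in words, tags included) at both ends of each block. The word-array model and the names `set_tags` and `free_block` are assumptions for illustration only.

```c
#include <assert.h>
#include <stdlib.h>

/* Write matching tags at both ends of a block that spans `words` words.
   Positive tag = free, negative tag = in use. */
void set_tags(int *heap, int pos, int words, int in_use) {
    assert(words >= 2);
    int tag = in_use ? -words : words;
    heap[pos] = tag;
    heap[pos + words - 1] = tag;
}

/* Free the in-use block starting at `pos` and coalesce it with any free
   neighbour. Returns the start of the resulting free block. */
int free_block(int *heap, int heap_words, int pos) {
    assert(heap[pos] < 0);              /* block must be in use */
    int words = abs(heap[pos]);

    int next = pos + words;             /* header of the following block */
    if (next < heap_words && heap[next] > 0)
        words += heap[next];

    if (pos > 0 && heap[pos - 1] > 0) { /* footer of the preceding block */
        pos -= heap[pos - 1];           /* jump back to its header */
        words += heap[pos];
    }

    set_tags(heap, pos, words, 0);
    return pos;
}
```

Freeing the middle of three 4-word blocks whose neighbours are both free yields a single 12-word free block without walking any list.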

Performance
• Speed - comparable to serial allocators
• Scalability - scale linearly with processors
• False Sharing - avoid actively causing it
• Fragmentation - keep it to a minimum

Performance (False Sharing)
• Multiple processors inadvertently share data that happens to reside in the same cache line
  • padding solves the problem, but greatly increases fragmentation
[Figure: memory words owned by processors 0 and 1 mapping to the same cache line]
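
The padding trade-off can be made concrete with C11 alignment. The sketch assumes a 64-byte cache line (`CACHE_LINE` is a hypothetical constant; the real value depends on the hardware), and the struct and function names are made up for the example.

```c
#include <assert.h>
#include <stdalign.h>
#include <stddef.h>

#define CACHE_LINE 64  /* assumed line size, not queried from the hardware */

/* Unpadded: adjacent counters owned by different processors can land in
   the same cache line. */
struct counter { long value; };

/* Padded: the alignment forces every counter onto its own line. */
struct padded_counter { alignas(CACHE_LINE) long value; };

static_assert(sizeof(struct padded_counter) == CACHE_LINE,
              "one padded counter per cache line");

/* Bytes of padding (pure internal fragmentation) per padded element. */
size_t padding_overhead(void) {
    return sizeof(struct padded_counter) - sizeof(struct counter);
}

/* True if two byte offsets fall in the same cache line. */
int same_line(size_t a, size_t b) {
    return a / CACHE_LINE == b / CACHE_LINE;
}
```

Element 1 of an unpadded array shares a line with element 0, while element 1 of a padded array does not; the price is `padding_overhead()` wasted bytes per element.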

Performance (Fragmentation)
• Inability to use available memory
• External - available blocks cannot satisfy requests (e.g., too small)
• Internal - block used to satisfy a request is larger than the requested size
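
Both kinds of fragmentation reduce to simple arithmetic. The following sketch uses a plain array of free block sizes; the function names are illustrative assumptions.

```c
#include <assert.h>
#include <stddef.h>

/* Internal fragmentation: bytes wasted inside a block that is larger than
   the request it serves. */
size_t internal_waste(size_t block_size, size_t request) {
    assert(block_size >= request);
    return block_size - request;
}

/* External fragmentation: returns 1 when the total free memory would cover
   the request, yet no single free block is large enough. */
int externally_fragmented(const size_t *free_sizes, int n, size_t request) {
    size_t total = 0, largest = 0;
    for (int i = 0; i < n; i++) {
        total += free_sizes[i];
        if (free_sizes[i] > largest)
            largest = free_sizes[i];
    }
    return total >= request && largest < request;
}
```

Serving a 5-byte request from an 8-byte block wastes 3 bytes internally; three free 4-byte blocks hold 12 bytes in total but cannot serve an 8-byte request.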

Performance (Blowup)
• Out-of-control fragmentation
  • unique to parallel allocators
  • allocator correctly reclaims freed memory but fails to reuse it for future requests
  • available memory is not seen by the allocating processor
• Example: each of p threads runs ... x = malloc(s); free(x); ...
  • p threads that serialize one after another require O(s) memory
  • p threads that execute in parallel require O(ps) memory
  • p threads that execute interleaved on 1 processor require O(ps) memory...
• blowup from exchanging memory (Producer-Consumer)
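
The producer-consumer case can be simulated with two counters per heap. The model below assumes pure private heaps in which freed memory goes to the heap of the thread that calls free; it only tracks byte counts, and all names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

struct heap {
    size_t from_os;     /* bytes this heap has obtained from the OS */
    size_t free_bytes;  /* bytes this heap could reuse */
};

/* Allocate s bytes from a private heap, growing it if nothing is reusable. */
void heap_alloc(struct heap *h, size_t s) {
    assert(h != NULL);
    if (h->free_bytes < s) {
        h->from_os += s - h->free_bytes;
        h->free_bytes = s;
    }
    h->free_bytes -= s;
}

/* Free s bytes into the heap of the thread that calls free. */
void heap_free(struct heap *h, size_t s) {
    assert(h != NULL);
    h->free_bytes += s;
}

/* One thread allocates, the other frees. Returns total bytes taken from
   the OS, although at most s bytes are ever live at once. */
size_t producer_consumer(size_t rounds, size_t s) {
    struct heap producer = {0, 0}, consumer = {0, 0};
    for (size_t i = 0; i < rounds; i++) {
        heap_alloc(&producer, s);  /* producer: x = malloc(s) */
        heap_free(&consumer, s);   /* consumer: free(x) */
    }
    return producer.from_os + consumer.from_os;
}
```

In this model the footprint grows linearly with the number of rounds, because the producer never sees the memory piling up in the consumer's heap. When the same heap both allocates and frees, the footprint stays at s.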

Parallel Allocator Taxonomy
• Serial Single Heap
  • global lock protects the heap
• Concurrent Single Heap
  • multiple free lists or a concurrent free list
• Multiple Heaps
  • processors allocate from any of several heaps
• Private Heaps
  • processors allocate exclusively from a local heap
• Global & Local Heaps
  • processors allocate from a local and a global heap

Serial Single Heap
• Make an existing serial allocator thread-safe
  • use a single global lock for every request
• Performance
  • high speed, assuming a fast lock
  • scalability limited: every request serializes on the one lock
  • false sharing not considered
  • fragmentation bounded by serial policy
• Typically production allocators
  • IRIX, Solaris, Windows
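
Wrapping a serial allocator in one lock takes only a few lines. In this sketch the libc `malloc` and `free` stand in for the underlying serial allocator (libc is already thread-safe on most systems, so this is purely illustrative), and the wrapper names are assumptions.

```c
#include <assert.h>
#include <pthread.h>
#include <stdlib.h>

/* The single global lock that protects the whole heap. */
static pthread_mutex_t heap_lock = PTHREAD_MUTEX_INITIALIZER;

/* Every allocation takes the global lock, calls the serial allocator,
   and releases the lock. */
void *locked_malloc(size_t size) {
    int rc = pthread_mutex_lock(&heap_lock);
    assert(rc == 0);
    (void)rc;
    void *p = malloc(size);   /* stand-in for the serial allocator */
    pthread_mutex_unlock(&heap_lock);
    return p;
}

/* Every deallocation takes the same lock. */
void locked_free(void *p) {
    int rc = pthread_mutex_lock(&heap_lock);
    assert(rc == 0);
    (void)rc;
    free(p);                  /* stand-in for the serial allocator */
    pthread_mutex_unlock(&heap_lock);
}
```

The fast path adds only one lock and unlock, which is why speed is good with a fast lock, but all threads contend for `heap_lock`.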

Concurrent Single Heap
• Apply concurrency to existing serial allocators
  • use quick list mechanism, with a lock per list
• Performance
  • moderate speed, could require many locks
  • scalability limited by number of requested sizes
  • false sharing not considered
  • fragmentation bounded by serial policy
• Typically research allocators
  • Buddy System, MFLF (multiple free list fit), NUMAmalloc

Concurrent Single (Buddy System)
• Policy/Mechanism
  • one free list per memory block size: 1, 2, 4, …, 2^i
  • blocks on list i are recursively split into 2 buddies for list i-1 in order to satisfy smaller requests
  • only buddies are coalesced to satisfy larger requests
  • each free list can be individually locked
• Trade speed for reduced fragmentation
  • if a free list is empty, a thread’s malloc enters a wait queue
  • malloc could be satisfied by another thread freeing memory or by breaking a higher list’s block into buddies (whichever finishes first)
  • reducing the number of splits reduces fragmentation by leaving more large blocks for future requests

Concurrent Single (Buddy System)
• Performance
  • moderate speed, complicated locking/queueing, although buddy split/coalesce code is fast
  • scalability limited by number of requested sizes
  • false sharing very likely
  • high internal fragmentation
[Figure: free lists for block sizes 1, 2, 4, 8, 16 as used by Thread 1 and Thread 2]
Example calls: x = malloc(8); x = malloc(5); y = malloc(8); free(x);
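
The buddy split/coalesce code is fast because list selection and buddy lookup are pure bit arithmetic. The sketch below assumes block offsets are measured from the start of the heap and that a block of size 2^i is aligned to 2^i; the function names are illustrative.

```c
#include <assert.h>
#include <stddef.h>

/* Smallest order i such that 2^i >= request. */
unsigned order_for(size_t request) {
    assert(request >= 1);
    unsigned order = 0;
    while (((size_t)1 << order) < request)
        order++;
    return order;
}

/* Offset of the buddy of the block at `offset` on list `order`.
   Buddies differ only in bit `order` of their offset. */
size_t buddy_of(size_t offset, unsigned order) {
    assert(offset % ((size_t)1 << order) == 0);
    return offset ^ ((size_t)1 << order);
}

/* Number of splits needed to serve `request` from a free block on
   list `have`. */
unsigned splits_needed(unsigned have, size_t request) {
    unsigned want = order_for(request);
    assert(have >= want);
    return have - want;
}
```

A `malloc(5)` maps to the size-8 list and wastes 3 bytes, which is the internal fragmentation noted above. Serving it from a free 16-byte block takes one split, and the two resulting buddies sit at offsets 0 and 8.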

Concurrent Single (MFLF)
• Policy/Mechanism
  • set of quick lists to satisfy small requests exactly
    • malloc takes first block in appropriate list
    • free returns block to head of appropriate list
  • set of misc lists to satisfy large requests quickly
    • each list labeled with range of block sizes, low…high
    • malloc takes first block in list where request ≤ low, so any block on that list fits
    • trades linear search for internal fragmentation
    • free returns blocks to list where low ≤ block size ≤ high
  • each list can be individually locked and searched
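
The misc-list rule can be sketched as two lookups. The list ranges below are made-up values, the range bounds are treated as inclusive (an assumption), and the function names are hypothetical; only the selection rule itself comes from the slide.

```c
#include <assert.h>
#include <stddef.h>

#define NUM_MISC 3  /* hypothetical number of misc lists */

/* Hypothetical size ranges, low..high, one per misc list. */
static const size_t misc_low[NUM_MISC]  = {129, 257, 513};
static const size_t misc_high[NUM_MISC] = {256, 512, 1024};

/* malloc: first list whose smallest block already covers the request, so
   the head of that list can be taken without searching. -1 if none. */
int misc_list_for_malloc(size_t request) {
    assert(request > 0);
    for (int i = 0; i < NUM_MISC; i++)
        if (request <= misc_low[i])
            return i;
    return -1;
}

/* free: the list whose range contains the block's size. -1 if none. */
int misc_list_for_free(size_t block_size) {
    for (int i = 0; i < NUM_MISC; i++)
        if (misc_low[i] <= block_size && block_size <= misc_high[i])
            return i;
    return -1;
}
```

A 200-byte request is served from list 1 (blocks of at least 257 bytes) even though list 0 may hold a large enough block; that skipped search is paid for in internal fragmentation. A freed 200-byte block goes back to list 0.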

Concurrent Single (MFLF)
• Performance
  • high speed
  • scalability limited by number of requested sizes
  • false sharing very likely
  • high internal fragmentation
• Approach similar to current state-of-the-art serial allocators

Concurrent Single (NUMAmalloc)
• Strategy - minimize co-location of unrelated objects on the same page
  • avoid page-level false sharing (DSM/software DSM)
• Policy - place same-sized requests in the same page (heuristic hypothesis)
  • basically MFLF on the page level
• Performance
  • high speed
  • scalability limited by number of requested sizes
  • false sharing: helps at the page level but not at the cache level
  • high internal fragmentation

Multiple Heaps
• List of multiple heaps
  • individually growable, shrinkable and lockable
  • threads scan the list looking for the first available heap (trylock)
  • threads may cache the result to shorten the next lookup
• Performance
  • moderate speed, limited by the number of list scans and lock acquisitions
  • scalability limited by number of heaps and traffic
  • false sharing unintentionally reduced
  • blowup increased (up to O(p))
• Typically production allocators
  • ptmalloc (Linux), HP-UX
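
The scan-with-trylock step can be sketched with C11 atomics. This is not ptmalloc's code: the fixed heap count, the `atomic_flag` used as a try-lock, the thread-local cache `last_heap`, and the choice to spin when every heap is busy are all assumptions made for the example.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stddef.h>

#define NUM_HEAPS 4  /* hypothetical heap count */

struct heap {
    atomic_flag busy;  /* set while some thread holds this heap */
    /* the heap's free lists would live here */
};

static struct heap heaps[NUM_HEAPS] = {
    {ATOMIC_FLAG_INIT}, {ATOMIC_FLAG_INIT},
    {ATOMIC_FLAG_INIT}, {ATOMIC_FLAG_INIT}
};

/* Per-thread cache of the heap found by the previous scan. */
static _Thread_local int last_heap = 0;

/* Try-lock: returns 1 if the caller acquired the heap. */
static int heap_trylock(struct heap *h) {
    return !atomic_flag_test_and_set_explicit(&h->busy, memory_order_acquire);
}

void heap_unlock(struct heap *h) {
    assert(h != NULL);
    atomic_flag_clear_explicit(&h->busy, memory_order_release);
}

/* Scan the list, starting at the cached heap, for the first heap that is
   not locked. Returns its index with the lock held. */
int acquire_heap(void) {
    for (;;) {
        for (int k = 0; k < NUM_HEAPS; k++) {
            int i = (last_heap + k) % NUM_HEAPS;
            if (heap_trylock(&heaps[i])) {
                last_heap = i;
                return i;
            }
        }
    }
}
```

A thread that finds its cached heap free pays for one atomic operation; under contention it pays for a scan, which is the speed limit listed above.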

Private Heaps
• Processors exclusively use a local private heap for all allocation and deallocation
  • eliminates need for locks
• Performance
  • extremely high speed
  • scalability unbounded
  • reduced false sharing
    • can still arise when memory is passed to another thread
  • blowup unbounded
• Both research and production allocators
  • Cilk, STL
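
A private heap needs no lock because only its owner ever touches it. The sketch below keeps one thread-local LIFO free list of fixed-size blocks; the block size, the union layout, and the function names are assumptions, and fresh memory simply comes from libc `malloc`.

```c
#include <assert.h>
#include <stdlib.h>

#define BLOCK_SIZE 64  /* hypothetical fixed block size */

/* A free block stores the link to the next free block in its own payload. */
union block {
    union block *next;
    char payload[BLOCK_SIZE];
};

/* One free list per thread: no other thread can reach it, so no locks. */
static _Thread_local union block *free_list = NULL;

/* Pop a block from the calling thread's list, or get fresh memory. */
void *private_alloc(void) {
    union block *b = free_list;
    if (b != NULL) {
        free_list = b->next;
        return b;
    }
    return malloc(sizeof(union block));
}

/* Push the block onto the calling thread's list, whoever allocated it. */
void private_free(void *p) {
    assert(p != NULL);
    union block *b = p;
    b->next = free_list;
    free_list = b;
}
```

Because `private_free` always pushes onto the caller's own list, a block handed to another thread ends up in that thread's heap, which is the source of both the remaining false sharing and the unbounded blowup.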

Global & Local Heaps
• Processors generally use a local heap
  • avoids most lock contention
  • private memory is acquired from and returned to the global heap (which is always locked) as necessary
• Performance
  • high speed, less lock contention
  • scalability limited by number of locks
  • low false sharing
  • blowup bounded (O(1))
• Typically research allocators
  • VH, Hoard

Global & Local Heaps (VH)
• Strategy - exchange memory overhead for improved scalability
• Policy
  • memory broken into stacks of size m/2
  • global heap maintains a LIFO of stack pointers that local heaps can use
    • global push releases a stack from a local heap
    • global pop acquires a stack for a local heap
  • local heaps maintain an Active and a Backup stack
    • local operations are private, i.e. don’t require locks
• Mechanism - serial free list within stacks

Global & Local Heaps (VH)
• Memory Usage = M + m*p
  • M = amount of memory in use by program
  • p = number of processors
  • m = private memory (2 stacks of size m/2)
• higher m reduces number of global heap lock operations
• lower m reduces memory usage overhead
[Figure: global heap above the private heaps of processors 0, 1, …, p, each with an Active (A) and a Backup (B) stack]
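
The trade-off in m is easy to see with numbers. The helper names below are hypothetical and the figures are chosen only for illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Size of each of the two private stacks (Active and Backup). */
size_t vh_stack_size(size_t m) {
    assert(m % 2 == 0);
    return m / 2;
}

/* Memory usage from the slide: M + m*p. */
size_t vh_memory_usage(size_t M, size_t m, size_t p) {
    return M + m * p;
}
```

With M = 1 MiB in use, m = 64 KiB of private memory, and p = 8 processors, usage is 1 MiB + 512 KiB. Halving m halves the 512 KiB overhead, but each local heap then fills or drains a stack twice as often and so visits the locked global heap more.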

Global & Local Heaps (Hoard)
• Strategy - bound blowup and minimize false sharing
• Policy
  • memory broken into superblocks of size S
    • all blocks within a superblock are of equal size
  • global heap maintains a set of available superblocks
  • local heaps maintain local superblocks
    • malloc satisfied by local superblock
    • free returns memory to the original allocating superblock (requires a lock)
    • superblocks acquired as necessary from global heap
    • if local usage drops below a threshold, superblocks are returned to the global heap
• Mechanism - private superblock quick lists

Global & Local Heaps (Hoard)
• Memory Usage = O(M + p)
  • M = amount of memory in use by program
  • p = number of processors
• False Sharing
  • since malloc is satisfied by a local superblock, and free returns memory to the original superblock, false sharing is greatly reduced
  • worst case: a non-empty superblock is released to the global heap and another thread acquires it
    • set superblock size and emptiness threshold to minimize this case
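
The slides do not spell out the emptiness threshold. The sketch below assumes the two-part test described in the Hoard paper listed in the references: a local heap gives up a superblock only when more than K superblocks' worth of its memory is free and less than a fraction (1 - f) of its memory is in use. The struct, the function name, and the sample values of S, K, and f are assumptions.

```c
#include <assert.h>
#include <stddef.h>

struct local_heap {
    size_t in_use;  /* u: bytes currently allocated from this heap */
    size_t held;    /* a: bytes held in this heap's superblocks */
};

/* Returns 1 when the heap should return a superblock to the global heap.
   S = superblock size, K = slack in superblocks, f = emptiness fraction. */
int should_release(const struct local_heap *h, size_t S, size_t K, double f) {
    assert(h != NULL && h->in_use <= h->held);
    assert(f > 0.0 && f < 1.0);
    int many_free = (h->held - h->in_use) > K * S;
    int mostly_empty = (double)h->in_use < (1.0 - f) * (double)h->held;
    return many_free && mostly_empty;
}
```

With S = 8192, K = 2, and f = 0.25, a heap holding eight superblocks with only one superblock's worth in use would release memory, while a nearly full heap, or one with no more than two superblocks' worth free, would not. Keeping this slack in each local heap is what bounds blowup without forcing a global-heap visit on every free.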

Global & Local Heaps (Compare)
• VH proves a tighter memory bound than Hoard and only requires global locks
• Hoard has a more flexible local mechanism and considers false sharing
• They’ve never been compared!
  • Hoard is production quality and would likely win

Summary
• Memory allocation is still an open problem
  • strategies addressing program behavior still uncommon
• Performance Tradeoffs
  • speed, scalability, false sharing, fragmentation
• Current Taxonomy
  • serial single heap, concurrent single heap, multiple heaps, private heaps, global & local heaps

References
Serial Allocation
• Paul Wilson, Mark Johnstone, Michael Neely, David Boles. Dynamic Storage Allocation: A Survey and Critical Review. 1995 International Workshop on Memory Management.
Shared Memory Multiprocessor Allocation
Concurrent Single Heap
• Arun Iyengar. Scalability of Dynamic Storage Allocation Algorithms. Sixth Symposium on the Frontiers of Massively Parallel Computing. October 1996.
• Theodore Johnson, Tim Davis. Space Efficient Parallel Buddy Memory Management. The Fourth International Conference on Computing and Information (ICCI'92). May 1992.
• Jong Woo Lee, Yookun Cho. An Effective Shared Memory Allocator for Reducing False Sharing in NUMA Multiprocessors. IEEE Second International Conference on Algorithms & Architectures for Parallel Processing (ICAPP'96).
Multiple Heaps
• Wolfram Gloger. Dynamic Memory Allocator Implementations in Linux System Libraries.
Global & Local Heaps
• Emery Berger, Kathryn McKinley, Robert Blumofe, Paul Wilson. Hoard: A Scalable Memory Allocator for Multithreaded Applications. The Ninth International Conference on Architectural Support for Programming Languages and Operating Systems (ASPLOS-IX). November 2000.
• Voon-Yee Vee, Wen-Jing Hsu. A Scalable and Efficient Storage Allocator on Shared-Memory Multiprocessors. The International Symposium on Parallel Architectures, Algorithms, and Networks (I-SPAN'99). June 1999.