PART 3 Random Processes

Huseyin Bilgekul, Eeng571 Probability and Stochastic Processes, Department of Electrical and Electronic Engineering, Eastern Mediterranean University.


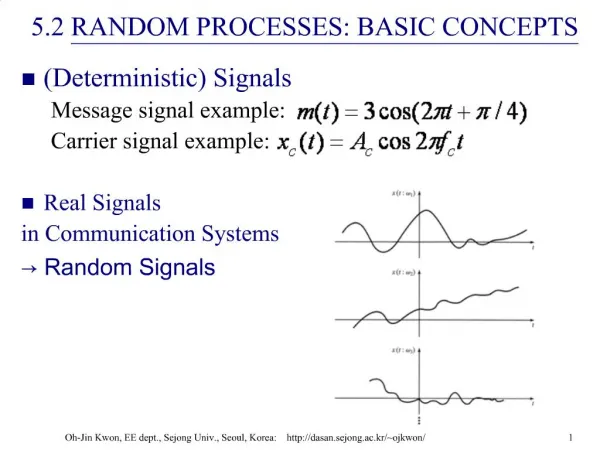

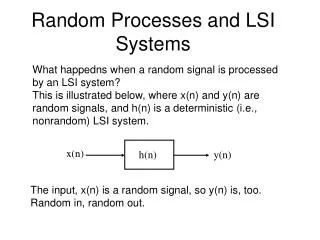

Random Processes
A RANDOM VARIABLE $X$ is a rule for assigning to every outcome $\omega$ of an experiment a number $X(\omega)$. Note: $X$ denotes a random variable and $X(\omega)$ denotes a particular value.
A RANDOM PROCESS $X(t)$ is a rule for assigning to every outcome $\omega$ a function $X(t,\omega)$. Note: for notational simplicity we often omit the dependence on $\omega$.

Ensemble of Sample Functions
The set of all possible sample functions is called the ENSEMBLE.

Random Processes
• A general random or stochastic process can be described as:
  • A collection of time functions (signals) corresponding to the various outcomes of a random experiment.
  • A collection of random variables observed at different times.
• Examples of random processes in communications:
  • Channel noise
  • Information generated by a source
  • Interference

Random Processes
Let $\omega$ denote the random outcome of an experiment. Suppose that to every such outcome a waveform $X(t,\omega)$ is assigned. The collection of such waveforms forms a stochastic process. Both the set of outcomes $\{\omega_k\}$ and the time index $t$ can be continuous or discrete (countably infinite or finite). For a fixed $\omega_i \in S$ (the set of all experimental outcomes), $X(t,\omega_i)$ is a specific time function. For a fixed $t = t_1$, $X(t_1,\omega)$ is a random variable. The ensemble of all such realizations $X(t,\omega)$ over time represents the stochastic process $X(t)$.
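The two readings of a random process (fix the outcome and get a time function, fix the time and get a random variable) can be sketched in a few lines of Python. The random-phase cosine used here, and the names `sample_function` and `make_ensemble`, are assumptions chosen for illustration; they do not come from the slides.

```python
import math
import random

def sample_function(theta, times, freq_hz=1.0):
    """One realization x(t) = cos(2*pi*f*t + theta) for a fixed outcome theta."""
    return [math.cos(2 * math.pi * freq_hz * t + theta) for t in times]

def make_ensemble(num_outcomes, times, seed=0):
    """Draw one random phase per outcome and return the list of sample functions."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    phases = [rng.uniform(0.0, 2 * math.pi) for _ in range(num_outcomes)]
    return [sample_function(theta, times) for theta in phases]

times = [k / 8 for k in range(9)]  # t = 0, 0.125, ..., 1.0
ensemble = make_ensemble(num_outcomes=5, times=times)

# Fixed outcome: one row of the ensemble is an ordinary time function.
one_waveform = ensemble[0]

# Fixed time: one column collects the values that the random variable
# X(t_k) takes across the different outcomes.
k = 3
values_at_tk = [waveform[k] for waveform in ensemble]

print("waveform for outcome 0:", [round(v, 3) for v in one_waveform])
print("values of X(t) at t =", times[k], ":", [round(v, 3) for v in values_at_tk])
```

Each row of `ensemble` plays the role of one sample function, and each column plays the role of one random variable.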

Introduction
A random process is a process (i.e., a variation in time or in one-dimensional space) whose behavior is not completely predictable but can be characterized by statistical laws.
Examples of random processes:
• Daily stream flow
• Hourly rainfall of storm events
• Stock index

Random Variable
• A random variable is a mapping that assigns a real number to each outcome of a random experiment. The outcomes occur according to a certain probability distribution. Therefore, a (continuous) random variable is completely characterized by its probability density function (PDF).

STOCHASTIC PROCESS
The term “stochastic process” appears mostly in statistics textbooks, whereas the term “random process” is frequently used in engineering books.

DENSITY OF STOCHASTIC PROCESSES
First-order densities of a random process
A stochastic process is said to be completely (or totally) characterized if the joint densities of the random variables $X(t_1), X(t_2), \ldots, X(t_n)$ are known for all times $t_1, t_2, \ldots, t_n$ and all $n$. In general, a complete characterization is practically impossible except in rare cases, so it is desirable to define and work with various partial characterizations. Depending on the objectives of the application, a partial characterization often suffices.

For a specific $t$, $X(t)$ is a random variable with distribution $F(x,t) = P[X(t) \le x]$. The function $F(x,t)$ is defined as the first-order distribution of the random variable $X(t)$. Its derivative with respect to $x$, $f(x,t) = \partial F(x,t) / \partial x$, is the first-order density of $X(t)$.
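To make the first-order distribution concrete, the sketch below estimates $P[X(t) \le x]$ by counting realizations. The process $X(t) = A\,t$ with $A$ uniform on $[0,1)$ is a hypothetical example, not one from the slides. For this process and $t > 0$, the exact distribution is $F(x,t) = \min(1, x/t)$ for $x \ge 0$. The helper names `empirical_cdf` and `exact_cdf` are likewise illustrative.

```python
import random

def empirical_cdf(x, t, num_outcomes=20000, seed=1):
    """Estimate F(x, t) = P[X(t) <= x] for the assumed process X(t) = A * t."""
    rng = random.Random(seed)
    count = 0
    for _ in range(num_outcomes):
        a = rng.random()       # A ~ Uniform[0, 1), one draw per outcome
        if a * t <= x:         # does this realization satisfy X(t) <= x ?
            count += 1
    return count / num_outcomes

def exact_cdf(x, t):
    """Closed form for the assumed process, valid for t > 0."""
    return min(1.0, max(0.0, x / t))

# The same threshold x gives a different probability at each time t,
# so this process has a family of first-order distributions.
for t in (1.0, 2.0, 4.0):
    print(t, empirical_cdf(0.5, t), exact_cdf(0.5, t))
```

Differentiating the closed form with respect to $x$ gives the density $f(x,t) = 1/t$ on $0 \le x \le t$, which depends explicitly on $t$.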

If the first-order densities $f(x,t)$ defined for all times $t$ are all the same, then $f(x,t)$ does not depend on $t$, and we call the resulting density $f(x)$ the first-order density of the random process $X(t)$; otherwise, we have a family of first-order densities. The first-order densities (or distributions) are only a partial characterization of the random process, because they contain no information about the joint densities of the random variables defined at two or more different times.

MEAN AND VARIANCE OF RP
Mean and variance of a random process
The first-order density of a random process, $f(x,t)$, gives the probability density of the random variable $X(t)$ at each time $t$. The mean of a random process, $m_X(t)$, is thus a function of time specified by
$$m_X(t) = E[X(t)] = \int_{-\infty}^{\infty} x\, f(x,t)\, dx$$
• For the case where the mean of $X(t)$ does not depend on $t$, we have
$$m_X(t) = E[X(t)] = m_X \quad \text{(a constant)}$$
• The variance of a random process, also a function of time, is defined by
$$\sigma_X^2(t) = E\{[X(t) - m_X(t)]^2\} = E[X^2(t)] - m_X^2(t)$$
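The mean $E[X(t)]$ and variance of a process at a given time can be approximated by averaging across the ensemble. The sketch below does this for an assumed process $X(t) = A\cos(2\pi t)$ with $A$ Gaussian (mean 1, standard deviation 2), for which the exact mean is $\cos(2\pi t)$ and the exact variance is $4\cos^2(2\pi t)$. The process and the function name are illustrative choices, not taken from the slides.

```python
import math
import random
import statistics

def ensemble_mean_and_variance(t, num_outcomes=20000, seed=2):
    """Estimate the mean and variance of X(t) = A * cos(2*pi*t) at one time t.

    A ~ Normal(mean=1, std=2) is an assumption made for this sketch.
    """
    rng = random.Random(seed)
    carrier = math.cos(2 * math.pi * t)
    # One value of X(t) per outcome: the "column" of the ensemble at time t.
    values = [rng.gauss(1.0, 2.0) * carrier for _ in range(num_outcomes)]
    mean = statistics.fmean(values)
    variance = statistics.pvariance(values, mu=mean)
    return mean, variance

for t in (0.0, 0.125, 0.25, 0.5):
    est_mean, est_var = ensemble_mean_and_variance(t)
    exact_mean = math.cos(2 * math.pi * t)
    exact_var = 4.0 * math.cos(2 * math.pi * t) ** 2
    print(f"t={t}: mean {est_mean:.3f} (exact {exact_mean:.3f}), "
          f"variance {est_var:.3f} (exact {exact_var:.3f})")
```

Both estimates change with $t$, which is the sense in which the mean and variance of a random process are functions of time.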

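HIGHER ORDER DENSITY OF RP
Second-order densities of a random process
For any pair of random variables $X(t_1)$ and $X(t_2)$, we define the second-order density of a random process as $f(x_1, x_2; t_1, t_2)$ or $f_{X(t_1)X(t_2)}(x_1, x_2)$.
Nth-order densities of a random process
The nth-order density function for $X(t)$ at times $t_1, t_2, \ldots, t_n$ is given by $f(x_1, \ldots, x_n; t_1, \ldots, t_n)$ or $f_{X(t_1) \cdots X(t_n)}(x_1, \ldots, x_n)$.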

Autocorrelation function of RP
Given two random variables $X(t_1)$ and $X(t_2)$, a measure of the linear relationship between them is specified by $E[X(t_1)X(t_2)]$. For a random process, $t_1$ and $t_2$ range over all possible values, so $E[X(t_1)X(t_2)]$ can change and is a function of $t_1$ and $t_2$. The autocorrelation function of a random process is thus defined by
$$R_{XX}(t_1,t_2) = E[X(t_1)X(t_2)] = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} x_1 x_2\, f(x_1,x_2;t_1,t_2)\, dx_1\, dx_2$$
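The expectation $E[X(t_1)X(t_2)]$ can be approximated by averaging the product over many realizations. The sketch below uses an assumed random-phase cosine, $X(t) = \cos(2\pi t + \Theta)$ with $\Theta$ uniform on $[0, 2\pi)$, whose exact autocorrelation is $\tfrac{1}{2}\cos(2\pi (t_1 - t_2))$. The process and the function names are illustrative, not from the slides.

```python
import math
import random

def autocorrelation_estimate(t1, t2, num_outcomes=20000, seed=3):
    """Estimate E[X(t1) X(t2)] for the assumed process X(t) = cos(2*pi*t + Theta)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(num_outcomes):
        theta = rng.uniform(0.0, 2 * math.pi)   # one phase per outcome
        x1 = math.cos(2 * math.pi * t1 + theta)
        x2 = math.cos(2 * math.pi * t2 + theta)
        total += x1 * x2                        # same outcome at both times
    return total / num_outcomes

def autocorrelation_exact(t1, t2):
    """Closed form for the assumed random-phase cosine."""
    return 0.5 * math.cos(2 * math.pi * (t1 - t2))

for t1, t2 in ((0.2, 0.2), (0.1, 0.35), (0.0, 0.5)):
    print(t1, t2, round(autocorrelation_estimate(t1, t2), 3),
          round(autocorrelation_exact(t1, t2), 3))
```

For this particular process the result depends only on the difference $t_1 - t_2$. That is a property of the example, not of autocorrelation functions in general.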

Stationarity of Random Processes
Strict-sense stationarity seldom holds for random processes, except for some Gaussian processes. Therefore, weaker forms of stationarity are needed.

Stationarity of Random Processes
[Figure: PDF of X(t) versus time t.]

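Equality and Continuity of RP
Equality
Note that “$x(t,\omega_i) = y(t,\omega_i)$ for every $\omega_i$” is not the same as “$x(t,\omega_i) = y(t,\omega_i)$ with probability 1”. The second statement allows the two processes to differ on a set of outcomes that has probability zero.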

Mean Square Equality of RP
Two processes $X(t)$ and $Y(t)$ are equal in the mean-square (MS) sense if $E\{|X(t) - Y(t)|^2\} = 0$ for every $t$.

Stochastic Convergence
A random sequence, or discrete-time random process, is a sequence of random variables $\{X_1(\omega), X_2(\omega), \ldots, X_n(\omega), \ldots\} = \{X_n(\omega)\}$, $\omega \in \Omega$. For a specific $\omega$, $\{X_n(\omega)\}$ is a sequence of numbers that might or might not converge. The notion of convergence of a random sequence can be given several interpretations.

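Sure Convergence (Convergence Everywhere)
The sequence of random variables $\{X_n(\omega)\}$ converges surely to the random variable $X(\omega)$ if the sequence of functions $X_n(\omega)$ converges to $X(\omega)$ as $n \to \infty$ for all $\omega \in \Omega$, i.e., $X_n(\omega) \to X(\omega)$ as $n \to \infty$ for all $\omega \in \Omega$.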
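As a small worked example of sure convergence, take outcomes in the unit interval and scale each outcome by $1/n$. The sample space and the sequence are assumptions chosen for illustration, not taken from the slides.

```latex
\begin{align*}
\Omega &= [0,1], \qquad X_n(\omega) = \frac{\omega}{n}, \qquad X(\omega) = 0, \\
|X_n(\omega) - X(\omega)| &= \frac{\omega}{n} \le \frac{1}{n} \longrightarrow 0
\quad \text{as } n \to \infty, \text{ for every } \omega \in \Omega .
\end{align*}
```

Because the bound holds for every outcome, with no exceptional set, the sequence converges surely to $X(\omega) = 0$ and not merely with probability 1.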