Introduction to Supervised Machine Learning Concepts

Introduction to Supervised Machine Learning Concepts. PRESENTED BY B. Barla Cambazoglu ⎪ February 21, 2014. Guest Lecturer’s Background. Lecture Outline. Basic concepts in supervised machine learning Use case: Sentiment-focused web crawling. Basic Concepts. What is Machine Learning?.

Introduction to Supervised Machine Learning Concepts

E N D

Presentation Transcript

Introduction to SupervisedMachine Learning Concepts PRESENTED BY B. Barla Cambazoglu⎪ February 21, 2014

Lecture Outline • Basic concepts in supervised machine learning • Use case: Sentiment-focused web crawling

What is Machine Learning? • Wikipedia: “Machine learning is a branch of artificial intelligence, concerning the construction and study of systems that can learn from data.” • Arthur Samuel: “Field of study that gives computers the ability to learn without being explicitly programmed.” • Tom M. Mitchell: “A computer program is said to learn from experience E with respect to some class of tasks T and performance measure P, if its performance at tasks in T, as measured by P, improves with experience E.”

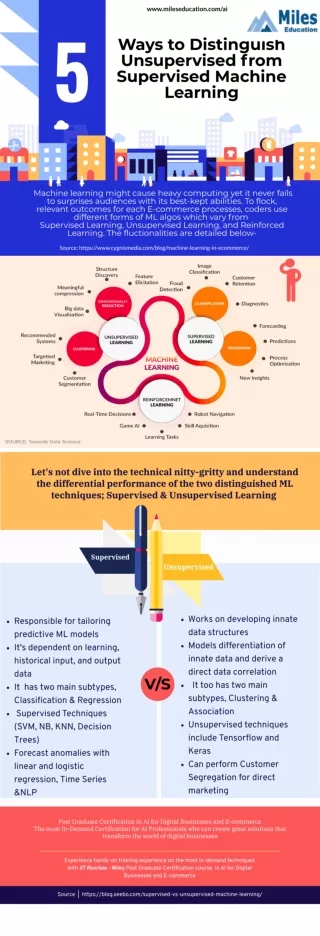

Unsupervised versus Supervised Machine Learning • Unsupervised learning • Assumes unlabeled data (the desired output is not known) • Objective is to discover the structure in the data • Supervised learning • Trained on labeled data (the desired output is known) • Objective is to generate an output for previously unseen input data

Supervised Machine Learning Applications • Common • Spam filtering • Recommendation and ranking • Fraud detection • Stock price prediction • Not so common • Recognize the user of a mobile device based on how he holds and moves the phone • Predict whether someone is a psychopath based on his twitter usage • Identify whales in the ocean based on audio recordings • Predict in advance whether a product launch will be successful or not

Terminology • Instance • Label • Feature • Training set • Test set • Learning model • Accuracy Toy problem: To predict the income level of a person based on his/her facial attributes.

Numeric Labels $3K $4K $2K $5K $7K $8K $11K $1K $9K $12K

Features Blonde No White No Male 5cm Bald No White Yes Male 0cm White NoBlackYes Male 3cm Dark YesWhiteNo Female 12cm

Training Set Blonde No White No Male 5cm Bald No White Yes Male 0cm White NoBlackYes Male 3cm Dark YesWhiteNo Female 12cm

Test Set Dark No White No Female 14cm Dark No White Yes Male 6cm Dark NoBlackNo Male 6cm Dark YesWhiteNo Female 15cm

Training and Testing Test instance Set of training instances Testing Prediction Training Model

Accuracy Actual labels Predicted labels Accuracy = # of correct predictions / total number of predictions = 2 / 4 = 50%

Precision and Recall • In certain cases, there are two class labels and predicting a particular class correctly is more important than predicting the other. • A good example is top-k ranking in web search. • Performance measures: • Recall • Precision

Some Practical Issues • Problem: Missing feature values • Solution: • Training: Use the most frequently observed (or average) feature value in the instance’s class. • Testing: Use the most frequently observed (or average) feature value in the entire training set. • Problem: Class imbalance • Solution • Oversampling: Duplicate the training instances in the small class • Undersampling: User fewer instances from the bigger class

Majority Classifier • Training: Find the class with the largest number of instances. • Testing: For every test instance, predict that class as the label, independent of the features of the test instance. Class Size 13 8 4 Testing Prediction Model

k-Nearest Neighbor Classifier k = 3 • Training: None! (known as a lazy classifier). • Testing: Find the k instances that are most similar to the test instance and use majority voting to decide on the label.

Decision Tree Classifier • Training: Build a tree where leaves represent labels and branches represent features that lead to those labels. • Testing: Traverse the tree using the feature values of the test instance. Black White Not black Yes Black No

Naïve Bayes Classifier P( | ) = 0.40 P( | ) = 0.65 P( | )= 0.78 • Training: For every feature value v and class c pair, we compute and store in a lookup table the conditional probability P(v | c). • Testing: For each class c, we compute:

Other Commonly Used Classifiers • Support vector machines • Boosted decision trees • Neural networks

Use Case:Sentiment-Focused Web Crawling G. Vural, B. B. Cambazoglu, and P. Senkul, “Sentiment-focused web crawling”, CIKM’12, pp. 2020-2024.

Problem • Early discovery of the opinionated content in the Web is important. • Use cases • Measuring brand loyalty or product adoption • Politics • Finance • We would like to design a sentiment-focused web crawler that aims to maximize the amount of sentimental/opinionated content fetched from the Web within a given amount of time.

Web Crawling • Subspaces • Downloaded pages • Discovered pages • Undiscovered pages

Sentiment-Focused Web Crawling • Challenge: to predict the sentimentality of an “unseen” web page, i.e., without having access to the page content.

Features • Assumption: Sentimental pages are more likely to be linked by other sentimental pages. • Idea: Build a learning model using features extracted from • Textual content of referring pages • Anchor text on the hyperlinks • URL of the target page

Labels • Our data (ClueWeb09-B) lacks ground-truth sentiment scores. • We created a ground-truth using the SentiStrength tool. • Assigns a sentiment score (between 0 and 8) to each web page as its label. • A small scale user-study is conducted with three judges to verify the suitability of this ground-truth. • 500 random pages sampled from the collection. • pages are labeled as sentimental or not sentimental. • Observations • 22% of the pages are labeled as sentimental. • High agreement between judges: the overlap is above 85%.

Learner and Performance Metric • As the learner, we use the LibSVM software in the regression mode. • We rebuild the prediction model at regular intervals throughout the crawling process. • As the main performance metric, we compute the total sentimentality score accumulated after fetching a certain number of pages.

Evaluated Crawlers • Proposed crawlers • based on the average sentiment score of referring page content • based on machine learning • Oracle crawlers • highest sentiment score • highest spam score • highest PageRank • Baseline crawlers • random • indegree-based • breadth first