Comprehensive Overview of Classification Techniques in Data Mining

This article explores various classification methods utilized in data mining, including regression, naive Bayes classifiers, k-nearest neighbors, and decision trees. It discusses the fundamental concepts of models and patterns, parameter estimation, and the importance of accuracy and comprehensibility in classifiers. The text also delves into supervised vs. unsupervised learning, the classification problem, and techniques for enhancing classifier performance. Key topics include the principles of pattern discovery, knowledge induction from data, and the role of decision trees in visualizing and making decisions based on data attributes.

3. Classification Methods
• Patterns and Models
• Regression, NBC
• k-Nearest Neighbors
• Decision Trees and Rules
• Large size data
Data Mining – Classification G Dong

Models and Patterns
• A model is a global description of data, or an abstract representation of a real-world process
  • Estimating parameters of a model
  • Data-driven model building
  • Examples: regression, graphical models (BN), HMM
• A pattern is about some local aspects of data
  • Patterns in data matrices
  • Predicates, e.g. (age < 40) ∧ (income < 10)
  • Patterns for strings (ASCII characters, DNA alphabet)
  • Pattern discovery: rules

Performance Measures
• Generality
  • How many instances are covered?
• Applicability
  • Is it useful? ("All husbands are male" is always true but not useful.)
• Accuracy
  • Is it always correct? If not, how often?
• Comprehensibility
  • Is it easy to understand? (a subjective measure)

Forms of Knowledge
• Concepts
  • Probabilistic, logical (proposition/predicate), functional
• Rules
• Taxonomies and Hierarchies
  • Dendrograms, decision trees
• Clusters
• Structures and Weights/Probabilities
  • ANN, BN

Induction from Data
• Inferring knowledge from data: generalization
• Supervised vs. unsupervised learning
  • Some graphical illustrations of learning tasks (regression, classification, clustering)
  • Any other types of learning?
• Compare: the task of deduction
  • Infer information/facts that are logical consequences of facts in a database
  • Who is John’s grandpa? (deduced from, e.g., Mary is John’s mother, Joe is Mary’s father)
  • Deductive databases: extending the RDBMS

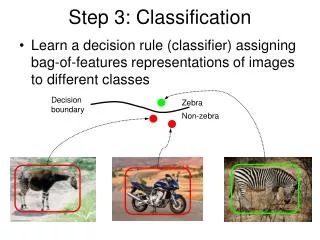

The Classification Problem
• From a set of labeled training data, build a system (a classifier) for predicting the class of future data instances (tuples).
• A related problem is to build a system from training data to predict the value of an attribute (feature) of future data instances.

What is a bad classifier?
• Some simplest classifiers
  • Table-Lookup
  • What if x cannot be found in the training data?
  • We give up!?
  • Or, we can …
• A simple classifier Cs can be built as a reference
  • If x can be found in the table (training data), return its class; otherwise, what should it return?
• A bad classifier is one that does worse than Cs.
• Do we need to learn a classifier for data of one class?
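
The reference classifier Cs can be sketched in a few lines. The slide leaves open what Cs should return for an unseen x; falling back to the majority class of the training data is an assumption made here, not something the slides state. The function name and the toy data are hypothetical.

```python
from collections import Counter

def build_reference_classifier(training):
    """training: list of (x, label) pairs, where x is a hashable tuple of attribute values."""
    table = {}
    for x, label in training:
        table.setdefault(x, Counter())[label] += 1
    # Assumption: the fallback for unseen instances is the overall majority class.
    majority = Counter(label for _, label in training).most_common(1)[0][0]

    def classify(x):
        if x in table:
            # Seen in training: return the most frequent label recorded for x.
            return table[x].most_common(1)[0][0]
        return majority

    return classify

# Hypothetical toy data, for illustration only.
train = [((1, 1), "yes"), ((1, 2), "yes"), ((2, 1), "no"), ((2, 2), "yes")]
cs = build_reference_classifier(train)
seen_result = cs((2, 1))    # looked up in the table
unseen_result = cs((3, 3))  # not in the table, falls back to the majority class
```

Any learned classifier can then be compared against `cs` on held-out data; by the slide's definition, one that does worse is a bad classifier.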

Many Techniques
• Decision trees
• Linear regression
• Neural networks
• k-nearest neighbour
• Naïve Bayesian classifiers
• Support Vector Machines
• and many more ...

Regression for Numeric Prediction
• Linear regression is a statistical technique used when the class and all the attributes are numeric.
• $y = \alpha + \beta x$, where $\alpha$ and $\beta$ are regression coefficients
• We need to use instances $\langle x_i, y_i \rangle$ to find $\alpha$ and $\beta$
  • by minimizing SSE (least squares)
  • $\mathrm{SSE} = \sum_i (y_i - y_i')^2 = \sum_i (y_i - \alpha - \beta x_i)^2$, where $y_i'$ is the predicted value for $x_i$
• Extensions
  • Multiple regression
  • Piecewise linear regression
  • Polynomial regression
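
For the single-attribute case, minimizing SSE has a well-known closed form: β is the ratio of the x-y co-deviation sum to the x deviation sum of squares, and α is the mean of y minus β times the mean of x. The sketch below uses that closed form; the function names and the data are illustrative assumptions.

```python
def fit_line(xs, ys):
    """Least-squares estimates (alpha, beta) for y = alpha + beta * x."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    beta = sxy / sxx            # undefined if all x values are identical
    alpha = y_mean - beta * x_mean
    return alpha, beta

def sse(xs, ys, alpha, beta):
    """Sum of squared errors of the fitted line on the given instances."""
    return sum((y - alpha - beta * x) ** 2 for x, y in zip(xs, ys))

# Hypothetical data generated from y = 1 + 2x, so the fit should recover those values.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]
alpha, beta = fit_line(xs, ys)
error = sse(xs, ys, alpha, beta)
```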

Nearest Neighbor
• Also called instance-based learning
• Algorithm
  • Given a new instance x,
  • find its nearest neighbor <x’,y’>
  • Return y’ as the class of x
• Distance measures
  • Normalization?!
• Some interesting questions
  • What’s its time complexity?
  • Does it learn?

Nearest Neighbor (2)
• Dealing with noise: k-nearest neighbor
  • Use more than 1 neighbor
  • How many neighbors?
  • Weighted nearest neighbors
• How to speed up?
  • Huge storage
  • Use representatives (a problem of instance selection)
  • Sampling
  • Grid
  • Clustering
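
A minimal sketch of k-nearest neighbor with an unweighted majority vote, assuming Euclidean distance on numeric attributes that are already on comparable scales (no normalization is done here). The toy data contain one deliberately mislabeled point to show why more than one neighbor helps with noise; the data and names are hypothetical.

```python
import math
from collections import Counter

def knn_classify(training, x, k=3):
    """training: list of (point, label); returns the majority label of the k nearest points."""
    # Brute force: compute the distance to every stored instance, then sort.
    neighbors = sorted(training, key=lambda pair: math.dist(pair[0], x))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]

# Hypothetical data: the last point sits in the "a" cluster but is labeled "b" (noise).
train = [
    ((1.0, 1.0), "a"), ((1.2, 0.8), "a"), ((0.9, 1.1), "a"),
    ((5.0, 5.0), "b"), ((5.2, 4.9), "b"),
    ((1.1, 1.0), "b"),
]
query = (1.1, 0.95)
with_one_neighbor = knn_classify(train, query, k=1)     # follows the noisy point
with_three_neighbors = knn_classify(train, query, k=3)  # outvoted by its neighbors
```

The sort over all stored instances also makes the cost per query visible, which is what the speed-up ideas on the slide (representatives, sampling, grids, clustering) try to reduce.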

Naïve Bayes Classification
• This is a direct application of Bayes’ rule
  • $P(C \mid x) = P(x \mid C)\,P(C) / P(x)$, where $x$ is a vector $(x_1, x_2, \ldots, x_n)$
• With the true probabilities, that’s the best classifier you can ever build
  • You don’t even need to select features; it takes care of that automatically
• But, there are problems
  • There are a limited number of instances
  • How to estimate P(x|C)?

NBC (2)
• Assume conditional independence between the xi’s
• We have $P(C \mid x) \propto P(x_1 \mid C) \cdots P(x_i \mid C) \cdots P(x_n \mid C)\,P(C)$ (the common factor $1/P(x)$ is dropped)
• How good is it in reality?
• Let’s build one NBC for a very simple data set
  • Estimate the priors and conditional probabilities with the training data
  • P(C=1) = ? P(C=2) = ? P(x1=1|C=1) = ? P(x1=2|C=1) = ? …
  • What is the class for x=(1,2,1)?
  • $P(1 \mid x) \propto P(x_1{=}1 \mid 1)\,P(x_2{=}2 \mid 1)\,P(x_3{=}1 \mid 1)\,P(1)$
  • $P(2 \mid x) \propto P(x_1{=}1 \mid 2)\,P(x_2{=}2 \mid 2)\,P(x_3{=}1 \mid 2)\,P(2)$
  • What is the class for (1,2,2)?
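
The estimation and scoring steps can be sketched as follows. The slide's own data set is not reproduced in this transcript, so the eight training rows below are hypothetical; probabilities are plain relative frequencies with no smoothing, which is an assumption of this sketch (a value never seen with a class gives that class a score of zero).

```python
from collections import Counter, defaultdict

def train_nbc(rows):
    """rows: list of (x, c) with x a tuple of categorical values and c the class."""
    class_counts = Counter(c for _, c in rows)
    value_counts = defaultdict(Counter)  # (attribute index, class) -> counts of values
    for x, c in rows:
        for i, v in enumerate(x):
            value_counts[(i, c)][v] += 1
    return class_counts, value_counts

def nbc_scores(model, x):
    """Unnormalized scores P(x1|C) ... P(xn|C) P(C) for every class C."""
    class_counts, value_counts = model
    n = sum(class_counts.values())
    scores = {}
    for c, count in class_counts.items():
        score = count / n                              # prior P(C)
        for i, v in enumerate(x):
            score *= value_counts[(i, c)][v] / count   # conditional P(xi = v | C)
        scores[c] = score
    return scores

def nbc_classify(model, x):
    scores = nbc_scores(model, x)
    return max(scores, key=scores.get)

# Hypothetical training data: three attributes with values 1 or 2, classes 1 and 2.
rows = [
    ((1, 2, 1), 1), ((1, 1, 1), 1), ((2, 2, 1), 1), ((1, 2, 2), 1),
    ((2, 1, 2), 2), ((2, 2, 2), 2), ((1, 1, 2), 2), ((2, 1, 1), 2),
]
model = train_nbc(rows)
scores_121 = nbc_scores(model, (1, 2, 1))
class_121 = nbc_classify(model, (1, 2, 1))
class_122 = nbc_classify(model, (1, 2, 2))
```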

Example of NBC

Golf Data

Decision Trees
• A decision tree (root node: Outlook)
  • Outlook = sunny: test Humidity
    • high: NO
    • normal: YES
  • Outlook = overcast: YES
  • Outlook = rain: test Wind
    • strong: NO
    • weak: YES

How to ‘grow’ a tree?
• Randomly: Random Forests (Breiman, 2001)
• What are the criteria to build a tree?
  • Accurate
  • Compact
• A straightforward way to grow is
  • Pick an attribute
  • Split data according to its values
  • Recursively do the first two steps until
    • No data left
    • No feature left
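
The straightforward procedure can be sketched as a short recursion. Two details are assumptions of this sketch rather than part of the slide: the attribute is picked naively (the first one remaining), and the recursion also stops when a node is pure. The rows are hypothetical and are not the golf data.

```python
from collections import Counter

def majority(rows):
    return Counter(label for _, label in rows).most_common(1)[0][0]

def grow(rows, attributes):
    """rows: list of (dict attribute -> value, label). Returns a label (leaf) or (attribute, branches)."""
    labels = {label for _, label in rows}
    if len(labels) == 1 or not attributes:
        # Pure node (assumed stopping rule) or no feature left: make a leaf.
        return majority(rows)
    attr, rest = attributes[0], attributes[1:]  # assumption: naive attribute choice
    branches = {}
    for value in sorted({x[attr] for x, _ in rows}):
        subset = [(x, y) for x, y in rows if x[attr] == value]
        branches[value] = grow(subset, rest)
    return (attr, branches)

def predict(tree, x):
    # Follow branches until a leaf label is reached; unseen values raise KeyError.
    while isinstance(tree, tuple):
        attr, branches = tree
        tree = branches[x[attr]]
    return tree

# Hypothetical rows, for illustration only.
rows = [
    ({"outlook": "sunny", "wind": "weak"}, "no"),
    ({"outlook": "sunny", "wind": "strong"}, "no"),
    ({"outlook": "overcast", "wind": "weak"}, "yes"),
    ({"outlook": "rain", "wind": "weak"}, "yes"),
    ({"outlook": "rain", "wind": "strong"}, "no"),
]
tree = grow(rows, ["outlook", "wind"])
prediction = predict(tree, {"outlook": "rain", "wind": "strong"})
```

Replacing the naive attribute choice with a purity-based one is what turns this into the usual greedy tree learner.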

Discussion
• There are many possible trees
  • Let’s try it on the golf data
• How to find the most compact one
  • that is consistent with the data?
• Why the most compact?
  • Occam’s razor principle
• Issue of efficiency w.r.t. optimality
  • One attribute at a time or …

Grow a good tree efficiently
• The heuristic: find commonality in feature values associated with class values
  • To build a compact tree generalized from the data
• It means we look for features and splits that can lead to pure leaf nodes.
• Is it a good heuristic?
  • What do you think?
  • How to judge it?
• Is it really efficient?
  • How to implement it?

Let’s grow one
• Measuring the purity of a data set: Entropy
• Information gain (see the brief review)
• Choose the feature with max gain
[Figure: root node Outlook (7,7) with branches Sun (5), OCa (4), Rain (5)]
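
Entropy and information gain can be computed directly from class labels. In this sketch the entropy of a label list is the negated sum of p log2 p over the class proportions p, and the gain of an attribute is the root entropy minus the size-weighted entropy of the subsets it induces. The rows are hypothetical and are not the golf data from the slides.

```python
import math
from collections import Counter

def entropy(labels):
    """Entropy (in bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def information_gain(rows, attr):
    """rows: list of (dict attribute -> value, label)."""
    n = len(rows)
    remainder = 0.0
    for value in {x[attr] for x, _ in rows}:
        subset = [y for x, y in rows if x[attr] == value]
        remainder += len(subset) / n * entropy(subset)  # weighted entropy after the split
    return entropy([y for _, y in rows]) - remainder

# Hypothetical rows, for illustration only.
rows = [
    ({"outlook": "sunny", "wind": "weak"}, "no"),
    ({"outlook": "sunny", "wind": "strong"}, "no"),
    ({"outlook": "overcast", "wind": "weak"}, "yes"),
    ({"outlook": "rain", "wind": "weak"}, "yes"),
    ({"outlook": "rain", "wind": "strong"}, "no"),
]
gains = {a: information_gain(rows, a) for a in ("outlook", "wind")}
best = max(gains, key=gains.get)  # the feature with max gain
```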

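Different numbers of values
• Different attributes can have varied numbers of values
• Some treatments
  • Removing useless attributes before learning
  • Binarization
  • Discretization
• Gain-ratio is another practical solution
  • $\mathrm{Gain}(A) = \mathrm{Info}(T) - \mathrm{Info}_A(T)$, where $\mathrm{Info}(T)$ is the entropy at the root and $\mathrm{Info}_A(T)$ is the weighted entropy after splitting $T$ on attribute $A$ into subsets $T_i$
  • $\text{Split-Info}(A) = -\sum_i \frac{|T_i|}{|T|} \log_2 \frac{|T_i|}{|T|}$
  • $\text{Gain-ratio}(A) = \mathrm{Gain}(A) / \text{Split-Info}(A)$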
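
A small sketch of gain ratio shows why it helps with many-valued attributes. In the hypothetical data below, an ID-like attribute and a binary attribute both achieve the maximum gain, but the ID-like one has a larger split information and therefore a lower gain ratio. Returning 0.0 when the split information is zero (an attribute with a single value) is an assumption of this sketch.

```python
import math
from collections import Counter

def entropy_of_counts(counts):
    """Entropy (in bits) of a distribution given as a collection of counts."""
    n = sum(counts)
    return -sum((c / n) * math.log2(c / n) for c in counts if c > 0)

def gain_ratio(rows, attr):
    """rows: list of (dict attribute -> value, label)."""
    n = len(rows)
    root_info = entropy_of_counts(Counter(y for _, y in rows).values())
    partitions = {}
    for x, y in rows:
        partitions.setdefault(x[attr], []).append(y)
    info_attr = sum(
        len(p) / n * entropy_of_counts(Counter(p).values()) for p in partitions.values()
    )
    gain = root_info - info_attr
    split_info = entropy_of_counts([len(p) for p in partitions.values()])
    return gain / split_info if split_info > 0 else 0.0  # assumed convention for single-valued attributes

# Hypothetical rows: "id" is unique per row, "wind" is binary.
rows = [
    ({"id": 1, "wind": "weak"}, "yes"),
    ({"id": 2, "wind": "weak"}, "yes"),
    ({"id": 3, "wind": "strong"}, "no"),
    ({"id": 4, "wind": "strong"}, "no"),
]
ratio_id = gain_ratio(rows, "id")
ratio_wind = gain_ratio(rows, "wind")
```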

Another kind of problem
• A difficult problem. Why is it difficult?
• Similar ones are the Parity and Majority problems.
XOR problem (two binary inputs x1, x2 and the class)
• x1 = 0, x2 = 0: class 0
• x1 = 0, x2 = 1: class 1
• x1 = 1, x2 = 0: class 1
• x1 = 1, x2 = 1: class 0
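
One way to see the difficulty for a greedy learner that tests one attribute at a time: on the XOR table, splitting on either input alone leaves both subsets evenly mixed, so the information gain of each attribute is zero. The sketch below checks this; the function names are illustrative.

```python
import math
from collections import Counter

def entropy(labels):
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def gain(rows, i):
    """Information gain of splitting on the i-th input. rows: list of ((x1, x2), class)."""
    n = len(rows)
    remainder = 0.0
    for value in {x[i] for x, _ in rows}:
        subset = [y for x, y in rows if x[i] == value]
        remainder += len(subset) / n * entropy(subset)
    return entropy([y for _, y in rows]) - remainder

# The XOR table from the slide.
xor_rows = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
gains = [gain(xor_rows, i) for i in (0, 1)]  # neither input is informative on its own
```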

Tree Pruning
• Overfitting: the model fits the training data too well, but won’t work well for unseen data.
• Pruning is an effective approach to avoid overfitting and to obtain a more compact tree (easier to understand)
• Two general ways to prune
  • Pre-pruning: stop splitting further
    • Is there any significant difference in classification accuracy before and after division?
  • Post-pruning: trim back

Rules from Decision Trees
• Two types of rules
  • Order sensitive (more compact, less efficient)
  • Order insensitive
• The most straightforward way is …
• Class-based method
  • Group rules according to classes
  • Select most general rules (or remove redundant ones)
• Data-based method
  • Select one rule at a time (keep the most general one)
  • Work on the remaining data until all data is covered

Variants of Decision Trees and Rules
• Tree stumps
• Holte’s 1R rules (1992)
  • For each attribute A
    • Sort according to its values v
    • Find the most frequent class value c for each v
    • Break ties with a coin flip
  • Output the most accurate rule, of the form: if A=v then c
  • An example (the Golf data)
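
The 1R steps above can be sketched as follows. This is a minimal reading of the slide, not Holte's full procedure: it handles categorical attributes only, returns the value-to-class rules of the single most accurate attribute, and keeps the first attribute when two are equally accurate (an assumption). The rows are hypothetical and are not the golf data.

```python
import random
from collections import Counter, defaultdict

def one_r(rows, attributes, rng=random):
    """rows: list of (dict attribute -> value, label). Returns (attribute, rules, training accuracy)."""
    best = None
    for attr in attributes:
        by_value = defaultdict(Counter)
        for x, y in rows:
            by_value[x[attr]][y] += 1
        rules, correct = {}, 0
        for value, counts in by_value.items():
            top = max(counts.values())
            winners = sorted(c for c, k in counts.items() if k == top)
            rules[value] = rng.choice(winners)  # coin flip among tied classes
            correct += top
        if best is None or correct > best[2]:   # assumption: first attribute wins ties
            best = (attr, rules, correct)
    attr, rules, correct = best
    return attr, rules, correct / len(rows)

# Hypothetical rows, chosen so that no ties occur.
rows = [
    ({"outlook": "sunny", "wind": "weak"}, "no"),
    ({"outlook": "sunny", "wind": "strong"}, "no"),
    ({"outlook": "overcast", "wind": "weak"}, "yes"),
    ({"outlook": "overcast", "wind": "strong"}, "yes"),
    ({"outlook": "rain", "wind": "weak"}, "yes"),
    ({"outlook": "rain", "wind": "strong"}, "no"),
    ({"outlook": "rain", "wind": "weak"}, "yes"),
]
attr, rules, accuracy = one_r(rows, ["outlook", "wind"])
```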

Handling Large Size Data
• When data simply cannot fit in memory …
  • Is it a big problem?
• Three representative approaches
  • Smart data structures to avoid unnecessary recalculation
    • Hash trees
    • SPRINT
  • Sufficient statistics
    • AVC-set (Attribute-Value, Class label) to summarize the class distribution for each attribute
    • Example: RainForest
  • Parallel processing
    • Make data parallelizable

Ensemble Methods
• A group of classifiers
  • Hybrid (Stacking)
  • Single type
• Strong vs. weak learners
• A good ensemble
  • Accuracy
  • Diversity
• Some major approaches to form ensembles
  • Bagging
  • Boosting
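
As a rough illustration of bagging: each ensemble member is trained on a bootstrap sample (drawn with replacement, same size as the training set), and predictions are combined by majority vote. The base learner here, a 1-nearest-neighbor classifier on a single numeric attribute, is a hypothetical stand-in chosen to keep the sketch short; the data, names, and the choice of 11 members are likewise assumptions.

```python
import random
from collections import Counter

def train_1nn(rows):
    """Hypothetical base learner: 1-nearest neighbor on a single numeric attribute."""
    def predict(x):
        return min(rows, key=lambda pair: abs(pair[0] - x))[1]
    return predict

def bagging(train_fn, rows, n_models=11, seed=0):
    rng = random.Random(seed)
    models = []
    for _ in range(n_models):
        # Bootstrap sample: same size as the training set, drawn with replacement.
        sample = [rng.choice(rows) for _ in rows]
        models.append(train_fn(sample))

    def predict(x):
        votes = Counter(model(x) for model in models)
        return votes.most_common(1)[0][0]

    return predict

# Hypothetical, well-separated one-dimensional data.
rows = [(0.8, "a"), (0.9, "a"), (1.0, "a"), (1.1, "a"), (1.2, "a"),
        (4.8, "b"), (4.9, "b"), (5.0, "b"), (5.1, "b"), (5.2, "b")]
ensemble = bagging(train_1nn, rows)
label_low, label_high = ensemble(1.05), ensemble(4.95)
```

The different bootstrap samples are what give the members diversity; accuracy comes from the base learner itself.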

Bibliography
• I.H. Witten and E. Frank. Data Mining – Practical Machine Learning Tools and Techniques with Java Implementations. Morgan Kaufmann, 2000.
• M. Kantardzic. Data Mining – Concepts, Models, Methods, and Algorithms. IEEE, 2003.
• J. Han and M. Kamber. Data Mining – Concepts and Techniques. Morgan Kaufmann, 2001.
• D. Hand, H. Mannila, P. Smyth. Principles of Data Mining. MIT, 2001.
• T. G. Dietterich. Ensemble Methods in Machine Learning. In J. Kittler and F. Roli (eds.), 1st Intl Workshop on Multiple Classifier Systems, pp. 1-15, Springer-Verlag, 2000.