Bringing Co-processor Performance to Every Programmer

This presentation describes an approach to making parallel processing accessible to everyday programmers: adding data-parallel array types to existing high-level languages. It covers the programming model and its operations, worked examples, and an implementation that targets GPUs and multi-core CPUs. The goal is to simplify parallelism for everyday applications while still delivering compelling performance. Because the array types are implemented as a library, programmers can use them now.

Presentation Transcript

Bringing Co-processor Performance to Every Programmer
David Tarditi, Sidd Puri, Jose Oglesby
Microsoft Research
Presented by Turner Whitted

Outline
• Basics – why, what, how
• Programming model, operations, capabilities
• Examples
• Implementation
• Performance
• Directions

Our goal
• Make parallel processing accessible to everyday programmers
• And available for everyday applications

Approach
• Extend existing high-level languages with new data-parallel array types
  • Ease of programming
  • Implemented as a library so programmers can use it now
  • Eventually fold into base languages
• Build implementations with compelling performance
  • Target GPUs and multi-core CPUs
• Create examples and applications
  • Educate programmers, provide sample code

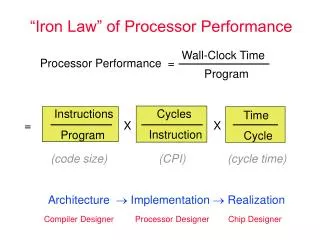

Why data parallel?
• It’s the easiest parallel programming model.
• It’s easy to debug.
• It’s easy to adapt to massive parallelism.
  • Scaling to hundreds or thousands of parallel units requires no mindset, design, or code changes.
• There’s widespread application experience in the scientific, financial, media, and graphics communities.
  • APL/Parallel Fortran/Connection Machines/Stream programming
• In developing parallel software, the data organization is much more important than parallelism in the code.

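Data-parallel array types
[Diagram: on the CPU side, normal arrays Array1[ … ] through ArrayN[ … ] and data-parallel arrays DPArray1[ … ] through DPArrayN[ … ], operated on by library_calls(); on the GPU side, textures txtr1[ … ] through txtrN[ … ], operated on by pix_shdrs(); an API/driver/hardware layer between CPU and GPU.]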

Explicit coercion
Explicit coercions between data-parallel arrays and normal arrays trigger GPU execution.
[Diagram repeated from the “Data-parallel array types” slide.]

Functional style
Functional style: each operation produces a new data-parallel array.
[Diagram repeated from the “Data-parallel array types” slide.]

Types of operations
Restrict operations to allow data-parallel programming: no aliasing, pointer arithmetic, or individual element access.
[Diagram repeated from the “Data-parallel array types” slide.]
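The slides above (explicit coercion, functional style, restricted operations) suggest a deferred-execution design: operations on data-parallel arrays only describe work, and converting back to a normal array is what runs it. The following is a minimal CPU-only sketch of that idea, not the Accelerator implementation. `LazyFloatArray`, `Evaluations`, and `Demo` are hypothetical names introduced for illustration, and the sketch runs lambdas where the real library would generate pixel shader code.

```csharp
using System;

// Hypothetical illustration only: this is NOT the Accelerator API.
public sealed class LazyFloatArray
{
    private readonly int length;
    private readonly Func<float[]> compute;   // deferred work for this node

    // Counts how many element-wise operations have actually been executed.
    public static int Evaluations { get; private set; }

    private LazyFloatArray(int length, Func<float[]> compute)
    {
        this.length = length;
        this.compute = compute;
    }

    // Coercion from a normal array. The data is copied, so later writes to
    // the source array cannot alias the data-parallel value.
    public LazyFloatArray(float[] source)
    {
        float[] copy = (float[])source.Clone();
        length = copy.Length;
        compute = () => copy;
    }

    // Functional style: each operator returns a new array and leaves its
    // operands untouched. No arithmetic happens here.
    public static LazyFloatArray operator +(LazyFloatArray a, LazyFloatArray b)
        => Combine(a, b, (x, y) => x + y);

    public static LazyFloatArray operator *(LazyFloatArray a, LazyFloatArray b)
        => Combine(a, b, (x, y) => x * y);

    private static LazyFloatArray Combine(
        LazyFloatArray a, LazyFloatArray b, Func<float, float, float> op)
    {
        if (a.length != b.length) throw new ArgumentException("Shapes must match.");
        return new LazyFloatArray(a.length, () =>
        {
            float[] x = a.compute();
            float[] y = b.compute();
            float[] result = new float[x.Length];
            for (int i = 0; i < result.Length; i++) result[i] = op(x[i], y[i]);
            Evaluations++;
            return result;
        });
    }

    // Coercion back to a normal array: the only point where work is done.
    // There is deliberately no indexer for individual element access.
    public float[] ToArray() => (float[])compute().Clone();
}

public static class Demo
{
    public static void Main()
    {
        var a = new LazyFloatArray(new float[] { 1f, 2f, 3f });
        var b = new LazyFloatArray(new float[] { 10f, 20f, 30f });

        LazyFloatArray c = a + b * b;                   // builds a graph only
        Console.WriteLine(LazyFloatArray.Evaluations);  // prints 0

        float[] result = c.ToArray();                   // evaluation happens here
        Console.WriteLine(string.Join(", ", result));   // prints 101, 402, 903
        Console.WriteLine(LazyFloatArray.Evaluations);  // prints 2
    }
}
```

Deferring the work until the coercion gives an implementation the whole expression at once, which is what would let a compiler fuse several operations into a single GPU pass.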

Operations
• Array creation
• Element-wise arithmetic operations: +, *, -, etc.
• Element-wise boolean operations: and, or, >, <, etc.
• Type coercions: integer to float, etc.
• Reductions/scans: sum, product, max, etc.
• Transformations: expand, pad, shift, gather, scatter, etc.
• Basic linear algebra: inner product, outer product
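To make a few of these operation categories concrete, here is a small sketch of their meaning on plain `float[]` arrays. It is a sequential CPU emulation written for illustration; `DataParallelSketch` and its method names are hypothetical and are not the library's API. The scan shown is an inclusive prefix sum, which is an assumption, since the slide does not say which variant the library provides.

```csharp
using System;

// Hypothetical helper names; a plain-array emulation, not the Accelerator library.
public static class DataParallelSketch
{
    // Element-wise arithmetic: each output element depends only on the inputs
    // at the same index, so every iteration is independent of the others.
    public static float[] Add(float[] a, float[] b)
    {
        if (a.Length != b.Length) throw new ArgumentException("Shapes must match.");
        float[] result = new float[a.Length];
        for (int i = 0; i < a.Length; i++) result[i] = a[i] + b[i];
        return result;
    }

    // Element-wise boolean operation: produces one flag per element.
    public static bool[] GreaterThan(float[] a, float[] b)
    {
        if (a.Length != b.Length) throw new ArgumentException("Shapes must match.");
        bool[] result = new bool[a.Length];
        for (int i = 0; i < a.Length; i++) result[i] = a[i] > b[i];
        return result;
    }

    // Reduction: collapses the whole array to a single value.
    public static float Sum(float[] a)
    {
        float total = 0f;
        foreach (float x in a) total += x;
        return total;
    }

    // Scan (inclusive prefix sum): result[i] is the sum of a[0..i].
    public static float[] PrefixSum(float[] a)
    {
        float[] result = new float[a.Length];
        float running = 0f;
        for (int i = 0; i < a.Length; i++)
        {
            running += a[i];
            result[i] = running;
        }
        return result;
    }

    public static void Main()
    {
        float[] a = { 1f, 2f, 3f, 4f };
        float[] b = { 4f, 3f, 2f, 1f };
        Console.WriteLine(string.Join(", ", Add(a, b)));          // 5, 5, 5, 5
        Console.WriteLine(string.Join(", ", GreaterThan(a, b)));  // False, False, True, True
        Console.WriteLine(Sum(a));                                // 10
        Console.WriteLine(string.Join(", ", PrefixSum(a)));       // 1, 3, 6, 10
    }
}
```

Every method returns a fresh array and never modifies its inputs, matching the functional style described earlier.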

Example: 2-D convolution

```csharp
float[,] Blur(float[,] array, float[] kernel)
{
    using (DFPA parallelArray = new DFPA(array))
    {
        FPA resultX = new FPA(0.0f, parallelArray.Shape);
        for (int i = 0; i < kernel.Length; i++)
        {
            // Convolve in X direction.
            resultX += parallelArray.Shift(0,i) * kernel[i];
        }
        FPA resultY = new FPA(0.0f, parallelArray.Shape);
        for (int i = 0; i < kernel.Length; i++)
        {
            // Convolve in Y direction.
            resultY += resultX.Shift(i,0) * kernel[i];
        }
        using (DFPA result = resultY.Eval())
        {
            float[,] resultArray;
            result.ToArray(out resultArray);
            return resultArray;
        }
    }
}
```
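For comparison, the same two-pass (separable) convolution can be written with explicit loops over a normal array. This is a hedged CPU sketch, not code from the presentation. It assumes that `Shift(0, i)` makes each position read the element `i` columns further along, and that reads past the edge clamp to the nearest edge element; the slide does not specify either convention. `BlurReference` and `Clamp` are hypothetical names.

```csharp
using System;

// Hypothetical CPU reference; the shift direction and the edge clamping
// are assumptions, not documented behavior of the library.
public static class BlurReference
{
    // Keeps an index inside [0, max].
    private static int Clamp(int value, int max) => Math.Max(0, Math.Min(value, max));

    public static float[,] Blur(float[,] array, float[] kernel)
    {
        int rows = array.GetLength(0);
        int cols = array.GetLength(1);
        float[,] resultX = new float[rows, cols];
        float[,] resultY = new float[rows, cols];

        // Pass 1: convolve along the second dimension.
        for (int r = 0; r < rows; r++)
            for (int c = 0; c < cols; c++)
                for (int i = 0; i < kernel.Length; i++)
                    resultX[r, c] += array[r, Clamp(c + i, cols - 1)] * kernel[i];

        // Pass 2: convolve the intermediate result along the first dimension.
        for (int r = 0; r < rows; r++)
            for (int c = 0; c < cols; c++)
                for (int i = 0; i < kernel.Length; i++)
                    resultY[r, c] += resultX[Clamp(r + i, rows - 1), c] * kernel[i];

        return resultY;
    }

    public static void Main()
    {
        // A constant image blurred with a kernel that sums to 1 stays constant.
        float[,] image = { { 2f, 2f, 2f }, { 2f, 2f, 2f } };
        float[] kernel = { 0.25f, 0.5f, 0.25f };
        float[,] blurred = Blur(image, kernel);
        Console.WriteLine(blurred[0, 0]);  // prints 2
    }
}
```

The loop version spells out three nested loops per pass, whereas the data-parallel version expresses each pass as one whole-array expression per kernel tap, leaving the per-element iteration to the library.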

What’s built
• A data-parallel library for .NET
  • Simple, high-level set of operations
• A just-in-time compiler that compiles on-the-fly to GPU pixel shader code
  • Runs on top of product CLR
• Examples and applications
  • Versions using the library, C, and hand-written pixel shader

Implementation details
• See David Tarditi, Sidd Puri, Jose Oglesby, “Accelerator: using data-parallelism to program GPUs for general purpose uses,” to appear in Proceedings of ASPLOS XII, Oct. 2006.

Versions
Three implementations:
• Accelerator, written in C#
• Hand-written pixel shader 3.0 code
• C (running on CPU)
  • Uses Intel’s Math Kernel Library for sum, matrix-multiply, matrix-vector, and part of neural net training
All three produce verifiably equivalent output (within epsilon).
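One way such an equivalence check could look is sketched below. The slide does not say how the comparison was done, so the absolute-difference criterion, the tolerance value in the example, and the names `OutputCheck` and `EquivalentWithinEpsilon` are all assumptions made for illustration.

```csharp
using System;

// Hypothetical check; the presentation does not describe the actual method.
public static class OutputCheck
{
    // Returns true when both arrays have the same length and every pair of
    // corresponding elements differs by at most epsilon (absolute difference).
    // A NaN in either array makes the comparison fail.
    public static bool EquivalentWithinEpsilon(float[] expected, float[] actual, float epsilon)
    {
        if (expected.Length != actual.Length) return false;
        for (int i = 0; i < expected.Length; i++)
        {
            float difference = Math.Abs(expected[i] - actual[i]);
            // Written as a negated "<=" so that NaN is rejected.
            if (!(difference <= epsilon)) return false;
        }
        return true;
    }

    public static void Main()
    {
        float[] cpuResult = { 1.0f, 2.0f, 3.0f };
        float[] gpuResult = { 1.0f, 2.00001f, 3.0f };
        Console.WriteLine(EquivalentWithinEpsilon(cpuResult, gpuResult, 1e-3f));  // True
        Console.WriteLine(EquivalentWithinEpsilon(cpuResult, gpuResult, 1e-7f));  // False
    }
}
```

A tolerance is needed because different hardware and compilers may order or round floating-point operations differently, so bit-for-bit equality is too strict a test.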

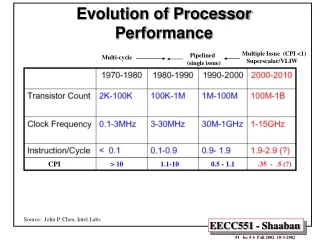

Hardware configuration
• CPU: 3.2 GHz P4, with 16 KB L1 cache, 1 MB L2 cache
• Machine(s): Dell OptiPlex GX280, 1 GB memory, 400ns, PCI Express bus
• GPUs:
  • Nvidia GeForce 6800 Ultra with 256 MB, Brand: eVGA
  • Nvidia GeForce 7800 GTX with 256 MB, Brand: eVGA
  • ATI X850 with 256 MB
  • ATI X1800 XT

Software configuration
• C++
  • Intel Math Kernel Library 7.0
  • Intel C++ Compiler 9.0 for Windows
  • Visual Studio 2005 (“Whidbey”) Beta 2
  • DirectX 9.0 (June 2005 update)
• C#
  • .NET Framework 2.0.50215
  • DirectX for Managed Code 1.0.2902.0/1.0.2906.0
• Compiler flags used:
  • Intel C++: /Ox
  • Microsoft C++: /Ox /fp:fast
  • C#: /optimize+

Lessons learned/next steps
• Need a non-graphics interface
  • For more flexibility
  • Less execution overhead
• Need native GPU support
• Replace library with language built-ins
• Need to learn from users
• Retarget for multi-core

Additional information
• Tech Report, “Accelerator: simplified programming of graphics processing units for general-purpose uses via data parallelism,” MSR-TR-2005-184
  • Available at http://research.microsoft.com
• Download available from http://research.microsoft.com/downloads
• For questions contact msraccde@microsoft.com

Acknowledgement
• Jim Kajiya, Rick Szeliski, Raymond Endres, David Williams
