CACTUS-Clustering Categorical Data Using Summaries




CACTUS-Clustering Categorical Data Using Summaries • Advisor: Dr. Hsu • Graduate: Min-Hung Lin • IDSL seminar, 2001/10/30

Outline • Motivation • Objective • Related Work • Definitions • CACTUS • Performance Evaluation • Conclusions • Comments

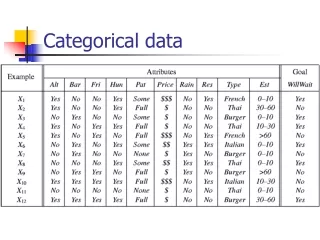

Motivation • Clustering data with categorical attributes has received growing attention • Previous algorithms do not give a formal description of the clusters they discover • Some of them need to post-process the output of the algorithm to identify the final clusters

Objective • Introduce a novel formalization of a cluster for categorical attributes. • Describe a fast summarization-based algorithm CACTUS that discovers clusters. • Evaluate the performance of CACTUS on synthetic and real datasets.

Related Work • EM algorithm [Dempster et al., 1977] • Iterative clustering technique • STIRR algorithm [Gibson et al., 1998] • Iterative algorithm based on non-linear dynamical systems • ROCK algorithm [Guha et al., 1999] • Hierarchical clustering algorithm

Formal Definition of a Cluster (cont’d) • C_i is the cluster-projection of C on A_i • C is called a sub-cluster if it satisfies conditions (1) and (3) • A cluster C over a subset S of the attributes is called a subspace cluster on S; if |S| = k then C is called a k-cluster

Result • STIRR fails to discover • clusters consisting of overlapping cluster-projections on any attribute • clusters where two or more clusters share the same cluster-projection • CACTUS correctly discovers all clusters

CACTUS • Three-phase clustering algorithm • Summarization Phase • Compute the summary information • Clustering Phase • Discover a set of candidate clusters • Validation Phase • Determine the actual set of clusters

Summarization Phase • Inter-attribute Summaries • Intra-attribute Summaries
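The two kinds of summaries can be illustrated with a minimal Python sketch. It rests on assumptions that the slides do not spell out: two values are treated as strongly connected when their co-occurrence count exceeds `alpha` times the count expected under attribute independence, and the intra-attribute similarity of two values of one attribute is taken to be the number of values of another attribute strongly connected to both. The function names and the in-memory list of tuples are hypothetical.

```python
from collections import Counter
from itertools import combinations

def inter_attribute_summaries(rows, alpha):
    """For each attribute pair (i, j), keep the value pairs whose
    co-occurrence count exceeds alpha times the expected count."""
    n_rows = len(rows)
    n_attrs = len(rows[0])
    # Attribute domains are taken from the data itself (assumption).
    domains = [{r[i] for r in rows} for i in range(n_attrs)]
    counts = {pair: Counter() for pair in combinations(range(n_attrs), 2)}
    for row in rows:  # one pass over the tuples
        for i, j in counts:
            counts[(i, j)][(row[i], row[j])] += 1
    summaries = {}
    for (i, j), ctr in counts.items():
        expected = n_rows / (len(domains[i]) * len(domains[j]))
        summaries[(i, j)] = {p: c for p, c in ctr.items() if c > alpha * expected}
    return summaries

def intra_attribute_summary(summary_ij):
    """Similarity of two values of attribute i with respect to attribute j:
    how many values of j are strongly connected to both."""
    neighbours = {}
    for a, b in summary_ij:
        neighbours.setdefault(a, set()).add(b)
    similarity = {}
    for a1, a2 in combinations(sorted(neighbours), 2):
        shared = len(neighbours[a1] & neighbours[a2])
        if shared:
            similarity[(a1, a2)] = shared
    return similarity
```

In this sketch the intra-attribute summary is derived from the inter-attribute summary alone, so no additional pass over the tuples is needed for it.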

Clustering Phase • Computing cluster-projections on attributes • Level-wise synthesis of clusters

Computing Cluster-Projections on Attributes • Step 1: pairwise cluster-projection • Step 2: intersection

Computing Cluster-Projections on Attributes (cont’d) Cluster-projection
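The two steps can be sketched in Python as follows. This is a brute-force illustration under stated assumptions, not the procedure from the talk: a pairwise cluster-projection is modelled as a maximal set of values of one attribute that share at least one strongly connected value of the other attribute (the left side of a maximal biclique), the support condition is ignored, and the `min_size` cutoff in the intersection step is an assumed parameter. All names are hypothetical.

```python
from itertools import combinations

def pairwise_cluster_projections(connected_pairs):
    """Step 1 (brute force): maximal sets of A_i values that are all
    strongly connected to a common, non-empty set of A_j values."""
    nbrs = {}
    for a, b in connected_pairs:
        nbrs.setdefault(a, set()).add(b)
    values = sorted(nbrs)
    projections = set()
    # Enumerates every subset of values, so the cost is exponential.
    for size in range(1, len(values) + 1):
        for subset in combinations(values, size):
            common = set.intersection(*(nbrs[a] for a in subset))
            if not common:
                continue
            # Maximal only if no further value also covers the common set.
            closure = {a for a in values if common <= nbrs[a]}
            if closure == set(subset):
                projections.add(frozenset(subset))
    return projections

def intersect_projections(projection_sets, min_size=2):
    """Step 2: intersect the pairwise projections obtained with respect
    to each other attribute, keeping sets with at least min_size values."""
    result = set(projection_sets[0])
    for other in projection_sets[1:]:
        result = {s & t for s in result for t in other}
    return {s for s in result if len(s) >= min_size}
```

The subset enumeration in step 1 is only workable for tiny domains, which is one way to see why the cost of this step matters.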

Level-wise synthesis of clusters (cont’d) • Generation procedure

Level-wise synthesis of clusters (cont’d) Candidate cluster
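A level-wise generation procedure of this kind can be sketched in Python. The sketch assumes that a candidate over the first k attributes is extended by a cluster-projection on the next attribute only when that projection is pairwise strongly connected to every projection already in the candidate; it checks connectivity only and leaves support to the validation phase. The function names and the `connected` lookup structure are hypothetical.

```python
def is_strongly_connected(ci, cj, connected_pairs):
    """True if every value of ci is strongly connected to every value of cj."""
    return all((a, b) in connected_pairs for a in ci for b in cj)

def synthesize_candidates(projections, connected):
    """projections[i]: list of cluster-projections (frozensets) on attribute i.
    connected[(i, j)]: set of strongly connected (a_i, a_j) pairs, i < j."""
    # Level 1: every cluster-projection on the first attribute.
    candidates = [(c,) for c in projections[0]]
    for k in range(1, len(projections)):
        extended = []
        for cand in candidates:
            for ck in projections[k]:
                # Keep the extension only if ck fits all earlier projections.
                if all(is_strongly_connected(cand[i], ck, connected[(i, k)])
                       for i in range(k)):
                    extended.append(cand + (ck,))
        candidates = extended
    return candidates
```

Each level uses only the pairwise connectivity information, so no pass over the tuples is needed while candidates are being generated.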

Validation • Some candidate clusters may not have enough support, because the 2-clusters from which a candidate is built may be supported by different sets of tuples. • Check if the support of each candidate cluster is greater than the threshold: α times the expected support of the cluster. • Only clusters whose support on D passes the threshold are retained.
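The threshold arithmetic can be sketched in Python. The slide does not say how the expected support is obtained; this sketch assumes attribute independence, so the expected support is the number of tuples multiplied by the fraction of each attribute domain covered by the cluster. The function name and parameters are hypothetical.

```python
from math import prod

def support_threshold(cluster, domain_sizes, n_tuples, alpha):
    """alpha times the expected support of a candidate cluster.

    cluster: one set of attribute values per attribute.
    domain_sizes: number of distinct values of each attribute.
    """
    # Fraction of the full value space covered by the cluster (assumption:
    # attributes are independent and values uniformly likely).
    fraction = prod(len(c) / d for c, d in zip(cluster, domain_sizes))
    return alpha * n_tuples * fraction
```

For example, a cluster covering 2 of 4 values on one attribute and 1 of 2 values on another, in a dataset of 1000 tuples with `alpha=3`, gives a threshold of 750.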

Validation Procedure • Set the supports of all candidate clusters to zero. • For each tuple t in D, increment the support of the candidate cluster to which t belongs. • At the end of the scan, delete all candidate clusters whose support is less than the threshold.
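The three steps above can be sketched in Python. The sketch assumes that a tuple belongs to a candidate when each of its values lies in the corresponding cluster-projection, that a tuple is counted for every candidate it belongs to, and that the per-candidate thresholds are computed beforehand. The function name and argument layout are hypothetical.

```python
def validate(candidates, tuples, thresholds):
    """Keep the candidates whose support reaches their threshold.

    candidates: list of clusters, each a tuple of value sets (one per attribute).
    tuples: iterable of data tuples, scanned exactly once.
    thresholds: one precomputed threshold per candidate.
    """
    supports = [0] * len(candidates)  # step 1: all supports start at zero
    for t in tuples:                  # step 2: single scan of the data
        for idx, cand in enumerate(candidates):
            if all(value in proj for value, proj in zip(t, cand)):
                supports[idx] += 1
    # Step 3: drop candidates whose support is below the threshold.
    return [cand for cand, s, th in zip(candidates, supports, thresholds)
            if s >= th]
```

The inner loop over candidates is the costly part when many candidates survive the clustering phase; an index from attribute values to candidates would be one way to reduce it.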

Extensions • Large Attribute Value Domains • Clusters in Subspaces

Performance Evaluation • Evaluation of CACTUS on Synthetic and Real Datasets • Compared the performance of CACTUS with the performance of STIRR

Synthetic Datasets • The test datasets were generated using the data generator developed by Gibson et al. (1 million tuples, 10 attributes, 100 attribute values for each attribute)

Real Datasets • Two sets of bibliographic entries • 7766 entries are database-related • 30919 entries are theory-related • Four attributes: the first author, the second author, the conference, and the year. • Attribute domain sizes for the database-related, theory-related, and mixed datasets are {3418, 3529, 1631, 44}, {8043, 8190, 690, 42}, and {10212, 10527, 2315, 52}

Real Datasets (cont’d) Database-related Theory-related Mixture

Results • CACTUS is very fast and scalable (only two scans of the dataset) • CACTUS outperforms STIRR by a factor between 3 and 10

Conclusions • Formalized the definition of a cluster for categorical attributes. • Introduced a fast summarization-based algorithm CACTUS for discovering such clusters in categorical data. • Evaluated the algorithm on both synthetic and real datasets.

Future Work • Relax the cluster definition by allowing sets of attribute values that are “almost” strongly connected to each other. • Inter-attribute summaries can be incrementally maintained, which leads to an incremental clustering algorithm • Rank the clusters based on a measure of interestingness

Comments • Computing pairwise cluster-projections is an NP-complete problem • The large number of candidate clusters is still a problem