Cache Friendly Parallel Priority Queue

Cache Friendly Parallel Priority Queue Dinesh Agarwal Distributed Mobile Systems Lab (DiMoS)

Overview • Parallel Priority Queue • Task Allocation Strategies • Prefetching • Experimental Results

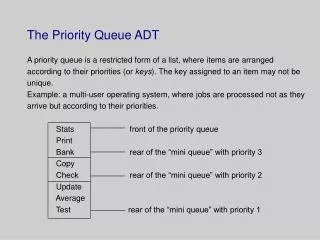

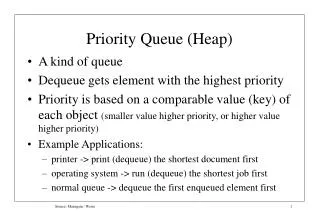

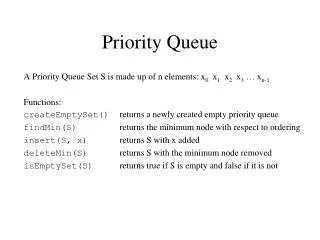

Parallel Priority Queue • Parallel Binary Heap: r elements per node • Parallel Heap Property: the r top-priority items are at the root • General (worker) Processors • Maintenance Processors
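The node layout on this slide can be sketched roughly as follows. This is a minimal illustration, not the presenter's implementation: it assumes that a smaller key means higher priority, that nodes are stored in the usual implicit binary-tree order (children of node i at 2i+1 and 2i+2), and the names `build_parallel_heap` and `satisfies_parallel_heap_property` are hypothetical. Sorting and chunking is used only because it is the simplest way to obtain a valid parallel heap.

```python
from typing import List


def build_parallel_heap(items: List[int], r: int) -> List[List[int]]:
    """Build a parallel heap by sorting and cutting into nodes of r keys.

    Assumption: smaller key = higher priority. A sorted sequence cut into
    consecutive chunks trivially satisfies the parallel heap property,
    because every child node comes later in the sorted order than its parent.
    """
    ordered = sorted(items)
    return [ordered[k:k + r] for k in range(0, len(ordered), r)]


def satisfies_parallel_heap_property(nodes: List[List[int]]) -> bool:
    """Check that no key in a child node beats any key in its parent node."""
    for i, node in enumerate(nodes):
        if not node:
            continue
        worst_in_parent = max(node)
        for child in (2 * i + 1, 2 * i + 2):
            if child < len(nodes) and nodes[child]:
                if min(nodes[child]) < worst_in_parent:
                    return False
    return True


heap = build_parallel_heap([9, 3, 7, 1, 8, 2, 6, 5, 4], r=3)
top_r = heap[0]  # the r top-priority items sit at the root
```

With this layout, removing the r best items is a single read of the root node, after which the maintenance processors would restore the property level by level.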

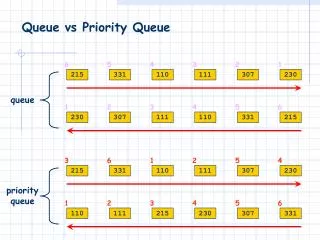

Task Allocation Strategies • Round Robin: after updating its current level, processor i jumps to level X, where X = current level + 2 × (number of processors) • Blocking: after updating its current level, a processor jumps to level X, where X = current level + 2 • Round Robin with Shift: a processor starts working at the level it worked on during the last iteration
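The two stride rules above can be made concrete with a small sketch of which levels each maintenance processor visits in one pass. Only the jump sizes come from the slide. The starting level of each processor (2 × its index), the restriction to every second level, and the way contiguous blocks are cut are assumptions made for illustration, and the function names are hypothetical. Round Robin with Shift is not sketched because the slide does not specify the shift.

```python
from typing import List


def round_robin_levels(proc: int, num_procs: int, num_levels: int) -> List[int]:
    """Levels visited under Round Robin: stride of 2 * num_procs.

    Assumption: processor `proc` starts at level 2 * proc.
    """
    return list(range(2 * proc, num_levels, 2 * num_procs))


def blocking_levels(proc: int, num_procs: int, num_levels: int) -> List[int]:
    """Levels visited under Blocking: stride of 2 inside a contiguous block.

    Assumption: every second level is active, and those levels are split
    into equally sized contiguous blocks, one block per processor.
    """
    active = list(range(0, num_levels, 2))
    block = -(-len(active) // num_procs)  # ceiling division
    return active[proc * block:(proc + 1) * block]


rr = [round_robin_levels(p, 3, 12) for p in range(3)]
bl = [blocking_levels(p, 3, 12) for p in range(3)]
```

For 3 processors and 12 levels, Round Robin interleaves the processors across the whole heap, while Blocking keeps each processor in one region of neighbouring levels.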

Prefetching • Observation: to update a node, you need its child nodes • Prediction: if the next node to be operated on is known, so are its children • Touch the memory blocks where the children are stored • Overlap these memory reads with work
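The prediction step amounts to simple index arithmetic, sketched below. It assumes the heap is stored as one flat array with node i occupying elements [i·r, (i+1)·r) and children at 2i+1 and 2i+2. The function names and the `stride` parameter (keys per cache line) are hypothetical. In Python the `touch` function only illustrates the access pattern; a native implementation would issue a prefetch hint or a dummy read instead, and would do so while the current node is still being processed.

```python
from typing import List, Sequence, Tuple


def child_ranges(node: int, r: int, num_nodes: int) -> List[Tuple[int, int]]:
    """Element ranges (start, stop) of the children of `node` in a flat array.

    Assumption: node i occupies elements [i * r, (i + 1) * r).
    """
    ranges = []
    for child in (2 * node + 1, 2 * node + 2):
        if child < num_nodes:
            ranges.append((child * r, (child + 1) * r))
    return ranges


def touch(storage: Sequence[int], ranges: List[Tuple[int, int]], stride: int = 8) -> int:
    """Read one element per `stride` in each range; return the number of reads.

    Assumption: `stride` keys fit in one cache line, so one read per stride
    is enough to pull the whole block toward the cache.
    """
    reads = 0
    for start, stop in ranges:
        for k in range(start, stop, stride):
            _ = storage[k]  # the read itself is the point; the value is unused
            reads += 1
    return reads


storage = list(range(28))           # 7 nodes of r = 4 keys each
next_node = 0                       # node known to be updated next
to_touch = child_ranges(next_node, r=4, num_nodes=7)
reads = touch(storage, to_touch, stride=2)
```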

Experimental Results • Speedup for varying granularity • Performance for varying n • Speedup for varying r • Performance on different machines • Prefetching • Allocation strategies