Advanced Networking Services for LHC Experiments: Integration and Innovation

This project (ANSE) integrates advanced network services into the workflows of the LHC experiments. The participating institutions are Caltech, the University of Michigan, Vanderbilt University, and the University of Texas at Arlington. By integrating dynamic-circuit tools such as DYNES and OSCARS with the experiments' existing software stacks (PanDA in ATLAS, PhEDEx in CMS), the project aims to make data transfers more predictable and to schedule network capacity alongside CPU and storage. The focus is on CMS and ATLAS, with the intent that other research communities can adopt the same or a similar approach.

Presentation Transcript

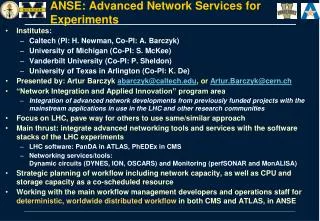

ANSE: Advanced Network Services for Experiments
• Institutes:
  • Caltech (PI: H. Newman, Co-PI: A. Barczyk)
  • University of Michigan (Co-PI: S. McKee)
  • Vanderbilt University (Co-PI: P. Sheldon)
  • University of Texas at Arlington (Co-PI: K. De)
• Presented by: Artur Barczyk, abarczyk@caltech.edu or Artur.Barczyk@cern.ch
• “Network Integration and Applied Innovation” program area
  • Integration of advanced network developments from previously funded projects with the mainstream applications in use in the LHC and other research communities
  • Focus on the LHC, paving the way for others to use the same or a similar approach
• Main thrust: integrate advanced networking tools and services with the software stacks of the LHC experiments
  • LHC software: PanDA in ATLAS, PhEDEx in CMS
  • Networking services/tools: dynamic circuits (DYNES, ION, OSCARS) and monitoring (perfSONAR and MonALISA)
• Strategic planning of workflows, with network capacity treated as a co-scheduled resource alongside CPU and storage capacity
• Working with the main workflow management developers and operations staff toward deterministic, worldwide distributed workflows in both CMS and ATLAS
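
The idea of co-scheduling network capacity with CPU and storage can be illustrated with a minimal Python sketch. It is not taken from PanDA or PhEDEx: the `Site` record, its fields, and the `pick_site` rule (choose the eligible site with the shortest estimated transfer time) are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Site:
    """Hypothetical view of one site's free resources."""
    name: str
    free_cpu_slots: int
    free_storage_tb: float
    bandwidth_gbps: float  # network capacity available toward this site


def transfer_hours(dataset_tb: float, bandwidth_gbps: float) -> float:
    """Hours needed to move dataset_tb terabytes at bandwidth_gbps gigabits/s."""
    bits = dataset_tb * 8e12  # decimal units: 1 TB = 8e12 bits
    seconds = bits / (bandwidth_gbps * 1e9)
    return seconds / 3600


def pick_site(sites: List[Site], dataset_tb: float, slots_needed: int) -> Optional[Site]:
    """Pick a site that satisfies CPU and storage needs, preferring the
    one whose network path delivers the dataset soonest."""
    candidates = [
        s for s in sites
        if s.free_cpu_slots >= slots_needed
        and s.free_storage_tb >= dataset_tb
        and s.bandwidth_gbps > 0
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda s: transfer_hours(dataset_tb, s.bandwidth_gbps))
```

In this sketch a site with the fastest link is still rejected if it lacks CPU slots or disk space, which is the sense in which the three resources are planned together rather than one after another.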

ANSE - Relation to DYNES
• In brief, DYNES is an NSF-funded project to deploy a ‘cyberinstrument’ linking up to 50 US campuses through the Internet2 dynamic circuit backbone
  • Based on the ION service, using OSCARS technology
  • Use of OpenFlow, through Internet2’s OS3E network, is being considered and tested
• The DYNES instrument is intended as a production-grade ‘starter kit’
  • Comes with a disk server, an inter-domain controller (IDC) server, and an FDT (transfer application) installation
  • The FDT code includes the OSCARS IDC API: it reserves bandwidth and moves data through the created circuit
  • “Bandwidth on Demand”
• The DYNES system is naturally capable of advance reservation
  • But we need the right agent code inside CMS/ATLAS to call the API whenever transfers involve two DYNES sites
• Note: DYNES is entering production readiness in 2013 (now)
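
The agent logic described on this slide (request a circuit only when both endpoints are DYNES sites) might look roughly like the Python sketch below. It does not use the real OSCARS IDC API: `CircuitClient`, `Reservation`, `plan_transfer`, and the site names are hypothetical stand-ins, and the client only records requests instead of contacting a service.

```python
import math
from dataclasses import dataclass, field
from typing import List, Optional, Set

# Hypothetical placeholder names, not real DYNES site identifiers.
DYNES_SITES: Set[str] = {"site-a", "site-b", "site-c"}


@dataclass
class Reservation:
    src: str
    dst: str
    bandwidth_mbps: int
    start: int  # seconds since some epoch
    end: int


@dataclass
class CircuitClient:
    """Stand-in for an IDC client; it only records reservation requests."""
    reservations: List[Reservation] = field(default_factory=list)

    def reserve(self, src: str, dst: str, bandwidth_mbps: int,
                start: int, end: int) -> Reservation:
        res = Reservation(src, dst, bandwidth_mbps, start, end)
        self.reservations.append(res)
        return res


def plan_transfer(src: str, dst: str, size_gb: float, bandwidth_mbps: int,
                  start: int, client: CircuitClient) -> Optional[Reservation]:
    """Reserve a circuit if both ends are DYNES sites; otherwise return
    None so the caller falls back to the ordinary routed path."""
    if src not in DYNES_SITES or dst not in DYNES_SITES:
        return None
    megabits = size_gb * 8000  # decimal units: 1 GB = 8000 megabits
    duration = math.ceil(megabits / bandwidth_mbps)
    return client.reserve(src, dst, bandwidth_mbps, start, start + duration)
```

Because the reservation carries an explicit start and end time, the same call could be used for advance reservation by passing a future `start`.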

SDN Deployment at Caltech
• earlier SDN installation, aka DCN testbed
• ANSE is a SW development project, but will make use of infrastructure deployed as part of the DYNES instrument
• This slide shows Caltech installation only!
  • Installation at other DYNES campuses varies
  • see http://dynes.internet2.edu for details
• DYNES/ANSE @ Caltech:
  • 1 IDC server
  • 1 data server
  • 1 switch (future: OF-capable)

Outlook etc.
• Currently, there is no GENI deployment at Caltech
  • The HEP group is investigating potential installation of a GENI rack
  • Intended use case: network R&D for HEP data distribution
• The HEP Networking group at Caltech is active in SDN R&D:
  • OLiMPS (OpenFlow Link-layer Multipath Switching) project, funded by DOE-OASCR
• Contact:
  • Artur Barczyk (HEP networking group), abarczyk@caltech.edu
  • Harvey Newman, newman@hep.caltech.edu