Knowledge Transfer via Multiple Model Local Structure Mapping

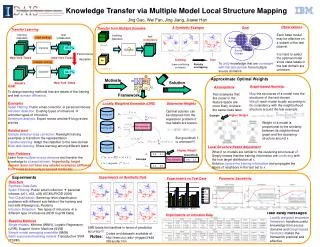

Design learning methods that adapt classifiers from multiple source domains to new domains without labeled data, relying on base models. Utilize locally weighted ensemble and graph-based heuristics for practical knowledge transfer.

Knowledge Transfer via Multiple Model Local Structure Mapping

E N D

Presentation Transcript

Knowledge Transfer via Multiple Model Local Structure Mapping Jing Gao† Wei Fan‡ Jing Jiang†Jiawei Han† †University of Illinois at Urbana-Champaign ‡IBM T. J. Watson Research Center

Standard Supervised Learning training (labeled) test (unlabeled) Classifier 85.5% New York Times New York Times

In Reality…… training (labeled) test (unlabeled) Classifier 64.1% Labeled data not available! Reuters New York Times New York Times

Domain Difference Performance Drop train test ideal setting Classifier NYT NYT 85.5% New York Times New York Times realistic setting Classifier NYT Reuters 64.1% Reuters New York Times

Other Examples • Spam filtering • Public email collection personal inboxes • Intrusion detection • Existing types of intrusions unknown types of intrusions • Sentiment analysis • Expert review articles blog review articles • The aim • To design learning methods that are aware of the training and test domain difference • Transfer learning • Adapt the classifiers learnt from the source domain to the new domain

All Sources of Labeled Information test (completely unlabeled) training (labeled) Reuters Classifier ? …… New York Times Newsgroup

A Synthetic Example Training (have conflicting concepts) Test Partially overlapping

Goal Source Domain Source Domain Target Domain Source Domain • To unify knowledge that are consistent with the test domain from multiple source domains

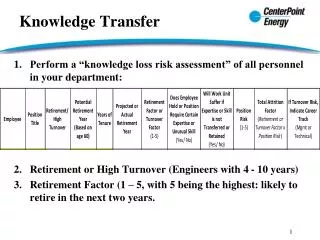

Summary of Contributions • Transfer from multiple source domains • Target domain has no labeled examples • Do not need to re-train • Rely on base models trained from each domain • The base models are not necessarily developed for transfer learning applications

Locally Weighted Ensemble Training set 1 C1 X-feature value y-class label Training set 2 C2 Test example x …… …… Training set k Ck

Optimal Local Weights Higher Weight 0.9 0.1 C1 Test example x 0.8 0.2 0.4 0.6 C2 • Optimal weights • Solution to a regression problem • Impossible to get since f is unknown!

Graph-based Heuristics Higher Weight • Graph-based weights approximation • Map the structures of a model onto the structures of the test domain • Weight of a model is proportional to the similarity between its neighborhood graph and the clustering structure around x.

Experiments Setup • Data Sets • Synthetic data sets • Spam filtering: public email collection personal inboxes (u01, u02, u03) (ECML/PKDD 2006) • Text classification: same top-level classification problems with different sub-fields in the training and test sets (Newsgroup, Reuters) • Intrusion detection data: different types of intrusions in training and test sets. • Baseline Methods • One source domain: single models (WNN, LR, SVM) • Multiple source domains: SVM on each of the domains • Merge all source domains into one: ALL • Simple averaging ensemble: SMA • Locally weighted ensemble: LWE

Conclusions • Locally weighted ensemble framework • transfer useful knowledge from multiple source domains • Graph-based heuristics to compute weights • Make the framework practical and effective