Optimizing Network Performance with Traffic Modeling Insights

Explore the importance of traffic modeling for network performance and QoS. Learn about different traffic models, concepts like mean and variance, bursts, and correlation. Discover how traffic characteristics impact network efficiency. Real-world examples and insights provided.

Presentation Transcript

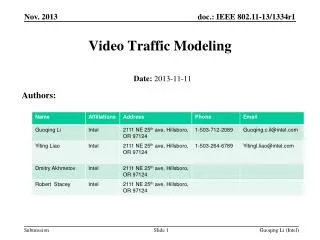

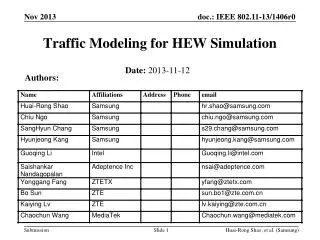

Traffic Modeling • Nelson L. S. da Fonseca, Institute of Computing, State University of Campinas, Campinas, Brazil

Network Workload: “Traffic” • Telecom networks built to transport traffic • Flow of bytes or packets or messages

Quality of Service • “Perception of the quality of the transfer of information expressed by quantitative metrics”

Quality of Service • [Figure: image panels labeled by size, from 33011 bytes (33) down to 227 bytes (1)]

Why is Traffic Modeling Important? • Needed for performance evaluation and capacity planning • Understand the nature of the traffic generated by applications and how it is transformed along the network • Design effective traffic control mechanisms: congestion control, flow control, routing …

Why is Traffic Modeling Important? • Accurate performance prediction requires realistic traffic models • Models can be used in analysis or in testing (“synthetic traffic”) • Synthetic traffic matches real traces and is more efficient to use

An Example • Traffic: 5 synchronous streams totaling 299,040 cells/sec • Buffer size B, line rate 312,500 cells/sec • An ATM cell is 53 bytes

An Example • G/D/1/B or nD/D/1, ρ = 0.957 • E(W) = 2.7 µsec • W < Wmax = 4 × 3.2 = 12.8 µsec • No losses for B = 4 or bigger • If Poisson: M/D/1, already a big difference • E(W) = ρ m / (2(1 − ρ)) = ρ · 3.2 / (2(1 − ρ)) = 35.6 µsec, where m = 3.2 µsec is the cell service time at 312,500 cells/sec • Need B = 249 for a cell loss of 10^-9! • Conclusion: the mean is not enough; smoothness or burstiness matters a lot
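The M/D/1 figure on this slide can be checked with a few lines of arithmetic. This is a minimal sketch, assuming that 299,040 cells/sec is the total offered load and that the 3.2 in the formula is the cell service time in microseconds (1/312,500 sec); the variable names are illustrative only. With the unrounded load the result is about 35.5, which agrees with the slide's 35.6 once the utilization is rounded to 0.957.

```python
# Sanity check of the M/D/1 mean waiting time quoted on the slide.
# Assumption: times are in microseconds (1 / 312,500 cells/sec = 3.2 us).
line_rate = 312_500      # cells per second served by the link
offered_load = 299_040   # cells per second offered (assumed to be the total)

rho = offered_load / line_rate          # utilization
service_time_us = 1e6 / line_rate       # deterministic service time per cell

# Mean wait in queue for M/D/1: rho * m / (2 * (1 - rho))
mean_wait_us = rho * service_time_us / (2 * (1 - rho))

# Worst-case wait when 5 periodic streams collide: 4 cells ahead
max_wait_periodic_us = 4 * service_time_us

print(f"rho = {rho:.3f}")
print(f"service time = {service_time_us:.1f} us")
print(f"M/D/1 mean wait = {mean_wait_us:.1f} us")
print(f"periodic worst-case wait = {max_wait_periodic_us:.1f} us")
```

The Poisson assumption alone pushes the mean wait above the worst case of the periodic system, which is the point the slide makes.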

Our Goal • Understand the relevance of traffic characteristics to network performance and QoS • Identify and become familiar with common traffic models

Mean and Variance Mean amount of work arrived at each slot = 4 Variance =

Mean and Variance Mean amount of work arrived= 4.0 Variance of the amount of work arrived =

Mean and Variance • Both processes have the same mean and variance. But what is their impact on queuing?

Mean and Variance • Consider a queue with one server, a service rate of two cells per time unit, and buffer space for two cells (service = 2/1).

Mean and Variance • When the first stream feeds the queue: no loss! Service = 2/1

Mean and Variance • But when the second stream feeds the queue, 1/5 of the packets are lost! Service = 2/1
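The effect can be reproduced with a small slotted-queue simulation. The two arrival sequences below are hypothetical (the slide's actual streams are only shown in its figures), chosen so that both have the same mean and variance but a different ordering. The queue discipline is also an assumption: in each slot arrivals join the queue, up to two cells are served, and whatever exceeds the two-cell buffer is dropped. The loss fraction obtained here therefore differs from the 1/5 on the slide.

```python
from statistics import mean, pvariance

def loss_fraction(arrivals, service_per_slot=2, buffer_size=2):
    """Fraction of arriving cells dropped by a slotted finite-buffer queue."""
    queue = 0
    lost = 0
    for a in arrivals:
        work = queue + a                        # cells present in this slot
        work -= min(work, service_per_slot)     # serve up to the service rate
        overflow = max(0, work - buffer_size)   # what the buffer cannot hold
        lost += overflow
        queue = work - overflow
    return lost / sum(arrivals)

# Hypothetical streams: same values, different ordering.
smooth = [0, 4] * 6               # arrivals alternate every slot
bursty = [4, 4, 4, 0, 0, 0] * 2   # arrivals clustered in bursts

for name, stream in (("smooth", smooth), ("bursty", bursty)):
    print(name, mean(stream), pvariance(stream), loss_fraction(stream))
```

Both streams report the same mean and variance, yet only the clustered one loses cells.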

Correlation • [Figure: two panels, Low Correlation and High Correlation]

Variance v=Autocovariance Integral • [Figure: autocovariance plot, horizontal axis from -5.0 to 5.0]

Origins of Correlations • Superposition of weakly correlated sources • Size of files • User behavior • Protocol dynamics • etc.

Video • MPEG

Video • 500x500 pixels per frame = 250,000 pixels per frame, generating 2 Mbits/frame for an 8-bit gray scale; at a rate of 30 frames/sec this is 60 Mbits/sec. If three colors are used, the transmission rate is 180 Mbits/sec • Compression • Variable Bit Rate
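The uncompressed video rates on this slide follow directly from the frame size, pixel depth, and frame rate. A short check, with illustrative variable names:

```python
# Uncompressed video bit rate from the figures on the slide.
pixels_per_frame = 500 * 500    # 250,000 pixels
bits_per_pixel = 8              # 8-bit gray scale
frames_per_sec = 30
color_channels = 3

bits_per_frame = pixels_per_frame * bits_per_pixel   # bits in one gray frame
gray_rate_bps = bits_per_frame * frames_per_sec      # gray-scale stream
color_rate_bps = gray_rate_bps * color_channels      # three-color stream

print(f"{bits_per_frame / 1e6:.0f} Mbits/frame")
print(f"{gray_rate_bps / 1e6:.0f} Mbits/sec gray scale")
print(f"{color_rate_bps / 1e6:.0f} Mbits/sec with three colors")
```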

Burstiness • Varies with the time scale

Traffic Bursts and Scales • Hierarchical view: call, burst, packet • How to characterize: Mean? Peak? Variance? Autocorrelation?

Burstiness • Most traffic types in high-speed networks are bursty • Burstiness is mainly due to autocorrelation • Renewal models assume autocorrelation away for tractability • Performance prediction is unrealistic without burstiness
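One way to see the link between burstiness and autocorrelation is to estimate the sample autocorrelation of an arrival sequence. The sketch below uses a standard estimator (lagged products of deviations divided by the sum of squared deviations) on two hypothetical sequences that are not taken from the slides; the function and variable names are illustrative.

```python
def autocorrelation(series, lag):
    """Sample autocorrelation of a sequence at the given lag."""
    n = len(series)
    m = sum(series) / n
    dev = [x - m for x in series]
    denom = sum(d * d for d in dev)
    num = sum(dev[t] * dev[t + lag] for t in range(n - lag))
    return num / denom

# Hypothetical arrival counts per slot with identical mean and variance.
alternating = [0, 4] * 6              # load flips every slot
clustered = [4, 4, 4, 0, 0, 0] * 2    # load arrives in bursts

print("alternating, lag 1:", round(autocorrelation(alternating, 1), 3))
print("clustered,   lag 1:", round(autocorrelation(clustered, 1), 3))
```

The clustered sequence has positive lag-1 autocorrelation (busy slots tend to follow busy slots), while the alternating one is negatively correlated; a renewal or IID model would treat both as uncorrelated.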

Taxonomy of Models • Renewal and IID: no dependence • Short Range Dependent (SRD): Markov modulated, Interrupted Poisson Process, etc.; Semi-Markov; MAP • Fluid: useful for analysis and also for simulation • Regression models: classical statistics • Long Range Dependent (LRD): monofractal (self-similar), multifractal

Voice Source • Human voice: talk bursts of 0.4 to 1.2 sec duration and silence periods of 0.6 to 1.8 sec (exponentially distributed) • Sampling at 125 µsec intervals with 8-bit coding gives 170 ATM cells (53 bytes)/sec • [Figure: two-state ON/OFF source]
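An ON/OFF voice source of this kind is easy to simulate. The sketch below is illustrative only: it assumes exponentially distributed talk and silence periods with means of 0.4 sec and 0.6 sec (picked from the low end of the ranges on the slide, not stated there as means) and uses the slide's peak rate of 170 cells/sec during talk spurts.

```python
import random

def on_fraction(mean_on, mean_off, horizon, seed=1):
    """Simulate an ON/OFF source and return the fraction of time spent ON."""
    rng = random.Random(seed)
    t, on_time, is_on = 0.0, 0.0, True
    while t < horizon:
        mean_duration = mean_on if is_on else mean_off
        # Exponential sojourn, truncated at the end of the horizon
        d = min(rng.expovariate(1.0 / mean_duration), horizon - t)
        if is_on:
            on_time += d
        t += d
        is_on = not is_on
    return on_time / horizon

PEAK_CELLS_PER_SEC = 170         # from the slide
MEAN_ON, MEAN_OFF = 0.4, 0.6     # assumed means, in seconds

activity = on_fraction(MEAN_ON, MEAN_OFF, horizon=10_000.0)
print(f"activity factor ~ {activity:.3f}")
print(f"mean rate ~ {PEAK_CELLS_PER_SEC * activity:.1f} cells/sec")
```

Under these assumptions the long-run activity factor approaches 0.4 / (0.4 + 0.6) = 0.4, so the mean rate is well below the peak rate, which is what makes statistical multiplexing of voice sources attractive.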

Fluid-Flow Equations • x: buffer occupancy • Each voice source generates V cells/sec during a talk spurt with duration of 1/a sec • A channel with capacity VC cells/sec has aC units of information per second • N sources • F_i(t, x): system state; at time t there are i sources in the on state and the buffer occupancy is x

Fluid-Flow Equations • F_j(x) = Prob[j sources on, buffer occupancy ≤ x]

Markov Modulated Process • Arrival rate depends on the state of an underlying Markov chain • [Figure: states 1, 2, …, n with arrival rates λ1, λ2, …, λn]

Superposition of Voice Sources • [Figure: states 1 and 2 with arrival rates λ1 and λ2]

Markov Modulated Process • M/G/1 – type • Efficient algorithms

Markov Modulated Process • Arrivals: single or batch • Time: discrete or continuous

Markov Modulated Process • Markov Modulated Poisson Process (MMPP): continuous time, single arrivals • Batch Markovian Arrival Process (BMAP): continuous time, batch arrivals
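A two-state MMPP can be sampled directly from its definition: the modulating chain stays in each state for an exponentially distributed time, and while it is in a state, arrivals form a Poisson process at that state's rate. The sketch below is a minimal illustration; the rates, switching rates, and function name are hypothetical values chosen for the example, not parameters from the slides.

```python
import random

def simulate_mmpp2(arrival_rates, switch_rates, horizon, seed=0):
    """Return the arrival times of a two-state MMPP on [0, horizon)."""
    rng = random.Random(seed)
    t, state, arrivals = 0.0, 0, []
    while t < horizon:
        # Time until the modulating chain leaves the current state
        end = min(t + rng.expovariate(switch_rates[state]), horizon)
        # Poisson arrivals at the current state's rate until the switch;
        # memorylessness lets us restart the arrival clock at each switch.
        s = t
        while arrival_rates[state] > 0:
            s += rng.expovariate(arrival_rates[state])
            if s >= end:
                break
            arrivals.append(s)
        t = end
        state = 1 - state
    return arrivals

# Hypothetical parameters: a fast state and a slow state, symmetric switching.
times = simulate_mmpp2(arrival_rates=(10.0, 1.0), switch_rates=(1.0, 1.0),
                       horizon=1000.0)
print(len(times), "arrivals, mean rate ~", len(times) / 1000.0)
```

With symmetric switching the chain spends half its time in each state, so the long-run mean rate is close to (10 + 1) / 2 = 5.5 arrivals per time unit, while the arrival counts per interval are far more variable than those of a Poisson process with the same mean.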

Markov Modulated Process • Discrete Time Batch Markovian Arrival Process – D-BMAP (discrete time, batch arrivals) • Discrete Time Markovian Arrival Process (D-MAP)